SoundHound OASYS turns voice AI into an operations layer

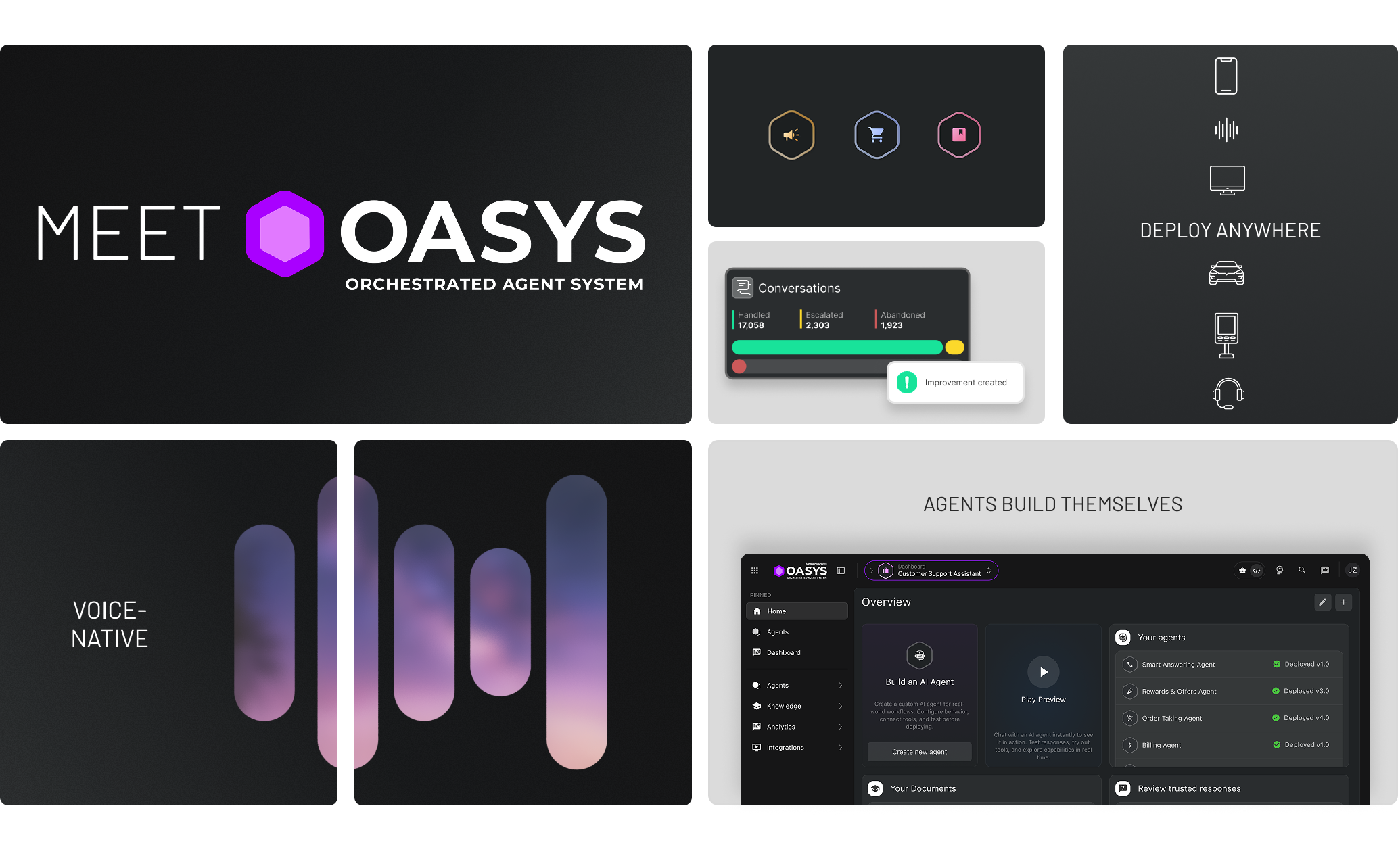

SoundHound OASYS frames voice agents as a self-learning operations layer across phones, cars, kiosks, stores, and customer workflows.

- What happened: SoundHound AI introduced OASYS on May 5, 2026.

- The official pitch is a self-learning platform that ingests documents, transcripts, and process material, then generates AI agent sets and suggests improvements after launch.

- Why it matters: The competitive axis is shifting from better speech recognition toward agent lifecycle, field channels, transaction handling, and human-in-the-loop operations.

- Developer lens: A voice agent is no longer just an STT, LLM, and TTS pipeline. Production systems need tests, guardrails, context, escalation, and workflow ownership.

- Watch: The launch is ambitious, but the real test is customer adoption, margin discipline, and differentiation from general voice models from OpenAI, Google, and others.

SoundHound AI introduced OASYS on May 5, 2026. On the surface, it looks like another enterprise AI agent platform. The name is ambitious enough: Orchestrated Agent System. The official announcement puts a strong message up front: AI can build, manage, and improve AI. After months in which almost every enterprise software company has been talking about agents, orchestration, and control planes, that sentence alone does not sound new.

The interesting part is where OASYS starts. Many agent platforms begin inside digital workspaces: browsers, documents, code, CRM systems, Slack, and internal ticket queues. SoundHound's stage is different. It is phone calls, drive-thrus, in-car infotainment, store kiosks, hotel front desks, contact centers, and IT service desks. These are not places where someone calmly types a prompt into a chat box. They are places where customers speak, wait, pay, complain, change reservations, and expect a service outcome. So this launch is less about "voice models got better" and more about a voice AI company trying to own the operations layer around voice.

That difference matters for developers. The period when a voice agent could be treated as a simple speech-to-text -> LLM -> text-to-speech pipeline is ending quickly. In real customer channels, users interrupt, switch languages, speak through noise, ask for sensitive actions, and expect the system to handle payment, healthcare, insurance, reservations, or account work. A model producing one plausible spoken answer is not enough. Teams need to know what the agent understood, which tool it called, when it escalated, and how it recovered from failure. OASYS is aimed directly at that operations problem.

What SoundHound announced

According to SoundHound's official announcement, OASYS lets companies create conversational AI agents in minutes, deploy them across digital and physical channels, and improve them after they go live. The announcement says OASYS can ingest documentation, transcripts, and integration information, generate agent sets and transaction flows, and visualize the resulting logic so developers can inspect it step by step.

The important word is not only "build." It is lifecycle. SoundHound argues that most agentic AI platforms help developers create agents, while OASYS manages creation, orchestration, evaluation, and improvement. After launch, the platform analyzes interactions, finds performance gaps, engineers updates autonomously, and proposes them to human experts. That sounds less like unsupervised self-modification and more like an operating loop where the system finds candidate improvements while people keep control.

The channel list is broad. SoundHound mentions phone, text, web chat, in-store kiosks, social media, TV, in-vehicle infotainment, and custom hardware. The official product article packages this as "build once, deploy anywhere." In practice, that is a hard promise. What SoundHound is selling is not just a model API. It is a channel abstraction: a customer can begin on the phone, continue on a smartphone or car screen, receive staff assistance through an earpiece in a store, or complete a drive-thru order that touches POS and payment systems.

The product article gives concrete examples: a customer service agent that resolves a billing dispute and then suggests an upgrade, in-car commerce that confirms a regular coffee order and pays before arrival, a retail floor assistant that listens to a store conversation and recommends a bundle to an employee, an outbound retention agent that responds to churn signals, a healthcare agent that checks an EHR and handles prescription refills, and an IT service desk agent that processes access requests.

These examples are about handling work, not only answering questions. Voice AI is moving from reading FAQs to changing business state and creating transactions. If that works, the upside is not just reducing agent cost. It can create revenue. If it fails, the downside is wrong payments, wrong healthcare or insurance handling, churn, and regulatory exposure. That is why the OASYS materials keep returning to guardrails, deterministic flows, human touchpoints, and escalation.

Voice AI competition is changing shape

Most recent voice AI news has focused on model quality: more natural speech, lower latency, better transcription, and stronger real-time translation. OpenAI's Realtime model releases fit that pattern too. But teams that operate production voice agents soon run into a different set of problems. Natural speech does not matter much if the system cannot finish the workflow. Accurate transcription does not help if policy boundaries are weak enough to block deployment. Smooth conversation does not create revenue if the agent is disconnected from transaction systems.

OASYS interprets that bottleneck as an operations-layer problem. SoundHound's official product article lists the blockers for function-specific AI deployment: voice systems that break in noisy environments, agents that cannot reliably handle sensitive tasks, limited channels, failures in transaction and exception handling, and long build cycles. That is not a model benchmark list. It is an operations checklist.

From a developer perspective, this shift changes the architecture. Older voice bots were built around intent classification and scripted dialog trees. If a user said "change my reservation," the bot filled the required slots and handed off to a human when it hit an exception. LLM-based voice agents are much more flexible, but flexibility itself is a risk. The model must not invent unsupported claims, execute unauthorized actions, or process payment, healthcare, or account changes without confidence and permission.

Production voice agents need at least four layers. First, a real-time audio layer handles interruptions, background noise, multilingual switching, and partial transcripts. Second, a tool orchestration layer safely calls CRM, POS, EHR, payment, identity, and ticketing systems. Third, a policy and guardrail layer defines which actions must follow deterministic flows and when human approval is required. Fourth, an evaluation and observability layer scores interactions at the agent level, tracks failure causes, and reviews improvement proposals.
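To make the third layer concrete, here is a minimal sketch of a policy gate that sits between the model and the tool call. Everything in it is an assumption for illustration: the `ActionPolicy` fields, the action names, and the confidence thresholds are hypothetical and are not taken from OASYS or any SoundHound API.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"                    # agent may call the tool directly
    CONFIRM = "confirm_with_customer"  # read the request back and wait for a yes
    HUMAN = "human_approval"           # a person must approve before the tool runs
    DENY = "deny"                      # action is not permitted at all


@dataclass(frozen=True)
class ActionPolicy:
    min_asr_confidence: float  # below this, do not act on the transcript alone
    needs_confirmation: bool   # always read back before acting
    needs_human: bool          # always route to a person


# Hypothetical policy table; a real deployment would load reviewed configuration.
POLICIES = {
    "order_status": ActionPolicy(0.60, False, False),
    "change_reservation": ActionPolicy(0.75, True, False),
    "refund_payment": ActionPolicy(0.85, True, True),
}


def decide(action: str, asr_confidence: float, customer_confirmed: bool) -> Decision:
    policy = POLICIES.get(action)
    if policy is None:
        return Decision.DENY  # unknown actions are denied by default
    if policy.needs_human:
        return Decision.HUMAN
    # Low recognition confidence is treated like a mandatory read-back.
    must_confirm = policy.needs_confirmation or asr_confidence < policy.min_asr_confidence
    if must_confirm and not customer_confirmed:
        return Decision.CONFIRM
    return Decision.ALLOW


print(decide("change_reservation", 0.9, customer_confirmed=False))
```

The design choice worth noting is the default: an action with no policy entry is denied rather than left to the model's judgment.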

SoundHound's Agentic+ orchestration framework targets the second and third layers. The announcement says OASYS combines autonomous reasoning with rule-based guardrails and human touchpoints. It also points to SoundHound's Human Augmented Resolution, where a person can intervene behind the scenes when AI gets stuck without breaking the customer conversation. That is a more realistic position than a fully autonomous agent. Field operations usually cannot accept 100% automation immediately, while escalating every hard case to a live agent hurts both cost and customer experience. A background human-assist model is easier to sell into enterprise workflows.

What "AI builds AI" really means

The strongest phrase in the OASYS launch is "AI builds AI." It needs careful reading. In an enterprise agent platform, letting an unsupervised system create and deploy new agents is still risky. The official explanation points to a more grounded pattern. A company feeds the platform existing documents, conversation transcripts, training material, process documentation, and integration information. OASYS then generates candidate workflows and agent configuration. Teams review, test, launch, and receive improvement proposals after live usage.

That is more serious than "make a bot from a prompt." Customer support and field operations already have years of call recordings, agent manuals, FAQs, escalation policies, CRM disposition codes, and POS exception documents. The problem is that this knowledge is rarely organized as tool schemas and workflows an AI agent can safely use. If OASYS can ingest those materials, produce transaction flows, and let developers inspect the logic, it can reduce build time in a practical way.

For this to work, generated agents have to be explainable. "The AI made it, so trust it" will not work for enterprise buyers. Teams need to see which document produced which rule, which slots are required, and which confirmations must happen before a tool call. That is why SoundHound emphasizes transaction-flow visualization. The more agent generation is automated, the more important reviewable intermediate artifacts become.
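One way to picture a reviewable intermediate artifact is a generated flow step that carries its own provenance. The sketch below is hypothetical: `FlowStep`, `review_findings`, the tool names, and the file name `refund-policy.pdf` are invented for illustration and do not describe how OASYS represents flows.

```python
from dataclasses import dataclass, field


@dataclass
class FlowStep:
    tool: str                  # tool the step would call
    required_slots: list[str]  # values that must be collected before the call
    confirm_before_call: bool  # whether the customer must say yes first
    source_refs: list[str] = field(default_factory=list)  # documents the rule came from


def review_findings(steps: list[FlowStep]) -> list[str]:
    """Return problems a human reviewer should resolve before launch."""
    findings = []
    for step in steps:
        if not step.source_refs:
            findings.append(f"{step.tool}: no source document cited")
        if not step.required_slots:
            findings.append(f"{step.tool}: no required slots declared")
    return findings


# Hypothetical generated flow: one well-grounded step, one that needs review.
steps = [
    FlowStep("refund_payment", ["order_id", "amount"], True, ["refund-policy.pdf"]),
    FlowStep("update_address", [], False),
]
for finding in review_findings(steps):
    print(finding)
```

A check like this does not prove a generated rule is correct. It only makes sure every rule can be traced to something a person can read and challenge.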

Self-learning has the same tension. "The system improves itself" sounds attractive, but in customer operations it can also sound dangerous. A live agent should not freely rewrite policy. The more plausible interpretation is that OASYS analyzes interactions every day, finds failure patterns, drop-off points, containment failures, escalation reasons, and conversion gaps, then proposes changes for human approval. That is a blend of contact-center analytics, MLOps, prompt optimization, and agent evaluation.
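The propose-then-approve reading can be sketched as a small state machine in which nothing reaches production without a named reviewer. This is an assumption about how such a loop could be enforced, not a description of OASYS internals; `Proposal`, `review`, and `deploy` are hypothetical names.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PROPOSED = "proposed"
    APPROVED = "approved"
    REJECTED = "rejected"
    DEPLOYED = "deployed"


@dataclass
class Proposal:
    summary: str   # what the system wants to change
    evidence: str  # the failure pattern that motivated it
    status: Status = Status.PROPOSED
    reviewer: Optional[str] = None


def review(proposal: Proposal, reviewer: str, approve: bool) -> None:
    if proposal.status is not Status.PROPOSED:
        raise ValueError("only pending proposals can be reviewed")
    proposal.status = Status.APPROVED if approve else Status.REJECTED
    proposal.reviewer = reviewer


def deploy(proposal: Proposal) -> None:
    # The live agent never changes unless a human approved the proposal.
    if proposal.status is not Status.APPROVED:
        raise PermissionError("proposal has not been approved by a human reviewer")
    proposal.status = Status.DEPLOYED


p = Proposal("Ask for order ID earlier in refund calls", "Drop-off spikes at step 4")
review(p, reviewer="ops-lead", approve=True)
deploy(p)
print(p.status.value, p.reviewer)
```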

This is where OASYS overlaps with recent agent observability tools such as Honeycomb Agent Observability and LangSmith. The difference is that SoundHound is not pitching generic developer observability. It is aiming at operational analysis for voice, customer channels, and transaction flows. A failed voice agent is not just a red trace in a dashboard. Teams need to know whether the customer repeated themselves, interrupted, abandoned the call, escalated to a human, skipped payment, or contacted support again later.

SoundHound's edge and its pressure points

SoundHound has a clear advantage in this market. The company has worked on voice recognition and voice AI for years, and it has deployment experience in automotive, restaurants, contact centers, and smart devices. The announcement cites more than 400 patents and billions of interactions processed. The product article points to deployments with brands such as Hyundai, Chipotle, and GoDaddy. That kind of operational knowledge is hard for a foundation model provider to replicate quickly.

Physical channels are another advantage. Many AI agent platforms are comfortable inside browsers and SaaS workflows. Drive-thrus, vehicles, stores, phone networks, and kiosks are different. They involve noise, latency, hardware, payment terminals, POS integration, local compliance, staff training, and customer complaints. OASYS repeatedly uses the phrase "digital and physical spaces" because SoundHound knows this distinction is part of its defensible story.

The weaknesses are also large. First, general voice models are improving quickly. If OpenAI, Google, Anthropic, xAI, and others keep improving real-time voice, tool use, and multimodal context, SoundHound's model-level differentiation may narrow. Discussion in r/Soundhound also reflected concern that OpenAI's new voice models could reduce SoundHound's moat. SoundHound has to prove differentiation through integration, compliance, workflow ownership, and customer outcomes, not only by saying its model hears better.

Second, the platform vision requires sales execution. Even if OASYS is technically strong, enterprise buyers look for adoption and ROI. Simply Wall St described market reaction as cautious despite the OASYS launch and strong revenue growth. Zacks reported that SoundHound's stock fell 16.4% after the May 7, 2026 Q1 earnings release, with margin pressure and continuing losses weighing on investors. From an investor perspective, customer conversion, gross margin, deployment cost, renewal, and expansion matter more than a large product vision.

Third, SoundHound has to find its position between horizontal platforms and vertical specialists. ServiceNow, Salesforce, Microsoft, and UiPath already sit near the center of enterprise workflow. Their voice capabilities may be weaker, but they own customer data, business process, approval systems, identity, and audit trails. On the other side, CX agent companies such as PolyAI, Sierra, Kore.ai, and Cognigy are specialized in customer-service automation. SoundHound can use its voice-native edge and channel depth, but becoming a broader operational AI platform requires more proof of system integration.

What development teams should take from this

Whether a team adopts OASYS or not, the launch gives voice-agent builders a useful baseline. First, voice is not just a frontend feature. From the moment a user speaks, the system may need identity verification, context lookup, tool selection, confirmation, transaction execution, logging, and escalation. A voice UI team, backend team, security team, and customer operations team cannot treat those pieces as separate products.

Second, agents should be designed in a channel-neutral way. The same customer intent can arrive through a phone call, web chat, vehicle screen, kiosk, or mobile app. The UX differs by channel, but core actions such as order status, appointment changes, refund requests, and prescription refills are shared. Good architecture separates channel adapters from business workflows. If the voice channel fails, the system should be able to continue through a web link or carry a kiosk interaction into an agent console.
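A minimal sketch of that separation follows, with the business workflow stubbed out and two hypothetical adapters. The names `Intent`, `Outcome`, `handle_intent`, `PhoneAdapter`, and `KioskAdapter` are illustrative assumptions, and no real backend is called.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Intent:
    name: str
    slots: dict[str, str]


@dataclass
class Outcome:
    status: str   # "ok", "need_slot", or "unsupported"
    message: str


def handle_intent(intent: Intent) -> Outcome:
    """Channel-neutral workflow: knows nothing about speech, screens, or kiosks."""
    if intent.name == "order_status":
        order_id = intent.slots.get("order_id")
        if not order_id:
            return Outcome("need_slot", "Which order are you asking about?")
        return Outcome("ok", f"Order {order_id} is on its way.")
    return Outcome("unsupported", "Let me connect you with someone who can help.")


class ChannelAdapter(Protocol):
    def render(self, outcome: Outcome) -> dict: ...


class PhoneAdapter:
    def render(self, outcome: Outcome) -> dict:
        return {"speak": outcome.message}


class KioskAdapter:
    def render(self, outcome: Outcome) -> dict:
        # A kiosk can show a help button whenever the workflow did not finish.
        return {"display": outcome.message, "show_help_button": outcome.status != "ok"}


def respond(adapter: ChannelAdapter, intent: Intent) -> dict:
    return adapter.render(handle_intent(intent))


intent = Intent("order_status", {"order_id": "A17"})
print(respond(PhoneAdapter(), intent))
print(respond(KioskAdapter(), intent))
```

Because the workflow returns a plain `Outcome`, moving a conversation from one channel to another means swapping the adapter, not rewriting the order-status logic.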

Third, human-in-the-loop is not a sign of failure. It is an operations design choice. Many AI products hide human intervention as if it proves the model is weak. In high-stakes customer workflows, trust depends on when a person can intervene, which permissions they have, and what context they see. That is why OASYS emphasizes Human Augmented Resolution. The goal is not ideological full automation. The goal is getting the customer to resolution without forcing them to repeat the conversation.

Fourth, self-improvement has to be tied to governance. It is useful for an agent to analyze interactions and propose improvements. But teams must inspect which metric improves and which risk increases. A system should not raise containment rate by pushing inappropriate upsells, or reduce average handle time by escalating complex customers too quickly. Evaluation should consider cost, resolution, customer satisfaction, compliance, revenue, and fairness together.
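A release gate that looks at several metrics together can be sketched in a few lines. The metric names come from the discussion above, but the `Metrics` fields, the tolerance, and the sample numbers are hypothetical and chosen only to show the mechanism.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metrics:
    containment_rate: float     # share of calls finished without a human
    resolution_rate: float      # share of calls where the issue was actually solved
    csat: float                 # customer satisfaction, 0.0 to 1.0
    compliance_violations: int  # violations found in reviewed calls


def approve_candidate(
    baseline: Metrics, candidate: Metrics, tolerance: float = 0.01
) -> tuple[bool, list[str]]:
    """Approve only if the target metric improves and no guardrail metric regresses."""
    reasons = []
    if candidate.compliance_violations > baseline.compliance_violations:
        reasons.append("compliance violations increased")
    if candidate.resolution_rate < baseline.resolution_rate - tolerance:
        reasons.append("resolution rate regressed")
    if candidate.csat < baseline.csat - tolerance:
        reasons.append("customer satisfaction regressed")
    if candidate.containment_rate <= baseline.containment_rate:
        reasons.append("no containment improvement")
    return (not reasons, reasons)


baseline = Metrics(0.60, 0.80, 0.82, 0)
upsell_heavy = Metrics(0.70, 0.80, 0.70, 0)  # better containment, unhappier customers
print(approve_candidate(baseline, upsell_heavy))
```

Here the candidate raises containment by ten points and is still rejected, because satisfaction fell outside the tolerance. That is the behavior a governance-aware improvement loop needs.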

Fifth, voice-agent observability is harder than text-agent observability. Transcripts alone are not enough. Teams need to connect raw audio quality, ASR confidence, interruption timing, language switches, barge-in behavior, silence duration, tool latency, human-assist timing, customer sentiment, and final outcome. The question is not only which call failed. It is why the call failed, and what the next agent version should change.
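As a rough illustration of connecting those signals, the sketch below records a few per-turn fields and applies a simple heuristic to label a failed call. The `TurnRecord` fields, the thresholds, and the cause labels are all assumptions made for this example, and a production system would need far richer signals and calibration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TurnRecord:
    asr_confidence: float           # recognizer confidence for this turn, 0.0 to 1.0
    barge_in: bool                  # customer spoke over the agent
    silence_ms: int                 # silence before the customer replied
    tool_latency_ms: Optional[int]  # None if no tool was called in this turn
    customer_repeated: bool         # customer restated the previous request


def likely_failure_cause(turns: list[TurnRecord], outcome: str) -> Optional[str]:
    """Heuristic label for a failed call; thresholds are illustrative only."""
    if outcome == "resolved":
        return None
    if not turns:
        return "unclassified"
    low_asr = sum(t.asr_confidence < 0.6 for t in turns)
    slow_tools = sum((t.tool_latency_ms or 0) > 3000 for t in turns)
    repeats = sum(t.customer_repeated for t in turns)
    if low_asr * 2 >= len(turns):  # at least half the turns were poorly recognized
        return "audio_or_recognition"
    if slow_tools > 0:
        return "tool_latency"
    if repeats >= 2:
        return "misunderstanding"
    return "unclassified"


noisy_call = [
    TurnRecord(0.4, False, 500, None, True),
    TurnRecord(0.5, True, 300, None, True),
]
print(likely_failure_cause(noisy_call, "abandoned"))
```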

The realistic meaning of an agent operating system

Many companies now call their agent platforms operating systems. Fiserv talks about a banking agentOS. Other vendors talk about agent control planes. SoundHound OASYS uses similar language. It does not have to be dismissed as pure hype. For the operating-system metaphor to be useful, the platform needs to abstract resources, coordinate execution, and manage failure and authority.

The resources OASYS wants to abstract are voice channels and customer operations: phone networks, kiosks, vehicles, chat, POS, CRM, EHR, payments, and staff assist. Execution coordination appears in the idea of dynamically selecting and coordinating multiple agents inside one interaction. Failure and authority management appear as rule-based guardrails, human touchpoints, escalation, QA, and testing.

We still need customer cases and operating metrics to know how well this works. The launch materials use strong language, but concrete deployment metrics are limited. If OASYS is going to become more than a launch announcement, SoundHound will need industry-specific numbers such as containment rate, resolution rate, human-assist ratio, transaction completion, and deployment-cycle reduction. The "AI builds AI" claim needs operational evidence, not only demos.

Still, the direction is clear. Voice AI can no longer be explained only by speech quality. Real-time model APIs may commoditize quickly. The competition above them will be about who attaches deeply to customer systems, who builds safe workflows, who observes failures and improves them, and who integrates physical-world channels.

OASYS is a direct signal of that transition. SoundHound does not only want to be a good voice AI company. It wants to become the agent operations layer for field work and customer transactions. Whether that ambition succeeds is still open. But the question for developers and AI product teams is already sharp: does your voice agent speak well, or can it take responsibility for the operation end to end? Voice AI competition in 2026 may be decided by that answer.