OpenAI Codex mobile brings the approval loop to your hand

Codex is now inside the ChatGPT mobile app. AI coding agents are becoming remote work that developers supervise, steer, and approve from anywhere.

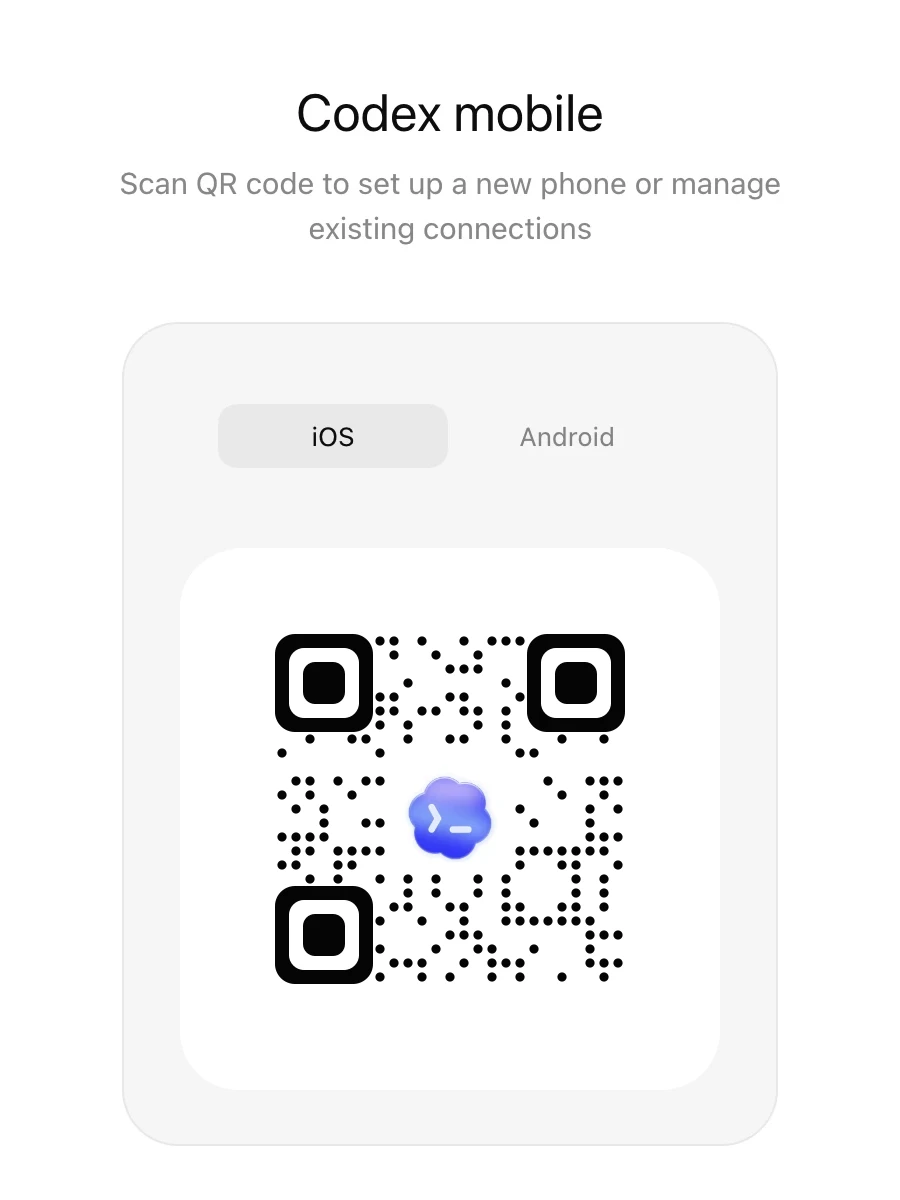

- What happened: OpenAI integrated Codex into the ChatGPT mobile app as a preview on May 14, 2026.

- On iOS and Android, users can inspect active threads, review output, approve commands, switch models, and start new work.

- Why it matters: AI coding on the phone is less about typing code and more about supervising long-running agents from wherever the developer happens to be.

- Developer impact: Files and credentials stay on the host, while the phone becomes a review, approval, and steering control plane.

- Remote SSH, access tokens, and Hooks point toward team-scale agent operations rather than a standalone mobile coding feature.

- Watch: The current flow centers on connecting the macOS Codex app; Windows connection support is still listed as coming later.

OpenAI has moved Codex onto the phone, though not in the sense that Codex now runs code there. In "Work with Codex from anywhere," published on May 14, 2026, OpenAI said Codex is entering the ChatGPT mobile app as a preview. Users can start or resume Codex work on iOS and Android, review outputs, approve commands, change direction, and add new tasks while away from the machine where the work is actually executing.

That distinction is the story. This is not a claim that a phone should replace an IDE. Codex still runs on a laptop, Mac mini, devbox, or managed remote environment. The mobile app sits above that environment as a remote supervision surface. Files, credentials, local settings, permissions, repository state, shell output, screenshots, diffs, test results, and approval requests remain tied to the execution machine, while the phone receives the pieces a human needs to make a short decision.

So the announcement is bigger than a "mobile coding app" update. As AI coding agents become longer-running workers, the human role changes from constant operator to intermittent reviewer. The most important interface is no longer only the editor pane. It is the approval prompt, task log, test result, diff review, and branch decision. Codex mobile preview is one of the first mainstream product surfaces designed around that supervision loop.

The point is not coding on a phone

OpenAI's announcement frames the mobile feature around staying connected to "active work." A developer can answer Codex's questions while moving between meetings, inspect what it found, pick a direction, approve the next command, or add a new idea. OpenAI also said Codex has passed four million weekly users, which matters because this is no longer an isolated demo surface. It is a large product trying to support real developer workflows.

The deeper shift is rhythm, not location. Coding agents move more slowly than short chat answers. They reproduce bugs, read files, run tests, interpret failures, edit code, and verify the result. In the middle, they often need judgment. Should they take the conservative patch or broaden the refactor? Is this command allowed? Should they keep investigating the failing integration test? Is the current diff acceptable enough to continue?

Until now, many of those pauses were bound to the desk. If Codex asked a question while the user was commuting, in a meeting, or away from the laptop, the agent waited. The user also had to keep reopening the machine just to know whether the task had reached a blocking point. Mobile integration reduces that waiting time. The developer is still not doing deep code review on a small screen, but they can move the agent past a narrow decision point.

That may sound like a minor convenience feature. It becomes more important when agents enter actual team work. Long refactors, test-failure investigations, customer issue reproduction, release preparation, and documentation updates all involve short approval steps. If a phone can handle those steps, the developer's focused time may not magically increase, but the agent's idle time can decrease.

The security model depends on keeping code off the phone

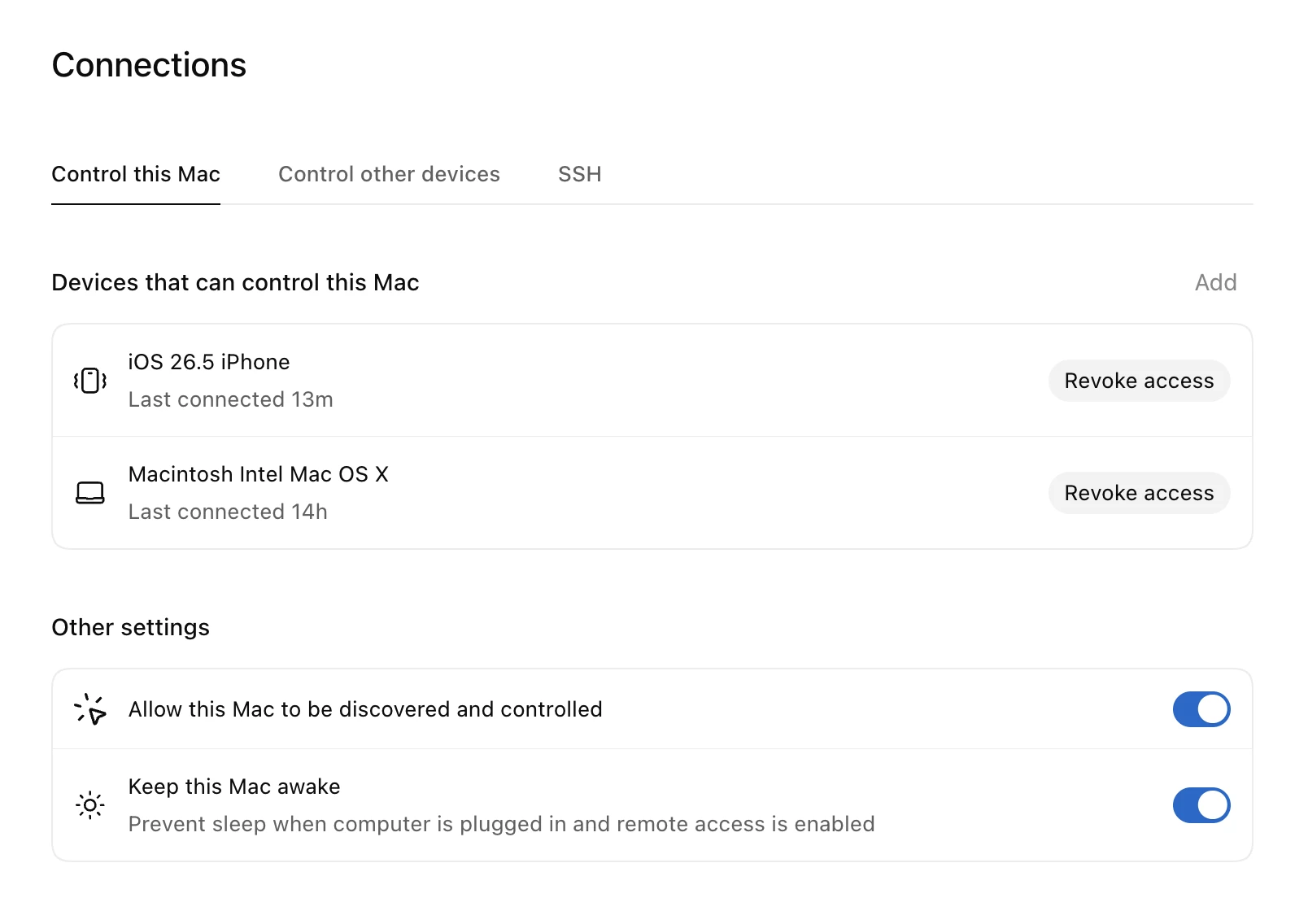

OpenAI repeatedly describes the boundary around this feature. The phone is not the replacement for the Codex execution environment. Project files, local documents, credentials, permissions, development tools, and browser sessions stay on the connected host or remote environment. The mobile app observes, reviews, and approves state from that environment.

The official announcement says Codex uses a secure relay layer. The goal is to make trusted machines reachable from authorized ChatGPT devices without directly exposing those machines to the public internet. OpenAI's Remote connections documentation states the same principle in operational terms: repository files and local documents remain on the connected host, shell commands run on that host or remote environment, and sandboxing, security controls, and action approvals continue to apply to the connected session.

That design answers one of the first questions teams will ask: does the phone now contain the repository? In the default model, no. Losing a phone is still serious, and mobile device authorization matters, but the repository and credentials are not supposed to be copied into a phone-based development environment.

The risk does not disappear. If the phone can approve a command, the phone can approve the wrong command. Deployment steps, data deletion, schema migrations, production credential access, and security-sensitive changes are difficult to evaluate from a small screen. A mobile control plane can reduce latency, but it does not design an approval policy for the team.

That makes the implementation question very practical: what should be allowed from mobile? Re-running tests, gathering logs, drafting documentation, and steering non-production refactors fit the model well. Production deployments, database changes, customer data access, and billing or authentication edits usually deserve full desktop context and a more deliberate review path.

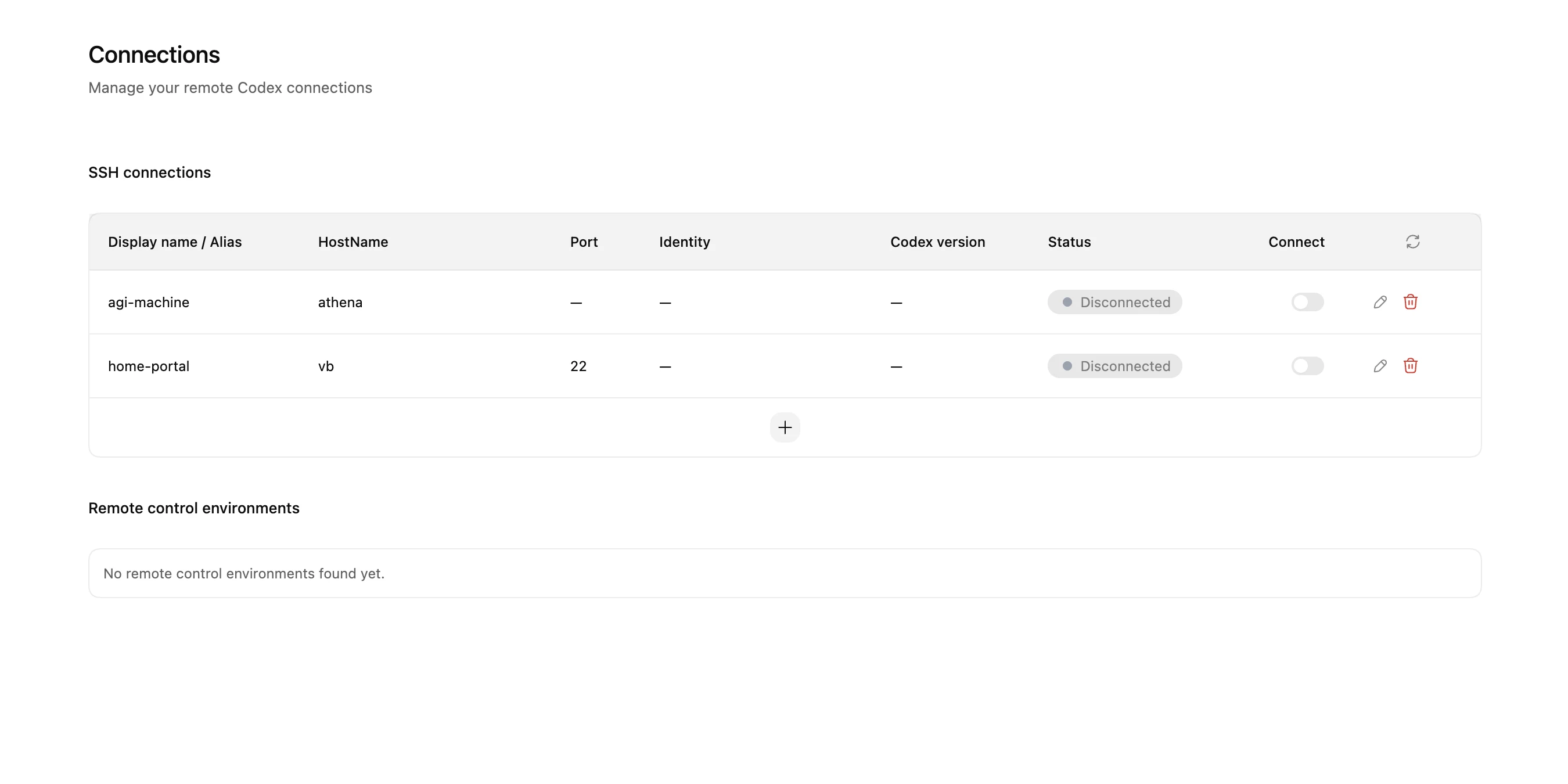

Remote SSH and access tokens show the larger strategy

If you only look at the mobile preview, this can feel like a feature for individual developers. But OpenAI bundled it with Remote SSH, programmatic access tokens, and Hooks, and those pieces reveal a broader attempt to move Codex from a personal productivity app toward an agent operations layer.

Remote SSH matters for teams that already develop in managed environments. Many companies do not want critical work happening on unconstrained personal laptops. They use devboxes, bastion-protected servers, approved dependency mirrors, managed credentials, and remote machines with specific network access. OpenAI says Remote SSH is generally available, and Codex can detect hosts from SSH configuration to create remote projects and threads. The developer documentation explains that when a user works with an SSH host or managed devbox, the Codex app host connects to that environment first, and the phone connects to the Codex app host.
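How Codex itself detects hosts is not described here, but the file it reads from is a standard one. As a rough illustration of what "detect hosts from SSH configuration" involves, the sketch below lists concrete `Host` aliases from an OpenSSH client config and skips wildcard and negated patterns. It is a simplified assumption of the idea, not Codex's implementation: it ignores `Include` and `Match` directives and quoted arguments.

```python
"""Sketch: enumerate candidate remote hosts from an OpenSSH client config.

Illustrative only; this is NOT how Codex implements host detection.
Ignores Include/Match directives and quoted arguments.
"""
from pathlib import Path


def list_ssh_hosts(config_text: str) -> list[str]:
    """Return concrete host aliases from `Host` lines, in file order."""
    hosts: list[str] = []
    for raw_line in config_text.splitlines():
        line = raw_line.strip()
        # Skip blank lines and comments.
        if not line or line.startswith("#"):
            continue
        # ssh_config allows "Keyword value" or "Keyword=value"; keywords are case-insensitive.
        parts = line.replace("=", " ", 1).split(None, 1)
        if len(parts) < 2 or parts[0].lower() != "host":
            continue
        for pattern in parts[1].split():
            # Wildcards ('*', '?') and negations ('!') are patterns, not concrete hosts.
            if any(ch in pattern for ch in "*?!"):
                continue
            if pattern not in hosts:
                hosts.append(pattern)
    return hosts


def load_default_hosts() -> list[str]:
    """Read the current user's ~/.ssh/config if it exists."""
    path = Path.home() / ".ssh" / "config"
    if not path.exists():
        return []
    return list_ssh_hosts(path.read_text(encoding="utf-8"))
```

Each returned alias is a candidate remote environment; the connection itself would still go through the user's normal SSH setup on the host machine, not through the phone.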

That is a realistic answer to where an agent should run. If the company repository, internal packages, private APIs, test data, and permission model all live inside a remote environment, the coding agent needs to run there too. The phone is not an SSH client dropped into the middle of that network. It is a thin control surface for approvals and status.

Programmatic access tokens push the story toward automation. OpenAI's Access tokens documentation describes them as workspace identity credentials for running Codex without browser login. CI pipelines, release workflows, and internal automations are the obvious use cases. The same documentation tells teams to treat tokens like automation secrets: keep them in a secret manager, avoid logging them, and rotate them regularly.
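The three practices in that guidance (keep tokens in a secret manager, never log them, rotate them regularly) can be made concrete in a few lines. The sketch below assumes, hypothetically, that a CI secret manager injects the token into the job environment as `CODEX_ACCESS_TOKEN` and that the team rotates tokens every 90 days; neither the variable name nor the window comes from OpenAI's documentation.

```python
"""Sketch of access-token hygiene for an automation job.

Hypothetical assumptions: the secret manager injects the token as
CODEX_ACCESS_TOKEN, and team policy is a 90-day rotation window.
"""
from datetime import date, timedelta

ROTATION_WINDOW = timedelta(days=90)  # hypothetical team policy, not an OpenAI default


def load_token(env: dict[str, str]) -> str:
    """Fetch the token from the injected environment; never hard-code it."""
    token = env.get("CODEX_ACCESS_TOKEN", "")
    if not token:
        # The error names the variable, never the secret value.
        raise RuntimeError("CODEX_ACCESS_TOKEN is not set; fetch it from the secret manager")
    return token


def redact(text: str, secret: str) -> str:
    """Replace every occurrence of the secret before the text reaches a log."""
    return text.replace(secret, "[REDACTED]") if secret else text


def needs_rotation(issued: date, today: date) -> bool:
    """True once the token is at least ROTATION_WINDOW old."""
    return today - issued >= ROTATION_WINDOW
```

A job would load the token once, pass every log line through `redact`, and warn or fail when `needs_rotation` returns true, so an expired rotation shows up in CI rather than in an incident review.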

Hooks add policy and observability around agent execution. OpenAI's announcement describes using Hooks for prompt secret scanning, validators, conversation logging, memory generation, and repository-specific Codex behavior. Once agents can run for a long time, be approved from mobile, and execute through CI or internal automation, organizations will need to answer an audit question: who approved which action, under what repository policy, with which validation afterward? Hooks are an early surface for inserting those controls.
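The audit question above maps naturally onto a structured log record, and prompt secret scanning onto a pattern check. The sketch below shows only that policy logic; how a real Codex Hook receives its input and reports a block is defined by OpenAI's Hooks documentation and is not modeled here. The function and field names are hypothetical, and the patterns are a small illustrative set (AWS access key IDs, PEM private-key headers, classic GitHub personal access tokens), far from a complete scanner.

```python
"""Hypothetical hook logic: scan a prompt for secrets, then emit an audit record.

This is NOT the Codex Hooks interface; it only shows policy logic a hook might run.
"""
import json
import re
from dataclasses import asdict, dataclass

# Illustrative patterns only; production scanners use far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
}


def find_secrets(prompt: str) -> list[str]:
    """Return names of matching patterns; a non-empty list means block the prompt."""
    return [name for name, rx in SECRET_PATTERNS.items() if rx.search(prompt)]


@dataclass
class AuditRecord:
    approver: str            # who approved
    action: str              # which action
    repo_policy: str         # under what repository policy
    validation: str          # which validation ran afterward
    validation_passed: bool  # and whether it passed


def to_log_line(record: AuditRecord) -> str:
    """Serialize as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

One JSON line per approved action keeps the log greppable and easy to ship to whatever audit store the organization already uses.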

Competition is moving beyond model quality

AI coding tools spent a long time competing over which model writes better code. That still matters, but the 2026 product race is shifting. The operational questions are becoming just as important: where does the agent run, how does it get permission, what authority does it have, how are failures rolled back, and how does the organization observe what happened?

Anthropic is pushing Claude Code and the Agent SDK across interactive coding and programmatic automation. GitHub Copilot is building asynchronous coding-agent workflows inside GitHub and VS Code. Cursor, Replit, Warp, Coder, and other developer-tool companies are also trying to own the agent run, dev environment, pull request, and audit trail. OpenAI's Codex mobile integration is a move to own a specific moment in that chain: the moment a running agent needs a human.

Mobile is not just another distribution channel here. It is the fastest notification device most users carry. Compared with an IDE toast, email, GitHub notification, or Slack thread, the ChatGPT mobile app can become a direct approval route for Codex. From OpenAI's perspective, placing Codex inside ChatGPT is natural because the account, plan, workspace, device authorization, mobile notification channel, and conversation context already live there.

That same integration creates friction. In an r/codex discussion on Reddit, some users noted that the ChatGPT account on their phone is personal while their work Codex account is separate. Others wanted a standalone Codex mobile app. Some Android users said the feature had not yet appeared for them, and others raised concerns about connection stability when networks change. The broad reaction was not rejection but something more precise: the direction makes sense, but enterprise adoption depends on account separation, rollout reliability, connection stability, and approval UX.

A small approval button can carry a lot of authority

Mobile approval can speed up agent work, but it also spreads responsibility. Coding agents are not ordinary chatbots. They can edit files, run commands, control browsers, call internal tools, and interact with credentials. When Codex asks on a phone whether a command should run, the user is exercising real authority from a small interface.

Teams should therefore define approval layers before turning mobile control into a norm. Read-only research, test execution, linting, documentation drafts, and log collection can be low-risk mobile actions. Dependency installation, broad file rewrites, migration generation, and external network calls may need more caution. Production deploys, secret access, customer data access, destructive database commands, and billing or authentication changes should not be approved casually from a notification card.
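One way to keep those layers from living only in people's heads is to encode them as a lookup that the approval path consults. The sketch below is hypothetical: the category labels mirror the layers described above but are not Codex configuration keys, and the `device` argument stands in for however a team would know where an approval came from. The main design choice is failing closed, so an action the policy does not recognize is treated as desktop-only.

```python
"""Hypothetical three-tier approval policy for agent actions.

Category labels are illustrative and are not Codex settings.
"""
from enum import Enum


class Tier(Enum):
    MOBILE_OK = "approve from mobile"
    CAUTION = "approve with extra review"
    DESKTOP_ONLY = "desktop review required"


POLICY = {
    Tier.MOBILE_OK: {"read_only_research", "run_tests", "lint", "draft_docs", "collect_logs"},
    Tier.CAUTION: {"install_dependency", "broad_rewrite", "generate_migration", "external_network"},
    Tier.DESKTOP_ONLY: {"production_deploy", "secret_access", "customer_data",
                        "destructive_db", "billing_or_auth_change"},
}


def tier_for(category: str) -> Tier:
    """Look up an action category; unknown actions fall into the strictest tier."""
    for tier, categories in POLICY.items():
        if category in categories:
            return tier
    return Tier.DESKTOP_ONLY


def can_approve(category: str, device: str) -> bool:
    """Phones may approve only MOBILE_OK actions; a desktop may approve any tier."""
    if device == "mobile":
        return tier_for(category) is Tier.MOBILE_OK
    return True
```

Keeping CAUTION off the phone is a conservative reading of "may need more caution"; a team could instead allow it from mobile with a second approver.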

The access-token warning points in the same direction. A token runs with the creator's workspace identity, which means a leaked token can let someone else start Codex runs as that user. Public CI, forked pull requests, and shared machines deserve special care. Mobile approvals and automation tokens are different mechanisms, but they share the same governance problem: an agent is borrowing a person or organization's authority.
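For the public-CI and forked-pull-request case, a GitHub Actions job can check where a pull request came from before it touches the token. `GITHUB_EVENT_NAME` and the event payload at `GITHUB_EVENT_PATH` are standard GitHub Actions inputs; the policy of refusing the token whenever the head repository differs from the base repository is an illustrative assumption, not OpenAI guidance.

```python
"""Sketch: should this GitHub Actions run be allowed to use a Codex access token?

Reads GITHUB_EVENT_NAME and the JSON payload at GITHUB_EVENT_PATH.
The fork policy here is an illustrative assumption.
"""
import json
import os

PR_EVENTS = {"pull_request", "pull_request_target"}


def is_fork_pr(event_name: str, payload: dict) -> bool:
    """True when a PR's head repository is missing or differs from the base."""
    if event_name not in PR_EVENTS:
        return False
    pr = payload.get("pull_request") or {}
    head = (pr.get("head") or {}).get("repo") or {}
    base = (pr.get("base") or {}).get("repo") or {}
    # A missing head repo cannot be verified, so treat it as untrusted.
    return not head or head.get("full_name") != base.get("full_name")


def token_allowed(event_name: str, payload: dict) -> bool:
    """Block token use for fork PRs, whose code the team has not reviewed."""
    return not is_fork_pr(event_name, payload)


def token_allowed_from_env() -> bool:
    """Apply the check to the current run; fail closed outside GitHub Actions."""
    event_path = os.environ.get("GITHUB_EVENT_PATH")
    if not event_path:
        return False
    with open(event_path, encoding="utf-8") as fh:
        return token_allowed(os.environ.get("GITHUB_EVENT_NAME", ""), json.load(fh))
```

GitHub already withholds repository secrets from `pull_request` runs triggered by forks, so a check like this matters most for `pull_request_target` workflows, which run with the base repository's secrets available.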

What changes in the developer's day

For an individual developer, the obvious change is reduced waiting. They can start a bug investigation on the way to work, choose between two proposed fixes from a phone, approve a log-gathering command between meetings, or drop a refactor idea into a new thread during lunch and inspect the diff later at a desk.

That can be useful. It can also make work feel more ambient. If the phone becomes an agent approval device, the boundary between work time and off time becomes easier to blur. Healthy teams will distinguish "this can be approved from mobile" from "this must be answered immediately." A blocked agent is not always an emergency.

At team scale, agent queues and approval expectations may become more explicit. A junior developer could ask an agent to prepare a small change and have a senior engineer steer the path from mobile. An SRE could start an incident-analysis thread that collects logs and runbook context, while reserving actual remediation for desktop review. A product manager could ask Codex to assemble issue context before an engineering conversation, with the developer reviewing only the code diff later.

The larger point is that Codex mobile preview is closer to "the coding agent has a channel for calling the human" than "developers now code on phones." If that channel works well, agents can run longer with less idle time. If it works poorly, teams get hasty approvals, account confusion, network instability, and permission mistakes.

What to watch next

The first thing to watch is rollout scope. OpenAI says the preview covers iOS and Android, all plans including Free and Go, and supported regions. In practice, availability can still depend on app version, region, workspace settings, Remote Control permissions, and SSO, MFA, or passkey flows. Windows connection support remains listed as coming later.

The second watch point is remote-environment reliability. If the feature works only with a personal Mac on a stable home network, it stays a convenience feature. If it works cleanly with Remote SSH and managed devboxes, it becomes more relevant to enterprise development.

The third is governance. Access tokens and Hooks may look like secondary updates next to the mobile announcement, but they are central to team adoption. Long-running agent sessions, mobile approval, and CI automation need audit logs, token rotation, approval policy, secret scanning, and repository-specific rules.

Finally, watch the competitive surface. AI coding tools are no longer single model calls. They are bundles of environment access, permissioning, notifications, remote execution, review, cost control, and failure handling. By putting Codex in the ChatGPT mobile app, OpenAI is trying to own the developer's short judgment moments. The screen developers see most often may no longer be just a prompt box; it may be a small decision card asking whether the agent should continue, run a command, or accept a diff.

Codex mobile preview makes that shift visible. Coding agents are working longer, connecting to more environments, and asking humans for permission more often. The phone is the nearest device for that permission. The remaining question is no longer whether the technology can reach the phone. It is which kinds of work should be approved there.