Codex comes to the phone, and the bottleneck is approval

The OpenAI Codex mobile preview shows that competition among AI coding agents is moving beyond model quality toward approvals, supervision, and remote execution.

- What happened: OpenAI brought Codex into the ChatGPT mobile app as a preview.

- The rollout spans iOS and Android across plans including Free and Go, while the current connection flow centers on a macOS Codex host.

- Core shift: Developers can start threads, steer work, approve commands, and review diffs or test results from a phone.

- Why it matters: The bottleneck for coding agents is moving from code generation speed to where humans approve work.

- Watch: Faster mobile approvals can reduce idle time, but they also create a new path for approving the wrong command too quickly.

OpenAI brought Codex into the ChatGPT mobile app on May 14, 2026, in an announcement titled "Work with Codex from anywhere." The short version is easy to grasp: Codex is now on the phone. But reading this as just another mobile app feature misses the more important shift. The execution layer and the supervision layer for coding agents are starting to separate.

Until recently, competition in AI coding agents was usually described through three questions. How well does the model write code? How safely can the tool touch local files and terminal commands? How long can it keep working across a repository without losing the plot? Those questions still matter. Yet anyone who has actually let an agent run for a while has seen a different bottleneck appear. The agent stops because it needs a human to approve a command. It stops because a dependency install needs permission. It stops because there are two plausible implementation paths and it needs a call. It stops because a test failed and a person needs to decide whether to narrow the scope, rerun the suite, or change direction.

OpenAI's mobile preview moves that bottleneck into the user's pocket. Codex can continue running on a laptop, Mac mini, devbox, or managed remote environment, while the developer uses the ChatGPT mobile app to track the session. From the phone, the user can start new work, continue an existing thread, answer Codex's questions, approve execution, and review results. OpenAI also said Codex now has more than four million weekly users, and framed small interventions as the moments that keep long-running work moving.

That number is not just a vanity metric. If more than four million people use Codex every week, the next product race is not only about smarter code generation. It is about fitting the agent into the daily rhythm of software work. Can a developer start a bug investigation at lunch? Can they choose a refactor direction on the commute? Can they ask Codex to summarize the latest state of a customer issue right before a meeting? OpenAI's examples are about developer productivity, but the broader message is that coding agents are becoming background workers that can keep operating while the user is away from the desk.

The real announcement is remote execution

OpenAI's mobile preview is not simply remote screen sharing. It is less like controlling one computer from a phone and more like surfacing the live state of the Codex execution environment inside ChatGPT mobile. The announcement says the app can handle active threads, plugins, project context, screenshots, terminal output, diffs, test results, and approval requests in real time.

The boundary matters. Files, credentials, permissions, and local settings remain on the machine where Codex is actually working. The phone is not carrying the whole development environment. It is a supervision and input surface. OpenAI's documentation describes the connection through a secure relay layer, which makes trusted machines reachable from authorized devices without directly exposing the machine to the public internet.

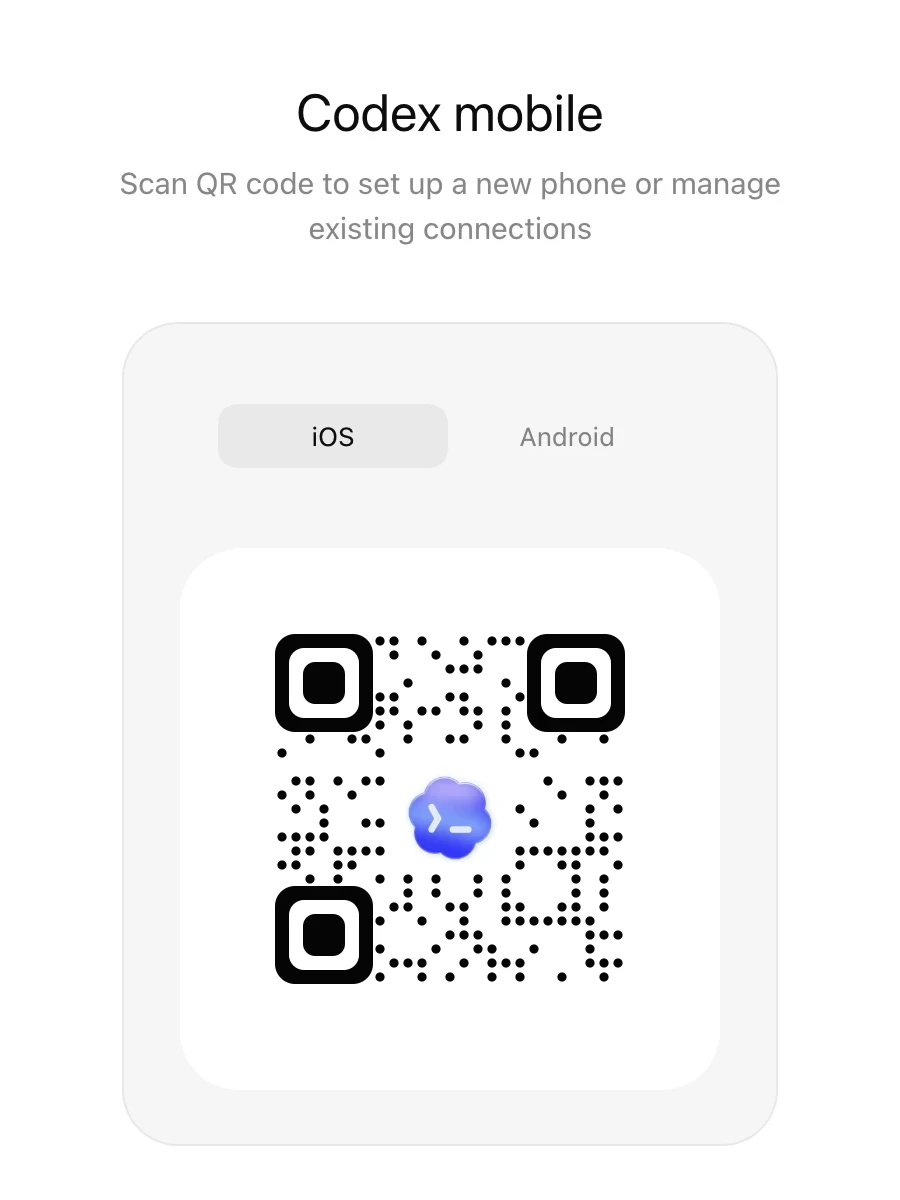

The Codex remote connections documentation makes the product intent clear. A user starts mobile setup from the Codex app on the host they want to connect. They scan a QR code with the phone and finish the connection in ChatGPT. The host must stay awake, connected to the network, and running Codex for the remote session to continue. In other words, "Codex mobile" does not mean the phone is building the code. The code still lives and runs inside the development environment. The phone becomes the approval device for that environment.
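To make the pairing and liveness requirements concrete, here is a toy in-process model in Python. It is a hypothetical sketch, not OpenAI's relay protocol. The `Relay` class, the one-time pairing code standing in for the QR code, and the online/offline flags are all illustrative assumptions. The model captures two properties the documentation describes: a phone can reach a host only after pairing, and nothing reaches the host once it sleeps, drops off the network, or stops running Codex.

```python
from __future__ import annotations

import secrets
from dataclasses import dataclass, field


@dataclass
class Relay:
    """Toy relay between paired phones and Codex hosts.

    Hypothetical model for illustration only; not OpenAI's actual relay protocol.
    """

    pending_codes: dict[str, str] = field(default_factory=dict)  # pairing code -> host id
    pairings: dict[str, str] = field(default_factory=dict)       # device id -> host id
    online_hosts: set[str] = field(default_factory=set)

    def host_online(self, host_id: str) -> None:
        # The host connects outward to the relay, so it needs no open inbound port.
        self.online_hosts.add(host_id)

    def host_offline(self, host_id: str) -> None:
        # The host slept, lost the network, or stopped running Codex.
        self.online_hosts.discard(host_id)

    def start_pairing(self, host_id: str) -> str:
        # The host displays this one-time code (a QR code in the real flow).
        code = secrets.token_urlsafe(16)
        self.pending_codes[code] = host_id
        return code

    def complete_pairing(self, device_id: str, code: str) -> bool:
        # The phone submits the scanned code; pop() makes each code single-use.
        host_id = self.pending_codes.pop(code, None)
        if host_id is None:
            return False
        self.pairings[device_id] = host_id
        return True

    def send(self, device_id: str, message: str) -> str:
        # Messages are forwarded only for paired devices and only to live hosts.
        host_id = self.pairings.get(device_id)
        if host_id is None:
            raise PermissionError("device is not paired with any host")
        if host_id not in self.online_hosts:
            raise ConnectionError(f"host {host_id} is offline; the session cannot continue")
        return f"{host_id} <- {message}"
```

In this model, calling `host_offline` is enough to stop every paired phone from reaching the host. That mirrors the requirement that the host stay awake and connected.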

- ChatGPT mobile app: questions, approvals, steering, diff review

- OpenAI secure relay: session state and context sync

- Codex host (local machine, Mac mini, devbox, or managed remote environment): files, permissions, credentials, terminal, test execution

This structure fits the larger evolution of AI coding products from IDE plugins into operational consoles. Autocomplete inside an IDE helps with the file the user is viewing. A terminal-style agent can read the repository and run commands. A mobile supervision layer lets the user decide the agent's next action even when they are not in front of the computer. At that stage, product quality depends not only on code generation, but on when the system asks a human to intervene.

The adjacent features explain the direction

If the mobile preview is viewed alone, it can look like a consumer app update. OpenAI announced it alongside general availability for Remote SSH, general availability for Hooks, programmatic access tokens, and HIPAA support for Enterprise local environments. That bundle is not accidental.

Remote SSH lets Codex enter an approved remote development environment. Many teams already do serious work outside a personal laptop: managed devboxes, internal remote machines, and build environments shaped by security policy. Codex can treat those environments as project workspaces, while mobile brings the state of that work back to the user's hand. Execution happens on the controlled machine. Intervention happens wherever the user is.

Hooks put organizational policy inside the Codex execution flow. OpenAI's developer documentation includes examples such as scanning prompts for secrets, running validators, logging conversations, generating memory, and tailoring behavior for a repository. When a coding agent is only a personal productivity tool, the main question is whether the model understood the instruction. In an enterprise environment, the question becomes what policy is checked before a command runs. Mobile approval makes this more important, because a user can approve from a smaller, more distracted context. Automated checks around that approval path become part of the safety model.
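As a rough illustration of the secret-scanning example, the check such a hook might run can be sketched as a plain Python function. This sketch does not use Codex's actual hook interface, and it makes no assumptions about that interface's input or output format. `SECRET_PATTERNS` and `check_prompt` are hypothetical names. The three regexes cover only a few well-known token shapes, whereas a production scanner would use a much larger rule set.

```python
import re

# Hypothetical, deliberately small rule set; real scanners ship many more patterns.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_pat": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def find_secrets(text: str) -> list[str]:
    """Return the names of the secret patterns that appear anywhere in the text."""
    return [name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text)]


def check_prompt(prompt: str) -> tuple[bool, str]:
    """Allow the prompt unless something secret-like is found, in which case block it."""
    hits = find_secrets(prompt)
    if hits:
        return False, "blocked: prompt appears to contain " + ", ".join(hits)
    return True, "ok"
```

The same function could run before a mobile approval is delivered. A prompt that leaks a key is then stopped automatically instead of depending on someone spotting it on a small screen.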

Programmatic access tokens point in the same direction. OpenAI describes them as a way for trusted automation or CI runners to run Codex with a ChatGPT workspace identity. The point is not a generic OpenAI API key. It is an identity path tied to ChatGPT workspace permissions, Codex entitlement, and enterprise governance. Codex is increasingly positioned between a personal app and an organizational automation layer.

Put these pieces together and the picture becomes clearer. Development environments are being standardized remotely. Hooks and policy sit around execution. Automation uses workspace identity. Human decisions move to mobile. The coding-agent race is shifting from a pure model benchmark race to an operations race.

The upside for developers is concrete

The most direct benefit is lower idle time. Coding agents need human judgment during long tasks. They ask whether a test may run, whether a dependency may be installed, which implementation path to choose, whether a flaky-looking failure should be rerun, or whether the scope should be reduced. If the user leaves the desk, the work stops. Mobile approval reduces that pause.

The second benefit is lower friction at task start. Opening a repository, creating a branch, and shaping a first instruction can be more work than the idea deserves in the moment. If a developer can open a phone and ask Codex to reproduce a bug or collect likely causes, the investigation can begin before the developer returns to a full workstation. That seems small, but it can change how often the tool is used. AI tools become stickier when they are things you can toss work into at the moment the thought appears.

The third benefit is a shorter review loop. If Codex can show diffs and test results on the phone, a user can at least judge whether the direction is sound. Deep code review is still difficult on a phone. But high-level decisions are possible: this approach is wrong, do not touch that file, narrow the test scope, split this issue out, stop refactoring and keep the patch minimal. Those interventions can prevent a long-running agent from continuing in the wrong direction.
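Steering instructions like "do not touch that file" or "keep the patch minimal" become more reliable when a tool checks them mechanically instead of relying on the agent to remember them. The following is a minimal sketch, assuming the agent can report which paths a proposed patch touches. `SteeringConstraints` and `check_patch` are hypothetical names. The globs use Python's `fnmatch`, where `*` also matches `/`.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from fnmatch import fnmatch


@dataclass
class SteeringConstraints:
    """Hypothetical constraints a user might set from the phone mid-task."""

    forbidden_globs: list[str] = field(default_factory=list)  # "do not touch that file"
    max_files_changed: int | None = None                       # "keep the patch minimal"


def check_patch(touched: list[str], c: SteeringConstraints) -> list[str]:
    """Return readable violations; an empty list means the patch respects the constraints."""
    problems: list[str] = []
    for path in touched:
        for glob in c.forbidden_globs:
            if fnmatch(path, glob):
                problems.append(f"{path} matches forbidden pattern {glob!r}")
                break  # one report per path is enough
    if c.max_files_changed is not None and len(touched) > c.max_files_changed:
        problems.append(f"{len(touched)} files changed; limit is {c.max_files_changed}")
    return problems
```

For example, `SteeringConstraints(forbidden_globs=["migrations/*"], max_files_changed=3)` would flag any patch that edits a migration or spreads across more than three files.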

The fourth benefit is the fit with remote development environments. If Codex keeps running on a managed devbox or Mac mini, the workflow is less tied to the user's laptop battery or local network state. Secrets and dependencies can stay inside a company-approved environment, while the user supervises from mobile. From a security team's perspective, that is easier to explain than copying every development asset onto a phone.

A small approval screen is also a risk surface

Axios covered the update and noted that approving agent actions from a small screen while multitasking can increase the chance of mistakes. That concern should not be dismissed. A coding agent's approve button is not the same as clearing a notification. In some cases, it can lead to file deletion, package installation, network access, test-environment changes, or access to repositories containing secrets.

Phones are good for quick decisions and weak for deep inspection. The screen is small, diffs are long, and terminal logs are compressed. The user may be walking, sitting between meetings, or checking quickly during a commute. If the agent asks for command approval and the user does not understand exactly what is being approved, mobile access becomes an incident path rather than a productivity feature.
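One way to make long diffs reviewable on a phone is to show a per-file summary before any code. The sketch below parses standard unified diff text, the format `git diff` emits, into added and removed line counts per file. It illustrates the idea under stated assumptions and does not describe a Codex feature. `summarize_diff` and `format_for_phone` are hypothetical. The parser uses hunk headers to separate content lines from file headers, so it still counts a removed line correctly when that line happens to start with `---`.

```python
import re
from dataclasses import dataclass

# Matches "@@ -12,5 +12,7 @@"; an omitted count (as in "@@ -3 +3 @@") means 1.
HUNK_RE = re.compile(r"^@@ -\d+(?:,(\d+))? \+\d+(?:,(\d+))? @@")


@dataclass
class FileStat:
    path: str
    added: int = 0
    removed: int = 0


def _strip_ab(path: str) -> str:
    # git prefixes paths with "a/" (old side) and "b/" (new side).
    return path[2:] if path.startswith(("a/", "b/")) else path


def summarize_diff(diff_text: str) -> list[FileStat]:
    stats: list[FileStat] = []
    old_path = ""
    old_left = new_left = 0  # lines still expected in the current hunk
    for line in diff_text.splitlines():
        if old_left > 0 or new_left > 0:
            # Inside a hunk, the first character classifies the line.
            if line.startswith("+"):
                stats[-1].added += 1
                new_left -= 1
            elif line.startswith("-"):
                stats[-1].removed += 1
                old_left -= 1
            elif not line.startswith("\\"):  # skip "\ No newline at end of file"
                old_left -= 1  # context lines count on both sides
                new_left -= 1
            continue
        if line.startswith("--- "):
            old_path = line[4:]
        elif line.startswith("+++ "):
            new_path = line[4:]
            # A deleted file has "+++ /dev/null"; report it under its old name.
            stats.append(FileStat(_strip_ab(old_path if new_path == "/dev/null" else new_path)))
        elif (m := HUNK_RE.match(line)) and stats:
            old_left = int(m.group(1) or 1)
            new_left = int(m.group(2) or 1)
    return stats


def format_for_phone(stats: list[FileStat], max_files: int = 5) -> str:
    lines = [f"{s.path}  +{s.added} -{s.removed}" for s in stats[:max_files]]
    if len(stats) > max_files:
        lines.append(f"...and {len(stats) - max_files} more files")
    total_added = sum(s.added for s in stats)
    total_removed = sum(s.removed for s in stats)
    lines.append(f"{len(stats)} files, +{total_added} -{total_removed}")
    return "\n".join(lines)
```

A summary like this does not replace reading the diff. It does let a reviewer notice at a glance that a "small fix" touched twelve files.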

Good mobile UX for coding agents therefore cannot stop at making the approval button convenient. It needs risk separation. Read-only investigation, test execution, file creation, dependency installation, network access, and deployment-related commands carry different weights. On a phone, there should be a clear distinction between low-risk approval and approval that should wait for desktop review. This is also why OpenAI's emphasis on Hooks and enterprise controls matters. A human should not have to evaluate every risk from a small screen every time.
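The risk separation described above can be sketched as a classifier that maps a proposed shell command to a tier, with only the lowest tiers eligible for phone approval. Everything here is a hypothetical illustration. The `Risk` tiers mirror the categories in the paragraph, the prefix rules are examples rather than a complete policy, and the cutoff `MOBILE_OK_MAX` is an assumption each team would set for itself. Two design choices matter more than the individual rules: unknown commands default to the highest tier, and the classifier never judges a compound or redirected command by its first word.

```python
import shlex
from enum import IntEnum


class Risk(IntEnum):
    READ_ONLY = 0
    TEST = 1
    FILE_WRITE = 2
    DEPENDENCY = 3
    NETWORK = 4
    DEPLOY_OR_DESTRUCTIVE = 5


# Hypothetical prefix rules, matched against the command's leading tokens.
RULES: list[tuple[tuple[str, ...], Risk]] = [
    (("git", "status"), Risk.READ_ONLY),
    (("git", "diff"), Risk.READ_ONLY),
    (("ls",), Risk.READ_ONLY),
    (("cat",), Risk.READ_ONLY),
    (("pytest",), Risk.TEST),
    (("npm", "test"), Risk.TEST),
    (("mkdir",), Risk.FILE_WRITE),
    (("touch",), Risk.FILE_WRITE),
    (("pip", "install"), Risk.DEPENDENCY),
    (("npm", "install"), Risk.DEPENDENCY),
    (("curl",), Risk.NETWORK),
    (("wget",), Risk.NETWORK),
    (("git", "push"), Risk.DEPLOY_OR_DESTRUCTIVE),
    (("rm",), Risk.DEPLOY_OR_DESTRUCTIVE),
]

SHELL_META = set(";&|<>$`\n")  # chaining, pipes, redirects, substitution
MOBILE_OK_MAX = Risk.TEST      # anything above this waits for desktop review


def classify(command: str) -> Risk:
    if any(ch in SHELL_META for ch in command):
        # "ls && rm -rf build" must not be judged by its first word.
        return Risk.DEPLOY_OR_DESTRUCTIVE
    try:
        tokens = shlex.split(command)
    except ValueError:  # for example, unbalanced quotes
        return Risk.DEPLOY_OR_DESTRUCTIVE
    for prefix, risk in RULES:
        if tuple(tokens[: len(prefix)]) == prefix:
            return risk
    return Risk.DEPLOY_OR_DESTRUCTIVE  # unknown commands get the strictest tier


def can_approve_on_mobile(command: str) -> bool:
    return classify(command) <= MOBILE_OK_MAX
```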

Development teams should set policy before mobile approval becomes normal. They can decide which actions are acceptable from a phone and which must be deferred. Test runs, linting, read-only investigation, and draft PR creation may be good mobile candidates. Production credential access, migrations, deployment, destructive commands, and large file deletions should usually stay out of casual mobile approval. Without Codex Hooks, repository policy, and CI guardrails, mobile approval can become a shortcut around the team's control system.
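A team could record this split as data instead of leaving it to each person's judgment on a phone. The policy table below is a hypothetical sketch. The category names, the `decide` function, and the 200-line threshold for a "large" deletion are illustrative assumptions, not Codex settings. Unknown categories fall back to desktop review, so an unclassified action fails safe.

```python
from dataclasses import dataclass
from enum import Enum


class Surface(Enum):
    MOBILE_OK = "mobile_ok"
    DESKTOP_ONLY = "desktop_only"


# Hypothetical team policy mirroring the mobile/desktop split described above.
TEAM_POLICY: dict[str, Surface] = {
    "read_only_investigation": Surface.MOBILE_OK,
    "test_run": Surface.MOBILE_OK,
    "lint": Surface.MOBILE_OK,
    "draft_pr": Surface.MOBILE_OK,
    "production_credentials": Surface.DESKTOP_ONLY,
    "migration": Surface.DESKTOP_ONLY,
    "deployment": Surface.DESKTOP_ONLY,
    "destructive_command": Surface.DESKTOP_ONLY,
}

LARGE_DELETION_LINES = 200  # hypothetical threshold for a "large file deletion"


@dataclass
class ApprovalRequest:
    category: str
    deleted_lines: int = 0


def decide(req: ApprovalRequest, device: str) -> str:
    """Return 'approve_allowed' or 'defer_to_desktop' for a request seen on a device."""
    surface = TEAM_POLICY.get(req.category, Surface.DESKTOP_ONLY)  # unknown -> strict
    if req.deleted_lines > LARGE_DELETION_LINES:
        surface = Surface.DESKTOP_ONLY  # big deletions escalate regardless of category
    if device == "desktop" or surface is Surface.MOBILE_OK:
        return "approve_allowed"
    return "defer_to_desktop"
```

A table like this can live in the repository next to other policy files. That keeps changes to it reviewable like any other change.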

The race is about operating agents

Recent coding-agent news has been dense. xAI entered the coding-agent runtime race with Grok Build. UiPath said it would connect Claude Code and Codex into enterprise automation workflows. Salesforce, SAP, Red Hat, and other enterprise vendors are also treating agents less like single chatbots and more like execution layers for work. In that context, Codex mobile asks a practical question: if the agent can write code, who gives it the next permission, and when?

Cursor, Claude Code, GitHub Copilot, and similar products will meet the same problem. The longer an agent works, the less realistic it is for the user to sit in front of the computer for every decision. Full automation is risky, but keeping every prompt inside the IDE keeps the user tied to the desk. The middle form is human-in-the-loop execution: the agent keeps working, but calls for a person when the situation is risky or ambiguous. If that call only exists inside an IDE, the work stalls when the user leaves. If it reaches mobile, the work can remain alive longer.
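The human-in-the-loop pattern reduces to a loop that runs routine steps on its own and blocks only on risky or ambiguous ones. The following is a minimal sketch under stated assumptions. `Step`, `run_with_checkpoints`, and the `ask_human` callback are hypothetical. `ask_human` stands in for whatever channel delivers the question to the user, whether an IDE panel or a phone notification, and the "ran" entries are placeholders for real execution.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    description: str
    risky: bool = False
    ambiguous: bool = False


def run_with_checkpoints(steps: list[Step], ask_human: Callable[[Step], bool]) -> list[str]:
    """Run steps in order, pausing for a human decision only on risky or ambiguous ones."""
    log: list[str] = []
    for step in steps:
        if step.risky or step.ambiguous:
            # Hypothetical hand-off point; returns True to proceed, False to stop.
            if not ask_human(step):
                log.append(f"stopped before: {step.description}")
                break
            log.append(f"approved: {step.description}")
        log.append(f"ran: {step.description}")  # placeholder for real execution
    return log
```

In this shape, the only thing that changes between desk-bound and mobile supervision is which device `ask_human` can reach.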

This also changes how teams may review work. Traditional pull request review happens after a person submits code. A coding agent that keeps producing intermediate results and sending mobile requests can split review into smaller steps. Instead of reviewing one finished PR, the team may approve design choices, file access, test execution, and draft diffs in stages. Strong teams will turn that process into policy. Weaker teams may end up with alert fatigue and careless approvals.
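One hedge against alert fatigue is to stop sending every low-risk request as its own interruption. The sketch below uses hypothetical names and an assumed five-minute window. It groups low-risk requests into digests and sends high-risk ones individually. For simplicity, it plans notifications offline from a list of timestamped requests; a live system would flush digests on a timer instead.

```python
from dataclasses import dataclass


@dataclass
class Request:
    summary: str
    high_risk: bool
    at: float  # seconds since the task started


def _digest(batch: list[Request]) -> str:
    return f"[batch of {len(batch)}] " + "; ".join(r.summary for r in batch)


def plan_notifications(requests: list[Request], window: float = 300.0) -> list[str]:
    """Send high-risk requests one by one; fold low-risk ones into one digest per window."""
    notes: list[str] = []
    batch: list[Request] = []
    for req in sorted(requests, key=lambda r: r.at):
        # Close the open digest once its window, measured from its first request, has passed.
        if batch and req.at - batch[0].at >= window:
            notes.append(_digest(batch))
            batch = []
        if req.high_risk:
            notes.append(f"[review] {req.summary}")
        else:
            batch.append(req)
    if batch:
        notes.append(_digest(batch))
    return notes
```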

What this news really means

Codex mobile preview is not a flashy model release. There is no benchmark win at the center of the announcement, and OpenAI is not leading with a new coding score. For developers, that may make it more practical rather than less. The usefulness of an agent depends not only on momentary intelligence, but on how naturally it fits human intervention points into a workday.

OpenAI's direction is clear. Codex is being shaped as an execution layer that crosses local and remote development environments, attaches to ChatGPT accounts and enterprise workspace permissions, and can be supervised from mobile. If this works, the criteria for judging coding agents will change. "Which model writes better code?" will remain important, but "which product safely ties together approvals, policy, remote environments, audit trails, and mobile intervention?" will carry similar weight.
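Audit trails are the least visible item on that list, so a small illustration may help. The sketch below is a hypothetical hash-chained approval log, not a Codex feature. Each entry records who decided, on which device, and what, plus the hash of the previous entry. Editing any past entry therefore makes `verify()` fail. The log is tamper-evident only if the latest hash is also stored somewhere the editor cannot rewrite.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass(frozen=True)
class AuditEntry:
    actor: str
    device: str     # e.g. "phone" or "desktop"
    action: str     # what was approved or denied
    decision: str   # "approved" or "denied"
    prev_hash: str  # hash of the previous entry, which chains the log


class AuditLog:
    """Append-only approval log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: list[AuditEntry] = []
        self.hashes: list[str] = []

    @staticmethod
    def _hash(entry: AuditEntry) -> str:
        payload = json.dumps(asdict(entry), sort_keys=True).encode()
        return hashlib.sha256(payload).hexdigest()

    def record(self, actor: str, device: str, action: str, decision: str) -> None:
        prev = self.hashes[-1] if self.hashes else GENESIS
        entry = AuditEntry(actor, device, action, decision, prev)
        self.entries.append(entry)
        self.hashes.append(self._hash(entry))

    def verify(self) -> bool:
        prev = GENESIS
        for entry, stored in zip(self.entries, self.hashes):
            if entry.prev_hash != prev or self._hash(entry) != stored:
                return False
            prev = stored
        return True
```

Recording the device on every entry lets a team later ask a question mobile approval makes newly relevant: which risky actions were approved from a phone?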

The feature is still a preview. The current mobile connection path centers on a macOS Codex host, and Windows host support is listed as coming later. Team policy, notification management, approval risk labels, and mobile review of long diffs still need to mature. But the direction is already visible. Coding agents are no longer just helpers inside an IDE window. They are becoming workers that continue while the user is away and ask for decisions from the nearest trusted device.

Teams that are excited by this should start with operating rules, not tool choice. Which tasks should an agent handle? Which approvals may happen from mobile? Which commands should automated policy block? Which results require desktop review? The core of the Codex mobile news is not portability. It is operational responsibility. The next bottleneck in AI coding may be less about how fast code is generated and more about when a human grants authority.