Microsoft MDASH shifts security AI from findings to proof

Microsoft says MDASH used 100+ agents to find 16 Windows vulnerabilities. The security AI race is moving from model scores to validation harnesses.

- What happened: Microsoft introduced

MDASH, a multi-model agentic scanning harness that it says helped find 16 Windows vulnerabilities.- The targets included

tcpip.sys,ikeext.dll,http.sys,netlogon.dll, anddnsapi.dll; Microsoft described four as critical remote code execution issues.

- The targets included

- Why it matters: Security AI differentiation is shifting from single-model scores to systems that combine validation, debate, proof, and patch loops.

- Developer impact: When evaluating AI security tools, ask less "which model is it?" and more "what evidence remains, and who pays the triage cost?"

- Watch: Microsoft's numbers are strong, but they mix internal code, historical MSRC context, and benchmark claims that outside teams still need to reproduce.

Microsoft introduced codename MDASH in a Security Blog post on May 12, 2026. The name stands for Multi-Model Agentic Scanning Harness. At first glance, this can look like another AI security product announcement. The more important story is not simply that "AI found vulnerabilities." It is that a vulnerability-finding AI workflow is being connected to the kind of proof, triage, and engineering process that can land in Patch Tuesday.

According to Microsoft, MDASH was used to find 16 CVEs in Windows networking and authentication components. Four of them were classified as critical remote code execution vulnerabilities. The disclosed targets include tcpip.sys, ikeext.dll, http.sys, netlogon.dll, dnsapi.dll, and telnet.exe. Ten were kernel-mode issues, six were user-mode issues, and Microsoft says several were reachable from a network position without credentials.

The performance claims are also aggressive. Microsoft says MDASH found all 21 vulnerabilities planted in private test drivers with no false positives. It reports 96% recall on five years of confirmed MSRC cases for clfs.sys, 100% recall for tcpip.sys, and 88.45% on 1,507 real-world CyberGym vulnerability tasks, which it describes as topping the public leaderboard at the time of writing.

Those numbers invite a simple reading: Microsoft has a stronger security model. But Microsoft's own framing points somewhere else. The model is one input. The product is the system around it. MDASH matters because security AI is running into a different bottleneck. The hard part is no longer only whether a model can smell a vulnerability. It is who challenges the candidate finding, who removes duplicates, who builds the trigger, who creates the proof, and who turns the result into evidence that a security engineering process can use.

This is role separation, not one big model

Microsoft describes MDASH as a pipeline with prepare, scan, validate, deduplicate, and prove stages. The prepare stage receives a source target, builds language indexes, studies historical commits, and maps attack surface and threat models. The scan stage has auditor agents walk candidate code paths and produce hypotheses with supporting evidence. In the validate stage, separate agents debate reachability and exploitability. Deduplication collapses semantically equivalent findings. The prove stage attempts to create and run trigger inputs for supported bug classes.

That is very different from asking a large model to read a repository and "find vulnerabilities." Microsoft says MDASH uses more than 100 specialized agents. An auditor does not behave like a debater. A debater does not behave like a prover. Each stage has a different role, prompt regime, tool set, and stop condition. The design assumption is that one prompt should not be trusted to do everything.

This structure is necessary because many vulnerabilities do not look like one-line mistakes. Microsoft's example CVE-2026-33827 is a use-after-free in tcpip.sys. It is not a neat case where a free and later use sit in the same obvious function. Reference ownership, concurrent cleanup, SSRR option handling, and path cache lifecycle are entangled. Microsoft argues that a single-model harness can miss this class because release and later reuse are separated by intervening control flow, and the decisive signal appears only when the code is compared with a correct pattern elsewhere.

CVE-2026-33824 follows the same pattern. In the IKEEXT service, a shallow copy fails to preserve heap allocation ownership correctly, leading to a double-free path. The relevant flow spans six files. A file-local analyzer is unlikely to see the full aliasing lifecycle. MDASH links cross-file pattern comparison, reachability analysis, debate, and proof construction. In this context, "agent" is less a marketing label than a way to decompose security work.

Security teams do not want candidate lists

The practical bottleneck in vulnerability discovery is false positives. Security teams already have large backlogs. Static analysis tools, dependency scanners, penetration tests, bug bounties, customer reports, and internal red-team findings all feed the triage queue. If AI only produces more plausible-looking candidates, security posture may not improve. It may simply add owner assignment, reproduction, severity judgment, exploitability review, and patch prioritization work.

That is why Microsoft emphasizes validation and proof. A scan-stage finding is not yet a security result. A debater has to challenge it. Deduplication has to collapse repeated forms. A prover has to create a trigger when the bug class allows it. Domain plugins have to inject system-specific invariants. Only then does the candidate start becoming a vulnerability that engineers can actually fix.

Microsoft gives a CLFS proving plugin as an example. Many CLFS findings can look interesting, but if the system cannot construct the triggering log file, they remain triage backlog. A useful plugin understands the on-disk container layout, block validation sequence, and in-memory state machine well enough to drive a candidate path to the sink.

This gives teams a more practical way to evaluate AI security tools. "The model finds vulnerabilities" is not enough. What source indexing does it perform? Can project-specific rules be added? Is there a separate challenge step? Which bug classes can produce proof-of-concept inputs? How does the result connect to existing ticketing, patch ownership, and regression checks? The cost of security AI is not just API tokens. One wrong finding can burn half a day from a senior security engineer.

Patch Tuesday is the important part

The most interesting part of the MDASH announcement is not the benchmark. It is Patch Tuesday. Benchmarks matter, but they can stay separate from a company's real operating system. Patch Tuesday is a shipping point inside Microsoft's security process. A vulnerability needs an owner, severity, reproduction, a fix, regression validation, an advisory, and an update package that customers receive.

The fact that 16 MDASH-found CVEs entered that flow means AI findings were not just research-assistant notes. They became input to a security engineering pipeline. That does not mean human review disappeared. It means the opposite. For AI-generated findings to become security updates, people and process matter more, not less. The better framing is not "AI replaced security researchers." It is that AI is changing the unit of work for security researchers.

TechRadar's May 14 coverage also emphasized that MDASH coordinates more than 100 AI agents and found 16 Windows flaws, including some reachable before authentication from a remote position. Community reaction is still forming, but Azure-related Reddit discussion has focused on IKEv2 LocalSystem RCE risk and disclosure timelines. If AI helps defenders find vulnerabilities faster, attackers can also use similar advantages to read patch diffs, advisories, and proof patterns faster.

That concern is not just hype. If AI vulnerability discovery enters production, existing disclosure rhythms such as 90-day windows will come under pressure. Defenders have to find and fix faster. Attackers can also increase their clock speed. MDASH is less a sign that AI security tools automatically make systems safe, and more a sign that the tempo of security competition is rising.

How to read the CyberGym number

Microsoft reports 88.45% on CyberGym. CyberGym evaluates defensive cyber workflows and vulnerability-oriented agents through real-world vulnerability reproduction tasks. Microsoft's post says the evaluation used 1,507 tasks from 188 OSS-Fuzz projects, with vulnerable source code and high-level vulnerability descriptions provided in the default level 1 setting.

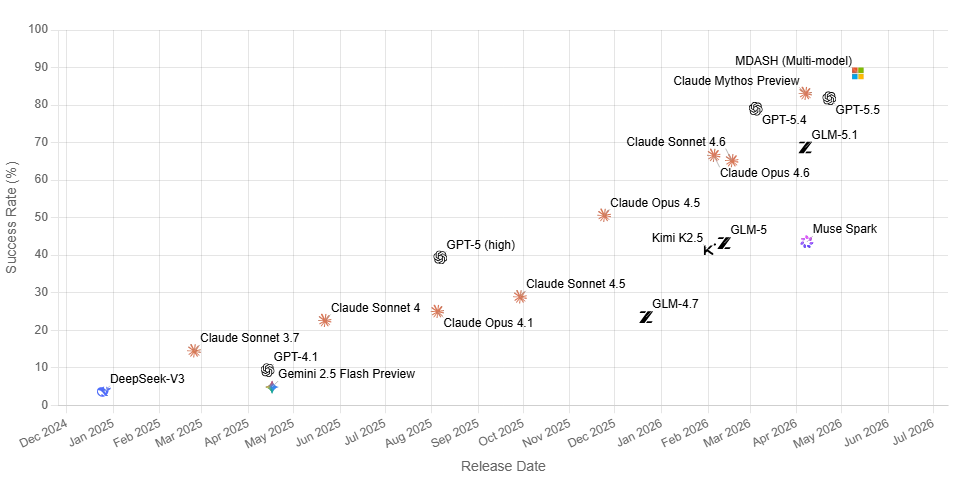

This number should be read carefully. Microsoft describes it as a public CyberGym benchmark and refers to the published leaderboard at the time of writing. An external BenchLM snapshot from May 13, 2026 showed Claude Mythos Preview at 83.1%, GPT-5.5 at 81.8%, and GPT-5.4 at 79.0%. That does not disprove Microsoft's claim. Leaderboard timing, evaluation scope, and entry display can differ. But it does mean the article should treat 88.45% as Microsoft's reported MDASH evaluation result, not as an independently settled universal ranking of all security models.

The more valuable part is Microsoft's failure analysis. Of the remaining failures, 82% of cases that targeted the wrong code area came from vague descriptions lacking function or file identifiers. Some attempts generated libFuzzer-style input when the benchmark harness expected honggfuzz-format input. That failure mode is very real. The agent may reason well, but if the problem statement or harness contract is misaligned, a plausible answer still fails.

Different from OpenAI Daybreak, but pointing the same way

OpenAI recently pushed cyber defense forward with Daybreak and Codex Security. Daybreak's message is about model access, trusted cyber workflows, a security partner ecosystem, and the Codex agentic harness. MDASH shows a different side of the same shift. The center of gravity is not "which model should defenders access?" but "how does a large software company turn AI findings into security updates?"

The two approaches compete, but they also converge. Security AI product quality does not come from a single prompt or a single model name. OpenAI emphasizes access control and partner integrations. Microsoft emphasizes internal codebases, Patch Tuesday, proof plugins, and model ensembles. Even if Anthropic or another model provider releases a stronger cyber model, real organizations will still return to the same question: what evidence does this system leave inside my code, my operating process, and my audit requirements?

That is why MDASH looks less like "Microsoft released another security product" and more like a change in evaluation criteria. Security AI demos that only show a candidate vulnerability will become less convincing. Teams will want to see why the finding is reachable, which objections it survived, what proof-of-concept or sanitizer evidence exists, which patch candidate passed regression tests, and which human received the result under which authority.

Questions development teams can take now

Most development teams cannot build an internal harness like MDASH today. Microsoft has a large proprietary codebase, MSRC case history, Patch Tuesday process, specialized security researchers, and domain plugins. A startup or ordinary enterprise team cannot copy those conditions directly. But the announcement still suggests useful operating principles.

First, do not turn AI security scan results directly into tickets without classification. Candidate findings and validated findings need to remain separate. Second, agent workflows need a challenge step. Having the same model produce a finding and then approve it is tempting, but it rarely reduces triage cost enough. Third, proof should be treated as a product requirement. Not every vulnerability can be proven automatically, but a tool should clearly state which bug classes can produce dynamic evidence and what form that evidence takes. Fourth, domain knowledge should live in systems, not only prompts. CodeQL databases, sanitizer harnesses, test fixtures, rules, plugins, and project-specific invariants are more durable than an instruction pasted into a chat box.

The same lesson applies to AI coding agents. As code-writing agents increase, security review agents will increase too. More review comments do not automatically improve quality. What matters is whether a comment connects to build output, tests, reproduction steps, exploitability, ownership, and merge gates. The security AI race is likely to move away from generating more warnings and toward leaving only warnings that an organization can fix.

What is still unknown

The MDASH announcement is strong, but public information leaves gaps. It is not yet clear where the limited private preview's product boundary sits, how customer code is processed, which models are used at which stage, whether customers can build their own domain plugins, or how far automated patch generation works in customer environments. It is also unclear whether results built from Microsoft internal code and MSRC history will transfer cleanly to other organizations with different languages, frameworks, and legacy systems.

The direction is still clear. Vulnerability discovery is becoming an agent-system problem. Models will keep improving, but security teams do not pay for model output in isolation. They pay for operational results: fewer candidates, stronger evidence, accepted patches, regression checks, and audit trails. MDASH is interesting not only because it found 16 Windows vulnerabilities, but because those vulnerabilities connected to a real security update loop instead of remaining a prompt demo.

The next question in AI security is moving from "which model finds the most issues?" to "which harness turns findings into work that can be fixed?" Microsoft MDASH is one of the clearest examples of that transition so far.