177,000 MCP Tools Show AI Agent Risk Has Moved to the Action Layer

AISI analyzed 177,436 MCP tools and found agent tooling shifting from reading and analysis toward file edits, browsers, payments, and other actions.

- What happened: A UK AISI and Bank of England study analyzed 177,436 AI agent tools from public MCP servers.

- The sample covers November 2024 through February 2026 and tracks both public tool creation and download patterns.

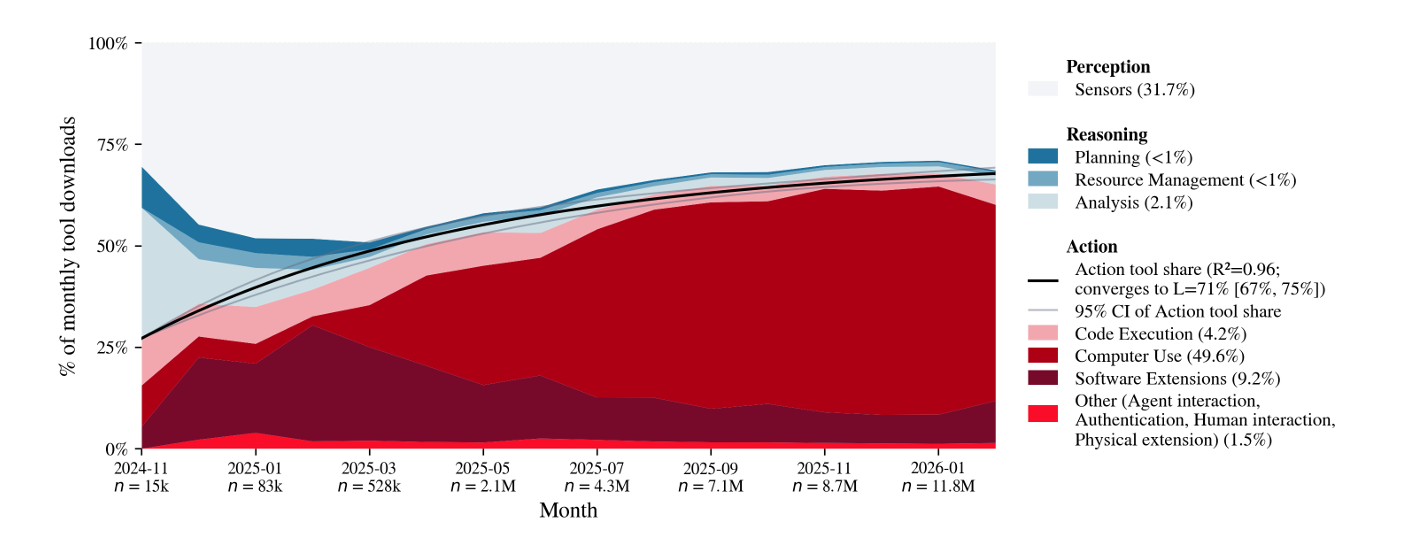

- The shift: By monthly downloads, action tools rose from roughly 24-27% to 65% of the ecosystem.

- The center of gravity is moving from reading and analysis toward tools that edit files, execute code, drive browsers, and initiate payments.

- Why it matters: Agent governance cannot stop at model output filters; teams need MCP tool permissions, action classes, and audit logs.

- Watch: The data is weighted toward public MCP packages and downloads, so private enterprise deployments and regional alternatives may be undercounted.

The UK AI Security Institute (AISI), working with the Bank of England, has published a useful snapshot of where AI agents are actually going. The post is titled "How are AI Agents used? Evidence from 177,000 AI agent tools", with a companion arXiv paper. The researchers tracked 177,436 agent tools created across the public Model Context Protocol (MCP) server ecosystem from November 2024 through February 2026.

The number is interesting, but the real point is not simply that MCP is growing. Agent discussions often stay focused on model quality: benchmark scores, prompt engineering, coding evaluations, and frontier model releases. In production, however, an agent becomes useful or dangerous when it can call tools. A tool that reads a file and a tool that deletes a file both sit under the polite label of "tool use," but the operational risk is entirely different. The same is true for a customer database lookup versus a payment API call.

AISI's framing is valuable because it looks at this tool layer directly. Instead of asking only what an agent can say, the study asks what tools are being published, which tools are being downloaded, and where action permissions are expanding. That is a practical lens for the 2026 agent market. The competition is moving from "what does the model know?" to "which systems can the model touch?"

MCP has become the agent USB port

MCP has grown quickly since Anthropic introduced it in 2024 as a standard way to connect AI agents to external systems. The idea is straightforward. An MCP server wraps capabilities such as Google Calendar, GitHub, databases, local files, browsers, payment wallets, or internal services. MCP-compatible clients can discover those capabilities and expose them as tools for a model to call. The model no longer needs to learn every API directly. It needs to choose a tool with a defined schema.
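It helps to see roughly what a client works with during tool discovery. The `name`, `description`, and `inputSchema` fields follow the general shape of MCP tool definitions. The `calendar_create_event` tool and the `check_arguments` helper are hypothetical, and the checker covers only a small subset of JSON Schema, so treat it as an illustration rather than a compliant validator.

```python
from typing import Any

# A hypothetical tool definition in the shape an MCP server returns from discovery:
# a name, a natural-language description, and a JSON Schema describing the inputs.
CREATE_EVENT_TOOL: dict[str, Any] = {
    "name": "calendar_create_event",
    "description": "Create a calendar event. Writes to the user's calendar.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "title": {"type": "string"},
            "start": {"type": "string"},
            "duration_minutes": {"type": "integer"},
        },
        "required": ["title", "start"],
    },
}

# Map a few JSON Schema primitive type names to Python types.
_JSON_TYPES: dict[str, tuple[type, ...]] = {
    "string": (str,),
    "integer": (int,),
    "number": (int, float),
    "boolean": (bool,),
}


def check_arguments(tool: dict[str, Any], args: dict[str, Any]) -> list[str]:
    """Return problems with `args`; an empty list means the call looks well-formed.

    Checks only required keys, unknown keys, and primitive types. A real client
    would use a full JSON Schema validator.
    """
    schema = tool["inputSchema"]
    props = schema.get("properties", {})
    problems = [f"missing required argument: {k}"
                for k in schema.get("required", []) if k not in args]
    for key, value in args.items():
        if key not in props:
            problems.append(f"unknown argument: {key}")
            continue
        expected = _JSON_TYPES.get(props[key].get("type", ""))
        # bool is a subclass of int in Python, so reject it unless bool is expected.
        wrong = expected is not None and (
            not isinstance(value, expected)
            or (isinstance(value, bool) and bool not in expected)
        )
        if wrong:
            problems.append(f"wrong type for {key}: expected {props[key]['type']}")
    return problems
```

For example, `check_arguments(CREATE_EVENT_TOOL, {"title": "Sync", "duration_minutes": "30"})` returns `["missing required argument: start", "wrong type for duration_minutes: expected integer"]`. The schema tells the client what a well-formed call looks like; it says nothing about whether the call is wise.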

That is convenient for developers. A single MCP server can be reused across Claude Code, Cursor, Codex-style runtimes, internal agent platforms, and other clients. For organizations, the integration cost goes down. Adding a new SaaS system no longer requires every team to rebuild prompts, API wrappers, authentication flows, and tool descriptions from scratch. MCP succeeds because agents need to connect to the outside world, and MCP packages that connection.

But a connection standard also becomes a risk standard. If anyone can build tools, publish tools, and let agents compose them, the monitoring surface expands beyond model APIs into the whole tool ecosystem. That is why AISI's public MCP sample matters. Public repositories and package downloads are not the full picture, especially for enterprise deployments, but they are observable signals of where agent infrastructure is moving.

The clearest shift in 177,000 tools is action

AISI says the public MCP tool count grew from about 5,000 to 177,000, while download activity rose from 80,000 to 14 million. The growth rate is already notable. The composition shift is more important. Early MCP usage leaned toward perception and reasoning tools: file reading, data retrieval, search, and analysis. Over time, tools that directly change the external environment became the majority of downloads.

The exact starting point differs slightly by source. AISI's blog describes action tools rising from 24% to 65% of monthly downloads. The arXiv abstract summarizes the 16-month sample as moving from 27% to 65%. The difference does not change the message. The agent tool ecosystem is moving from agents that read to agents that execute.

In the AISI taxonomy, perception tools access or read data, reasoning tools analyze data or concepts, and action tools modify the external environment. File editing, email sending, code execution, browser automation, computer control, and payments all land on the action side. That is also where real operational incidents tend to happen. A wrong analysis is costly. A wrong file deletion, email, deployment, or payment is a different class of failure.

This path is familiar to developers. Coding agents already read and write local files, run tests, create branches, open pull requests, and sometimes touch CI or deployment pipelines. The productivity comes from action. A read-only agent can advise; an action-capable agent can finish work. The same property also lets a mistake travel all the way into a repository, service, or business process.

Software development is the current center

The largest domain in the study is software development and IT. AISI estimates that software development and IT tools account for 67% of public tools and 90% of MCP server downloads. That fits the current market. Developers were among the first groups to adopt MCP, and coding agents naturally connect to file systems, git, terminals, package managers, browsers, documentation, and issue trackers.

This shows the current phase of the agent market. Developer agents became action-heavy before general consumer agents did. Development environments make tool calls concrete, and they provide relatively direct feedback. Teams can inspect a diff, run tests, check types, and see whether CI passes. That makes it easier to experiment with powerful permissions such as file writes and code execution.

Still, developer tooling is not low risk by default. Development environments often sit next to tokens, deployment keys, customer data samples, internal packages, and CI permissions. Once an MCP server opens the browser, terminal, and file system, the agent is no longer just a code assistant. It can become an execution actor inside the organization's internal systems. Software development may look like a medium-risk domain, but it is also an entry point into higher-risk systems.

Open web and computer control widen the blast radius

Another important AISI signal is the growth of unconstrained environments. The share of general-purpose tool downloads used in open web or full-computer-control environments rose from 41% to 50%. Even more striking, 95% of general-purpose tool downloads included action capability.

API integrations usually have clearer boundaries. A tool that reads a CRM record or updates a ticket status can be designed with specific inputs, outputs, permissions, and audit logs. Browser automation and computer control are fuzzier. The same browser tool might read a document, click a payment button, change a setting in an admin console, or submit a form with user credentials. The same computer-control tool might run tests, read local secrets, install a dependency, or modify a workspace.

That distinction matters for agent security. A tool named browser.click does not have one stable risk level. The risk depends on which site is open, what the session is authorized to do, whether a human approved the click, whether the action is reversible, and what state changes after the click. As MCP standardizes tool calling, organizations need to layer permission context and policy context on top of the tool interface.
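One way to express that context dependence is a policy check that inspects the call's surroundings rather than the tool name. This is a hypothetical sketch, not an MCP feature. The `ClickContext` fields, the host allowlist, and the scope names are assumptions standing in for whatever a given agent runtime can actually observe.

```python
from dataclasses import dataclass
from enum import Enum
from urllib.parse import urlparse


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class ClickContext:
    """Hypothetical context a policy layer attaches to a browser.click call."""
    page_url: str
    session_scopes: frozenset[str]  # what the logged-in session may do, e.g. {"read", "admin"}
    reversible: bool                # can the resulting state change be undone?
    human_approved: bool = False


ALLOWED_HOSTS = {"docs.example.com", "admin.example.com"}  # hypothetical site allowlist
SENSITIVE_SCOPES = {"payments", "admin"}                   # scopes that raise the stakes


def decide_click(ctx: ClickContext) -> Decision:
    """Same tool, different answers: the decision depends on site, session, and reversibility."""
    host = urlparse(ctx.page_url).hostname or ""
    if host not in ALLOWED_HOSTS:
        return Decision.DENY
    sensitive_session = bool(ctx.session_scopes & SENSITIVE_SCOPES)
    if (sensitive_session or not ctx.reversible) and not ctx.human_approved:
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW
```

Under these rules the same click is allowed on a documentation page, held for approval in an admin session, and refused on an unlisted site. The rules are deliberately simple; the design point is that session scope and reversibility are inputs to the decision.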

Finance is a small share with a loud warning

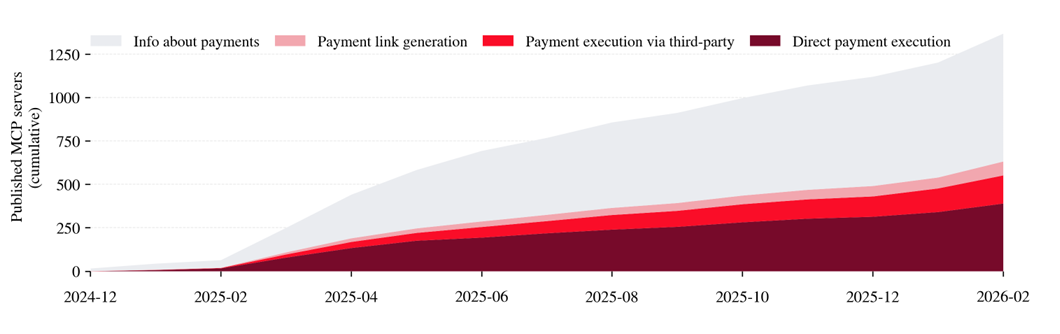

The most sensitive example in the study is finance. AISI says finance and business management tasks account for 14% of tools. Software development and IT still dominate the ecosystem, but the researchers use O*NET impact ratings to map tools to occupation-level consequences, and finance stands out as an area with more high-impact action tools than expected.

The payment data is especially worth watching. MCP servers with payment execution capability grew from 46 in January 2025 to more than 1,200 in January 2026. That includes tools such as cryptocurrency integrations, where transactions can be direct and hard to reverse. This does not mean agents have already caused large-scale financial incidents. It does show that payment capability is entering the agent tool ecosystem quickly.

Finance matters because reversibility and accountability work differently there. A file edit may be reverted through git. A mistaken email can be followed by a correction. A bad transfer, token movement, or order execution may be difficult or impossible to undo. If an agent can call a payment tool without human approval, the central problem is less about model accuracy than permission design. Who sets limits? Which transactions require approval? Which recipients are allowlisted? Are audit logs complete enough to reconstruct the event?
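Those questions map naturally onto a guard that sits between the agent and a payment tool. The sketch below assumes a single currency and a hypothetical `PaymentPolicy` class; the limits and account identifiers are illustrative, and in practice the spend counter and audit log would live in durable storage rather than in memory.

```python
from dataclasses import dataclass, field
from decimal import Decimal
from typing import Optional


@dataclass
class PaymentPolicy:
    """Hypothetical guard in front of a payment tool (single currency, in-memory state)."""
    allowed_recipients: set[str]
    per_transaction_limit: Decimal
    daily_limit: Decimal
    approval_threshold: Decimal  # amounts at or above this need a named human approver
    spent_today: Decimal = Decimal("0")
    audit_log: list[dict] = field(default_factory=list)

    def check(self, recipient: str, amount: Decimal,
              approved_by: Optional[str] = None) -> str:
        """Return "allow", "needs_approval", or "deny: <reason>", and log every decision."""
        if amount <= 0:
            result = "deny: non-positive amount"
        elif recipient not in self.allowed_recipients:
            result = "deny: recipient not allowlisted"
        elif amount > self.per_transaction_limit:
            result = "deny: over per-transaction limit"
        elif self.spent_today + amount > self.daily_limit:
            result = "deny: over daily limit"
        elif amount >= self.approval_threshold and approved_by is None:
            result = "needs_approval"
        else:
            result = "allow"
            # Assumes the caller executes the payment after an "allow".
            self.spent_today += amount
        self.audit_log.append({"recipient": recipient, "amount": str(amount),
                               "approved_by": approved_by, "result": result})
        return result
```

Every decision, including refusals, is appended to `audit_log`, so a reviewer can reconstruct what the agent attempted, not only what succeeded.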

AI is now creating the agent tools too

One less-discussed part of the study may be just as important. AISI detected AI assistance in 29% of MCP servers and 38% of tools. Among newly created servers, the AI-assisted share rose from 6% in January 2025 to 55% in January 2026. For AI co-authored servers, Claude Code accounted for 66%, while Cursor and GitHub Copilot each accounted for 10%.

This helps explain the speed of growth. Tool creation is no longer limited by the number of developers manually writing integrations. A developer can ask a coding agent to wrap an internal API as an MCP server, and the agent can generate the server skeleton, schemas, authentication code, and documentation. In the best case, this lowers the cost of connecting internal systems to agents. In the worst case, it accelerates the spread of unreviewed tools, overbroad permissions, and inaccurate descriptions.

Tool descriptions are the UI for agents. A human sees a button label; a model sees a tool name, description, and input schema. If an AI-generated MCP server has an imprecise description, omits failure conditions, or blurs permission boundaries, the model may confidently call the wrong tool. "AI is building the tools" is therefore both a productivity story and a quality-control story.

Regulation moves from output to tool calls

AI regulation and safety work have mostly focused on model outputs: preventing harmful answers, reducing privacy leaks, dealing with copyright, and handling misinformation. Those remain important. But in an agentic system, the most important event may be the call, not the sentence. The incident record is often which tool was invoked, with which arguments, under which permission context, and which external state changed.

AISI's paper suggests that governments and regulators can monitor agent deployment risk at the tool layer rather than only at the model-output layer. That is a realistic direction. It is hard to inspect every company's internal prompts and model logs. Public tool ecosystems, package downloads, tool descriptions, permission types, domain distribution, and action capability growth are observable signals. In high-impact sectors such as finance, healthcare, law, education, and infrastructure, tool-level classification becomes a necessary part of the map.

The same logic applies inside companies. Agent adoption cannot start and end with "which model should we use?" Teams need to decide which MCP servers are allowed, which tools remain read-only, which actions require human approval, which tool calls enter security logs, and which tools are kept inside sandboxes. Model selection may change often. Tool permission infrastructure is likely to last longer.
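Those decisions can be written down as an explicit policy that every tool call is routed through. The server names, tool names, and the three `Mode` values below are hypothetical. The important choice is the default: any server or tool that is not listed is denied.

```python
from enum import Enum


class Mode(Enum):
    READ_ONLY = "read_only"   # no approval needed; the tool must not change external state
    APPROVAL = "approval"     # every call needs a human sign-off
    SANDBOXED = "sandboxed"   # callable only inside an isolated environment


# Hypothetical organization policy: allowed MCP servers and how each tool may be used.
POLICY: dict[str, dict[str, Mode]] = {
    "github": {"search_issues": Mode.READ_ONLY, "create_pull_request": Mode.APPROVAL},
    "shell": {"run_command": Mode.SANDBOXED},
}


def route_call(server: str, tool: str, in_sandbox: bool, approved: bool) -> str:
    """Return "allow", "needs_approval", or "deny". Unlisted servers and tools are denied."""
    mode = POLICY.get(server, {}).get(tool)
    if mode is None:
        return "deny"
    if mode is Mode.SANDBOXED and not in_sandbox:
        return "deny"
    if mode is Mode.APPROVAL and not approved:
        return "needs_approval"
    return "allow"
```

Keeping the policy as data rather than scattering checks through agent code means it can be reviewed and versioned on its own, independent of which model the agent currently uses.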

What development teams should check now

For engineering teams, this study is not an argument to avoid MCP. It is closer to evidence that MCP is becoming a default integration path for agents. The issue is that adding MCP servers is starting to feel as easy as installing plugins, while the operational controls need to be stronger than plugin controls.

First, classify tools as perception, reasoning, or action. Not every MCP server should share one risk tier. Reading a file is different from writing one. Running a query is different from changing data. Checking a price is different from placing an order.
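For a first pass over an inventory, the AISI categories can be encoded directly. The verb-based heuristic here is an assumption made for illustration, not AISI's classification method, and tool names are weak evidence of behavior. The useful property is that anything unrecognized falls into the action class until someone reviews it.

```python
from enum import Enum


class ToolClass(Enum):
    PERCEPTION = "perception"  # accesses or reads data
    REASONING = "reasoning"    # analyzes data or concepts
    ACTION = "action"          # modifies the external environment


# Hypothetical verb lists; a real inventory would rely on human review of each tool.
PERCEPTION_VERBS = {"get", "list", "read", "search", "fetch"}
REASONING_VERBS = {"analyze", "summarize", "compare", "classify"}


def classify(tool_name: str) -> ToolClass:
    """Guess a class from the leading verb of a snake_case tool name.

    Unknown verbs default to ACTION, so an unreviewed tool lands in the strictest class.
    """
    verb = tool_name.split("_", 1)[0].lower()
    if verb in PERCEPTION_VERBS:
        return ToolClass.PERCEPTION
    if verb in REASONING_VERBS:
        return ToolClass.REASONING
    return ToolClass.ACTION
```

With these lists, `classify("read_file")` yields `PERCEPTION`, `classify("summarize_report")` yields `REASONING`, and both `classify("delete_file")` and `classify("place_order")` yield `ACTION`.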

Second, treat general-purpose tools as special cases. Browsers, computer control, shell execution, and code interpreters open a much broader surface than narrow API tools. They need limits around network egress, file paths, environment variables, credentials, and the permissions of active user sessions.
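Some of those limits are straightforward to enforce in code before a general-purpose tool runs. The sketch assumes a hypothetical workspace directory, environment-variable allowlist, and egress host list. It covers path confinement, environment scrubbing, and a host check, and would sit alongside OS-level sandboxing rather than replace it. `Path.is_relative_to` requires Python 3.9 or later.

```python
from pathlib import Path
from urllib.parse import urlparse

# Hypothetical limits for a shell or file tool; names and values are illustrative.
WORKSPACE = Path("/tmp/agent-workspace")
ALLOWED_ENV_VARS = {"PATH", "LANG", "HOME"}
ALLOWED_EGRESS_HOSTS = {"pypi.org", "files.pythonhosted.org"}


def resolve_in_workspace(requested: str, workspace: Path = WORKSPACE) -> Path:
    """Resolve a path and refuse anything that escapes the workspace (../, absolute paths, symlinks)."""
    root = workspace.resolve()
    candidate = (root / requested).resolve()
    if not candidate.is_relative_to(root):
        raise PermissionError(f"path escapes workspace: {requested}")
    return candidate


def scrub_env(env: dict[str, str]) -> dict[str, str]:
    """Pass through only allowlisted variables, so tokens and keys are not inherited."""
    return {k: v for k, v in env.items() if k in ALLOWED_ENV_VARS}


def egress_allowed(url: str) -> bool:
    """Allow outbound requests only to allowlisted hosts."""
    return (urlparse(url).hostname or "") in ALLOWED_EGRESS_HOSTS
```

An application-level host check is easy for a shell tool to bypass, which is why the network policy itself should also be enforced at the sandbox or firewall layer.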

Third, review tool descriptions and schemas like code. An MCP server's description changes model behavior. Incorrect descriptions can lead to incorrect calls. Teams should review tool descriptions, input constraints, failure modes, idempotency, and side effects.
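Part of that review can be automated as a lint step in CI. The rules below are hypothetical house rules applied to an MCP-style tool definition (`description`, `inputSchema`). The side-effect check is a crude substring match on word stems and will miss phrasings it does not know, so it supplements human review rather than replacing it.

```python
from typing import Any

# Hypothetical word stems that suggest a description admits to side effects.
SIDE_EFFECT_STEMS = ("creat", "delet", "modif", "send", "writ", "updat", "execut", "transfer", "charg")


def lint_tool(tool: dict[str, Any], is_action: bool) -> list[str]:
    """Flag common problems in an MCP-style tool definition before it ships."""
    issues = []
    description = (tool.get("description") or "").strip()
    if len(description) < 20:
        issues.append("description is missing or too short to guide a model")
    if is_action and not any(s in description.lower() for s in SIDE_EFFECT_STEMS):
        issues.append("action tool does not state its side effects")
    schema = tool.get("inputSchema", {})
    props = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in props:
            issues.append(f"required field '{name}' is not defined in properties")
    for name, prop in props.items():
        if "description" not in prop:
            issues.append(f"field '{name}' has no description")
    # JSON Schema allows undeclared properties unless additionalProperties is false.
    if schema.get("additionalProperties", True) is not False:
        issues.append("schema accepts undeclared arguments (set additionalProperties: false)")
    return issues
```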

Fourth, put payments and external transmissions behind a separate approval layer. Money movement, customer data, email, messaging, deployment, and permission changes leave traces in the outside world. The default for this category should be manual approval, limits, and allowlists.
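A separate approval layer can start as a wrapper that refuses to run a side-effecting function until an approver signs off on the exact arguments. The `requires_approval` decorator and `ApprovalDenied` exception are hypothetical. A real deployment would route the request to a human review interface; the rule-based approver here exists only to keep the example runnable.

```python
import functools
from typing import Any, Callable, Optional

# Hypothetical approver: gets the tool name and arguments, returns an approver id or None.
Approver = Callable[[str, dict], Optional[str]]


class ApprovalDenied(Exception):
    """Raised when a side-effecting call is not approved."""


def requires_approval(approver: Approver) -> Callable:
    """Run the wrapped tool only after `approver` signs off on the exact arguments."""
    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(fn)
        def wrapper(**kwargs: Any) -> Any:  # keyword-only: tool calls arrive as argument maps
            who = approver(fn.__name__, kwargs)
            if who is None:
                raise ApprovalDenied(f"{fn.__name__} was not approved: {kwargs}")
            # A real gate would also record `who` in the tool-call log.
            return fn(**kwargs)
        return wrapper
    return decorator


# Rule-based stand-in for a human reviewer, so the example runs unattended.
def internal_only_approver(tool: str, args: dict) -> Optional[str]:
    return "policy-bot" if str(args.get("to", "")).endswith("@example.com") else None


@requires_approval(internal_only_approver)
def send_email(to: str, body: str) -> str:
    return f"sent to {to}"  # placeholder for the real side effect
```

Because the approver sees the concrete arguments rather than just the tool name, approving one recipient does not silently approve every future call to the same tool.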

Fifth, log tool calls as product and security events. When an agent incident occurs, "the model gave a strange answer" is not enough. Teams need to reconstruct which tool was called, with which arguments, what response came back, and who approved the action.
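A tool-call log entry needs to capture enough to answer those questions later. The field names below are a hypothetical schema, and the `SENSITIVE_KEYS` redaction list is an assumption. The sketch emits one JSON line per call so events can be shipped to whatever log pipeline a team already runs.

```python
import json
import time
import uuid
from typing import Any, Optional

# Hypothetical argument names whose values should never be logged verbatim.
SENSITIVE_KEYS = {"password", "token", "api_key", "card_number"}


def tool_call_event(principal: str, server: str, tool: str, args: dict[str, Any],
                    response: Any, approved_by: Optional[str]) -> str:
    """Serialize one tool call as a JSON line for later incident reconstruction."""
    event = {
        "event_id": str(uuid.uuid4()),
        "timestamp": time.time(),
        "principal": principal,  # the user or service the agent acted on behalf of
        "server": server,
        "tool": tool,
        "arguments": {k: ("[REDACTED]" if k in SENSITIVE_KEYS else v) for k, v in args.items()},
        "response": str(response)[:1000],  # truncated summary of what came back
        "approved_by": approved_by,  # None means no human approval was recorded
    }
    # default=str keeps non-JSON values (e.g. Decimal amounts) from breaking the log line.
    return json.dumps(event, sort_keys=True, default=str)
```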

The study has limits

AISI's analysis is based on public MCP servers and download data. That means private enterprise deployments, internal registries, regional package channels, and air-gapped environments may be underrepresented. The blog also notes that geographic distribution can reflect the user base of the Python Package Index, which is weighted toward the United States, Western Europe, and China. Downloads are not the same as execution counts or production usage.

There is another limitation: the existence of a tool does not determine whether it is used safely. Having more than 1,200 payment-capable MCP servers in the ecosystem does not mean all of them are deployed dangerously. Conversely, a domain with fewer tools can still produce a serious incident if one integration is misconfigured. The study is best read as the beginning of a measurement method, not a final risk verdict.

Even with those caveats, the direction is clear. AI agents are gaining more tools. A larger share of those tools can change external state. AI itself is helping generate the tools. Put together, the agent ecosystem can grow faster, wider, and less evenly than traditional software integration layers.

The signal is to watch tools, not just models

The 2026 agent race cannot be explained by model quality alone. Claude Code, Codex, Cursor, GitHub Copilot, Glean, Coder Agents, UiPath, Salesforce Agentforce, and others compete across different surfaces, but the shared question is similar: which tools can the agent use, with what permissions, and how does a human or organization supervise the execution?

AISI's analysis of 177,000 MCP tools makes that question visible in public data. AI agents are already moving beyond reading and analysis into action. Productivity comes from that action. Risk comes from the same place. The next generation of agent infrastructure is therefore less likely to be a better prompt library and more likely to be tool permissions, execution policies, audit logs, approval flows, and high-risk-domain monitoring.

MCP has become a standard port for connecting agents to the outside world. The important question is no longer only what gets plugged into that port. It is who is allowed to plug it in, how much current can flow, and where the circuit breaks when the load becomes unsafe. If AI is moving from speaking to acting, security and governance need to move from the output window to the tool-call log.