Honeycomb turns AI agent black boxes into timelines

Honeycomb Agent Observability shows that the production bottleneck for AI agents is moving from model-call logs to handoffs, tool calls, costs, and failure reconstruction.

- What happened: Honeycomb announced an Agent Observability suite on May 12, 2026, including

Agent Timeline,Canvas Agent, andCanvas Skills.- Agent Timeline is in early access, while the Canvas-related features were announced as available to customers now.

- Why it matters: The operational question is shifting from "was the answer good" to "which tool did the agent call, why, and what did it change downstream."

- Developer impact: Teams need to design

OpenTelemetry GenAIspans, handoffs, retries, token cost, and downstream traces as one event record.- A single LLM-call trace is not enough to explain multi-agent failures, runaway cost, or unsafe external side effects.

- Watch: Observability is not a safety system by itself. Teams still need to decide which state transitions and permission boundaries are recorded.

Honeycomb's May 12 Agent Observability announcement is not the kind of AI news that produces a new leaderboard. It is not a larger model, a coding benchmark result, or a demo where an agent completes an impressive task. For teams actually putting agents into production, however, this kind of release is becoming more important. The easy part is increasingly getting an agent to work once in a controlled demo. The harder question is whether a team can later explain what the agent did in production, step by step.

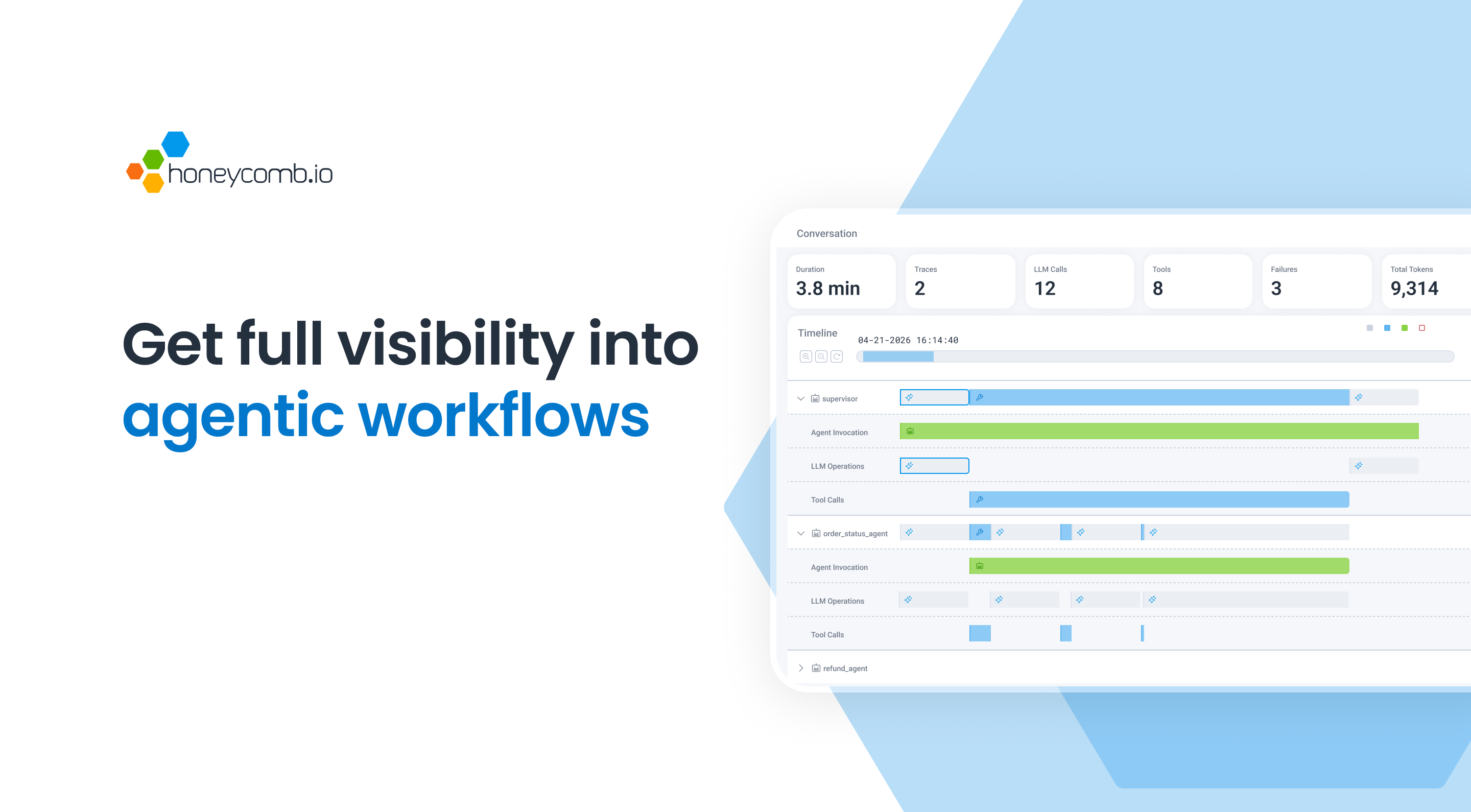

Honeycomb's official announcement bundles Agent Timeline, a rebuilt Canvas experience, Canvas Agent, and Canvas Skills. At a glance, that can sound like an existing observability product with a few AI features added. Read together with the product pages and docs, the message is more specific. Honeycomb is not only trying to display LLM call logs. It wants to connect agent reasoning, tool invocations, handoffs between agents, and the database queries or API calls that follow into one timeline.

Recent AI agent infrastructure news has converged around execution. Red Hat is trying to govern how agents touch infrastructure through Ansible. Salesforce is trying to connect business agents through Agentforce and Tableau MCP. Honeycomb's announcement asks the next operational question. Once agents are allowed to act, what should operators be able to see? If a task looks successful but leaves behind a bad tool input, repeated retries, a slow downstream API, excessive token spend, or a missed permission check, the operator needs to reconstruct the path.

Agent observability is not an LLM log viewer

Early observability for LLM applications was relatively simple. If a team stored the prompt, completion, latency, token usage, model name, and error state, it could answer many of the basic questions. In a request-response chatbot, most investigation begins between one user message and one model response. If cost is too high, switch models or cache more. If quality is low, adjust the prompt, retrieval, or evaluation set. If latency is high, tune streaming, batching, or caching.

Agents change that shape. An agent answers a question while also planning, choosing tools, reading external systems, and sometimes modifying them. Imagine one agent reading customer data from a CRM, another checking order status, and a third applying a refund policy. The final reply may look normal to the user while the internal run misread the customer's tier, retried a timed-out tool call, or dropped an important field during a handoff. From an operations perspective, "the final answer looked fine" is a weak signal.

That is the gap Honeycomb is targeting with Agent Timeline. The product page says it summarizes a conversation by total duration, model calls, tool calls, retries, involved agents, and failures. The more interesting design choice is the horizontal swim lane view. A classic waterfall trace is familiar for sequential service calls, but agent systems often involve parallel work and cross-agent handoffs. The failure may not be "this one span is red." It may be "Agent A summarized the customer state in a way that changed Agent B's premise, and Agent B then allowed a risky tool call."

| Observation target | Single LLM app | Production agent |

|---|---|---|

| Core unit | Prompt and completion | Run, step, tool call, handoff, side effect |

| Failure mode | Hallucination, latency, rising cost | Loop, bad tool input, missing state, permission-boundary breach |

| Debugging question | Why did it answer this way? | Why did it decide this action was safe? |

| Operational signal | Latency, tokens, errors | Handoff quality, retry path, downstream span, human override |

The problem Agent Timeline is aiming at

The most important phrase in Honeycomb's Agent Timeline materials is that AI and non-AI information should be viewed together. That may sound like a UI claim, but it is central to agent operations. Agent failures do not stop at the model layer. The investigation may need to answer whether the model picked a slow tool, whether the tool itself was slow, whether an external API was degraded, whether a database query was slow for one tenant, or whether a handoff passed the wrong customer ID.

Traditional APM is good at showing the execution path through APIs, databases, queues, and browsers. LLM tracing tools are better at prompt, completion, retrieval, evaluation, and model-specific signals. Agents connect those worlds. An agent is both a model caller and an operator. It reasons in natural language, calls APIs, changes state, and passes a summary to the next agent. Agent observability cannot live as a loose compromise between LLM observability and full-stack observability. It has to join both layers under the same run ID and event model.

Honeycomb is connecting this problem to its existing strengths. The company has long emphasized high-cardinality events, traces, and the search for "unknown unknowns." Agent systems fit that message because they produce unpredictable execution paths. The same user request can diverge depending on memory, retrieved context, available tools, model sampling, and policy responses. Operators do not need only an average latency graph. They need to see how a specific run branched, where assumptions changed, and which downstream systems were touched.

Canvas Agent and Skills move operations knowledge into agents

If Agent Timeline is about seeing what happened, Canvas Agent and Canvas Skills are about how an investigation proceeds. Honeycomb's Canvas documentation describes a natural-language investigation surface that can turn questions into Honeycomb queries, analyze traces, logs, and metrics, and continue through follow-up questions. The newly emphasized Canvas Agent points toward automatic investigation after triggers such as alerts or SLO burn events.

The interesting part is Skills. Honeycomb's Agent Skills documentation already describes skills such as query-patterns, production-investigation, slos-and-triggers, otel-instrumentation, and otel-migration. This is a step beyond attaching an MCP server so an agent can run a query. It is closer to giving the agent a reusable version of the organization's debugging practices and instrumentation conventions.

There is tension in that approach. An automatic investigation agent can save operator time, but a poorly designed one can burn tokens while producing shallow hypotheses. Notes from an AI SRE summit discussion on Reddit raised exactly this concern: an incident agent can spend tens of dollars in token cost per incident without producing a useful conclusion if tribal knowledge has not been encoded well. That is not solved by model quality alone. Teams still need policies for when automatic investigation begins, which datasets it may query, which actions remain read-only, and where its cost budget stops.

Why OpenTelemetry GenAI standardization matters

OpenTelemetry is an easy part of the announcement to skip, but it may be one of the most important details. Honeycomb says it integrated OpenTelemetry GenAI semantic conventions v1.40.0 into the platform. The OpenTelemetry GenAI documentation organizes GenAI observability signals across events, exceptions, metrics, model spans, and agent spans. It also connects provider-specific conventions for Anthropic, AWS Bedrock, Azure AI Inference, OpenAI, and MCP.

The caution is that the GenAI semantic conventions are still marked as Development in the OpenTelemetry docs. For practitioners, that means two things. First, it is early to bind an agent-observability design completely to one vendor SDK. Second, if teams invent arbitrary JSON logs now, they may create expensive migration work later. The practical path is to follow the direction of the standard while clearly defining an internal event schema that matches the organization's agent execution model.

At minimum, a production agent system should be able to answer these questions. Which agent run came from a user request? Which model, prompt template, retrieval context, and tool input did each step use? Was a tool output passed to the next step as raw data or as a summary? If an external system was modified, what permission and policy check allowed it? Where did a human approve, reject, or override the agent? When a failure occurred, did the retry use the same model, a cheaper model, or a retrieval-only fallback?

This is not only Honeycomb's problem. Teams using LangSmith, Langfuse, Arize Phoenix, Helicone, or a custom OpenTelemetry pipeline face the same design issue. Community discussions around agent observability tend to converge on the same point: the tool name matters less than the discipline around run IDs, handoff snapshots, state transitions, external side effects, and human corrections. The market looks like a vendor contest, but the real operational advantage may come from schema discipline.

Agent failures often degrade quietly

Agent operations are difficult because failures do not always appear as explosions. In a traditional service, CPU spikes, error rates, and queue growth often produce clear signals. Agents can degrade more quietly. They keep answering while slowly applying a refund policy incorrectly. Cost rises a little each day, but only for one customer cohort. A handoff summary drops a field, and the downstream agent fills the gap with a plausible guess.

Those failures do not fit neatly into a single red span. "Model call succeeded," "tool call succeeded," and "response returned" can all be green while the business outcome is wrong. That is why Honeycomb's framing of failure as a first-class object is worth noting. The product page mentions conversation-level failure counts, red highlights for failing spans, and a failures-only mode. Whether that is enough will depend on implementation, but it signals the right mental model: agent incidents are often a reconstruction problem, not just an exception problem.

Operators should separate two classes of signal. The first is system signal: latency, errors, tokens, retries, cost, timeouts, queue depth. The second is semantic signal: what assumption the agent made, what confidence it attached, which policy rule passed, and which handoff information changed the next decision. Existing observability handles the first category better. LLM and agent tracing must add the second. Production agents cannot be run responsibly with only one of the two.

What engineering teams should prepare

Reading this announcement only as a Honeycomb product update misses the broader change. The checklist for agent projects is changing. Many teams have started with model choice, RAG quality, tool-call success rate, and evaluation score. Those still matter, but "can this agent be operated" needs to move earlier. Operability is not usually something that can be bolted on after the agent is complete. The run model, span model, permission model, and cost model have to be designed with the code.

The first requirement is correlation. User request, agent run, model call, tool call, and downstream service trace should connect as one event. The next is handoff. If agent-to-agent summaries remain only natural-language blobs, it becomes hard to know what disappeared. Critical fields should be structured, summaries should be separated from source references, and the system should record who allowed the next action based on which evidence.

The third requirement is budget. Agents can iterate faster than humans, and those iterations become cost. Cost budgets should not be only monthly finance metrics. They should become runtime guardrails. For a specific incident investigation or customer workflow, teams should limit model calls, tool calls, expensive-model usage, and wall-clock duration. When the budget is exceeded, the agent should move to a human or a cheaper fallback mode.

The fourth requirement is side effect control. Read-only agents and write-capable agents are different operational systems. Tool calls that modify external systems should have separate spans, separate audit events, and explicit approval states. "The agent answered the customer" and "the agent changed the customer's account" cannot share the same observability policy.

Observability is necessary for safety, but not sufficient

Honeycomb's announcement matters because the agent-operations conversation is becoming more realistic. But observability does not automatically make an agent safe. It helps teams explain what happened afterward, reduce repeated failures, and feed automatic investigation. It cannot compensate for weak permission design, weak evaluations, sensitive-data exposure, or an overly broad automation scope.

Stronger observability can also create a new data-risk surface. If prompts, completions, tool inputs, tool outputs, retrieved documents, customer IDs, internal tickets, and database queries all flow into traces, the observability backend becomes a serious security boundary. "Log more" is not the answer for AI agent telemetry. Teams need to decide what is stored as raw text, what is stored as a hash or reference, and what is redacted. Following OpenTelemetry conventions and minimizing sensitive data are related but separate design tasks.

Honeycomb's Canvas docs mention privacy and permission boundaries, which is encouraging. Canvas is described as working within Honeycomb workspace data and existing access controls. Customers still have to do their own review. Does agent telemetry contain PII? Do prompts include trade secrets? Which datasets may an investigation agent query? Can its findings be shared with the whole team? Those are product and governance decisions, not only observability settings.

Production standards for agents are taking shape

Honeycomb Agent Observability is a product update, but it also captures a larger moment: production standards for agents are forming. Early LLM apps could often get by with prompt and completion logs. Modern agent systems need to connect runs, steps, handoffs, tool calls, token cost, downstream spans, policy decisions, and human overrides. It will become harder for development teams and SRE teams to interpret the same incident in separate tools with separate event models.

Whether this becomes a durable competitive advantage for Honeycomb remains open. LangSmith, Langfuse, Arize Phoenix, Datadog, New Relic, Grafana-aligned tooling, and others are moving toward the same problem. OpenTelemetry GenAI conventions are also still stabilizing. But the direction is clear. Production agent reliability will not be explained by model benchmarks alone. Teams learn faster when they can record what the agent did, why it did it, what it changed, and where it should have stopped.

If AI agents become part of the development team, they also become part of the on-call surface. Systems on call need more than logs. They need incident records. Honeycomb's message is pointed at that reality: if an agent works in production, the agent's timeline is production infrastructure.