Glean ADLC says agents now need an SDLC

Glean introduced ADLC and new Work AI agent features, showing how enterprise AI is shifting from smarter chatbots to governed agent operations.

- What happened: Glean introduced the

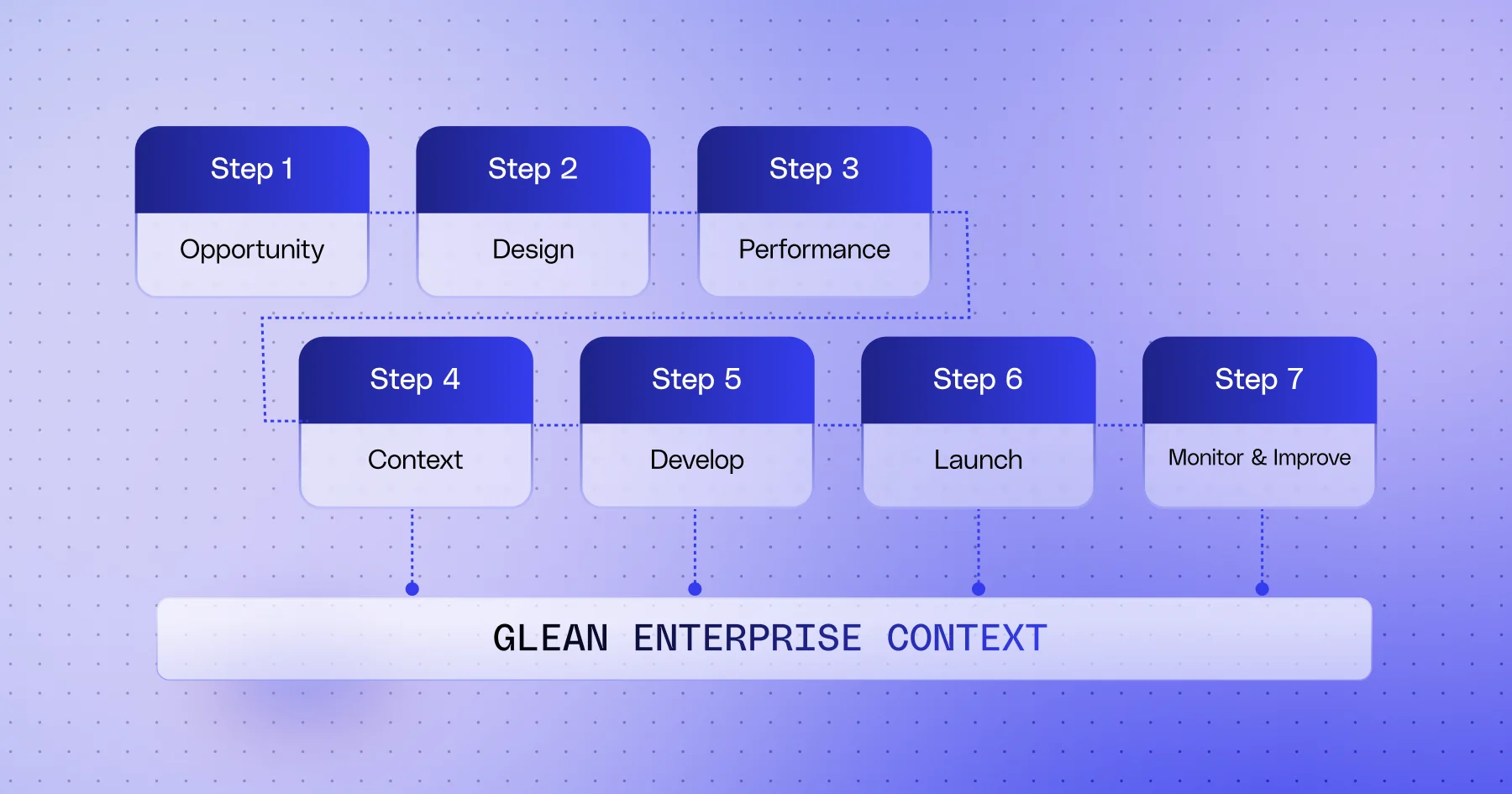

Agent Development Lifecycleon May 12 and said it is being built directly into the Work AI platform.- ADLC treats agents like software products across seven stages: Opportunity, Design, Performance, Context, Develop, Launch, and Monitor & Improve.

- Why it matters: Enterprise AI competition is moving from "smarter chatbot" claims toward operating systems for ROI, permissions, testing, release, and observability.

- Builder impact: Auto-mode, trace views, sub-agents, sandboxes, and access policies sound less like prompt tooling and more like the vocabulary of platform operators.

- Watch: Glean's ROI numbers are vendor claims. The larger question is whether each organization can define success criteria with the same discipline.

Glean introduced the Agent Development Lifecycle, or ADLC, in an official blog post on May 12, 2026. The name sounds like another enterprise framework. The more interesting part is the product assumption behind it. Glean is arguing that agents should no longer be treated as clever chatbots or automation demos. They should be planned, tested, released, monitored, and improved like software products.

That view fits the direction enterprise AI has been taking over the past few days. Microsoft has been pushing Agent 365 into general availability. ServiceNow has expanded AI Control Tower. SAP has placed Joule agents inside ERP processes. Cursor has tied coding agents to team workflows through Teams integration. Each announcement points to the same problem. Agents are no longer personal utilities that someone runs once. They are becoming execution units inside organizations, with permission to read business systems and, increasingly, write back to them.

Once that happens, the question changes. "Which model is smarter?" matters less than "who approved this agent?", "what is it allowed to change?", "where does it stop when it fails?", and "how do we measure return on the work it performs?" Glean ADLC lands exactly on that shift.

Glean's core point is to treat agents like software

Glean's official announcement says CIOs are already seeing agents appear across productivity suites and individual business units, yet many still struggle to measure the overall return. Agents multiply, teams build them in different ways, ownership stays vague, and accountability becomes difficult. Glean describes that as AI sprawl.

Its answer is to manage agents like software. Software systems have requirements, permissions, data dependencies, tests, releases, operations, incident response, and retirement plans. Enterprise agents need the same discipline. The moment an agent reads documents and tickets, updates CRM fields, adjusts calendars, or sends messages in Slack and email, it stops being only a response generator. It becomes an execution path through internal systems.

Glean defines ADLC as a seven-stage lifecycle.

| Stage | Core question | Operational meaning |
|---|---|---|
| Opportunity | Which business problem is worth solving? | Start from an outcome, not a polished demo. |
| Design | Where does the agent's responsibility begin and end? | Define inputs, outputs, trigger conditions, and exclusions before building. |
| Performance | What counts as success or rollback? | Connect business KPIs with agent quality metrics. |
| Context | Which data and tools does the agent need? | Attach permission-aware data, examples, tools, and observability signals. |
| Develop | How do we make behavior predictable? | Use golden examples, parallel runs, and pilots to validate execution. |
| Launch | Who receives the agent, and with what guardrails? | Include training, communication, SLOs, and kill-switch thinking. |
| Monitor & Improve | How do live signals feed the next iteration? | Use dashboards, alerts, feedback, and drift detection to drive improvement. |

The table makes Glean's direction clear. ADLC is not a tutorial telling developers how to code an agent. It is closer to an operating language that lets product leaders, security teams, IT admins, business owners, and data stewards look at the same object. Once an agent can touch business systems, the boundary of responsibility matters more than a better prompt.
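One way to picture the seven stages as an operating object rather than a slide is a small gated sequence. The sketch below is illustrative only: the stage names come from Glean's list, but the `Stage` enum, the `next_stage` function, and the rule that a stage needs an explicit sign-off before the agent advances are assumptions made for this example, not part of Glean's product.

```python
from __future__ import annotations

from enum import Enum


class Stage(Enum):
    """The seven ADLC stages, in the order Glean lists them."""
    OPPORTUNITY = "Opportunity"
    DESIGN = "Design"
    PERFORMANCE = "Performance"
    CONTEXT = "Context"
    DEVELOP = "Develop"
    LAUNCH = "Launch"
    MONITOR_IMPROVE = "Monitor & Improve"


# Enum iteration preserves definition order, so this is the lifecycle order.
STAGE_ORDER = list(Stage)


def next_stage(current: Stage, signed_off: set[Stage]) -> Stage:
    """Advance one stage only if the current stage has been signed off.

    The sign-off gate is a hypothetical rule for illustration. At the last
    stage the agent stays in Monitor & Improve; looping back to an earlier
    stage is a team decision that this sketch does not model.
    """
    if current not in signed_off:
        return current
    idx = STAGE_ORDER.index(current)
    return STAGE_ORDER[min(idx + 1, len(STAGE_ORDER) - 1)]


# An agent with no sign-off stays where it is.
print(next_stage(Stage.OPPORTUNITY, set()).value)                # Opportunity
# Once the business case is approved, it moves to Design.
print(next_stage(Stage.OPPORTUNITY, {Stage.OPPORTUNITY}).value)  # Design
```

The point of the gate is that advancing is a recorded decision with an owner, which is the accountability Glean says is missing when agents sprawl.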

The new features all attach to the lifecycle

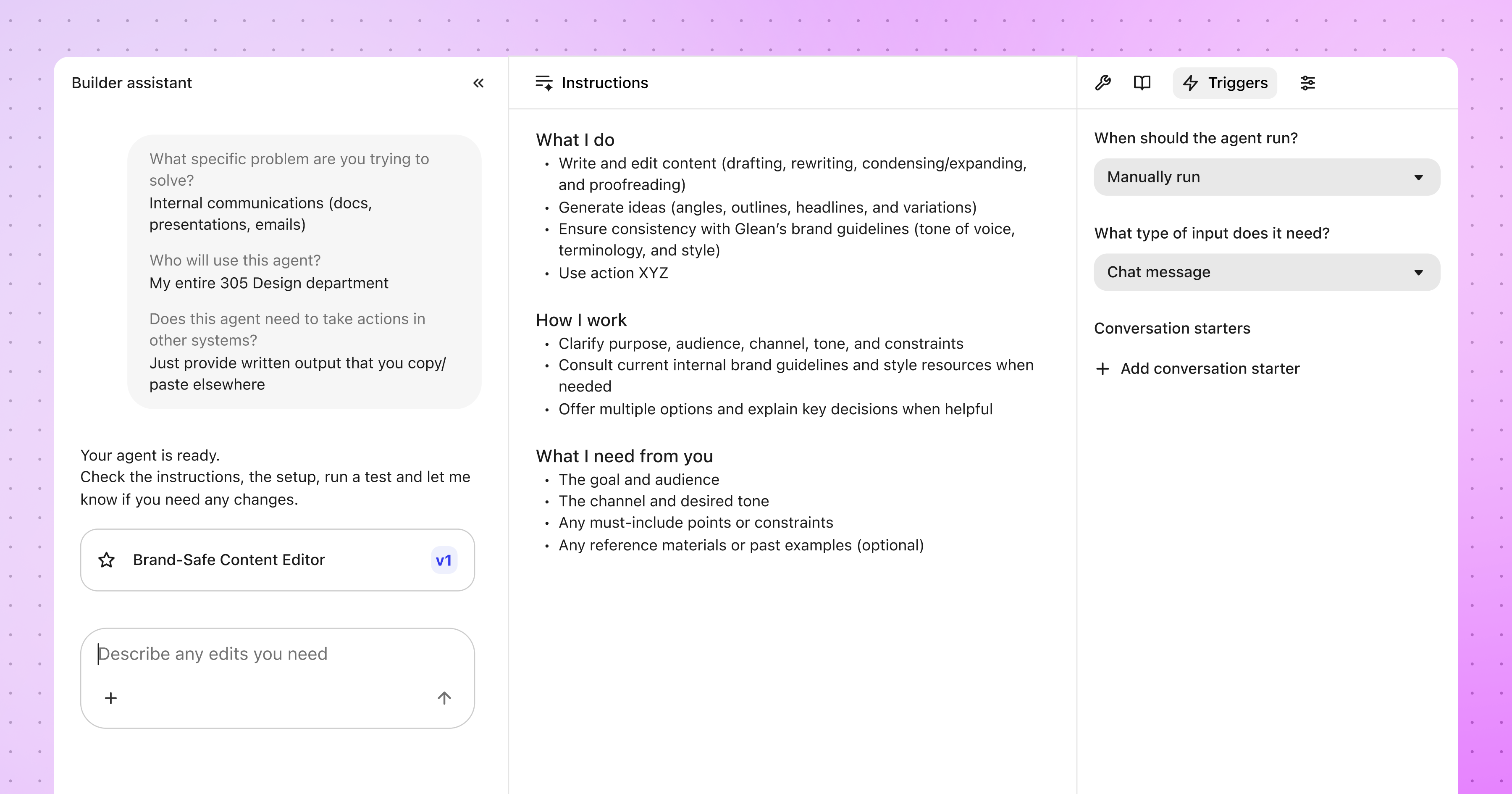

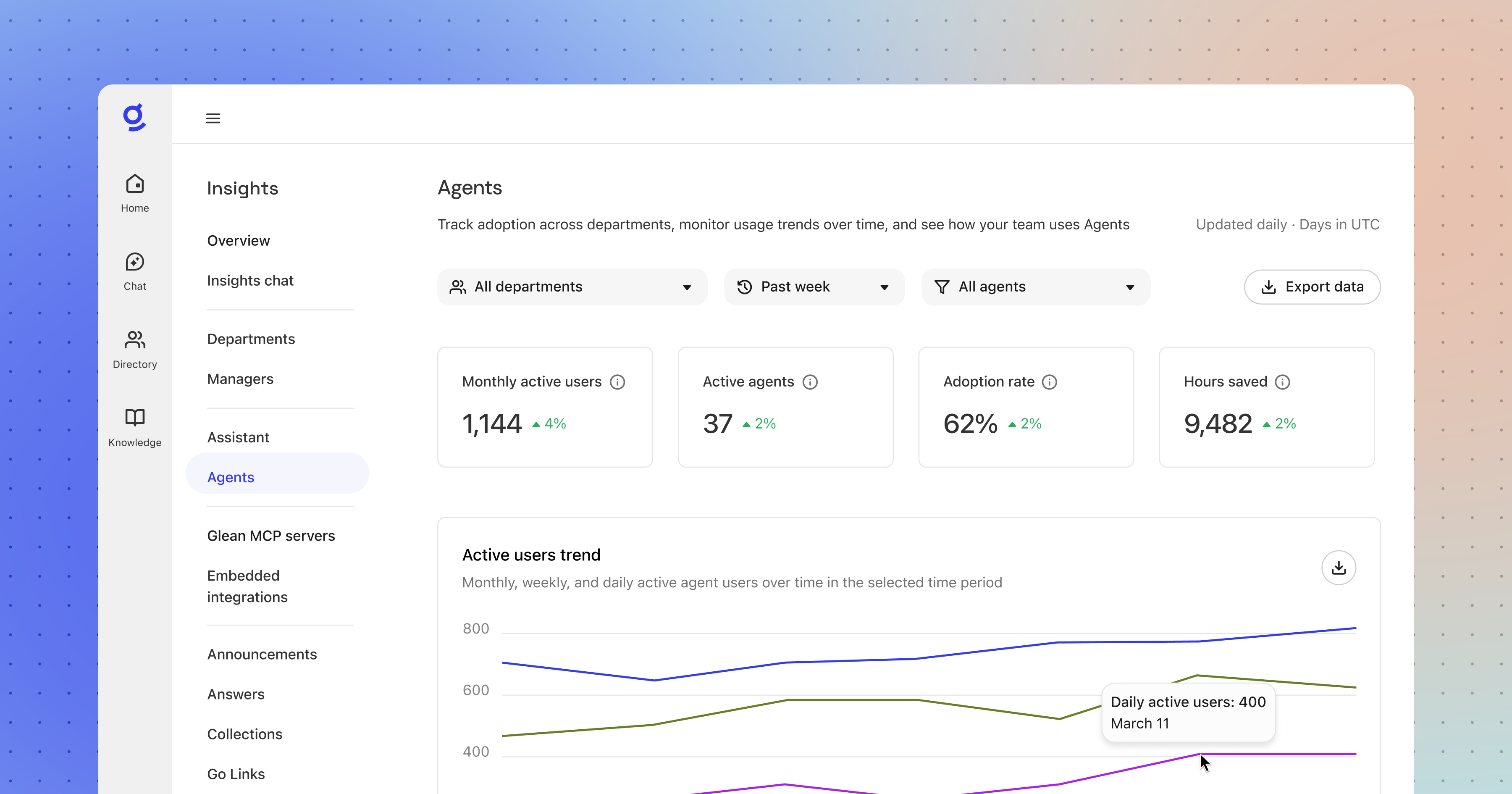

Glean did not leave ADLC as an abstract model. It attached new features to the Work AI platform. Auto-mode agents moved to general availability. Debug and trace views, sub-agents, agent sandbox, agent library, access policies, and agent insights were announced around the same operating model.

Auto-mode agents let users describe a desired goal while Glean plans, reasons, and executes inside the enterprise graph, guardrails, and managed actions. That differs from a traditional workflow builder. Instead of requiring every step to be manually specified ahead of time, it aims to move from intent to a testable agent faster.

The stronger auto-mode becomes, the more visibility operators need. That is why trace views matter. Agents do not fail only by producing a bad final answer. They can retrieve the wrong input, make a bad tool call, take the wrong branch, or carry forward a flawed intermediate result. Glean says debug views show inputs, tool calls, and decision steps. In other words, logs and tracing are becoming default infrastructure for agent operations.
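To make the failure modes above concrete, here is a minimal sketch of a per-run trace that records inputs, tool calls, and decision steps, then reports the first step that went wrong. The `Trace` and `TraceStep` classes, the step labels, and the `crm.lookup` tool name are hypothetical; this is not Glean's trace format.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class TraceStep:
    kind: str      # "input", "tool_call", or "decision" (labels are assumptions)
    name: str      # which input, tool, or branch this step refers to
    payload: Any   # what was read, sent, or decided
    ok: bool = True
    note: str = ""


@dataclass
class Trace:
    run_id: str
    steps: list[TraceStep] = field(default_factory=list)

    def record(self, kind: str, name: str, payload: Any,
               ok: bool = True, note: str = "") -> None:
        """Append one step in execution order."""
        self.steps.append(TraceStep(kind, name, payload, ok, note))

    def first_failure(self) -> Optional[TraceStep]:
        """Return the earliest failed step, or None if every step succeeded."""
        for step in self.steps:
            if not step.ok:
                return step
        return None


trace = Trace("run-001")
trace.record("input", "ticket", {"id": "T-1"})
trace.record("tool_call", "crm.lookup", {"account": "unknown"},
             ok=False, note="no matching account")
trace.record("decision", "branch", "escalate")

failure = trace.first_failure()
print(failure.kind, failure.name, failure.note)
# tool_call crm.lookup no matching account
```

In this run the final answer might look plausible, yet the trace shows the problem started at the tool call, two steps before anything reached the user.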

Sub-agents sit in the same pattern. A single large agent that handles every task is hard to maintain. Glean's reusable sub-agent model brings familiar modularity into agent design. The interesting point is that sub-agents are both a way to build more capable systems and a way to make those systems more manageable.

Agent sandbox is a more direct execution environment. Glean says each autonomous agent can receive a secure private runtime where it organizes intermediate work in a file system or uses a code interpreter for computation that would be unreliable if left to language-model reasoning alone. The sandbox pattern that coding agents made familiar is now entering business agents.

Agent library and access policies are the operational layer. When the number of agents grows, discovery becomes a problem in itself. Which agents are verified? Who can see them? Which business functions do they support? Are newly created and untested agents visible to the whole organization? Glean emphasizes admin verification and curation in the library. Access policies are more sensitive. A typical example is preventing an intern from using an agent to write to a system of record.
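The intern example can be expressed as a small policy check. The following is a sketch under stated assumptions: the role names, the `SYSTEMS_OF_RECORD` set, and the rule that reads are left to the underlying data permissions are all invented for illustration and do not describe how Glean's access policies are configured.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentAction:
    agent: str    # which agent is acting
    target: str   # system the action touches
    mode: str     # "read" or "write"


# Hypothetical policy data for this sketch.
SYSTEMS_OF_RECORD = {"crm", "erp"}
ROLES_ALLOWED_TO_WRITE = {"employee", "admin"}


def is_allowed(role: str, action: AgentAction) -> bool:
    """Decide whether a user with this role may run the action through an agent.

    Reads are assumed to be governed by the source system's own permissions
    and are not restricted here. Writes to a system of record require a role
    on the allow list.
    """
    if action.mode == "read":
        return True
    if action.target in SYSTEMS_OF_RECORD:
        return role in ROLES_ALLOWED_TO_WRITE
    return True


update = AgentAction(agent="deal-updater", target="crm", mode="write")
print(is_allowed("intern", update))    # False
print(is_allowed("employee", update))  # True
```

The check is keyed on the person invoking the agent, not on the agent alone, which matches the framing that an agent should not become a way around a user's normal permissions.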

Together, the feature set shows that Glean is not merely saying "you can build agents more easily." It is saying that enterprises need to operate an agent portfolio.

Why ADLC now?

The agent market is already crowded with builders. OpenAI Agents SDK, LangGraph, CrewAI, Microsoft Copilot Studio, Salesforce Agentforce, SAP Joule Studio, ServiceNow AI Agent Studio, and Google Workspace or Vertex AI tools all tell organizations they can create agents. The ability to create them is no longer enough.

Inside an enterprise, one agent has a much larger surface area than a simple function call. Data permissions, SaaS connectors, approval workflows, user feedback, audit logs, cost, hallucination risk, prompt injection, and business KPIs all mix together. Failure is not one thing either. It can be a wrong answer, a bad record update, an excessive permission request, runaway cost, duplicated agents, or an automation nobody uses.

ADLC is less a new technology than a structured warning. The more easily an organization can create agents, the more it needs a system for deciding what should be created at all. That is why Glean places Opportunity and Performance near the front of the lifecycle. Agent projects often fail not because the model is weak, but because the business problem is unclear, success criteria are missing, or the agent never enters the user's real workflow.

Glean says ADLC has helped customers such as Zillow, Ericsson, and Motive scale millions of weekly agent runs. It also claims that one internal Glean engineering agent has recovered more than 17,000 hours and generated over $1.7 million in annual ROI. Those numbers are vendor claims, not an independently audited benchmark, and should not be treated as market averages. The direction still matters. Enterprise AI sales language is shifting from accuracy to recovered time and cost.

How this connects to Glean's May product update

One week before the ADLC post, on May 5, Glean announced a product update around the idea of an "enterprise AI coworker." That update included personalized activity cards, Skills, write tools, Adaptive Reasoning, voice, Canvas revision view, and Library. ADLC wraps those product features in an operating model.

Skills are a good example. Glean describes Skills as reusable instructions, structure, and tools that adapt to how individuals and teams work. That lines up with the Develop stage. Instead of reconstructing a repeated workflow from a fresh prompt every time, a team packages it into a reusable unit that can be tested and improved.

Write tools are more sensitive. Glean says they can edit data across applications such as Microsoft 365, Outlook, Teams, Gmail, Google Drive, and Slack, with a clear preview before execution. Once an agent moves from read-only search into write operations, Launch and access policy become central. Who approves the action? Which systems are write-protected? Which changes should only be previewed rather than executed automatically?

Adaptive Reasoning is the default mode that chooses reasoning depth and model selection based on the work. That is a cost and quality issue. Running every task through the most expensive model with long reasoning is not economical. Sending important work through a lightweight mode is risky. If the platform makes that choice, the organization needs to observe and validate how that decision is made.
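The trade-off can be sketched as a router that picks a tier and logs why. This is not Glean's algorithm, which the announcement does not describe. The tier names, the thresholds, and the `decision_log` list are assumptions chosen only to show what an observable routing decision could look like.

```python
from __future__ import annotations

# Hypothetical audit trail: every routing decision is kept for later review.
decision_log: list[dict] = []


def choose_mode(task_id: str, risk: str, est_steps: int) -> dict:
    """Pick a model tier and reasoning depth from task risk and estimated size.

    Thresholds are illustrative. High-risk or long tasks get the most capable
    tier; small, low-risk tasks get the cheapest one.
    """
    if risk == "high" or est_steps > 10:
        mode = {"model": "large", "reasoning": "deep"}
    elif est_steps > 3:
        mode = {"model": "medium", "reasoning": "standard"}
    else:
        mode = {"model": "small", "reasoning": "light"}
    # Record the inputs next to the outcome so the choice can be validated.
    decision_log.append(
        {"task": task_id, "risk": risk, "est_steps": est_steps, **mode}
    )
    return mode


print(choose_mode("summarize-thread", "low", 2))
# {'model': 'small', 'reasoning': 'light'}
print(choose_mode("update-contract-record", "high", 2))
# {'model': 'large', 'reasoning': 'deep'}
```

Whatever the real routing logic is, the log is the part an organization can act on: it lets a reviewer ask whether important work was ever sent through the lightweight tier.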

Glean's agent documentation also supports this direction. Glean Agents are described around trigger, steps, actions, flow, and memory. Flow supports conditional branching and sub-agent calls. Memory stores intermediate outputs across execution so later steps can reuse them. That structure reinforces the point that an agent is not merely a chat session. It is a stateful work process.
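A toy version of that structure shows why memory makes an agent a stateful process. The sketch below borrows only the vocabulary (trigger, steps, flow, memory). The function names, the keyword rule in `classify`, and the two branches are hypothetical and do not reflect Glean's agent builder.

```python
from __future__ import annotations


def classify(memory: dict) -> str:
    """Step 1: label the incoming request (a keyword rule stands in for a model)."""
    text = memory["trigger"]["text"].lower()
    return "urgent" if "outage" in text else "routine"


def escalate(memory: dict) -> str:
    """Branch A: reuses the trigger text that is still held in memory."""
    return f"paged on-call for: {memory['trigger']['text']}"


def queue(memory: dict) -> str:
    """Branch B: low-priority path."""
    return "added to backlog"


def run_agent(trigger: dict) -> dict:
    """Run the steps in order, storing each intermediate output in memory."""
    memory: dict = {"trigger": trigger}
    memory["classify"] = classify(memory)
    # Flow: a conditional branch chosen from an earlier step's output.
    branch = escalate if memory["classify"] == "urgent" else queue
    memory["action"] = branch(memory)
    return memory


result = run_agent({"text": "Checkout outage in EU"})
print(result["classify"])  # urgent
print(result["action"])    # paged on-call for: Checkout outage in EU
```

Because every intermediate output stays in `memory`, the same dictionary doubles as a record of how the run reached its final action.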

Competition between control planes and lifecycles

Recent enterprise AI announcements can be summarized as a fight over the agent control layer. Microsoft Agent 365 frames agents as identities that can be managed like people and apps. ServiceNow AI Control Tower tries to observe and control AI agents inside business workflows. SAP places agents inside ERP processes through Joule Studio and Business AI Platform. UiPath is connecting coding agents to enterprise orchestration.

Glean ADLC overlaps with that field, but its emphasis is different. A control tower asks how to see and manage agents that are already moving. ADLC asks why an agent should exist, how it should be productized, and how its success should be measured. Glean still provides access policies and insights, but the main vocabulary of the announcement is lifecycle and ROI rather than only security control.

That difference also follows from Glean's starting point. Glean began with enterprise search and a work knowledge graph. It treats company context, more than the model alone, as the core asset for agents. For Glean's agents to become useful, the permission structure of enterprise data, the relationship between people and projects, business artifacts, and SaaS connectors must be well organized. It is not an accident that Context is its own ADLC stage.

There is also risk in this position. Microsoft, Google, Salesforce, and ServiceNow already own many of the systems where work happens. If they quickly absorb search, memory, agent libraries, and access policies, an independent Work AI platform such as Glean has to keep proving the value of a neutral knowledge layer. That is why SaaS and AI communities keep debating whether enterprise search startups can defend their position against large platform vendors.

What developers and AI teams should watch

For developers, the significance of this announcement is not "use Glean." The more important point is that the checklist for agent projects is changing. Until recently, agent design mostly meant model choice, tool calls, prompts, RAG, and memory. Now operational questions have to sit beside those technical choices.

First, each agent needs a clear work unit. Is it resolving one support ticket, analyzing a batch of customer calls, writing a weekly report, or updating system records? If the work unit is vague, evaluation will be vague too.

Second, success metrics cannot stop at output quality. Teams need processing time, user approval rate, rollback rate, error type, cost, and actual business KPI movement. That is why Glean gives Performance its own stage.

Third, context should be designed with least privilege. More data can make an agent more useful, but it also expands the risk surface. The team has to narrow which documents, records, tools, and write permissions are actually necessary.

Fourth, trace and sandbox support are becoming operational defaults. If no one can see which intermediate judgment an agent made, failures cannot be fixed. If long-running work has no environment for intermediate artifacts, reproducibility and auditability suffer.

Fifth, libraries and retirement policies are required. When an organization has ten agents, discovery is easy. When it has more than a hundred, nobody knows which agent is verified. Old agents, duplicate agents, and unused agents need to be hidden, replaced, or retired as part of product operations.
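Three of the five points above reduce to checks a team could script. The sketch below covers the second (operational metrics), the third (least privilege), and the fifth (library review). All record shapes, scope names, agent names, and the 90-day idle threshold are assumptions made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Run:
    seconds: float
    approved: bool      # the user accepted the agent's output
    rolled_back: bool   # the change was later reverted
    cost_usd: float


def summarize(runs: list[Run]) -> dict[str, float]:
    """Second point: metrics beyond output quality."""
    if not runs:
        raise ValueError("no runs to summarize")
    n = len(runs)
    return {
        "approval_rate": sum(r.approved for r in runs) / n,
        "rollback_rate": sum(r.rolled_back for r in runs) / n,
        "avg_seconds": sum(r.seconds for r in runs) / n,
        "cost_per_run_usd": sum(r.cost_usd for r in runs) / n,
    }


def excess_scopes(granted: set[str], used: set[str]) -> set[str]:
    """Third point: scopes granted but never exercised are candidates to revoke."""
    return granted - used


@dataclass
class AgentEntry:
    name: str
    verified: bool
    last_run: date


def review_library(entries: list[AgentEntry], today: date,
                   max_idle_days: int = 90) -> dict[str, list[str]]:
    """Fifth point: flag idle agents for retirement and active unverified ones for review."""
    cutoff = today - timedelta(days=max_idle_days)
    return {
        "retire": [e.name for e in entries if e.last_run < cutoff],
        "needs_verification": [
            e.name for e in entries if not e.verified and e.last_run >= cutoff
        ],
    }


runs = [Run(10, True, False, 0.5), Run(20, True, False, 0.5),
        Run(30, True, True, 0.5), Run(40, False, False, 0.5)]
print(summarize(runs))
# {'approval_rate': 0.75, 'rollback_rate': 0.25, 'avg_seconds': 25.0, 'cost_per_run_usd': 0.5}

print(excess_scopes({"crm:read", "crm:write", "drive:read"},
                    {"crm:read", "drive:read"}))
# {'crm:write'}

library = [AgentEntry("weekly-report", True, date(2026, 5, 10)),
           AgentEntry("old-triage", True, date(2025, 12, 1)),
           AgentEntry("draft-bot", False, date(2026, 5, 1))]
print(review_library(library, today=date(2026, 5, 12)))
# {'retire': ['old-triage'], 'needs_verification': ['draft-bot']}
```

None of this requires a particular vendor. The harder part is agreeing, per agent, on what counts as an approval, a rollback, or an idle period before the numbers are collected.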

From demos to operations

Glean's ADLC announcement is not as flashy as a frontier model benchmark. It may still matter more in the enterprise AI market. Once agents begin moving real business systems, the bottleneck is no longer a demo that works once. It is an operating model that keeps working safely.

Different organizations will choose different answers. Some may prefer Glean's Work AI layer. Others may lean into Microsoft or ServiceNow control planes. ERP-heavy organizations may prefer agents embedded directly into SAP processes. The direction, though, is becoming clear. Agents are no longer standalone chatbots. They are execution units inside the software portfolio of an organization. That means they need requirements, tests, releases, observability, improvement loops, and retirement.

ADLC may or may not become the standard name. The question Glean is asking will remain useful either way. Who created the agents in your organization? What can they change? By what standard did they succeed? Where do they stop when they fail? If a company cannot answer those questions, its agents are not productivity tools. They are unmanaged shadow automation. Enterprise AI in 2026 is moving from building more agents to building agents that can be operated for a long time.