Glean ADLC turns agent sprawl into a 7-step operating model

Glean ADLC shows enterprise AI agent competition moving from demo counts to lifecycle, trace, access policy, and ROI measurement.

- What happened: Glean introduced an enterprise

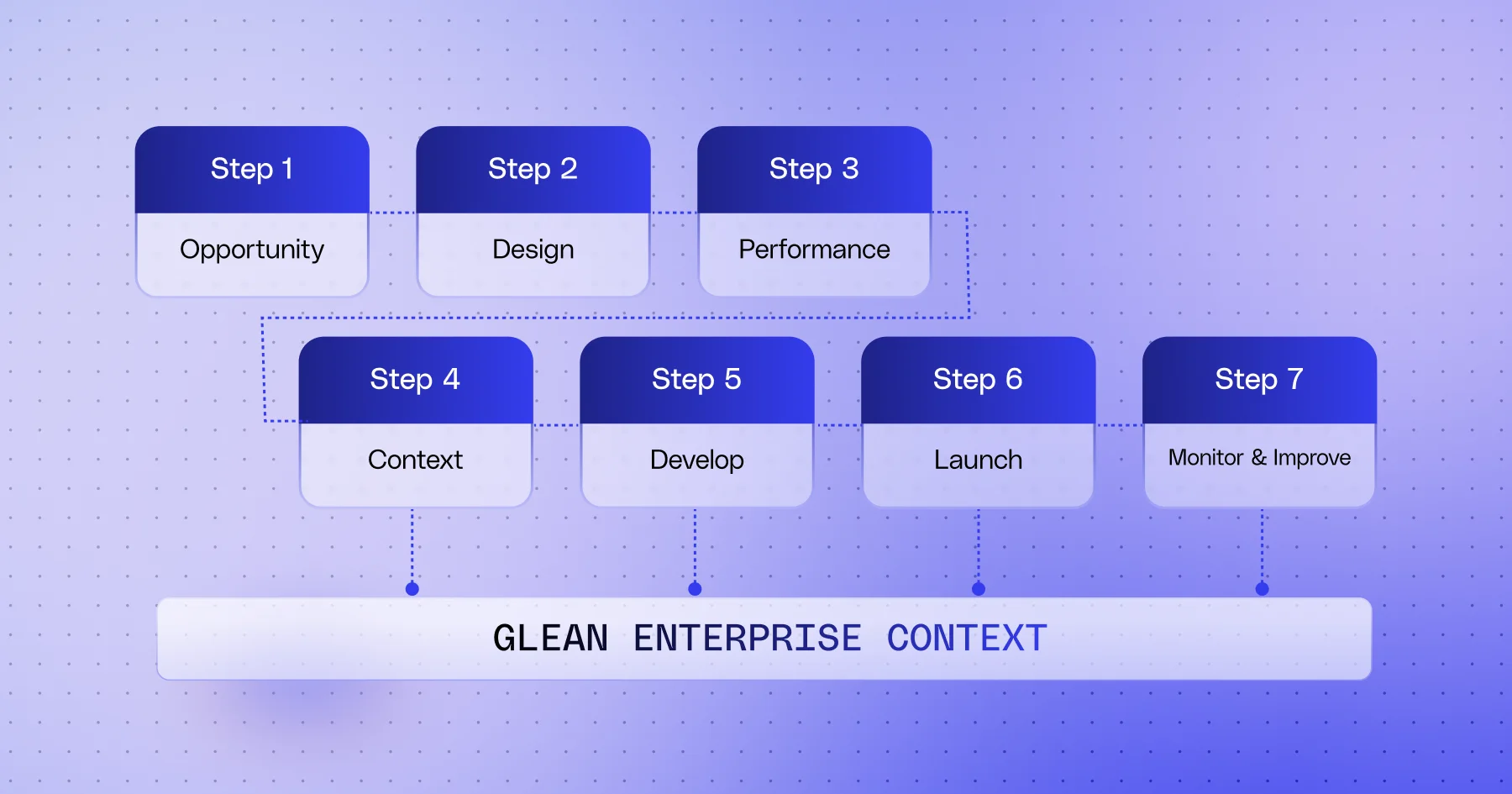

Agent Development Lifecycleand a related set of platform features.- The 7-step lifecycle covers Opportunity, Design, Performance, Context/Input, Develop, Launch, and Monitor & Improve.

- Why it matters: The agent race is shifting from model demos toward operations, traceability, policy, and ROI.

- Product signal: Auto Mode, Debug & Trace, Sub-Agents, Sandbox, Agent Library, and Access Policies arrived as one bundle.

- Glean is betting that enterprises will treat agents less like temporary automations and more like deployed software systems.

- Watch: This is still early, vendor-sourced news, so independent validation and repeatable ROI claims need time.

Glean announced its Enterprise Agent Development Lifecycle, or ADLC, on May 12, 2026. At first glance, the name sounds like another enterprise AI framework. The interesting part is what Glean chose to put front and center: not a smarter agent demo, but the operating model around agents.

Over the past several months, the enterprise AI market has changed its grammar. In the early phase, vendors mostly tried to prove that they could build agents at all. We saw demos that generated workflows from natural language, called SaaS apps, read internal documents, routed tickets, and drafted answers. Once agents move inside a real organization, however, the questions become different. Who owns this agent? Which data can it access? Who sees the failure? Where does human approval enter the loop? Did the agent actually reduce work, or did it create a new layer of work that only looks automated?

Glean ADLC is a direct answer to those questions. The premise is simple: agents are software. They should therefore move through a lifecycle that includes problem definition, design, performance targets, context, development, launch, monitoring, and improvement. That sounds ordinary, but it marks an important turn in the enterprise AI product market. Glean is bringing agents down from magical assistants to systems that must be operated.

What the 7 steps really signal

Glean describes ADLC as a 7-step lifecycle. The press release uses Opportunity, Design, Performance, Input, Develop, Launch, and Monitor & Improve. The blog uses Context where the press release says Input. The wording differs slightly, but the operating idea is the same: define the business problem before building the agent, design the unit of work it should own, set measurable success criteria, minimize the required data and permissions, test it, release it with constraints, and keep improving it through operational metrics.
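As a rough illustration of how the seven stages could be tracked per agent, the sketch below models them as an ordered enum and refuses to advance an agent until its current stage has recorded evidence. The stage names follow the press release; the `AgentRecord` class, the `EXIT_CRITERIA` wording, and the gating rule itself are hypothetical, not Glean's API.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Stage(IntEnum):
    """The seven ADLC stages, in the order the press release lists them."""
    OPPORTUNITY = 1
    DESIGN = 2
    PERFORMANCE = 3
    INPUT = 4  # the blog calls this stage "Context"
    DEVELOP = 5
    LAUNCH = 6
    MONITOR_AND_IMPROVE = 7


# Hypothetical exit criteria: what each stage must prove before moving on.
EXIT_CRITERIA = {
    Stage.OPPORTUNITY: "business problem defined",
    Stage.DESIGN: "unit of work defined",
    Stage.PERFORMANCE: "success metric and target set",
    Stage.INPUT: "minimal data and permissions listed",
    Stage.DEVELOP: "tests passed",
    Stage.LAUNCH: "release constraints configured",
}


@dataclass
class AgentRecord:
    """Hypothetical per-agent record; not part of any Glean API."""
    name: str
    stage: Stage = Stage.OPPORTUNITY
    evidence: dict = field(default_factory=dict)

    def record(self, note: str) -> None:
        # Attach evidence to the agent's current stage.
        self.evidence[self.stage] = note

    def advance(self) -> Stage:
        if self.stage == Stage.MONITOR_AND_IMPROVE:
            # The last stage loops on itself: monitoring never "finishes".
            return self.stage
        if self.stage not in self.evidence:
            raise ValueError(
                f"{self.name}: missing evidence for {self.stage.name} "
                f"({EXIT_CRITERIA[self.stage]})"
            )
        self.stage = Stage(self.stage + 1)
        return self.stage


agent = AgentRecord("release-notes-agent")
agent.record("release notes take hours to compile by hand")
print(agent.advance().name)  # DESIGN
```

The useful property is that a portfolio view can then ask each agent which stage it is in and what evidence it has attached, rather than just counting agents.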

That flow resembles a conventional software development lifecycle. The difference is that agents are probabilistic, call external tools, and directly handle organizational data and privileges. A normal app can show the wrong button. An agent can press the wrong button. A search system can surface an outdated document. An agent can use the outdated document to update a CRM record or answer a customer. That is why an agent lifecycle quickly becomes an access-control and observability problem, not just a project-management checklist.

Glean's blog frames many stalled agent projects as operating-model failures rather than intelligence failures. The missing pieces are useful context, durable integrations, and success criteria that remain visible after launch. That diagnosis matches what many enterprise AI teams are now running into. In many organizations, AI agents spread through departmental experiments before they become a central platform. Sales teams build CRM summary agents. Support teams build ticket classification agents. Engineering teams build code review or release note agents. The first wave looks productive, but the second wave reveals duplicated permissions, repeated connectors, and overlapping evaluation metrics.

At that point, the CIO does not need another demo. The CIO needs a way to see which agents earn money or reduce cost, which agents hold risky privileges, and which agents should be retired. ADLC packages that demand in lifecycle language. It changes the question from "how many agents did we build?" to "which stage is each agent in, and what has it proven?"

The product bundle around ADLC

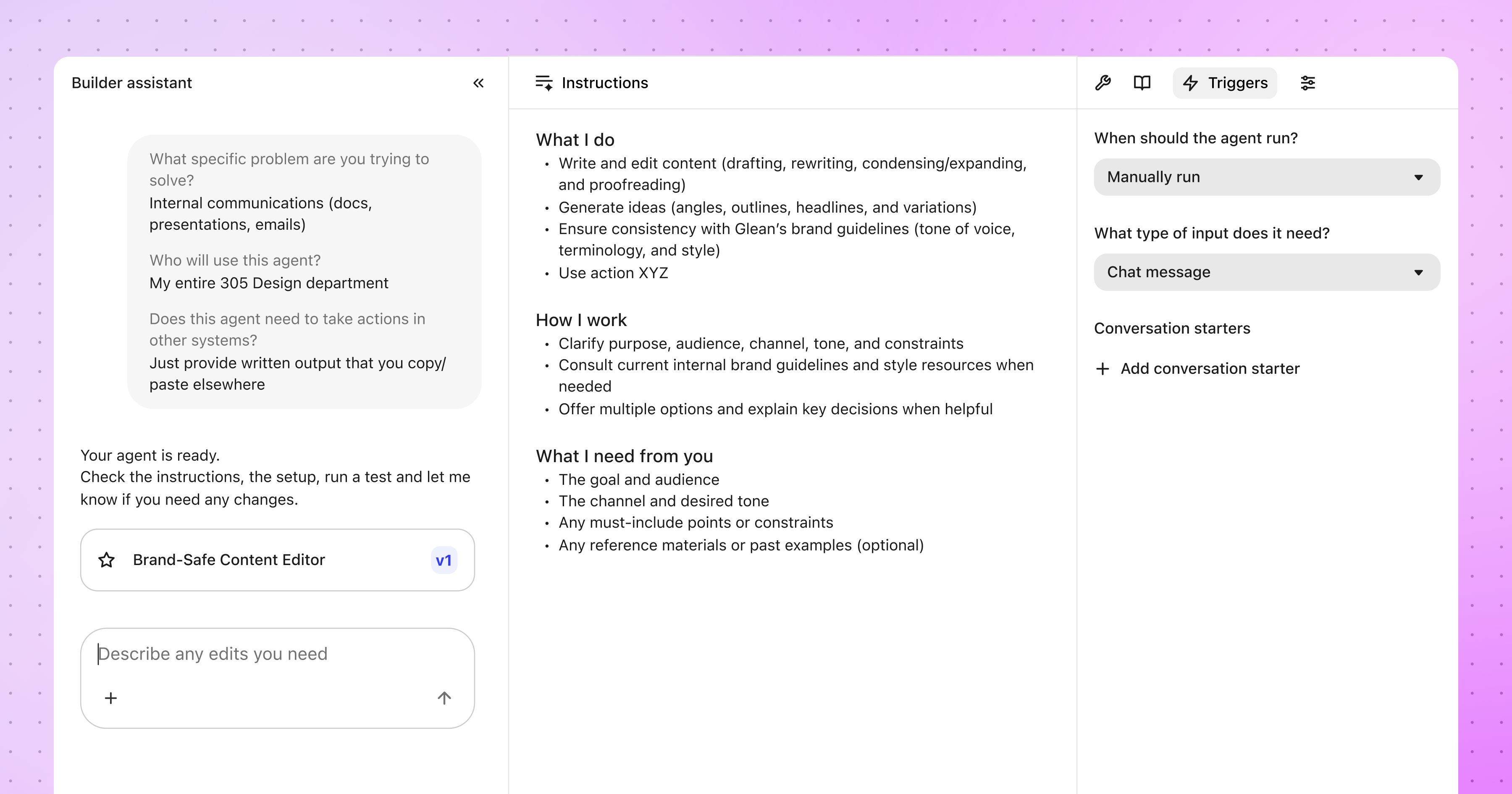

Glean did not announce ADLC as a manifesto alone. It paired the framework with several platform features. Auto Mode Agent Builder lets users describe a desired task in natural language so an agent can plan and execute over the enterprise graph. Debug & Trace Views expose the input, tool calls, LLM decisions, and output of an agent run step by step. Sub-Agents let a parent agent call specialized reusable agents at runtime instead of packing every capability into one large agent. Expanded Agent Sandbox provides a controlled file system and code execution environment inside a customer's VPC.
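The Sub-Agents idea is essentially composition: a parent agent keeps a registry of small, reusable specialists and picks one at runtime. The sketch below shows only that dispatch shape. The `SubAgent` type, the capability keys, and the toy sub-agent functions are hypothetical stand-ins, and the caller names the capability explicitly where a real parent would let an LLM planner choose.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class SubAgent:
    """Hypothetical specialist: a name, the capability it serves, and a handler."""
    name: str
    capability: str
    run: Callable[[str], str]


class ParentAgent:
    def __init__(self) -> None:
        self._registry: Dict[str, SubAgent] = {}

    def register(self, sub: SubAgent) -> None:
        # Specialists are registered once and can be reused by many parents.
        self._registry[sub.capability] = sub

    def handle(self, capability: str, task: str) -> str:
        # A real system would plan which capability to use; here it is passed in
        # explicitly to keep the sketch deterministic.
        sub = self._registry.get(capability)
        if sub is None:
            return f"no sub-agent for '{capability}'"
        return f"{sub.name}: {sub.run(task)}"


parent = ParentAgent()
parent.register(SubAgent("summarizer", "summarize", lambda t: t[:20]))
parent.register(
    SubAgent("classifier", "classify",
             lambda t: "bug" if "error" in t.lower() else "question")
)
print(parent.handle("classify", "Login error on SSO"))  # classifier: bug
```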

For developers and platform teams, Debug & Trace is the most important signal in the set. Agent failures are not like traditional bugs. An agent may not produce the same output for the same input every time, and the failure may sit in the prompt, context, tool schema, permission layer, model routing, user instruction, or an external SaaS state. If the team only inspects the final answer, debugging becomes guesswork. By making trace a core ADLC capability, Glean is pointing at the real bottleneck in agent operations.
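To make the debugging argument concrete, here is a minimal trace recorder that captures each step of a run in order, using the step kinds Glean lists for Debug & Trace Views: input, tool calls, LLM decisions, and output. The `Trace` and `Step` classes, their fields, and the `crm.search` tool name are illustrative assumptions, not Glean's schema. The point is that a failed run can be inspected step by step instead of only through its final answer.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import Any, List

# Step kinds mirror the ones named for Debug & Trace Views.
STEP_KINDS = {"input", "tool_call", "llm_decision", "output"}


@dataclass
class Step:
    kind: str
    detail: dict
    ts: float = field(default_factory=time.time)


@dataclass
class Trace:
    """Hypothetical trace record for one agent run."""
    run_id: str
    steps: List[Step] = field(default_factory=list)

    def log(self, kind: str, **detail: Any) -> None:
        if kind not in STEP_KINDS:
            raise ValueError(f"unknown step kind: {kind}")
        self.steps.append(Step(kind, detail))

    def tool_calls(self) -> List[dict]:
        # Often the first question when a run goes wrong: what did it call?
        return [s.detail for s in self.steps if s.kind == "tool_call"]

    def to_json(self) -> str:
        return json.dumps({"run_id": self.run_id,
                           "steps": [asdict(s) for s in self.steps]})


trace = Trace("run-42")
trace.log("input", text="Update the renewal date for ACME")
trace.log("llm_decision", reason="needs CRM lookup before write")
trace.log("tool_call", tool="crm.search", args={"account": "ACME"})
trace.log("output", text="Renewal date updated")
print(trace.tool_calls())
```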

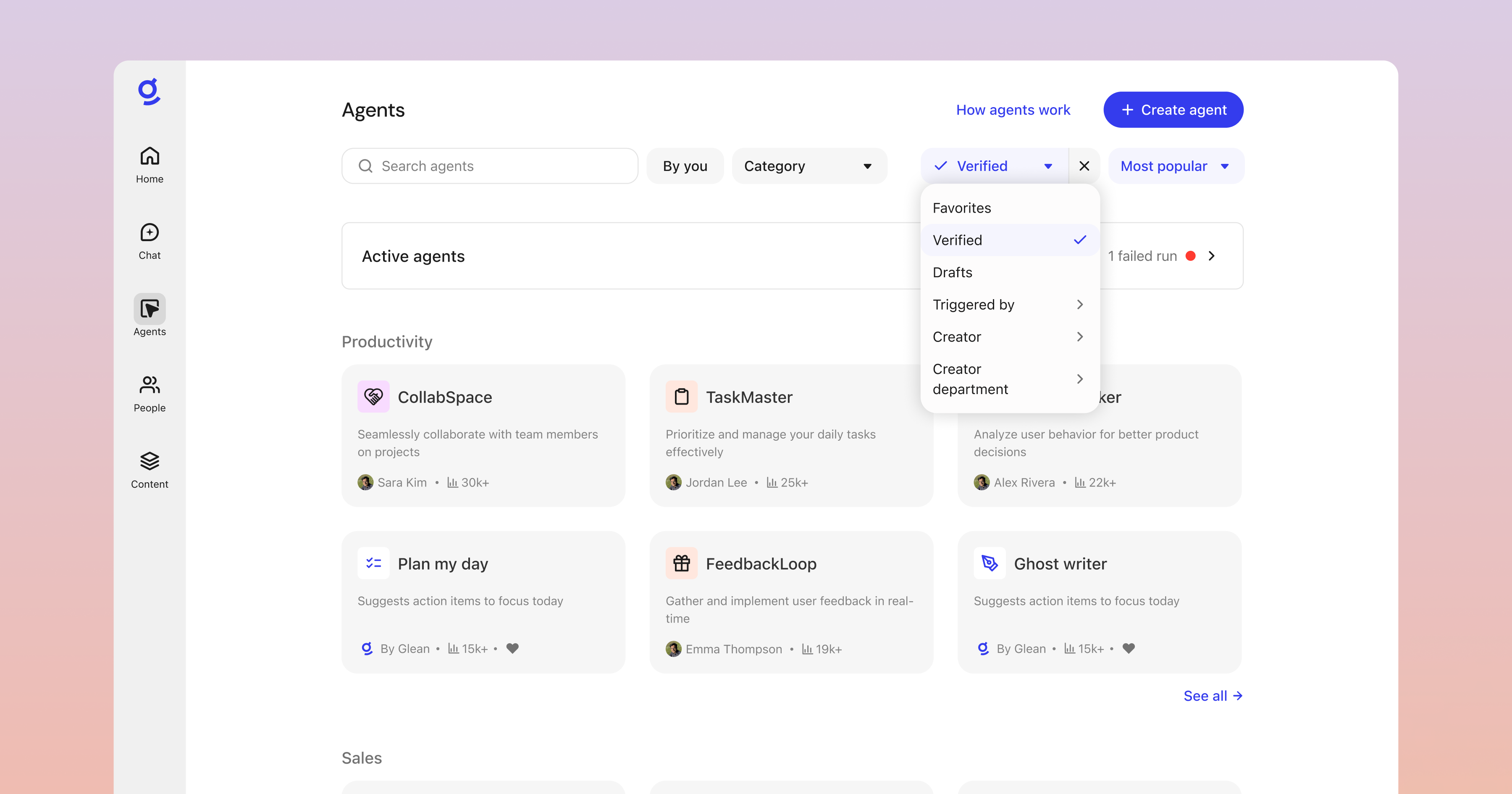

Agent Library controls and Agent Access Policies matter for a different reason. Once agents multiply, discovery and distribution become their own problem. Enterprises need to know which agents are approved, which department they belong to, which agents are deprecated, and which user groups should not see certain agents. Glean says the Agent Library includes verification badges, featured agents, departmental categories, soft delete, and admin restore. At the policy layer, it points to organization-wide guardrails such as sensitive content blocking and system-of-record write restrictions.
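The library features Glean lists (verification badges, departmental categories, soft delete, admin restore) map onto a fairly small data model. The sketch below is a hypothetical catalog, not Glean's implementation: soft delete hides an agent without destroying its record, restore brings it back, and a per-agent set of blocked groups approximates the rule that some user groups should not see certain agents. Agent, department, and group names are made up.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class CatalogEntry:
    """Hypothetical catalog entry for one agent."""
    name: str
    department: str
    owner: str
    verified: bool = False
    deleted: bool = False
    hidden_from: Set[str] = field(default_factory=set)


class AgentLibrary:
    def __init__(self) -> None:
        self._entries: Dict[str, CatalogEntry] = {}

    def add(self, entry: CatalogEntry) -> None:
        self._entries[entry.name] = entry

    def soft_delete(self, name: str) -> None:
        # Keep the record so an admin can restore it later.
        self._entries[name].deleted = True

    def restore(self, name: str) -> None:
        self._entries[name].deleted = False

    def visible_to(self, groups: Set[str]) -> List[str]:
        # Skip deleted agents and agents hidden from any of the caller's groups;
        # list verified agents first, then alphabetically.
        entries = [
            e for e in self._entries.values()
            if not e.deleted and not (e.hidden_from & groups)
        ]
        entries.sort(key=lambda e: (not e.verified, e.name))
        return [e.name for e in entries]


lib = AgentLibrary()
lib.add(CatalogEntry("crm-summary", "Sales", "sales-ops", verified=True))
lib.add(CatalogEntry("ticket-triage", "Support", "support-eng"))
lib.add(CatalogEntry("payroll-helper", "HR", "hr-it", hidden_from={"contractors"}))
lib.soft_delete("ticket-triage")
print(lib.visible_to({"contractors"}))  # ['crm-summary']
```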

These features may look less exciting than a flashy agent demo, but they tend to survive enterprise adoption. When there is one internal agent, a Slack link is enough. When there are dozens, the company needs a catalog. When there are hundreds, it needs owners, policy, access control, usage data, and incident response. Once agents gain write access to business systems, the more important question is not who created the agent, but who is accountable for it.
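One way to encode the accountability point is to make a named owner a precondition for write access, alongside the two guardrail types Glean mentions: sensitive content blocking and system-of-record write restrictions. The policy check below is a hypothetical sketch. The system names, the SSN-style regex standing in for "sensitive content", the allowlist, and the `Action` shape are all assumptions chosen for illustration.

```python
import re
from dataclasses import dataclass
from typing import Optional, Set, Tuple

# Hypothetical systems of record that agents may not write to by default.
SYSTEMS_OF_RECORD = {"crm", "hris", "erp"}
# Hypothetical sensitive-content rule: US-style SSNs only.
SENSITIVE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


@dataclass
class Action:
    agent: str
    owner: Optional[str]
    system: str
    is_write: bool
    payload: str


def check(action: Action, write_allowlist: Set[str]) -> Tuple[bool, str]:
    # Organization-wide content guardrail applies to reads and writes alike.
    if SENSITIVE.search(action.payload):
        return False, "blocked: sensitive content"
    if action.is_write and action.system in SYSTEMS_OF_RECORD:
        # No accountable owner means no write access to business systems.
        if not action.owner:
            return False, "blocked: write access requires an accountable owner"
        if action.agent not in write_allowlist:
            return False, "blocked: agent not approved for system-of-record writes"
    return True, "allowed"


allow = {"crm-updater"}
print(check(Action("crm-updater", "sales-ops", "crm", True, "set renewal date"), allow))
```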

Why agents need an ROI ledger

Another notable part of Glean's announcement is ROI. Glean says ADLC has helped customers scale agent adoption, and it cites Zillow, Ericsson, and Motive as customers processing millions of agent runs per week. It also claims one internal Glean engineering agent has returned more than 17,000 engineering hours per year and more than $1.7 million in ROI.

Those are strong marketing numbers, and they should be read carefully. Hours saved and ROI can change dramatically depending on the calculation method. Did the task actually disappear? How much human review remains? Where is the cost of fixing agent failures counted? How are security review and change management included? The value of the announcement is not that Glean's numbers will directly apply to every company. The value is that agent ROI is being placed inside the lifecycle itself.
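A small calculation shows how much those questions can move a headline number. The function below is a hypothetical net-hours model, not Glean's method: it starts from gross time saved per run, then subtracts the human review that remains, the time spent fixing failed runs, and one-off overhead such as security review and change management. All inputs in the example are made up.

```python
def net_hours_saved(
    runs: int,
    minutes_saved_per_run: float,   # gross: baseline task time minus agent time
    review_minutes_per_run: float,  # human review that still happens
    failure_rate: float,            # fraction of runs that need a fix
    fix_minutes_per_failure: float,
    overhead_hours: float,          # security review, change management, etc.
) -> float:
    """Hypothetical net-hours model; every input is an estimate."""
    gross = runs * minutes_saved_per_run / 60
    review = runs * review_minutes_per_run / 60
    fixes = runs * failure_rate * fix_minutes_per_failure / 60
    return gross - review - fixes - overhead_hours


# Illustrative inputs only: 10,000 runs a year, 12 minutes saved per run.
gross_only = net_hours_saved(10_000, 12, 0, 0.0, 0, 0)
realistic = net_hours_saved(10_000, 12, 4, 0.05, 30, 200)
print(round(gross_only), round(realistic))  # 2000 883
```

In this made-up case, counting review, fixes, and overhead cuts the claim by more than half, which is why the calculation method matters as much as the headline figure.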

Many AI adoption projects begin with time-saved claims. Meeting notes take 30 fewer minutes. Ticket classification is 70% faster. Code review drafts appear automatically. Those claims can be useful, but they are not enough at a portfolio level. Enterprises need to know whether an agent moves an actual KPI, whether it is only useful in one department, whether users keep using it, whether quality degradation can trigger rollback, and whether compliance evidence is retained.

Glean's Agent Insights Dashboard targets that problem. According to the announcement, the dashboard is designed to track adoption, top use cases, estimated hours saved, and feedback trends. We still need to see how deep that analysis becomes in the live product. The direction, however, is clear. Agents are beginning to face portfolio pressure. It is no longer enough for users to like them. They need to be managed with operational metrics.
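The four metrics the announcement lists (adoption, top use cases, estimated hours saved, and feedback trends) can all be derived from a plain log of agent runs. The aggregation below is an assumption about how such a rollup might work, not a description of Glean's dashboard; the `Run` fields, the weekly bucketing, and the sample data are illustrative.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Run:
    """Hypothetical run-log record."""
    user: str
    use_case: str
    week: int
    est_minutes_saved: float
    thumbs_up: Optional[bool] = None  # None when the user gave no feedback


def insights(runs: List[Run], top_n: int = 3) -> dict:
    feedback: Dict[int, List[bool]] = defaultdict(list)
    for r in runs:
        if r.thumbs_up is not None:
            feedback[r.week].append(r.thumbs_up)
    return {
        "active_users": len({r.user for r in runs}),
        "top_use_cases": Counter(r.use_case for r in runs).most_common(top_n),
        "est_hours_saved": sum(r.est_minutes_saved for r in runs) / 60,
        # Share of positive feedback per week, to spot quality drifting down.
        "positive_rate_by_week": {
            w: sum(v) / len(v) for w, v in sorted(feedback.items())
        },
    }


runs = [
    Run("ana", "ticket triage", 1, 10, True),
    Run("ben", "ticket triage", 1, 10, True),
    Run("ana", "crm summary", 2, 20, False),
    Run("cy", "ticket triage", 2, 10, True),
    Run("cy", "crm summary", 2, 20),
]
print(insights(runs))
```

Note that "estimated hours saved" here inherits every caveat from the ROI discussion above: it is only as good as the per-run estimate fed into it.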

Competitors are looking at the same layer

Glean is not alone in this shift. At Think 2026, IBM described watsonx Orchestrate as a control plane for the multi-agent era. IBM's framing is similar to Glean's: once organizations create thousands of agents across many teams and platforms, the hard part is not simply building agents, but preserving governance and auditability close to real time.

Honeycomb announced Agent Observability and Agent Timeline with a focus on putting LLM calls, tool invocations, agent handoffs, and downstream system impact into one timeline. Collibra is trying to connect agents, AI use cases, data lineage, and policy evidence through AI Command Center. ServiceNow, Microsoft, and UiPath are emphasizing their own control planes and orchestration layers. The names differ, but the pressure is the same. If agents perform real work inside organizations, platforms must handle runtime, identity, traces, policy, catalogs, and ROI.

Glean's differentiated angle is enterprise context. The company grew out of workplace search and the enterprise graph. So when Glean talks about agent operations, it naturally centers which data the agent can access, how user permissions apply, and which sources support the answer. That starting point differs from coding-agent or infrastructure-agent companies. Glean's main battlefield is not the developer IDE. It is the company-wide work graph where sales, support, HR, IT, and engineering teams build and manage agents over the same context layer.

That approach also carries risk. A horizontal platform can cover a wide surface but miss domain depth. A sales agent, support agent, and engineering agent need different data, different failure tolerances, and different approval flows. A shared lifecycle gives the organization a common language, but implementation still requires domain-specific evaluation and workflow design. If ADLC is consumed as only a checklist, it can become another governance ritual.

What changes for developers

For developers and AI product teams, the practical message is threefold.

First, agent observability needs to be designed from the start. Saving only prompts and tool schemas is not enough. Teams need to know which context the agent read, which tool it called and why, what intermediate decisions it made, and where user approval or policy checks entered the flow. That is what makes failures reproducible and improvable. It is also harder than application logging because sensitive data and reasoning traces may sit close together.
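Because context, tool arguments, and reasoning can all carry sensitive values, one common mitigation is to redact trace fields before they are stored while keeping enough structure to reproduce the run. The sketch below is a generic, hypothetical approach (regex patterns for emails and card-like numbers, plus keys that are always masked); it is not tied to Glean or any specific observability product, and a real deployment would need a far more careful classifier.

```python
import re
from typing import Any

# Hypothetical patterns; real deployments need a proper PII classifier.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),  # 13 to 16 digits
}
# Keys whose values are never persisted, regardless of content.
ALWAYS_MASK = {"api_key", "password", "authorization"}


def redact(value: Any, key: str = "") -> Any:
    """Return a redacted copy of a trace step; the input is not modified."""
    if key.lower() in ALWAYS_MASK:
        return "[MASKED]"
    if isinstance(value, dict):
        return {k: redact(v, k) for k, v in value.items()}
    if isinstance(value, list):
        return [redact(v) for v in value]
    if isinstance(value, str):
        for label, pattern in PATTERNS.items():
            value = pattern.sub(f"[{label}]", value)
    return value


step = {
    "kind": "tool_call",
    "tool": "crm.update",
    "args": {"contact": "jane.doe@example.com", "api_key": "sk-123"},
    "reasoning": "Customer paid with card 4111 1111 1111 1111, update status",
}
print(redact(step))
```

The design choice worth noting is that redaction runs on the whole step, including the reasoning text, because that is exactly where sensitive values tend to leak.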

Second, the agent catalog is not a minor feature. As agents spread through an organization, discoverability and trust become bottlenecks. Users need to know which agents are official. Admins need to hide or restore outdated agents. Security teams need to see which agents hold dangerous write permissions. "Everyone can build an agent" can quickly become "everyone can deploy production automation." The distribution surface needs to feel as simple as a collaboration tool, while the control surface needs the rigor of software release management.

Third, ROI should be an input to design, not a report attached after launch. Teams should estimate which time the agent is supposed to reduce, define a baseline and target range, and decide when the agent should pause or roll back. This matters because many agents do not fully replace human judgment. They move review cost around. That makes end-to-end cycle time and rework rate more useful than raw generation volume.
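As a sketch of ROI as a design input, the check below compares live metrics against a baseline and a target agreed before launch, then returns a decision. The metrics follow the paragraph (end-to-end cycle time and rework rate); the thresholds, the `Plan` fields, and the continue/pause/rollback outcomes are hypothetical choices, not a prescribed rule.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    """Hypothetical pre-launch plan for one agent."""
    baseline_cycle_hours: float  # measured before the agent existed
    target_cycle_hours: float    # what the agent is expected to achieve
    max_rework_rate: float       # share of outputs humans may redo


def decide(plan: Plan, cycle_hours: float, rework_rate: float) -> str:
    # Slower than doing the work without the agent: roll back.
    if cycle_hours >= plan.baseline_cycle_hours:
        return "rollback"
    # Faster, but shifting too much review cost onto humans: pause and fix.
    if rework_rate > plan.max_rework_rate:
        return "pause"
    # Better than baseline but not yet at target: keep running, flag the gap.
    if cycle_hours > plan.target_cycle_hours:
        return "continue: below target"
    return "continue"


plan = Plan(baseline_cycle_hours=8.0, target_cycle_hours=5.0, max_rework_rate=0.15)
for hours, rework in [(9.0, 0.05), (6.0, 0.30), (6.0, 0.10), (4.5, 0.10)]:
    print(hours, rework, decide(plan, hours, rework))
```

Checking rework rate before celebrating a faster cycle time reflects the point above: an agent can look fast while quietly moving review cost onto people.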

What still needs proof

This announcement is still mostly based on official sources, and independent community validation is limited. I did not find a major public discussion around this specific Glean release on Hacker News or GeekNews. That makes it safer to read the announcement as a market signal rather than as proof that Glean's customer numbers generalize.

Vendor lock-in is another open question. ADLC looks like a general operating language, but Glean ties it deeply to its Work AI platform. Auto Mode, Enterprise Graph, Agent Library, Access Policies, and Insights Dashboard may work well when they sit inside one platform. In companies already mixing Microsoft, ServiceNow, IBM, Slack, GitHub, Datadog, and internal orchestration tools, ADLC may also become another control plane to reconcile. The next competition in agent operations may not be who draws the best lifecycle diagram. It may be who can gather trustworthy evidence without fighting the systems already in place.

Even with those caveats, Glean ADLC matters. As the agent market matures, impressive demos become less valuable and operable systems become more valuable. Enterprises will eventually ask not only what an agent can do, but whether the agent can explain why it did it, stop when it fails, and prove that it improved an outcome.

Glean has organized that question into a 7-step lifecycle. It may or may not become an industry standard. The direction is clearer than the branding. In 2026, the enterprise AI agent race is becoming less about creating more agents and more about managing agents as an operational portfolio. Models still matter, but models are no longer enough. Once agents take on organizational work, the battleground moves from prompts to lifecycle, traces, policies, and ROI ledgers.