Copilot CLI plugins become an enterprise standard

GitHub has put Copilot CLI managed plugins and Rubber Duck cross-model review into the enterprise control plane for terminal-based coding agents.

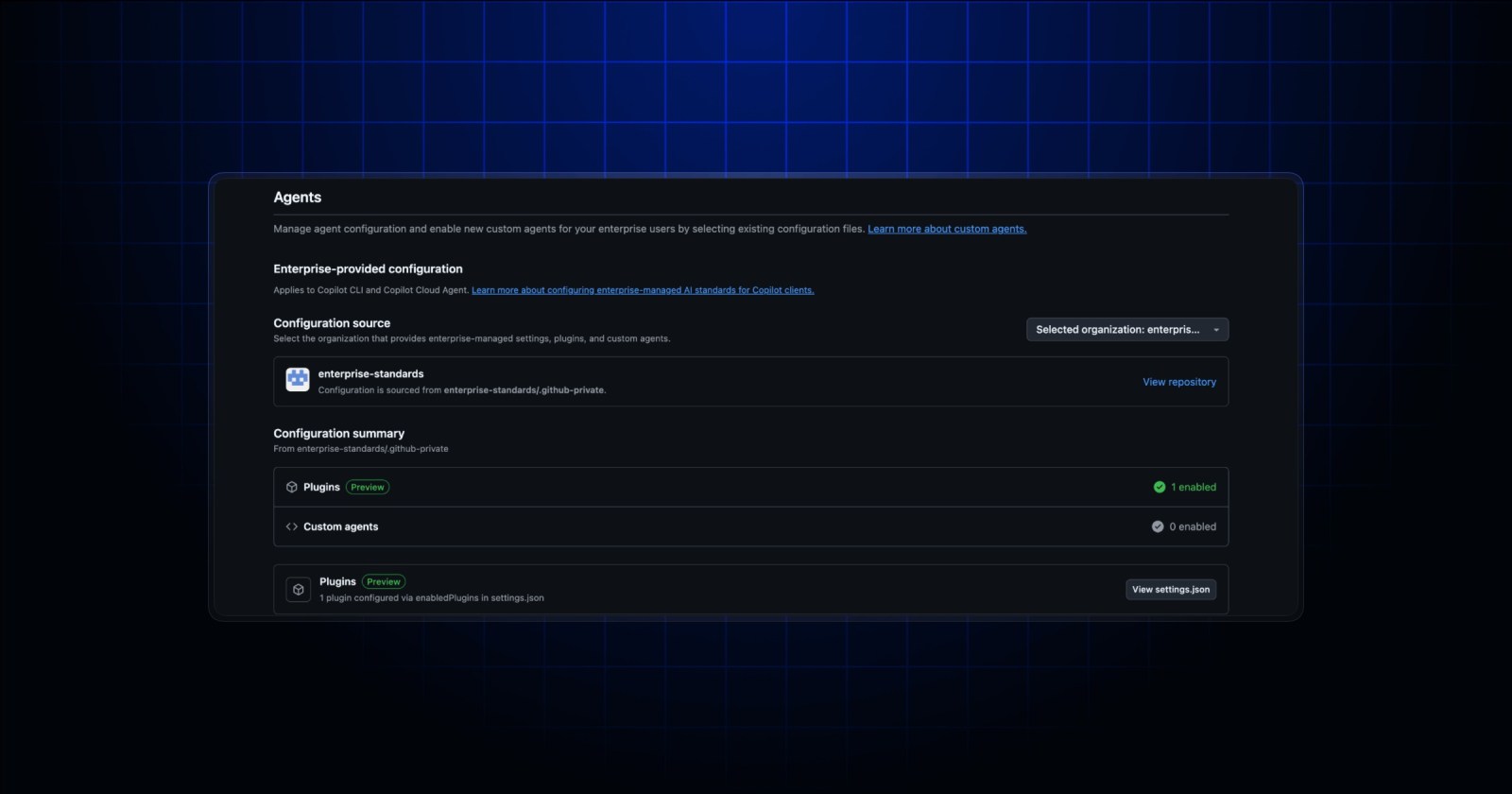

- What happened: GitHub opened public preview for enterprise-managed plugins in Copilot CLI.

- Enterprise owners can use .github-private/.github/copilot/settings.json to publish approved marketplaces and auto-installed plugins.

- Why it matters: AI coding agents are moving from personal productivity tools into organization-managed runtime standards.

- Same-week signal: Rubber Duck now lets GPT-led sessions call a Claude-based critic agent.

- In Claude-led sessions, GitHub says GPT-5.5 is now used as the Rubber Duck reviewer model.

- Watch: This is still public preview, so configuration shape and operating policy can change.

GitHub Copilot CLI has moved closer to the enterprise administrator's desk. On May 6, 2026, GitHub announced public preview for enterprise-managed plugins in Copilot CLI. The basic idea is simple: an enterprise owner defines shared plugin marketplaces and auto-installed plugins, then Copilot CLI users with Copilot Business or Copilot Enterprise licensing receive those settings when they authenticate.

That can look like a small configuration feature, but it crosses a meaningful line for AI coding operations. Many AI coding tools have spread through individual local settings, IDE extensions, dotfiles, and team wiki pages. One developer wires up an MCP server. Another writes a custom agent. A third adds a hook that forces tests before every commit. Even inside the same team, different settings can mean different tools, different allowed actions, different review standards, and different access paths into internal documentation. GitHub's update is a signal that those personal variations can now be pulled into a policy file inside a .github-private repository.

This article overlaps with devlery's earlier coverage of Copilot cloud agent secrets and variables, but the operating layer is different. Cloud agent secrets are about how a GitHub Actions-based background agent gets environment values and secrets for internal resources. Copilot CLI plugin standards are about which extension tools and agent practices are distributed by default to a developer's terminal client. One is runtime access. The other is the assembly model for agents on the developer workstation.

The center of the announcement is settings.json

According to GitHub's announcement, managed plugins are defined through .github-private/.github/copilot/settings.json. Enterprises that already configure the source organization for custom agents use the same .github-private repository. The GitHub Docs example shows two important top-level properties. extraKnownMarketplaces defines additional plugin marketplaces that CLI users can see, while enabledPlugins specifies plugins that should be installed for all enterprise users.

The interesting part is that this turns extending Copilot CLI from a personal plugin installation story into a managed distribution story. A platform team could create a company-platform@internal plugin that bundles a deployment-diagnostics skill, internal MCP servers, security-review hooks, and a frontend accessibility review agent. Once an admin adds that plugin to enabledPlugins, a newly onboarded developer gets the same baseline toolset without first reading a long internal setup page.
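As a rough sketch of what such a policy file might contain, the snippet below assembles a settings document with the two top-level properties named in the docs and checks that every enabled plugin points at a declared marketplace. Only the property names extraKnownMarketplaces and enabledPlugins and the plugin ID company-platform@internal come from this article. Everything nested under them (the marketplace name internal, the source fields, the repository example-org/copilot-plugins, and the boolean flag) is an assumed shape for illustration, so check the current GitHub Docs before copying it.

```python
import json

# Hypothetical policy content. Only the two top-level keys are taken from
# GitHub's announcement; the nested shape is an assumption for illustration.
settings = {
    "extraKnownMarketplaces": {
        "internal": {
            "source": {"source": "github", "repo": "example-org/copilot-plugins"}
        }
    },
    "enabledPlugins": {
        "company-platform@internal": True
    },
}


def unknown_marketplaces(doc: dict) -> list[str]:
    """Return enabled plugin IDs whose marketplace suffix is not declared.

    Built-in marketplaces, if any exist, are not modeled here, so this check
    only makes sense for marketplaces the policy file itself declares.
    """
    known = set(doc.get("extraKnownMarketplaces", {}))
    missing = []
    for plugin_id in doc.get("enabledPlugins", {}):
        # Assumes IDs follow the "plugin@marketplace" pattern used in this article.
        _, _, marketplace = plugin_id.partition("@")
        if marketplace not in known:
            missing.append(plugin_id)
    return missing


if __name__ == "__main__":
    print(json.dumps(settings, indent=2))
    print("Plugins with undeclared marketplaces:", unknown_marketplaces(settings))
```

A check like this could run in CI on the .github-private repository so that a typo in a marketplace name is caught before the policy reaches every developer's terminal.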

GitHub also says hooks and MCP configurations can be kept enabled. That detail matters in practice. AI coding agents are no longer just models that produce text. They are executors that call tools. Which MCP servers are allowed, which hooks run before or after tool use, and which custom agents ship as defaults are now part of the development process. For that reason, CLI plugin standards are better understood as agent governance, not just developer-environment customization.
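To make the hook idea concrete, here is a minimal sketch of the kind of decision a check before tool use could encode. It is not the Copilot CLI hook interface: the ToolCall type, the blocked-command patterns, and the review_tool_call function are all hypothetical names chosen for this example.

```python
import re
from dataclasses import dataclass

# Hypothetical deny patterns a security team might ship as a shared default.
BLOCKED_PATTERNS = [
    re.compile(r"\brm\s+-rf\s+/"),            # recursive delete from an absolute path
    re.compile(r"\bgit\s+push\b.*--force"),   # force pushes
    re.compile(r"\bcurl\b.*\|\s*(ba)?sh\b"),  # piping a download into a shell
]


@dataclass
class ToolCall:
    """Hypothetical description of a tool invocation proposed by an agent."""
    tool: str
    command: str


def review_tool_call(call: ToolCall) -> tuple[bool, str]:
    """Return (allowed, reason) for a proposed tool call."""
    if call.tool != "shell":
        return True, "non-shell tools are not covered by this sketch"
    for pattern in BLOCKED_PATTERNS:
        if pattern.search(call.command):
            return False, f"blocked by pattern: {pattern.pattern}"
    return True, "no blocked pattern matched"
```

The point of shipping such a rule in a shared plugin rather than in a wiki page is that every developer's agent is checked against the same list, and changes to the list go through repository review.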

| Area | Copilot cloud agent secrets | Copilot CLI plugin standards |
|---|---|---|
| Managed surface | Secrets and variables used by background cloud agents | Plugins, marketplaces, and extension standards used by terminal CLI users |
| Core file or area | Agents secrets and variables in organization or repository settings | .github-private/.github/copilot/settings.json |
| Practical meaning | Standardizes how agents reach internal packages, MCP servers, and APIs | Distributes shared agents, skills, hooks, and MCP configuration to developer CLIs |

Why CLI standardization matters now

AI coding tools began as autocomplete. Autocomplete is relatively easy to manage even when personal settings differ. A model suggests a few lines, and the developer accepts or rejects them. Agentic CLIs are different. They read repositories, plan work, edit files, run tests, and connect to external systems. In that environment, the question of which tools the agent can see directly affects quality and risk.

When enterprise development teams adopt AI coding agents broadly, the first problem may not be model choice. A bigger operational risk can come from team-by-team local settings, temporary MCP servers, unreviewed custom prompts, and copied internal tokens. GitHub's managed plugins bring that issue inside the product. Administrators can define approved marketplaces, auto-install specific plugins, and leave a change history through the source organization and .github-private repository.

This also changes developer experience. When a new engineer joins a repository, the question "which AI agent setup should I use for this project?" can become less frequent. Platform teams can ship internal standards as plugins. Security teams can block risky tool calls with hooks. Data teams can include an internal documentation search MCP server as part of the default configuration. Instead of manually aligning local setup, the developer logs into Copilot CLI and receives the organization's baseline tools.

Central distribution is not automatically good. If a plugin standard becomes too dense, it can block developer experimentation. If internal tools are low quality, the agent may simply repeat stale organizational habits faster. The central question is not "how much can we control?" but "what deserves to become a shared default?" Security policy, pre-release checks, internal API documentation access, and repetitive onboarding tasks are more realistic starting points than trying to standardize every individual workflow.

1. The enterprise owner writes policy in the .github-private repository.
2. extraKnownMarketplaces and enabledPlugins are defined.
3. Users receive approved marketplaces and plugins when they authenticate with Copilot CLI.

Rubber Duck is another piece of the same shift

If the May 6 update is about distribution standards, the May 7 Rubber Duck update is about agent quality control. GitHub announced that Rubber Duck can now call a Claude-based critic agent during GPT orchestrator sessions. In the other direction, Claude orchestrator sessions use GPT-5.5 as the Rubber Duck reviewer model. To try it, users start copilot and turn on experimental features with /experimental on.
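The pairing described above can be sketched as a small lookup. The function name and family labels are hypothetical. Only the direction of the pairing (a GPT orchestrator with a Claude-based critic, a Claude orchestrator with GPT-5.5 as reviewer) comes from GitHub's announcement, and the specific Claude model is not named here.

```python
def critic_for(orchestrator_family: str) -> str:
    """Pick a reviewer from a different model family than the orchestrator."""
    pairing = {
        "gpt": "claude-based critic",  # exact Claude model is not named in this article
        "claude": "gpt-5.5",           # reviewer GitHub names for Claude-led sessions
    }
    family = orchestrator_family.strip().lower()
    if family not in pairing:
        raise ValueError(f"no cross-model pairing known for {orchestrator_family!r}")
    return pairing[family]
```

Raising on an unknown family, rather than falling back to the same model, keeps the sketch faithful to the premise that the reviewer should come from a different family.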

Rubber Duck was first introduced in an April GitHub blog post as a cross-model review agent. GitHub's framing is clear: when a coding agent plans, implements, and tests, asking the same model to review its own output has limits. It can share the same training biases and the same blind spots. A reviewer from another model family can provide a short, focused list of issues at moments when feedback has high value, such as after planning, after complex implementation, or after test generation.

GitHub said in that earlier evaluation that Claude Sonnet 4.6, paired with GPT-5.4 as the Rubber Duck reviewer, closed 74.7 percent of the performance gap between Sonnet alone and Opus alone. GitHub also said the effect was stronger on harder tasks involving at least three files and more than 70 steps. The May update extends that idea into GPT-centered sessions. In other words, Copilot CLI is moving away from a single-model path and toward an operating model that combines an orchestrator with a critic.
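One plausible reading of "closed 74.7 percent of the gap" is the usual gap-closure ratio. The symbols below are ours, not GitHub's, and the underlying benchmark scores are not given here, so none are filled in.

```latex
\text{gap closed}
  = \frac{S_{\text{Sonnet + Rubber Duck}} - S_{\text{Sonnet}}}
         {S_{\text{Opus}} - S_{\text{Sonnet}}}
  \approx 0.747
```

Under that reading, a value of 1 would mean the Sonnet-plus-critic setup matches standalone Opus, and a value of 0 would mean the critic added nothing.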

That is where plugin standards and Rubber Duck connect. Enterprise plugins ask which tools and procedures should be distributed as defaults. Rubber Duck asks when an agent's plan or implementation should be reviewed from a different model perspective. Both are less about advertising model performance and more about operating structure. Once AI coding tools are used at team scale, a repeatable guardrail can matter more than a single impressive answer.

The competition has moved outside the IDE screen

AI coding tools are often compared through Cursor, Claude Code, Codex, and Copilot as product experiences. The usual questions are model quality, editor speed, SWE-bench results, diff generation, and pull-request slicing. GitHub's update shows that the battlefield is widening beyond the IDE screen.

Enterprises cannot manage AI coding tools as simple apps handed to developers one by one. Internal package registries, private API documentation, security scanners, cloud credentials, Jira, Linear, ServiceNow, code ownership rules, and deployment approval processes all become part of the agent loop. As AI agents enter the real development process, it matters who approves those connections, who updates them, and who can audit the history.

GitHub has a distinctive position here. It already owns repositories, Actions, secrets, code review, organization policy, audit logs, and enterprise controls for many teams. Copilot CLI plugin standards add local agent-extension distribution on top of that platform. If Cursor or Claude Code can emphasize powerful individual and team workflows, GitHub can emphasize an agent standard that enterprise administrators distribute and track.

That does not mean GitHub's approach automatically wins. This is public preview, so the configuration format may change. The plugin ecosystem also has to prove that it can become rich enough to support real internal standards. A .github-private repository-based policy is natural for organizations centered on GitHub, but it may become one more management surface for teams that use multiple code hosts and multiple AI tools. Managed standards are powerful, but they can also bind operating knowledge to a specific platform.

What development teams should check now

For development teams, this news translates into three practical checks.

First, if you are experimenting with Copilot CLI, separate personal settings from team standards early. When arbitrary personal MCP servers and approved internal MCP servers are mixed together, permission incidents become harder to investigate later.
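A minimal sketch of that separation: compare the MCP servers configured on a workstation with an approved list and report the personal ones. The server names, URLs, and the flat name-to-URL mapping are hypothetical. Real configuration files will have a different shape.

```python
def split_mcp_servers(
    configured: dict[str, str], approved: set[str]
) -> dict[str, list[str]]:
    """Group configured MCP server names into approved and personal buckets."""
    result: dict[str, list[str]] = {"approved": [], "personal": []}
    for name in sorted(configured):
        bucket = "approved" if name in approved else "personal"
        result[bucket].append(name)
    return result


# Hypothetical example data.
configured_servers = {
    "internal-docs": "https://mcp.example.internal/docs",
    "scratch-db": "http://localhost:8811",
}
approved_servers = {"internal-docs", "deploy-diagnostics"}

report = split_mcp_servers(configured_servers, approved_servers)
print(report)
```

Keeping the two buckets visible makes it easier to answer, after an incident, whether an agent reached a system through an approved server or a personal one.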

Second, choose shared plugins based on repeatability and risk management before convenience. Good candidates include internal test commands, release-note generation rules, security-review hooks, and internal documentation search MCP servers. The most useful shared default is often the boring step that every team member should perform the same way.

Third, decide where cross-model review belongs before turning it on everywhere. GitHub describes Rubber Duck as being used sparingly at high-signal moments, not after every action. High-risk migrations, multi-file refactors, security-sensitive changes, and test-coverage generation are more natural starting points than routine edits.
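One way to encode "sparingly, at high-signal moments" is an explicit gate. The file and step thresholds echo the harder-task description from GitHub's earlier evaluation (at least three files, more than 70 steps). Using them as triggers, along with the ChangeSummary fields, is our assumption rather than documented Rubber Duck behavior.

```python
from dataclasses import dataclass


@dataclass
class ChangeSummary:
    """Hypothetical summary of an agent task, used only for this sketch."""
    files_touched: int
    agent_steps: int
    security_sensitive: bool = False
    is_migration: bool = False
    generates_tests: bool = False


def should_request_review(change: ChangeSummary) -> bool:
    """Return True when a cross-model review is likely worth its cost."""
    if change.security_sensitive or change.is_migration or change.generates_tests:
        return True
    # Mirrors the "at least three files and more than 70 steps" description.
    return change.files_touched >= 3 and change.agent_steps > 70
```

A team could start with a gate like this, then loosen or tighten it once it has seen how often the critic's feedback actually changes the outcome.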

The next stage of AI coding tools cannot be explained only by longer context windows or faster diff generation. Teams need shared tool standards, critique from other model families, administrator-controlled extension and permission distribution, and a way to leave those changes in repository history. GitHub's Copilot CLI update points clearly in that direction.

The most important shift is that the agent is moving from "a smart terminal for one developer" to "a managed execution surface for the enterprise." That may look like a convenience feature, but it is really about where operational control sits in AI development workflows. The next phase of AI coding competition will likely be decided not only by who attaches the best model, but also by who gives teams the safest and most repeatable way to run agents together.