GPT-5.5 crossed 50%, exposing the real bottleneck in enterprise document agents

GPT-5.5 became the first model to pass 50% on Databricks OfficeQA Pro, showing that enterprise agents still fail on parsing, retrieval, permissions, and orchestration.

- What happened: Databricks is bringing GPT-5.5 into enterprise agent workflows.
  - OpenAI says GPT-5.5 is the first model to pass 50% accuracy on OfficeQA Pro and reduced errors by 46% versus GPT-5.4 in an agent harness.

- Why it matters: The bottleneck for enterprise AI agents is not only reasoning. It is scanned documents, old tables, numeric parsing, and grounded retrieval.

- Deployment path: GPT-5.5 lands inside Databricks AI Unity Gateway, AgentBricks, and Agent Supervisor API.
  - That makes this closer to a production agent route with permissions, evaluation, and subagent coordination than another RAG demo.

- Watch: Crossing 50% is a meaningful step, but the OfficeQA Pro paper still frames enterprise-grade grounded reasoning as an unsolved problem.

OpenAI announced on May 15, 2026 that Databricks is bringing GPT-5.5 to enterprise agent workflows. At first glance, this looks like another partner and customer story. The more important signal is different: the success of enterprise document agents is not decided by general model intelligence alone.

According to OpenAI, GPT-5.5 became the first model to exceed 50% accuracy on Databricks OfficeQA Pro, and in an agent-harness setup it reduced errors by 46% compared with GPT-5.4. OfficeQA Pro is not a simple question-answering benchmark. It asks systems to handle scanned PDFs, old tables, long documents, numeric values, and evidence spread across multiple files. In other words, it resembles the disorder inside real companies.

The number is interesting because 50% does not sound high. Frontier model launches have trained the market to expect 80%, 90%, or claims of expert-level performance. Here, passing 50% is the news. That says something about how rough enterprise document work is, and how easily a production agent can collapse after one small parsing mistake. The Databricks and OpenAI announcement is a stronger-model story, but it is also a story about why enterprise AI agents remain hard.

Why OfficeQA Pro Is Difficult

OfficeQA Pro is a Databricks benchmark released on arXiv on March 9, 2026. The paper describes it as an evaluation of grounded multi-document reasoning over a large, heterogeneous document corpus. The corpus spans roughly a century of U.S. Treasury Bulletins: 89,000 pages containing more than 26 million numeric values. The benchmark poses 133 questions over that corpus.

That design is intentionally uncomfortable. This is not clean JSON from a modern SaaS database. It includes old documents, tables, scan quality issues, shifting layouts, year-to-year formatting differences, units, footnotes, and contextual numeric values. Internal contracts, financial reports, audit documents, scanned invoices, policy PDFs, and old spreadsheet exports often share the same failure modes. A system cannot merely find the relevant page. It has to understand which number appears in which row and column, and why that number matters.

The paper's abstract is sharp about the gap. Models such as Claude Opus 4.6, GPT-5.4, and Gemini 3.1 Pro Preview scored below 5% when relying on parametric knowledge, and below 12% even with web access. Frontier agents given direct access to the document corpus averaged 34.1%. Strong models still break when parsing, retrieval, and analysis paths are weak.

Databricks also reported that giving agents structured document representations from `ai_parse_document` improved average performance by a relative 16.1%. That point matters. The bottleneck is not only that the model does not know the answer. It is also the form in which the document is represented, how tables and numbers are preserved, and what retrieval unit the agent can operate on.
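To make "relative" concrete, the sketch below converts a relative gain into absolute points. The 34.1% baseline is borrowed from the frontier-agent average quoted above purely for illustration. The source does not say the 16.1% gain was measured against that exact figure, so read the result as arithmetic, not as a reported score.

```python
def apply_relative_gain(baseline_pct: float, relative_gain_pct: float) -> float:
    """Return the score (in percent) after applying a relative improvement."""
    return baseline_pct * (1 + relative_gain_pct / 100)


# Assumption: this baseline is illustrative; the paper may pair the 16.1%
# relative gain with a different starting average.
baseline = 34.1
improved = apply_relative_gain(baseline, 16.1)
absolute_gain = improved - baseline

print(f"{baseline:.1f}% -> {improved:.1f}% (+{absolute_gain:.1f} points)")
```

Under that assumption, a 16.1% relative gain is worth roughly five and a half absolute points, which is why the distinction between relative and absolute improvement matters when reading benchmark claims.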

Where GPT-5.5 Improved

OpenAI says GPT-5.5 passed 50% accuracy on OfficeQA Pro and reduced errors by 46% versus GPT-5.4. Databricks researchers also described GPT-5.5 as better at parsing numbers from old documents and scanned PDFs, while making fewer unnecessary search detours. That sounds less like "longer reasoning" and more like a more stable task path.

Small mistakes compound inside enterprise agents. If a model misreads one number from a scanned document, the calculation built on top of that number is wrong. The next search step may then move in the wrong direction, and the final answer can become coherent but false. RAG systems often start the discussion at search quality, but document work has an extraction step before retrieval. If text and tables are badly structured, retrieval is already searching contaminated material.
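A rough way to see the compounding effect is to multiply per-step success rates. This is a simplified sketch that assumes every step is independent and equally reliable, which real agent workflows are not. The 95% figure is hypothetical, not a measurement from the benchmark.

```python
def chain_success(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


# Hypothetical per-step accuracy; not a measured figure.
for n in (1, 5, 10, 20):
    print(f"{n:>2} steps at 95% each -> {chain_success(0.95, n):.1%} end to end")
```

Even with a strong per-step rate, a ten-step workflow under these assumptions finishes cleanly only about 60% of the time, which is one way to read why a single misparsed number is so costly.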

The same applies to unnecessary search detours. When an agent works through several steps, a wrong intermediate search can raise cost, add latency, and mix in bad evidence. Humans make a similar mistake when they latch onto the wrong year or table at the start of an analysis. Agents can repeat that failure faster and at larger scale.

That is why GPT-5.5's OfficeQA Pro result matters beyond the score. It is a test of whether a production agent can complete longer document workflows with less supervision, and whether it can make more reliable judgments from numeric and tabular evidence. The fact that this is being routed into Databricks customer workflows is the practical part of the announcement.

Databricks Is Selling The Path, Not Just The Model

Databricks says GPT-5.5 will be available through AI Unity Gateway and inside workflows customers build with AgentBricks and the Agent Supervisor API. That short description carries a lot of architecture. This is not one model call. It is a path that includes an agent workflow gateway, specialized agents, a supervisor, parsing, retrieval, and execution.

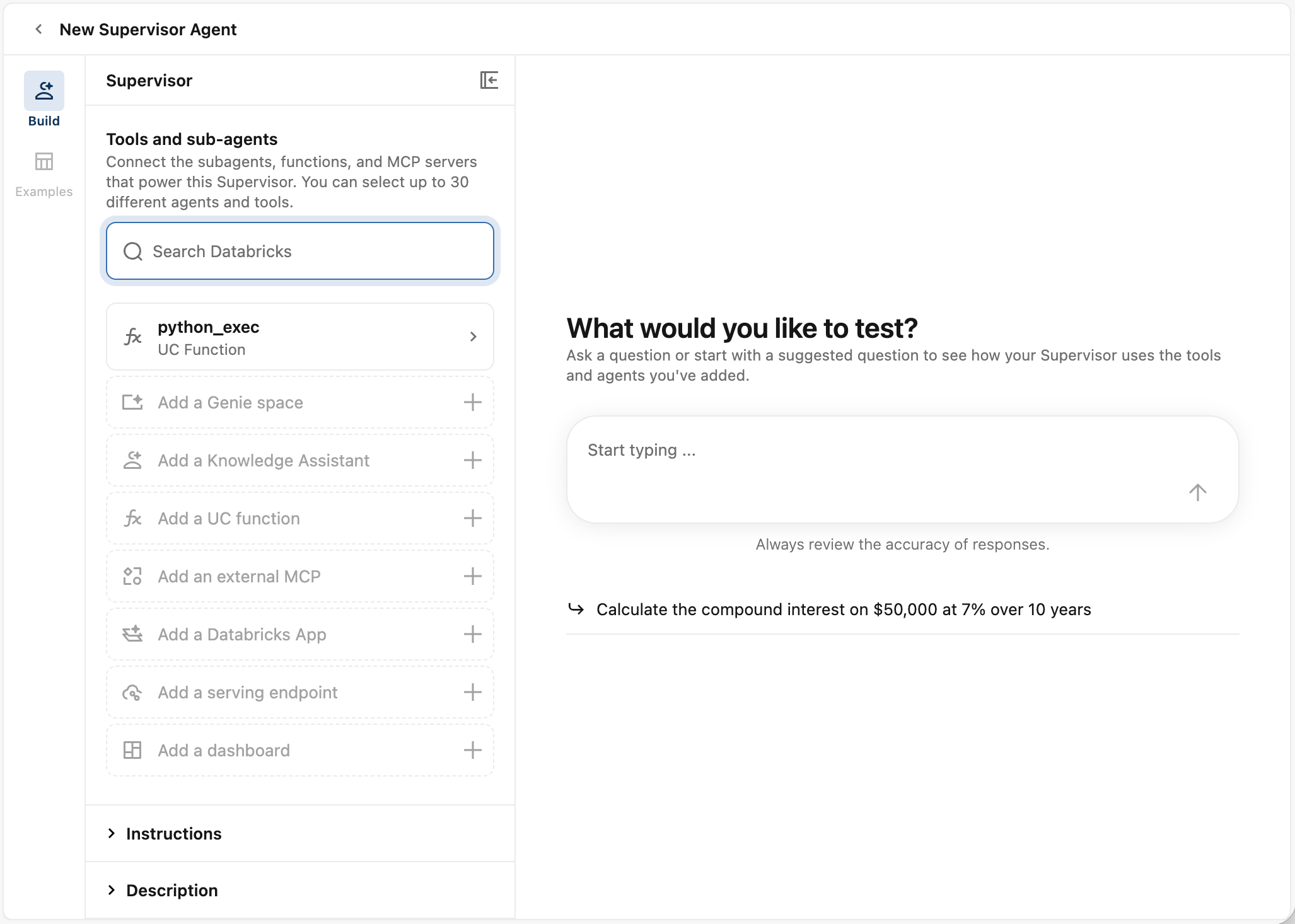

The direction becomes clearer in the Databricks Supervisor Agent documentation. Supervisor Agent coordinates Genie Spaces, agent endpoints, Unity Catalog functions, MCP servers, and custom agents to complete complex tasks. Databricks positions it for use cases such as market analysis, internal process questions, ticket backlog automation, and customer service. Subject-matter experts can also tune quality with natural-language feedback.

The crucial part is permissions. Databricks says the supervisor has built-in access controls and can access only the subagents and data that the end user is allowed to access. If a subagent is a Genie Space, the user needs permission on the underlying Unity Catalog objects. If it is a Unity Catalog function, the user needs EXECUTE. If it is an external MCP server, access is controlled through the relevant Unity Catalog connection permission.
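The permission rule described above can be pictured as a filter that runs before the supervisor ever sees a subagent. This is a minimal sketch, not Databricks code: `Subagent`, `allowed_subagents`, the object names, and every privilege string other than EXECUTE are hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Subagent:
    name: str
    kind: str                # "genie_space", "uc_function", or "mcp_server"
    securable: str           # hypothetical name of the backing object
    required_privilege: str  # privilege the end user must hold on it


def allowed_subagents(subagents, user_grants):
    """Keep only subagents whose backing object the end user may use.

    user_grants maps a securable name to the set of privileges the user holds.
    """
    return [
        agent for agent in subagents
        if agent.required_privilege in user_grants.get(agent.securable, set())
    ]


# Illustrative registry; only EXECUTE is named in the text above.
SUBAGENTS = [
    Subagent("finance_genie", "genie_space", "main.finance.bulletins", "SELECT"),
    Subagent("fx_convert", "uc_function", "main.tools.fx_convert", "EXECUTE"),
    Subagent("ticket_search", "mcp_server", "tickets_connection", "USE CONNECTION"),
]

analyst_grants = {
    "main.finance.bulletins": {"SELECT"},
    "main.tools.fx_convert": {"EXECUTE"},
}

visible = [agent.name for agent in allowed_subagents(SUBAGENTS, analyst_grants)]
print(visible)
```

The design point is that filtering happens per end user, so the same supervisor definition can expose different subagents to different people.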

That is the realistic shape of enterprise agent products. The era of promising that a chatbot will "search all company data" cannot last. Real companies care about job roles, teams, regions, security levels, customer-data access, and audit logs. If an agent coordinates multiple subagents and tools, each subagent's data access and execution rights also need to be separated. Databricks is pulling that problem into the management layer around Unity Catalog, AI Gateway, and AgentBricks.

The Problem After RAG Is Orchestration

For the past two years, RAG has been the default enterprise AI answer. Vectorize the documents, retrieve relevant chunks when a question arrives, and send those chunks to a model with citations. That pattern remains useful. But OfficeQA Pro-style tasks are not solved by a single retrieval call. One question can require multiple documents, multiple tables, calculations, and intermediate checks.

Imagine a question asking whether a financial metric changed alongside a specific policy change. The system has to find the relevant documents, extract values from tables, align units across years, handle missing values, locate policy passages, connect the evidence, and explain the final calculation. That is closer to an agent workflow than a search-and-answer loop.
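Two of those steps, aligning units across years and handling missing values, are easy to get wrong silently. The sketch below shows one way to make them explicit. The `Observation` type, the unit labels, and the sample values are invented for illustration and do not come from the benchmark.

```python
from dataclasses import dataclass
from typing import Optional

# Multipliers for the unit labels used in this sketch.
UNIT_FACTORS = {"thousands": 1_000, "millions": 1_000_000, "billions": 1_000_000_000}


@dataclass(frozen=True)
class Observation:
    year: int
    value: Optional[float]  # None marks a value missing from the source table
    unit: str


def to_base_units(obs: Observation) -> Optional[float]:
    """Convert a reported value to base units, passing missing values through."""
    if obs.value is None:
        return None
    return obs.value * UNIT_FACTORS[obs.unit]


def year_over_year_change(series):
    """Return {year: change vs. the previous observation}, None if either is missing."""
    ordered = sorted(series, key=lambda o: o.year)
    changes = {}
    for prev, curr in zip(ordered, ordered[1:]):
        a, b = to_base_units(prev), to_base_units(curr)
        changes[curr.year] = None if a is None or b is None else b - a
    return changes


# Made-up series: the reporting unit changes between years, and one year is missing.
series = [
    Observation(1950, 1500.0, "millions"),
    Observation(1951, 2.0, "billions"),
    Observation(1952, None, "millions"),
]
changes = year_over_year_change(series)
print(changes)
```

Returning `None` instead of guessing keeps a missing value visible to later steps and to a human reviewer.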

Databricks' emphasis on Supervisor Agent fits that shape. Instead of one giant agent doing everything, a supervisor can coordinate a document-question agent, a structured-data agent, Unity Catalog functions, MCP servers, and custom agents. The supervisor decides which tool to call, when to call it, and how to synthesize the result. GPT-5.5 becomes both a stronger reasoning model and an orchestration model inside that flow.

| Bottleneck | How it appears in OfficeQA Pro | Design implication |
|---|---|---|
| Document parsing | Errors extracting numbers from scanned PDFs and old tables | Evaluate OCR, table representation, and structured document formats |
| Search path | Irrelevant search detours and weak evidence selection | Track retrieval units, reranking, and tool-call trajectories |
| Numeric reasoning | One wrong value can corrupt later calculations and conclusions | Use calculation tools, intermediate checks, and preserved table coordinates |
| Permission routing | An agent cannot assume it may see every document and tool | Enforce Unity Catalog permissions, subagent access, and MCP connection controls |

The Gap Between Benchmark Scores And Product Trust

Passing 50% is a milestone, but it is also a warning. It means many questions are still wrong. The OfficeQA Pro paper itself says there is significant headroom before agents can be trusted for enterprise-grade grounded reasoning. Reading this announcement as "enterprise document automation is solved" would be a mistake.

In practice, 50% accuracy means very different things depending on the workflow. It may be useful for internal research drafts, document exploration, numeric candidate extraction, or audit preparation. It is not enough for final financial reporting, regulatory submissions, lending decisions, medical or insurance claims, or tax judgment. The important question is not only whether the model produced an answer. It is what evidence and intermediate calculations it left behind, and where a human can verify them.

This is where Databricks' evaluation and governance layer becomes important. AgentBricks points toward domain-specific agents improved with subject-matter expert feedback. MLflow evaluation, Unity Catalog governance, and AI Gateway also fit the same pattern. As models improve, the operational question is less "can we swap in a better model?" and more "what did that model swap do to agent trajectories, permissions, and evaluation results?"

OpenAI's 46% error-reduction claim needs the same treatment. It is meaningful, but product teams need to know which errors fell. Was it numeric parsing, document search, multi-step orchestration, or final answer synthesis? Each category demands a different fix. Production teams may care more about error taxonomy and reproducibility than about a single aggregate score.
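One way a team might answer "which errors fell" on its own evaluation set is to label each failure and compare counts between model versions. This is a minimal sketch: the category names mirror the four listed above, and the failure labels are invented sample data, not results from OpenAI or Databricks.

```python
from collections import Counter

CATEGORIES = ("numeric_parsing", "document_search", "orchestration", "answer_synthesis")


def error_breakdown(failure_labels):
    """Count failures per category, rejecting labels outside the taxonomy."""
    counts = Counter(failure_labels)
    unknown = sorted(set(counts) - set(CATEGORIES))
    if unknown:
        raise ValueError(f"labels outside the taxonomy: {unknown}")
    return {category: counts[category] for category in CATEGORIES}


def largest_drop(before, after):
    """Return the category whose failure count fell the most."""
    return max(CATEGORIES, key=lambda c: before[c] - after[c])


# Invented labels for two hypothetical evaluation runs.
old_run = ["numeric_parsing"] * 10 + ["document_search"] * 6 + ["orchestration"] * 4
new_run = ["numeric_parsing"] * 4 + ["document_search"] * 5 + ["orchestration"] * 3

before, after = error_breakdown(old_run), error_breakdown(new_run)
print(before)
print(after)
print("largest drop:", largest_drop(before, after))
```

A breakdown like this tells a team whether to invest next in parsing, retrieval, or orchestration, which an aggregate score cannot.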

For Developers, This Is A Document Pipeline Story

For developers, the announcement should not end at model selection. Any team building an enterprise document agent has to inspect the document pipeline first. Which OCR system handles PDFs? Does the table representation preserve row and column meaning? How are low-quality scans marked? How are units, footnotes, and page metadata retained?

Many RAG failures happen because the input representation is weak, not because the model is weak. If a table collapses into line-by-line text, page numbers and section headers disappear, repeated headers are dropped, or currency units are stripped, even a strong model starts guessing. OfficeQA Pro is useful because it evaluates this messy reality directly.
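The difference between a flattened table and a structured one can be shown in a few lines. The sketch below is illustrative only: the `Cell` type and the table contents are made up, and it does not represent the output format of any particular parser.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cell:
    page: int
    row_label: str
    column_label: str
    value: float
    unit: str


def cells_from_table(page, unit, header, rows):
    """Expand a parsed table into cells that keep row, column, unit, and page."""
    cells = []
    for row_label, values in rows:
        if len(values) != len(header):
            raise ValueError(
                f"row {row_label!r} has {len(values)} values, expected {len(header)}"
            )
        for column_label, value in zip(header, values):
            cells.append(Cell(page, row_label, column_label, value, unit))
    return cells


def lookup(cells, row_label, column_label):
    """Return the single cell at a row/column coordinate, or raise if ambiguous."""
    matches = [
        c for c in cells
        if c.row_label == row_label and c.column_label == column_label
    ]
    if len(matches) != 1:
        raise LookupError(f"expected one cell, found {len(matches)}")
    return matches[0]


# Made-up table. Flattened, it reads "Receipts 410 455 Expenditures 390 470",
# which no longer says which number belongs to which year or unit.
cells = cells_from_table(
    page=12,
    unit="millions of dollars",
    header=["1950", "1951"],
    rows=[("Receipts", [410.0, 455.0]), ("Expenditures", [390.0, 470.0])],
)
cell = lookup(cells, "Expenditures", "1951")
print(cell)
```

With coordinates, unit, and page attached to every value, an agent can cite exactly where a number came from instead of inferring it from word order.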

The second area is tool trajectory. Teams need logs showing which document the agent searched first, which subagent it called, what intermediate values it calculated, and when it changed direction. If a wrong answer is treated only as "the model failed," there is little to fix. The failure may sit in parsing, retrieval, delegation, or the absence of a calculation tool.
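A trajectory log does not need to be elaborate to be useful. The following sketch records the four kinds of events mentioned above. The class names, step kinds, and sample entries are assumptions made for illustration, not the schema of any Databricks or MLflow tracing feature.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Step:
    kind: str    # "search", "delegate", "calculate", or "replan"
    target: str  # document, subagent, or operation the step touched
    detail: dict = field(default_factory=dict)


@dataclass
class Trajectory:
    question: str
    steps: list = field(default_factory=list)

    def record(self, kind, target, **detail):
        """Append one step in the order the agent took it."""
        self.steps.append(Step(kind, target, detail))

    def first(self, kind):
        """Return the earliest step of a given kind, or None."""
        return next((s for s in self.steps if s.kind == kind), None)

    def to_json(self):
        return json.dumps(asdict(self), indent=2)


# Invented example run.
trace = Trajectory("How did receipts change from 1950 to 1951?")
trace.record("search", "bulletin_1950.pdf", query="total receipts 1950")
trace.record("delegate", "table_agent", reason="value sits in a scanned table")
trace.record("calculate", "difference", inputs=[455.0, 410.0], result=45.0)
trace.record("replan", "search", reason="first table used the wrong fiscal year")

print(trace.first("search").target)
print(trace.to_json())
```

Because each step is stored in order with its inputs, a wrong answer can be traced back to the first step that went off course.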

The third area is permission-aware retrieval. An enterprise document agent must not bypass data access controls. If it cites evidence from a document the user cannot see, that is a security incident. If permissions are too narrow, the agent lacks context and gives brittle answers. Databricks' emphasis on end-user permissions for each subagent exists for exactly this reason.
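The key ordering decision is to filter by access before ranking, so that restricted text never reaches the model or the citation list. Here is a toy sketch of that ordering. The chunk fields, group names, and term-overlap scoring are all stand-ins for a real retriever and a real access-control system.

```python
def retrieve(query, chunks, user_groups, k=3):
    """Return ids of the top-k chunks the user may see, ranked by term overlap."""
    terms = set(query.lower().split())

    # Filter first: chunks the user cannot access are never scored or returned.
    visible = [c for c in chunks if c["allowed_groups"] & user_groups]

    # Toy relevance score: number of query terms that appear in the chunk text.
    scored = [(len(terms & set(c["text"].lower().split())), c) for c in visible]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [c["id"] for _, c in ranked[:k]]


# Invented corpus with per-chunk access groups.
CHUNKS = [
    {"id": "audit-2024-p3", "text": "Audit findings on vendor payments",
     "allowed_groups": {"audit"}},
    {"id": "policy-travel-p1", "text": "Travel policy for vendor payments and reimbursements",
     "allowed_groups": {"all_staff"}},
    {"id": "payroll-q2-p9", "text": "Payroll payments by region",
     "allowed_groups": {"hr"}},
]

staff_view = retrieve("vendor payments", CHUNKS, {"all_staff"})
auditor_view = retrieve("vendor payments", CHUNKS, {"all_staff", "audit"})
print(staff_view)
print(auditor_view)
```

The same question returns different evidence for different users, which is the behavior the paragraph above asks for: no leaked citations, and no artificially narrow context for users who do have access.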

For AI Teams, This Is A Control Plane Story

For AI platform teams, the larger question is the control plane. Models like GPT-5.5 will keep changing. Claude, Gemini, Mistral, and open-weight models will each have different strengths. But if enterprise workflows are coupled to a raw model call, model replacement and evaluation become painful. Teams need an AI gateway, an agent registry, evaluation, permissions, logging, and feedback loops.

Databricks is trying to solve that layer on top of its data platform. Unity Catalog manages data and function permissions. AI Unity Gateway controls the model access path. AgentBricks and Supervisor Agent coordinate subagents and tools. That structure is competing with moves from Snowflake Cortex AI, Microsoft Fabric and Copilot, Salesforce Agentforce, and ServiceNow AI Agent Orchestrator. The common question is no longer only which model to call. It is how to operate agents inside enterprise data and permission boundaries.

OpenAI's role also changes in this picture. OpenAI is pushing ChatGPT and Codex as direct products, but inside Databricks it also becomes a model supplier and reasoning layer. Customers can use GPT-5.5 inside Databricks' data, governance, and agent-workflow surface. That lets OpenAI participate in enterprise workflows without owning every enterprise workflow UI.

Databricks, meanwhile, cannot put all of its value on one model. Enterprise customers want to compare OpenAI, Anthropic, Google, and open models. The durable value is therefore not just "GPT-5.5 is available." It is whether Databricks can evaluate any model in enterprise document workflows, deploy it under permission controls, and coordinate it through subagents.

Why The Community Reaction Was Quiet

This announcement did not create the kind of explosive developer-community debate that a flashy demo can generate. I did not find a large standalone Hacker News thread about the Databricks announcement. GeekNews had previously summarized GPT-5.5, including the OfficeQA Pro numbers. Reddit agent discussions were more cautious, asking whether GPT-5.5's internal agent benchmarks translate to high-stakes work, and whether observability and human-in-the-loop controls are mature enough.

The quiet reaction makes sense. Databricks customer workflows and OfficeQA Pro are boring in the way real enterprise software is boring. Scanned PDFs, old tables, permissions, evaluation, and subagent orchestration are hard to show in a short video. But these are exactly the issues that decide whether enterprise AI deployments work.

The AI agent market now seems split across two layers. One layer is visible to individual users: Codex mobile, the Copilot app, Claude Code, and similar work surfaces. The other layer sits inside organizational operating systems: data, permissions, workflow, observability, and control planes from Databricks, Snowflake, Salesforce, ServiceNow, Microsoft, and Google. This announcement belongs to the second layer.

What Teams Should Check Now

First, if you are building a document agent, revisit your benchmark. General QA accuracy and LLM-judge scores are not enough. Include scanned documents, old forms, tables, footnotes, unit conversions, multi-document comparison, and missing values. OfficeQA Pro may not match your domain directly, but its failure patterns are worth borrowing.
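A simple coverage check can show which of those failure patterns an internal benchmark still lacks. This sketch assumes each test case is tagged by hand with the patterns it exercises. The tag names and sample cases are hypothetical.

```python
# Failure patterns taken from the checklist above; the tag spellings are assumptions.
REQUIRED_PATTERNS = frozenset({
    "scanned_document", "old_form", "table", "footnote",
    "unit_conversion", "multi_document", "missing_value",
})


def coverage_gaps(cases):
    """Return the required patterns that no test case exercises, sorted by name."""
    covered = set()
    for case in cases:
        covered |= set(case["patterns"])
    return sorted(REQUIRED_PATTERNS - covered)


# Invented benchmark cases.
cases = [
    {"id": "q1", "patterns": {"scanned_document", "table"}},
    {"id": "q2", "patterns": {"footnote", "unit_conversion"}},
    {"id": "q3", "patterns": {"multi_document"}},
]

gaps = coverage_gaps(cases)
print(gaps)
```

An empty result does not prove the benchmark is good, but a non-empty one is a cheap signal that a known failure mode is going untested.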

Second, do not treat the parsing layer as outside the model problem. OCR, table extraction, layout preservation, metadata, and chunking are part of agent quality. In industries where numbers and tables matter, "the model will read it" is a risky assumption. As models get stronger, they may also make bad input look more plausible.

Third, if you use a supervisor pattern, design permissions and observability early. Decide which subagent can access which data, whether the user has rights to invoke that subagent, whether tool-call results are logged, and where a human can verify intermediate values. Databricks also warns that arbitrary-code tools can create sensitive-information exposure risks, which should shape deployment choices.

Fourth, treat every model swap as an evaluation event. Even if GPT-5.5 is better than GPT-5.4 in the announced benchmark, your internal documents and workflows may behave differently. Measure whether search detours fall, whether tool calls increase, how cost changes, whether hallucinations drop, and whether sensitive-data access patterns shift.
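Treating a swap as an evaluation event can start with a per-metric comparison on the same question set. The sketch below covers accuracy, search calls, tool calls, and cost. The metric names and the per-question records are invented, and hallucination or sensitive-data checks would need their own labeled signals.

```python
from statistics import mean

METRICS = ("correct", "search_calls", "tool_calls", "cost_usd")


def summarize(run):
    """Average each metric over the per-question records of one run."""
    return {metric: mean(record[metric] for record in run) for metric in METRICS}


def compare(baseline_run, candidate_run):
    """Return candidate minus baseline for every metric."""
    base, cand = summarize(baseline_run), summarize(candidate_run)
    return {metric: cand[metric] - base[metric] for metric in METRICS}


# Invented records: one dict per question, same questions in both runs.
baseline_run = [
    {"correct": 0, "search_calls": 6, "tool_calls": 8, "cost_usd": 0.04},
    {"correct": 1, "search_calls": 4, "tool_calls": 6, "cost_usd": 0.06},
]
candidate_run = [
    {"correct": 1, "search_calls": 3, "tool_calls": 7, "cost_usd": 0.05},
    {"correct": 1, "search_calls": 3, "tool_calls": 9, "cost_usd": 0.07},
]

deltas = compare(baseline_run, candidate_run)
for metric, delta in deltas.items():
    print(f"{metric:>12}: {delta:+.2f}")
```

In this made-up example accuracy rises and search detours fall, but tool calls and cost go up, which is exactly the kind of trade-off an aggregate benchmark score hides.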

What The 50% Wall Says

GPT-5.5 crossing 50% on OfficeQA Pro offers optimism and caution at the same time. The optimistic read is that models and agent harnesses are improving on messy enterprise document reasoning. The cautious read is that the score is still only around the halfway point.

That number captures where AI agents are right now. In demos, many tasks already look possible. In production, scan quality, old tables, permissions, search paths, numeric calculations, and auditability all collide. A strong model is necessary. It is not sufficient.

Databricks and OpenAI's announcement is therefore more than a partnership note. It shows where the next performance gains for enterprise AI agents will come from: not only larger models, but better document representations, more accurate retrieval, more transparent tool trajectories, stronger permission models, and more repeatable evaluation loops. GPT-5.5 crossing the 50% wall is a start. The harder work is turning that score into a trustworthy workflow inside real companies.