Cursor enters Teams, and coding agents become teammates

Cursor brought Cloud Agent into Microsoft Teams and tied PR review, parallel builds, and PR splitting into a broader agent operations layer.

- What happened: Cursor announced a Microsoft Teams integration that lets teams invoke Cloud Agent with @Cursor.

- Cursor says the agent can read the full Teams thread, pick the right repository and model, implement the task, and open a PR.

- Related update: Cursor 3.3 added PR review surfaces, plan-based parallel builds, PR splitting, and the /multitask command.

- Why it matters: The coding-agent race is moving from IDE chat into team conversations, work decomposition, and review operations.

- The durable advantage may be less about one model and more about who controls context, permissions, review quality, and spending.

- Watch: The Teams app is useful only if Cursor can make thread context, repo access, PR quality, and team-level cost control predictable.

Cursor announced a Microsoft Teams integration on May 11, 2026. Teams users can now mention @Cursor in a channel to delegate work to Cursor Cloud Agent or bring Cursor information back into the conversation. The feature is easy to describe as another workplace integration, but the product direction is more important than the connector itself. Cursor is moving the coding agent out of the IDE sidebar and into the place where teams actually negotiate requirements, constraints, bugs, and ownership.

The key line is not simply that a user can call Cursor from Teams. Cursor says it uses the prompt and recent agent activity to automatically choose the right repository and model, reads the whole thread for context, implements the change, and creates a PR for the team to review. That means the input to the coding agent is no longer only a carefully rewritten prompt in an editor. It can be a messy team discussion where a product manager describes the desired behavior, a backend engineer adds API constraints, a frontend engineer clarifies UI scope, and QA adds a reproduction path. The output is not just a chat answer. It is a PR that enters the team's review flow.

Viewed alone, the Teams announcement can look small. Viewed next to Cursor 3.3, released on May 7, it becomes part of a larger move. Cursor 3.3 introduced a PR review experience, build-plan parallelism, a quick action for splitting changes into PRs, and the /multitask command. One announcement opens the team conversation surface. The other strengthens the PR surface where software teams make final decisions. The common theme is not only agents. It is operations.

Coding agents do not end inside the IDE

The first wave of AI coding tools competed inside the editor. The questions were familiar: how natural is autocomplete, how well does chat read the repository, how quickly can the model apply a small refactor, and how often does it break nearby code? Cursor became strong in that phase because it brought codebase indexing, inline edits, composer flows, and agent mode into the screen where developers already lived.

As agentic coding grows, the bottleneck moves outside that text box. Real team work is rarely a single file edit. A bug is reproduced in a chat thread, scoped in an issue, constrained by a backend contract, implemented across frontend and server code, turned into a PR, reviewed by several people, revised, checked by CI, and sometimes split into smaller changes before merge. The code generation step matters, but the surrounding workflow is longer.

The Teams integration targets that surrounding workflow. If the agent can be invoked from a team discussion, the task definition can emerge from the conversation itself. The thread may contain the product goal, the rejected alternatives, the security concern, the test case, and the "do not touch this old path" warning. The hard part is selecting useful context from that thread without treating every sentence as a requirement. Still, the direction is clear. The coding agent is shifting from a personal assistant for one developer into an executor that participates in team work.

That puts Cursor in the same broader race as GitHub Copilot, OpenAI Codex, Claude Code, Coder Agents, and other long-running coding systems. Everyone is moving toward background execution, parallel tasks, PR creation, review, and auditability. The difference is the surface. GitHub starts from issues and PRs. OpenAI Codex spans app, CLI, and cloud tasks. Claude Code remains strong in terminal and project context. Cursor began with the IDE, and is now trying to connect that center to Teams and PR review.

Cursor 3.3 turns PR review into a command center

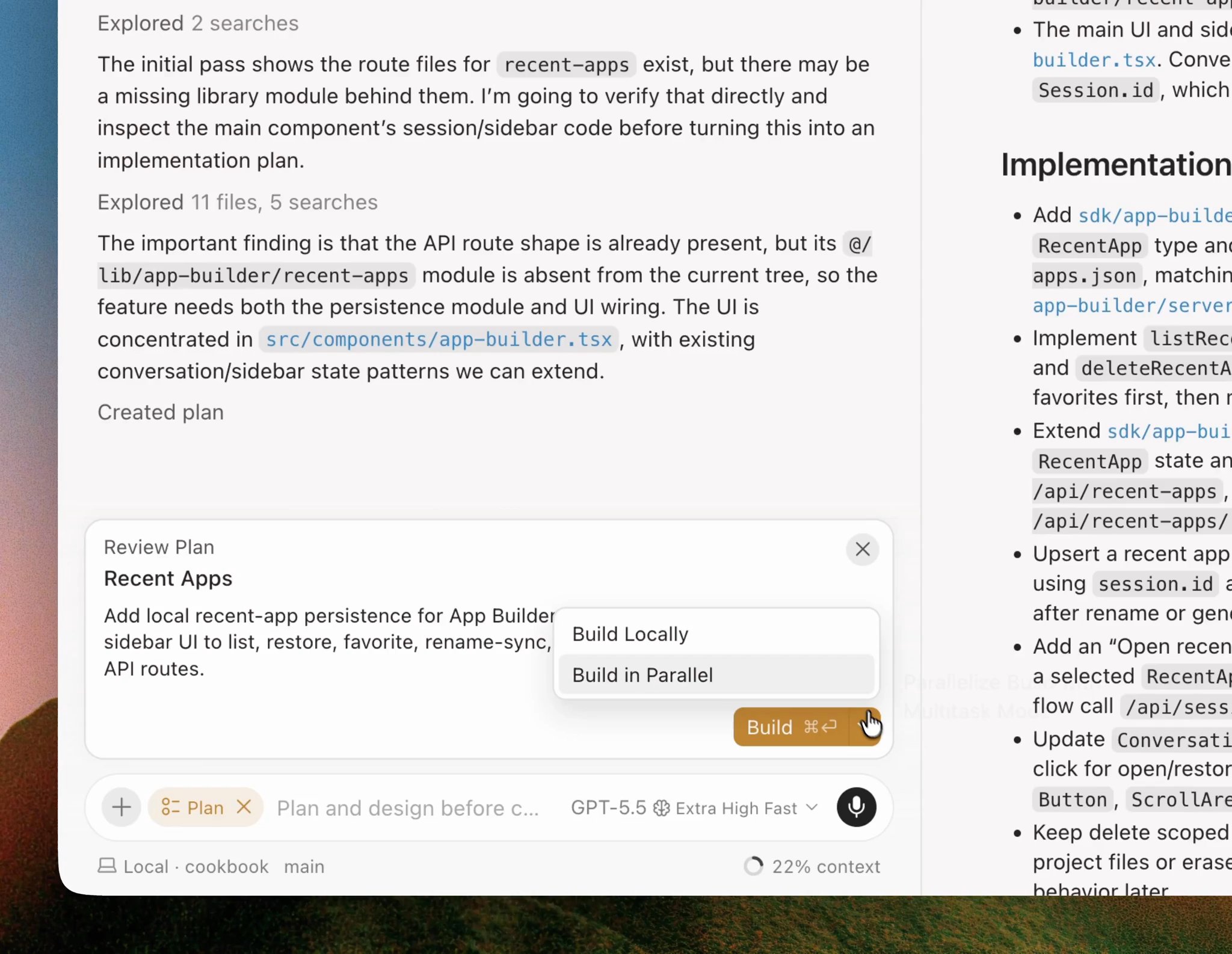

The first major part of Cursor 3.3 is PR review. According to the official changelog, Cursor 3 aims to take a PR from creation through merge in one place. The Reviews tab shows inline review threads and top-level PR comments. The Commits tab focuses on the PR commit history. The Changes tab adds a file tree and change selector for navigating large PRs.

This is not just a clone of the GitHub PR page. When agents write more code, the review surface becomes part of the agent product. A human author usually remembers why a sequence of changes exists because they made the decisions along the way. An agent can modify many files, add tests, adjust docs, and reshape helper code in a few minutes. The human still owns the merge decision, but they may not have watched the work unfold. The PR view becomes the place where generated work has to become legible.

Cursor's mentions of reviewer status, pending review banners, and quick action pills matter for the same reason. In a team setting, "the code exists" is not the finish line. Reviewers need to know who is looking, which comments remain open, what action comes next, whether the PR is too large, and what needs to be verified before merge. As generated PR volume increases, this operational UI becomes as important as raw model quality.

There is also a trust angle. Coding agents reduce the cost of producing diffs, which means they can also increase the cost of understanding diffs. A tool that generates work faster than the team can review it creates a new bottleneck. Cursor's PR review work is an implicit acknowledgement that the last mile of agentic coding is not generation. It is reviewability.

Build in Parallel is a work model, not only a speed feature

The second major part of Cursor 3.3 is Build in Parallel. Cursor says it can identify independent parts of a plan and execute them at the same time with async subagents, while dependent steps still run in order. Inside the editor, the new /multitask command lets users ask for async subagent execution directly.

On the surface, this is a speed feature. Underneath, it changes the work model. The classic AI coding session is one user and one agent carrying a long conversation. The user states a goal, the agent plans, reads files, edits code, runs checks, and iterates. That structure is easy to understand, but it has low parallelism. A larger feature often has loosely coupled pieces: API validation, React form state, tests, docs, migrations, and release notes.

Parallel agents try to handle those pieces at the same time. For example, one agent can inspect the server schema, another can update the frontend form, and a third can add tests and docs. The risk is coordination. Agents can touch the same file, assume different API shapes, duplicate helper logic, or leave integration work for the final merge. That is why Cursor's emphasis on independent parts and ordered dependencies matters. Parallel execution is not magic. It is dependency analysis plus integration review.
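
To make "dependency analysis" concrete, here is a minimal sketch of grouping plan steps into waves that could run concurrently. It is not Cursor's implementation, which is not public; the step names and the `plan_waves` helper are hypothetical, and the only library used is Python's standard `graphlib`.

```python
from graphlib import TopologicalSorter


def plan_waves(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group plan steps into waves.

    `dependencies` maps each step to the steps it must wait for.
    Steps in the same wave have no unmet dependencies, so they are
    candidates for parallel execution. Waves themselves run in order.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves: list[list[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for stable output
        waves.append(ready)
        sorter.done(*ready)  # unblocks steps that waited on this wave
    return waves


# Hypothetical plan: three independent steps, one step that needs two of them.
plan = {
    "server_schema": set(),
    "frontend_form": set(),
    "docs": set(),
    "integration_tests": {"server_schema", "frontend_form"},
}

print(plan_waves(plan))
# [['docs', 'frontend_form', 'server_schema'], ['integration_tests']]
```

The sketch covers only the ordering half of the problem. It says nothing about agents editing the same file or assuming different API shapes, which is why the integration review step still matters.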

In that sense, Build in Parallel is also a management feature. It asks the product to help decide what can be split safely, what must remain sequential, and how the resulting work should be reviewed. Developers already run multiple branches, terminals, worktrees, or background agents manually. Cursor is trying to productize that workflow and make it accessible from the IDE. The tradeoff is obvious: a bad split produces more confusion, faster.

| Flow | Earlier AI coding tools | Cursor May updates |
|---|---|---|
| Task request | Developer rewrites context in IDE chat or an issue | Team calls @Cursor inside a Teams thread |
| Context selection | User chooses repository, model, and relevant files | Prompt and recent agent activity guide repository and model choice |
| Execution | One session handles the plan mostly in sequence | Build in Parallel and /multitask run async subagents |
| Review | Humans inspect generated work mainly in GitHub PRs | Reviews, Commits, and Changes tabs are integrated in Cursor |
| Change slicing | Developers manually split branches, commits, and PRs | A quick action proposes PR splits from chat context |

PR splitting may be the most practical feature

The most practical Cursor 3.3 feature may be Split changes into PRs. Cursor says the quick action uses chat context to find logical slices, defaults to independent PRs unless dependency requires otherwise, creates a backup snapshot, and proposes a split plan.

This addresses a real failure mode in AI-assisted development. Agent-generated changes grow large very quickly. A bug fix can pick up a refactor, a test helper rewrite, a naming cleanup, and a documentation update. The agent may have a valid internal reason for the sequence, but reviewers see a swollen PR with several unrelated intents. The time saved in implementation can disappear in review.

Splitting by chat context is the interesting part. A naive splitter based only on filenames or diff size can miss meaning. A conversation-aware splitter can, in principle, map changes back to goals such as "API contract update," "UI state handling," "test coverage," or "docs." That makes the output closer to how teams actually review and deploy. It still needs human approval, because good PR boundaries depend on release strategy, risk tolerance, and team conventions.
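
As a rough illustration of goal-based slicing, the sketch below groups changes by a goal label and flags files that more than one slice touches. Cursor has not published how its splitter works, so everything here is an assumption: the `Change` type, the goal labels, and both helper functions are hypothetical, and the labels are simply given rather than inferred from a conversation.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    path: str  # file the change touches
    goal: str  # hypothetical label, e.g. taken from the chat context


def propose_split(changes: list[Change]) -> dict[str, list[str]]:
    """Group changed paths into one candidate PR per goal."""
    slices: dict[str, set[str]] = defaultdict(set)
    for change in changes:
        slices[change.goal].add(change.path)
    return {goal: sorted(paths) for goal, paths in slices.items()}


def shared_paths(split: dict[str, list[str]]) -> dict[str, list[str]]:
    """Return paths that appear in more than one slice.

    A shared path suggests the slices are not fully independent and may
    need to be ordered or merged rather than opened as separate PRs.
    """
    owners: dict[str, set[str]] = defaultdict(set)
    for goal, paths in split.items():
        for path in paths:
            owners[path].add(goal)
    return {p: sorted(g) for p, g in owners.items() if len(g) > 1}


changes = [
    Change("api/schema.py", "API contract update"),
    Change("web/form.tsx", "UI state handling"),
    Change("tests/test_form.py", "test coverage"),
    Change("api/schema.py", "test coverage"),  # same file, different goal
]

split = propose_split(changes)
print(shared_paths(split))
# {'api/schema.py': ['API contract update', 'test coverage']}
```

The overlap check is the part a filename-only splitter cannot express: it shows where two otherwise clean slices depend on each other, which is exactly where a human reviewer should confirm the boundary.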

There is an important second-order effect here. If a coding tool makes large diffs easy but small PRs hard, teams may stop trusting the tool for serious work. If it makes reviewable slices easy, agents become more compatible with normal engineering discipline. Cursor seems to understand that agent adoption depends on fitting into the review process, not bypassing it.

Teams creates a direct collision inside Microsoft's territory

Cursor entering Microsoft Teams is also strategically awkward for Microsoft. Teams is a core collaboration surface in Microsoft 365, and Microsoft owns both GitHub Copilot and Microsoft 365 Copilot. A third-party coding agent that can be invoked from a Teams channel is effectively competing for developer AI work inside Microsoft's own enterprise surface.

That does not mean a Teams app automatically becomes an enterprise standard. Large organizations care about SSO, audit logs, data retention, repository permissions, model policy, spend control, security review, and procurement. Cursor has been emphasizing model controls, usage analytics, and spend management in recent changelog updates, which suggests the company knows team adoption is not just a viral product motion. It is a governance question.

The balance is difficult. Cursor has to keep the fast, developer-loved agent UX that made it popular while adding the controls that security, finance, and platform teams require. Recent community discussion around Cursor's team pricing, usage limits, and Bugbot billing shows that the pain is not only about features. As AI coding tools spread from individuals to teams, cost predictability and administrative control become part of the product experience.

The Teams integration sharpens that problem because it lowers the friction to start work. When a tool can be called from a channel, more people can create agent tasks. That is useful, but it also means more execution, more PRs, more token spend, and more permission questions. The product has to make it clear who authorized the task, which repo the agent used, what context it read, and what changed as a result.
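
Those four questions map naturally onto a small audit record. The sketch below is purely illustrative and does not describe any Cursor or Teams API; the `AgentTaskRecord` type, its field names, and the sample values are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AgentTaskRecord:
    """Hypothetical audit record for one agent task started from a channel."""

    requested_by: str                     # who authorized the task
    repository: str                       # which repo the agent used
    context_message_ids: tuple[str, ...]  # what context it read
    changed_paths: tuple[str, ...]        # what changed as a result


def unanswered_questions(record: AgentTaskRecord) -> list[str]:
    """Return the names of fields that are empty, in declaration order."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


record = AgentTaskRecord(
    requested_by="alice@example.com",
    repository="example-org/checkout",
    context_message_ids=(),  # the thread context was not captured
    changed_paths=("api/schema.py",),
)

print(unanswered_questions(record))
# ['context_message_ids']
```

A record like this is only useful if it is written at the moment the task starts, not reconstructed afterwards from the PR.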

The agent operations layer is becoming the battleground

The best way to describe Cursor's May updates is that the company is moving toward an agent operations layer. This layer governs where agents are invoked, what context they read, which permissions they have, how they split work, how they produce PRs, who reviews them, and how much they cost. As models become stronger and more interchangeable, this layer becomes more valuable.

Model performance alone may converge quickly. Most coding products can offer a rotating menu of OpenAI, Anthropic, Google, xAI, and internal models. Users will pick based on task, latency, price, and preference. Team workflows are harder to copy. Integrations, review surfaces, audit trails, background execution, dependency splitting, and spending controls create switching costs that are not captured by benchmark scores.

Cursor's advantage is that it started in the place where developers write and inspect code. Its challenge is that the IDE is not the whole software delivery system. Teams define work in chat and issue trackers. They review in PRs. They verify in CI. They release through deployment pipelines. Cursor's Teams integration, PR review surface, Build in Parallel, and PR splitting are attempts to cover more of that flow.

This also explains why the update should not be reduced to "Cursor added Teams." The more meaningful signal is that coding agents are being operationalized. They are being placed inside shared communication channels, given mechanisms for parallel work, asked to produce reviewable PRs, and wrapped with more team controls. That is how a personal productivity tool starts becoming team infrastructure.

What still needs proof

The first unresolved question is context quality. Reading a full Teams thread is convenient, but a thread can contain old decisions, jokes, speculative suggestions, security-sensitive details, and opinions that were never agreed upon. The agent has to distinguish requirements from noise. Users also need a way to inspect what context the agent treated as important.

The second question is permissions. If one person in a Teams thread can mention @Cursor, what exactly determines the repositories, branches, and secrets the agent can touch? A user may have access to a repo, but that does not mean everyone in the channel should see the same output. Collaboration integrations improve productivity, but they can blur access boundaries if the product does not make authority explicit.

The third question is PR quality. Making PR creation easy can increase review burden. Cursor's PR review screen and PR splitting feature are direct attempts to reduce that burden, but the final responsibility still sits with the team. Generated code needs small change units, sufficient tests, clear descriptions, and reproducible verification. The fact that an agent can open a PR does not mean the PR is mergeable.

The final question is cost. Parallel agents, Teams invocations, PR review assistance, Bugbot, and Cloud Agent time all become part of the team's operating budget. That is why spend management and usage analytics are not secondary admin features. They are the difference between an agent workflow that teams can normalize and one that creates monthly surprises.

Conclusion

Cursor's Microsoft Teams integration and Cursor 3.3 PR command center point in the same direction. Coding agents are no longer just helpers that individual developers call inside an IDE. They are becoming participants in team conversations, executors of parallel work, generators of PRs, and objects of review, permissioning, and budget control.

For that shift to work, the model's coding ability is not enough. Teams need to trust the context the agent read, understand the permissions it used, manage the cost of repeated execution, and review generated changes in small, coherent units. Cursor's latest updates are product answers to those questions. The real story is not the arrival of a Teams app. It is the expansion of the coding-agent race from the IDE into the operating layer of software teams.