Cursor Cloud Agents Expose the New Bottleneck in Dev Environments

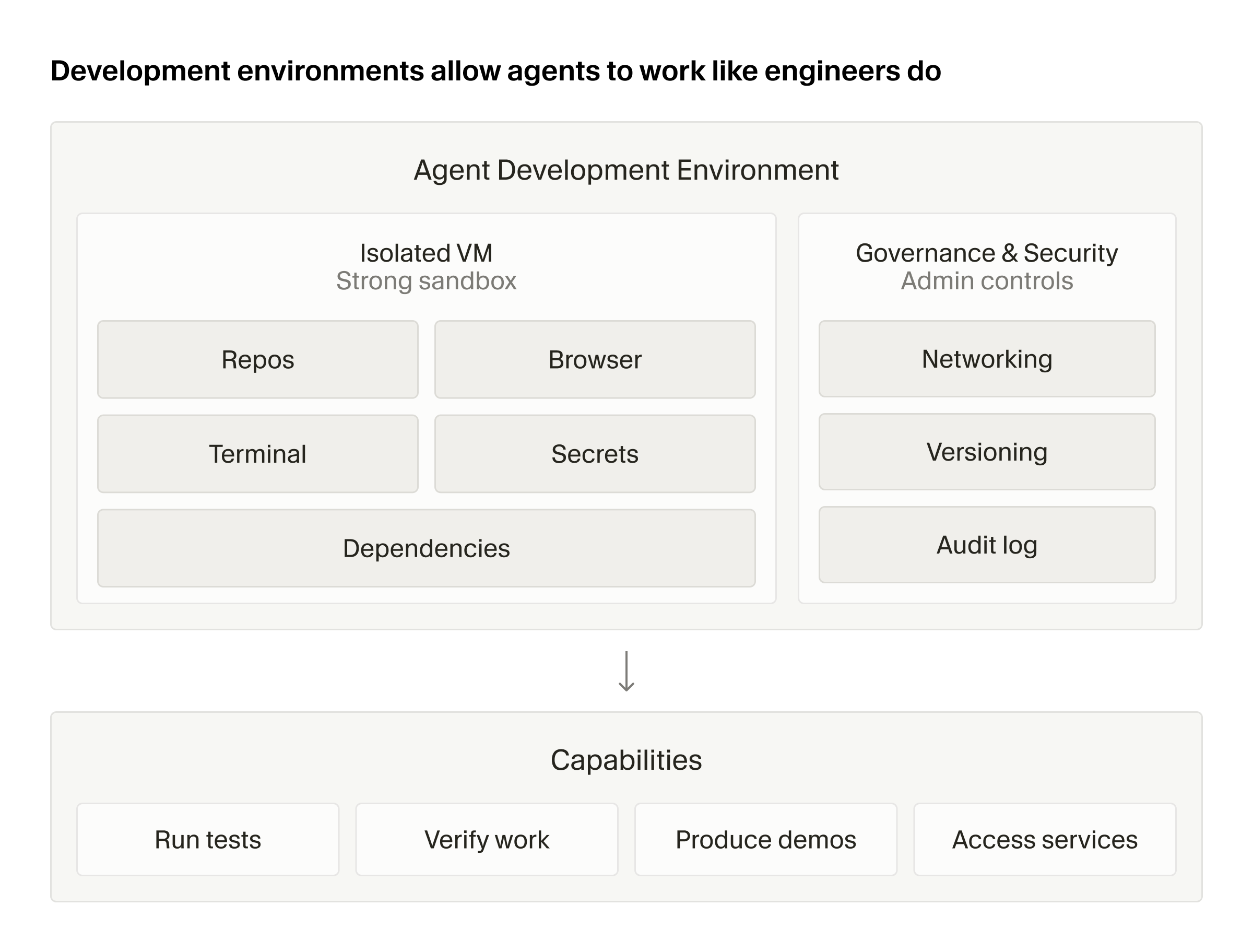

Cursor introduced cloud agent development environments. The coding-agent race is moving from model quality to multi-repo workspaces, secrets, audit logs, and egress control.

- What happened: Cursor introduced cloud agent development environments.

- The core pieces are multi-repo environments, Dockerfile-based setup, build secrets, audit logs, and environment-scoped egress and secret controls.

- Why it matters: The coding-agent bottleneck is shifting from raw model capability to reproducible execution environments.

- Practical impact: Teams can give agents more repositories and permissions, but they need audit trails and enforcement points at the environment level.

- If agents are expected to run tests and builds end to end, internal packages, credentials, and network policy all have to live inside the same workspace boundary.

- Watch: As environments become more powerful, agent failures become platform operations problems, not just code errors.

Cursor introduced cloud agent development environments on May 13, 2026. At first glance, it looks like another configuration feature for cloud coding agents. But the details make the direction of the AI coding market much clearer.

The coding-agent race is no longer explained only by which model writes better code. Claude Code, Codex, GitHub Copilot, Cursor, and xAI Grok Build all claim some combination of file editing, command execution, test running, and PR creation. The next question is more operational. Which repositories can the agent see? Can it reach the internal package registry? How does it receive test credentials? Which external network paths are open? Who rolls back the environment when it breaks?

Cursor's announcement targets exactly those questions. The company wants to give cloud agents something closer to a managed developer laptop, then let teams version, audit, and segment that workspace with secret and egress boundaries. AI coding is moving down from the editor UI into the development platform layer.

Cloud Agents Are Incomplete Without an Environment

Cursor's post starts with the advantages of cloud agents. Compared with local agents, they are easier to parallelize, they can continue while a laptop is closed, and they can be triggered programmatically. That fits the direction Cursor has been pushing with Automations, Teams integration, PR review, and parallel plan execution.

The more important sentence comes immediately after that: agents are only as capable as the environments they run in. An agent may be able to write code, but if it cannot run tests, query internal services, or access the APIs it needs, it cannot close the work loop. For an agent to say "done" in a way a team can trust, it needs conditions that look much more like a human developer's local setup. The repositories must be cloned, dependencies installed, and internal toolchain credentials and build systems available.

This is where AI coding tools become real operational systems. In a demo, changing a few files can be enough. Team software is different. A single service change may touch an API schema, a frontend client, a shared type package, infrastructure configuration, and test fixtures at the same time. If the agent is trapped inside one repository, it loses context. If every repository and secret is opened at once, the security team has a new problem. The missing piece is not always a stronger model. It is a clearer workplace.

Multi-Repo Environments Widen the Agent's Field of View

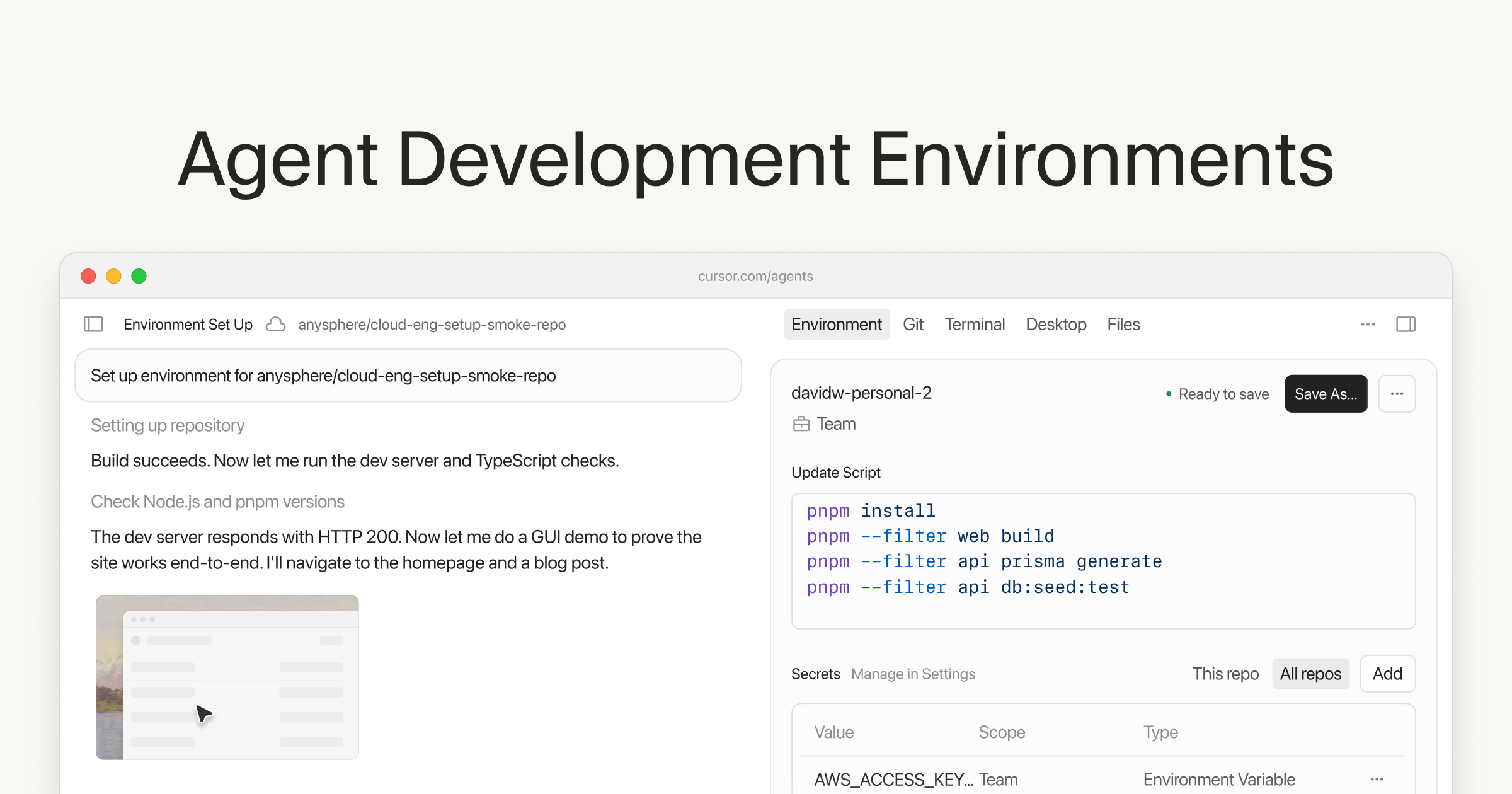

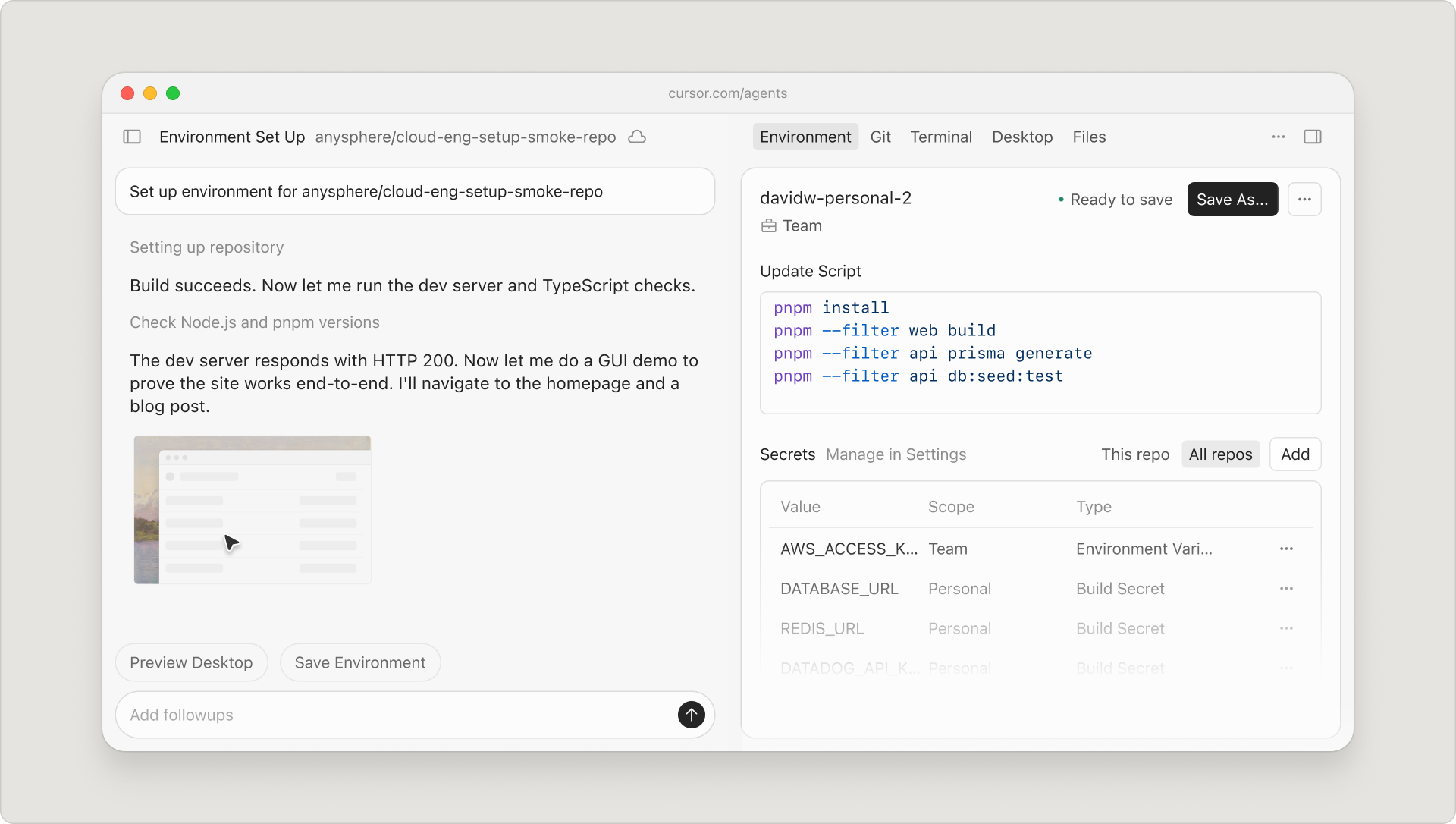

The first change is multi-repo environments. Cursor Cloud Agent and Automations can now put multiple repositories into one environment and reuse that environment across sessions. Cursor frames this as an extension of existing multi-root workspaces.

The importance is straightforward. Enterprise software usually does not live in a single repository. There is a backend service, a frontend app, an SDK, shared type packages, and infrastructure code. If an agent can only work in one repo, it is more likely to produce a change that compiles locally while breaking the actual system.

Cursor's announcement includes an Amplitude example. Amplitude says it runs Cursor Automations from a public Slack channel, and multi-repo support lets the agent investigate a reported issue, determine which repository should be changed, and open a PR in the right place. That is still a vendor case study, but the shape of the problem is realistic. For coding agents to take on team-level work, they need to understand not just "which file should I edit?" but "which combination of repositories defines the boundary of this problem?"

Multi-repo environments are therefore both a context feature and a responsibility feature. Administrators choose which repositories belong in each environment, and agents operate inside that environment. Designed well, the agent's field of view gets wider. Designed poorly, unrelated repositories and permissions collect in one place. The platform team's job becomes less about showing agents everything and more about defining the smallest useful world for each class of work.
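To make "the smallest useful world" concrete, here is a minimal sketch. It assumes a platform team keeps a list of environment definitions, each naming the repositories it clones, and routes every task to the narrowest environment that still covers it. `EnvironmentSpec`, `pick_environment`, and all repository and environment names are hypothetical; this is not Cursor's configuration format.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class EnvironmentSpec:
    """Hypothetical agent environment: a name plus the repositories it clones."""
    name: str
    repos: frozenset[str]


def pick_environment(needed: set[str], envs: list[EnvironmentSpec]) -> EnvironmentSpec | None:
    """Return the smallest environment whose repo set covers every repo the task needs."""
    candidates = [env for env in envs if needed <= env.repos]
    if not candidates:
        # Nothing covers the task: escalate to a human rather than widening an environment.
        return None
    return min(candidates, key=lambda env: len(env.repos))


# Usage with made-up repository and environment names.
ENVS = [
    EnvironmentSpec("checkout-fullstack", frozenset({"checkout-api", "web-app", "shared-types"})),
    EnvironmentSpec("checkout-api-only", frozenset({"checkout-api", "shared-types"})),
    EnvironmentSpec("everything", frozenset({"checkout-api", "web-app", "shared-types", "infra", "billing"})),
]
api_fix = pick_environment({"checkout-api"}, ENVS)
ui_and_api_fix = pick_environment({"checkout-api", "web-app"}, ENVS)
unknown = pick_environment({"payments-sdk"}, ENVS)
```

The "everything" environment covers every task here, which is exactly why a rule that prefers the smallest covering set is useful: a broad environment is never chosen just because it happens to work.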

Dockerfiles Become the Contract for Agent Environments

The second change is environment configuration as code. Cursor expanded Dockerfile-based environment definitions, introduced build secrets, and improved layer caching. Build secrets matter when an environment needs internal dependencies, such as packages from a private registry. Cursor says those secrets are scoped only to the build stage and are not passed into the running agent environment.

That design detail is small but important. Secrets inside agent environments cut both ways. Credentials are often required to complete tests and builds, but the agent must not be able to read them freely or send them elsewhere. Scoping a build secret to the build phase lets the agent benefit from the resulting environment without leaving the raw secret in the runtime workspace.
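Cursor's post does not describe how its build secrets are implemented. In plain Docker, the comparable mechanism is a BuildKit secret mount (`RUN --mount=type=secret,id=...`), which exposes the secret under `/run/secrets/<id>` for one `RUN` step only. The common anti-pattern is passing a token through `ARG` or `ENV`, where it can surface in image history or persist into every container. The sketch below is a hypothetical lint for that anti-pattern; the name heuristic is an assumption and will miss secrets with unusual names.

```python
import re

# Heuristic (assumption): variable names that usually hold credentials.
SECRET_NAME = re.compile(r"TOKEN|SECRET|PASSWORD|API_?KEY", re.IGNORECASE)


def find_baked_in_secrets(dockerfile: str) -> list[str]:
    """Return ARG/ENV variable names that look like credentials.

    ARG values can appear in build history and ENV values persist into every
    container started from the image, so neither is build-stage-only.
    """
    findings = []
    for raw in dockerfile.splitlines():
        parts = raw.strip().split(None, 1)
        if len(parts) < 2 or parts[0].upper() not in {"ARG", "ENV"}:
            continue
        body = parts[1]
        if "=" in body:
            # "ENV A=1 B=2" or "ARG NAME=default"
            names = [token.split("=", 1)[0] for token in body.split() if "=" in token]
        else:
            # "ARG NAME" or the legacy "ENV NAME value" form
            names = [body.split()[0]]
        findings += [name for name in names if SECRET_NAME.search(name)]
    return findings


EXAMPLE = """\
FROM node:20
ARG NPM_TOKEN
ENV NODE_ENV=production
RUN --mount=type=secret,id=npm_token NPM_TOKEN="$(cat /run/secrets/npm_token)" npm ci
"""
leaks = find_baked_in_secrets(EXAMPLE)
```

Here only the `ARG NPM_TOKEN` line is flagged; the `RUN --mount=type=secret` line reads the token for a single build step without storing it in the image.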

Cursor also says improved layer caching made cache-hit builds 70% faster. The exact number matters less than the underlying point. Cloud agents create fresh environments often and run many tasks in parallel. If environment builds are slow, waiting time accumulates before model latency or token cost even becomes the bottleneck. Agent performance is shaped by image builds, dependency installation, test warm-up, and artifact caches, not only by model speed.
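Why caching dominates can be shown with a simplified model of layer caching: each step's cache key chains the parent key, the instruction text, and the contents of any files it copies, so the first changed key invalidates everything after it. This is an illustrative approximation, not Docker's or Cursor's actual algorithm, and the file contents are made up. It compares copying the lockfile before installing dependencies against copying the whole tree first, after a source-only edit.

```python
import hashlib


def layer_keys(steps: list[tuple[str, list[str]]], files: dict[str, str]) -> list[str]:
    """Chain one cache key per step from the parent key, the instruction, and copied files."""
    keys: list[str] = []
    parent = ""
    for instruction, inputs in steps:
        digest = hashlib.sha256()
        digest.update(parent.encode())
        digest.update(instruction.encode())
        for path in sorted(inputs):
            digest.update(b"\0" + path.encode() + b"\0" + files[path].encode())
        parent = digest.hexdigest()
        keys.append(parent)
    return keys


def reused_layers(old: list[str], new: list[str]) -> int:
    """Count leading layers that can be reused; the first miss invalidates every later layer."""
    hits = 0
    for before, after in zip(old, new):
        if before != after:
            break
        hits += 1
    return hits


# Hypothetical repository contents before and after a source-only edit.
BEFORE = {"package.json": "{}", "pnpm-lock.yaml": "lock-v1", "src/app.ts": "v1"}
AFTER = {**BEFORE, "src/app.ts": "v2"}
ALL_FILES = sorted(BEFORE)

LOCKFILE_FIRST = [
    ("FROM node:20", []),
    ("COPY package.json pnpm-lock.yaml ./", ["package.json", "pnpm-lock.yaml"]),
    ("RUN pnpm install --frozen-lockfile", []),
    ("COPY . .", ALL_FILES),
]
SOURCE_FIRST = [
    ("FROM node:20", []),
    ("COPY . .", ALL_FILES),
    ("RUN pnpm install --frozen-lockfile", []),
]

lockfile_first_hits = reused_layers(layer_keys(LOCKFILE_FIRST, BEFORE), layer_keys(LOCKFILE_FIRST, AFTER))
source_first_hits = reused_layers(layer_keys(SOURCE_FIRST, BEFORE), layer_keys(SOURCE_FIRST, AFTER))
```

With the lockfile copied first, the dependency install layer survives a source edit (three of four layers reused); with the whole tree copied first, only the base layer survives and every fresh agent environment reinstalls dependencies.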

One especially interesting part is Dockerfile generation. According to the official post, Cursor will inspect a repository, infer the tools and dependencies it needs, and create an editable, version-controlled Dockerfile. The capability is in private beta and rolling out to Enterprise teams. In other words, coding agents are moving from writing application code toward helping configure the environment in which they work.
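Cursor has not published how its generator infers dependencies. As a deliberately naive illustration of the idea, the sketch below maps marker files at a repository root to setup commands and emits a draft Dockerfile for a human to edit and commit. The marker table, `draft_dockerfile`, and the base image name are all hypothetical; a real generator would inspect far more than file names.

```python
import tempfile
from pathlib import Path

# Hypothetical marker-file table (assumption, not Cursor's logic).
SETUP_STEPS = {
    "go.mod": "RUN go mod download",
    "package-lock.json": "RUN npm ci",
    "pnpm-lock.yaml": "RUN corepack enable && pnpm install --frozen-lockfile",
    "requirements.txt": "RUN pip install -r requirements.txt",
}


def draft_dockerfile(repo: Path, base_image: str) -> str:
    """Draft an editable Dockerfile from the marker files found at the repository root."""
    present = {entry.name for entry in repo.iterdir() if entry.is_file()}
    # Copying everything first keeps the draft simple; a reviewer may reorder it for caching.
    lines = [f"FROM {base_image}", "WORKDIR /workspace", "COPY . ."]
    lines += [step for marker, step in sorted(SETUP_STEPS.items()) if marker in present]
    if len(lines) == 3:
        lines.append("# TODO: no known marker files found; add setup steps by hand")
    return "\n".join(lines) + "\n"


# Usage: build a throwaway repo with two marker files and draft a Dockerfile for it.
with tempfile.TemporaryDirectory() as tmp:
    repo = Path(tmp)
    (repo / "go.mod").write_text("module example.com/svc\n")
    (repo / "pnpm-lock.yaml").write_text("lockfileVersion: '9.0'\n")
    draft = draft_dockerfile(repo, base_image="my-registry/polyglot-base:latest")
```

The output is plain text on purpose: the point of the feature as Cursor describes it is that the result is editable and version-controlled, so the draft goes through review like any other change.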

Environment Setup Becomes a Conversation With the Agent

The third change is agent-led environment setup. Cursor says its setup flow can ask questions, surface missing credentials, and verify whether the environment is ready. When setup fails, Cursor can fall back to a base image with warnings so cloud agents can keep running instead of stopping immediately.
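The "verify, then fall back with warnings" behavior can be sketched as a readiness check that collects every missing tool and credential instead of failing on the first one, then picks either the project image or a base image. The image names, the tool name, and the environment variable below are hypothetical, and this is an illustration of the flow rather than Cursor's implementation.

```python
from __future__ import annotations

import os
import shutil
from dataclasses import dataclass, field


@dataclass
class ReadinessReport:
    warnings: list[str] = field(default_factory=list)

    @property
    def ready(self) -> bool:
        return not self.warnings


def check_environment(tools: list[str], variables: list[str]) -> ReadinessReport:
    """Collect every missing tool and unset variable instead of stopping at the first one."""
    report = ReadinessReport()
    for tool in tools:
        if shutil.which(tool) is None:
            report.warnings.append(f"missing tool on PATH: {tool}")
    for name in variables:
        if not os.environ.get(name):
            report.warnings.append(f"missing credential or variable: {name}")
    return report


def choose_image(report: ReadinessReport, project_image: str, base_image: str) -> tuple[str, list[str]]:
    """Use the project image when ready; otherwise fall back to a base image and keep the warnings."""
    if report.ready:
        return project_image, []
    return base_image, list(report.warnings)


# Usage with deliberately missing, made-up requirements.
report = check_environment(["hypothetical-protoc-3.19"], ["HYPOTHETICAL_NPM_SCOPE_TOKEN"])
image, warnings = choose_image(report, "project-env:v7", "base-env:latest")
```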

This flow matches how development environments work in real teams. Documentation and reality often drift. The README may say `pnpm install`, but the actual setup may need an internal npm scope token, an old protobuf compiler, a particular VPN, or an allowlist entry. A human asks a teammate or searches Slack. An agent does not begin with that implicit knowledge.

Cursor's decision to add questions and verification to setup is an attempt to structure that implicit knowledge. The product surface now includes questions such as which credential is missing, whether this Dockerfile passes tests, and which environment version the agent is using. That is not merely onboarding convenience. If agents are expected to finish work independently, they must distinguish environment failure from code failure. When tests fail, the next action depends on whether the cause is logic, a missing secret, or a bad image version.
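Distinguishing environment failure from code failure can start as simply as ordered pattern matching on the failing log, with environment causes checked before the code is blamed. The patterns, labels, and next actions below are hypothetical heuristics for illustration; a production classifier would need far richer signals.

```python
import re

# Hypothetical, ordered heuristics: environment causes are checked before blaming the code.
FAILURE_PATTERNS = [
    ("missing_secret", re.compile(r"\bE?40[13]\b|unauthorized|authentication required", re.IGNORECASE)),
    ("bad_image", re.compile(r"command not found|not installed|unsupported engine", re.IGNORECASE)),
]

NEXT_ACTION = {
    "missing_secret": "surface the missing credential to an admin",
    "bad_image": "rebuild or roll back the environment image",
    "code": "let the agent keep debugging the change",
}


def classify_failure(log: str) -> str:
    """Label a failing test or build log as an environment problem or a code problem."""
    for label, pattern in FAILURE_PATTERNS:
        if pattern.search(log):
            return label
    return "code"


labels = [
    classify_failure(log)
    for log in (
        "npm ERR! code E401\nnpm ERR! Unable to authenticate",
        "sh: 1: protoc: command not found",
        "AssertionError: expected 3, got 4",
    )
]
```

Only the last log reaches the default `"code"` label, so only that failure goes back to the agent as a logic problem.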

Security Focuses on Secrets and Egress

The fourth change is governance and security controls. Cursor says each development environment has version history, users can review and roll back changes, and administrators can restrict rollback to admins only. Audit logs record environment change actions. More importantly, egress and secrets can be scoped to the development environment.
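A minimal model of these governance pieces, assuming each environment version is just a Dockerfile string: every commit and rollback appends an audit entry, and an `admin_only_rollback` switch turns non-admin rollbacks into denied, logged events. `EnvironmentHistory` and the actor names are hypothetical; Cursor's actual data model is not described in the post.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class EnvironmentHistory:
    """Hypothetical versioned environment: every Dockerfile change and rollback is audited."""
    admin_only_rollback: bool = False
    versions: list[str] = field(default_factory=list)
    audit_log: list[dict] = field(default_factory=list)
    current: int = -1

    def _audit(self, actor: str, action: str, version: int) -> None:
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "version": version,
        })

    def commit(self, actor: str, dockerfile: str) -> int:
        """Store a new environment version and make it current."""
        self.versions.append(dockerfile)
        self.current = len(self.versions) - 1
        self._audit(actor, "commit", self.current)
        return self.current

    def rollback(self, actor: str, version: int, is_admin: bool) -> None:
        """Point the environment back at an earlier version, if policy allows it."""
        if self.admin_only_rollback and not is_admin:
            self._audit(actor, "rollback_denied", version)
            raise PermissionError(f"{actor} may not roll back this environment")
        if not 0 <= version < len(self.versions):
            raise ValueError(f"unknown version {version}")
        self.current = version
        self._audit(actor, "rollback", version)


# Usage: an agent's change is rolled back, but only by an admin.
history = EnvironmentHistory(admin_only_rollback=True)
history.commit("alice", "FROM node:20\n")
history.commit("cloud-agent-7", "FROM node:22\n")
try:
    history.rollback("bob", version=0, is_admin=False)
except PermissionError:
    pass
history.rollback("alice", version=0, is_admin=True)
actions = [entry["action"] for entry in history.audit_log]
```

Logging the denied attempt, not just the successful rollback, is a design choice worth copying: the audit trail then shows who tried to change an environment as well as who did.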

Egress is easy to underestimate in agent security. Many teams focus on where secrets are stored and who can access them, then look at outbound network paths later. If a prompt injection or bad script can send data to an external API, paste service, or arbitrary domain, hiding the secret value is not enough. Cursor's mention of per-environment outbound allowlists matters because cloud agents are more active execution actors than a typical CI runner or devcontainer.
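Per-environment allowlists come down to a host-matching rule applied at the network boundary. The sketch shows only that matching logic, with exact hosts and `*.suffix` wildcards; real enforcement belongs in an egress proxy or firewall, not in code the agent can modify. The environment names and internal domain are hypothetical.

```python
from __future__ import annotations

from urllib.parse import urlsplit

# Hypothetical per-environment outbound allowlists.
ALLOWLISTS = {
    "checkout-fullstack": {"registry.npmjs.org", "*.internal.example.com"},
    "docs-only": {"registry.npmjs.org"},
}


def egress_allowed(url: str, allowlist: set[str]) -> bool:
    """Allow a request only if its host matches an exact entry or a '*.suffix' wildcard."""
    host = (urlsplit(url).hostname or "").lower()
    for entry in allowlist:
        entry = entry.lower()
        if entry.startswith("*."):
            # "*.internal.example.com" matches subdomains, not look-alikes such as
            # "internal.example.com.attacker.net" or "evilinternal.example.com".
            if host.endswith(entry[1:]):
                return True
        elif host == entry:
            return True
    return False
```

The same internal registry URL is allowed from `checkout-fullstack` but blocked from `docs-only`, and a paste service is blocked everywhere, which is the "separate outbound allowlists by task boundary" idea in miniature.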

Secret scoping belongs in the same discussion. A secret configured in one environment is not accessible from another. That property has to be evaluated alongside multi-repo support: the more repositories are grouped into one environment, the broader the work an agent can do, and the greater the risk that secret boundaries blur. Environment-level secret isolation is the minimum control needed to balance those forces.
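Environment-scoped secrets can be modeled as a store keyed by the pair (environment, name), with no fallback to a shared global scope. `ScopedSecretStore` and the secret names are hypothetical; the sketch only illustrates the isolation property Cursor describes, not how it is built.

```python
from __future__ import annotations


class ScopedSecretStore:
    """Hypothetical store keyed by (environment, name); lookups never cross environments."""

    def __init__(self) -> None:
        self._secrets: dict[tuple[str, str], str] = {}

    def put(self, environment: str, name: str, value: str) -> None:
        self._secrets[(environment, name)] = value

    def get(self, environment: str, name: str) -> str:
        try:
            return self._secrets[(environment, name)]
        except KeyError:
            # No fallback to a "global" scope: a missing secret is an explicit error.
            raise KeyError(f"secret {name!r} is not configured for environment {environment!r}") from None

    def names(self, environment: str) -> list[str]:
        """List secret names (never values) visible to one environment."""
        return sorted(name for env, name in self._secrets if env == environment)


store = ScopedSecretStore()
store.put("checkout-fullstack", "STRIPE_TEST_KEY", "sk_test_placeholder")
store.put("docs-only", "SEARCH_API_KEY", "placeholder")

try:
    store.get("docs-only", "STRIPE_TEST_KEY")
    crossed_boundary = True
except KeyError:
    crossed_boundary = False
```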

| Problem | Cursor's answer | What teams should examine |
|---|---|---|
| Repository boundaries | Multi-repo environments | Minimize the repo set for each class of work |
| Environment reproducibility | Dockerfile-based config as code | Treat environment changes as reviewable and rollbackable code |
| Internal dependency access | Build secrets and layer caching | Separate build-time secrets from runtime secrets |
| Audit and recovery | Version history, rollback, audit logs | Connect environment changes to the agents that used them |
| Data exfiltration paths | Environment-level egress scope | Separate outbound allowlists by task boundary |

This Is a Different Layer From Cursor in Teams

A few days earlier, Cursor also introduced Microsoft Teams integration. In that flow, a user mentions @Cursor in a Teams channel, the Cloud Agent reads the whole thread, selects a repository and model, implements the change, and creates a PR. devlery covered that announcement as a sign that coding agents are moving into team conversation surfaces.

The development environments announcement sits below that layer. Teams integration asks where work is requested. Development environments answer where that work is performed. PR review and parallel build features ask how a change is submitted and split. Environment controls ask which tools and permissions are available while the change is being made.

These are not separate features. They are upper and lower layers of the same system. If a team wants to invoke an agent from conversation, the agent must descend into a trustworthy execution environment. Without that environment, the Teams mention is only a polished input box. Without the collaboration surface, the environment is disconnected from how teams make decisions. Cursor is filling both directions at once.

The Competitor Is Also the Platform Team

Cursor's direct competitors are GitHub Copilot, OpenAI Codex, Claude Code, JetBrains Junie, and xAI Grok Build. But this particular announcement reveals another competitor: the internal platform engineering team.

Large companies already operate devcontainers, GitHub Actions runners, self-hosted runners, Kubernetes-based ephemeral environments, and internal developer platforms. Those systems were designed for human developers and CI. Coding agents are becoming a new class of user. Agents create environments more often, run more parallel tasks, demand broader context, and sometimes execute commands that humans would overlook.

Cursor's version history, audit logs, egress controls, and secret scoping show that it understands this market. Enterprise customers do not only ask whether an agent writes good code. They ask which environment the agent used, which secrets were available, where outbound traffic could go, and which version can be restored after failure. Without answers to those questions, coding agents remain personal productivity tools.

Self-Evolving Environments Are Powerful and Risky

Cursor also previewed where it wants to go next. Today's environments are configured at one point in time and rebuilt when they drift from the codebase. Cursor says it is working on environment setup that evolves autonomously as the codebase changes.

That direction feels inevitable. Dependencies change, build tools change, services split apart, and environments must follow. If an agent can read failure logs and add a missing package or adjust a cache strategy without a human editing the Dockerfile every time, cloud agents become much more autonomous.

The risk grows at the same time. Environments can carry broader authority than application code. One Dockerfile line can fetch an external binary, open a network path, or change how secrets are accessed. Self-evolving environments may need stricter policy than ordinary code review. Some changes should be proposed by the agent but approved by a human. Other changes should not be accepted just because tests pass; they should run through security policy checks as well.
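One way to apply "stricter policy than ordinary code review" is to scan only the lines an agent adds to a Dockerfile and require human approval whenever they match high-risk patterns, regardless of test results. The patterns and `review_environment_change` below are a hypothetical policy for illustration, not a complete security check; for instance, it ignores removed lines, which a real policy should also review.

```python
import re

# Hypothetical policy: added Dockerfile lines matching these need human approval even if tests pass.
HIGH_RISK = [
    ("fetches remote content", re.compile(r"\b(curl|wget)\b|^ADD\s+https?://", re.IGNORECASE)),
    ("touches secret handling", re.compile(r"--mount=type=secret|^(ARG|ENV)\s+\w*(TOKEN|SECRET|PASSWORD)", re.IGNORECASE)),
    ("changes network setup", re.compile(r"iptables|_PROXY\b|^EXPOSE\b", re.IGNORECASE)),
]


def review_environment_change(old: str, new: str) -> list[str]:
    """Return reasons a proposed Dockerfile change needs human approval; empty means auto-approve."""
    existing = {line.strip() for line in old.splitlines()}
    reasons = []
    for line in (raw.strip() for raw in new.splitlines()):
        if not line or line in existing:
            continue  # only newly added lines are reviewed in this sketch
        reasons += [f"{reason}: {line}" for reason, pattern in HIGH_RISK if pattern.search(line)]
    return reasons


OLD = "FROM node:20\nRUN npm ci\n"
SAFE = "FROM node:20\nRUN npm ci\nRUN npm run build\n"
RISKY = "FROM node:20\nRUN curl -fsSL https://example.com/tool.sh | sh\nRUN npm ci\n"
```

The `SAFE` change passes untouched, while the `RISKY` change, which pipes a remote script into a shell, is routed to a human even though it might build and pass tests.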

The important product race may therefore become not whether an agent can fix its environment, but which policies and audit paths govern environment changes. Cursor's version history and audit logs are a starting point. They are not the whole answer yet.

The Questions Development Teams Should Ask Now

This announcement does not mean every team should adopt Cursor Cloud Agent immediately. It raises a more general checklist. Where does your AI coding agent run today? How different is that environment from a human developer's local setup? Who manages the credentials the agent uses to run tests? Is outbound network access open? Do environment changes go through code review? Can you later prove that a particular agent used a particular secret?
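The last question, proving that a particular agent used a particular secret, is answerable only if each run records which environment version it used and which secret names it resolved. A minimal sketch of such a record and the corresponding query, assuming secret names (never values) are logged at run start; `RunRecord`, `runs_using_secret`, and the sample data are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class RunRecord:
    """Hypothetical audit record written when an agent run starts; secret names only, never values."""
    run_id: str
    agent: str
    environment: str
    environment_version: int
    secrets_resolved: tuple[str, ...]


def runs_using_secret(records: list[RunRecord], secret: str) -> list[tuple[str, str, str, int]]:
    """Answer: which agent runs resolved this secret, and in which environment version?"""
    return [
        (r.run_id, r.agent, r.environment, r.environment_version)
        for r in records
        if secret in r.secrets_resolved
    ]


RECORDS = [
    RunRecord("run-101", "agent-a", "checkout-fullstack", 3, ("STRIPE_TEST_KEY",)),
    RunRecord("run-102", "agent-b", "docs-only", 1, ()),
    RunRecord("run-103", "agent-a", "checkout-fullstack", 4, ("STRIPE_TEST_KEY", "NPM_TOKEN")),
]
hits = runs_using_secret(RECORDS, "STRIPE_TEST_KEY")
```

Recording the environment version alongside the secret names is what ties this query back to version history: after an incident, a team can see both which runs touched a credential and which Dockerfile they were running.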

Those questions are not specific to Cursor. They apply whether a team uses GitHub Copilot coding agent, Codex, Claude Code connected to a remote runtime, or an internal agent platform. As AI coding agents move from autocomplete tools toward actual workers, development environments become part of production infrastructure. The old combination of a laptop .env file and a README will not scale to agent workloads for long.

Cursor's cloud agent development environments show the next bottleneck in the coding-agent market. Models will keep improving. But teams are not ultimately buying more plausible diffs. They want verifiable completion. A model alone cannot provide that. Repositories, dependencies, secrets, networks, tests, and audit logs have to connect.

That is the real significance of this release. Cursor did not just add another feature. It signaled that coding agents are moving from the edge of software development into the execution infrastructure of teams. Evaluating AI coding tools now requires more than checking the model name. The more important questions are where the agent works, who controls that environment, and how failures and permissions are traced.