Codex Moves to the Phone as Coding Agents Get a New Control Plane

OpenAI Codex mobile preview shows the coding-agent race moving from model capability toward approvals, remote execution, and cost boundaries.

- What happened: OpenAI brought Codex into the ChatGPT mobile app as a preview.

- The rollout is coming to iOS and Android across supported regions, including Free and Go plans.

- OpenAI says Codex now has more than 4 million weekly users.

- Why it matters: The bottleneck for coding agents is shifting from code generation to approval, status checks, and course correction.

- Competitive context: The same week, Anthropic separated Agent SDK and claude -p usage into monthly credits.

- OpenAI is widening access points, while Anthropic is redrawing the cost boundary around automation.

- Watch: Phone-based approvals are convenient, but reviewing diffs and shell commands on a small screen needs new security habits.

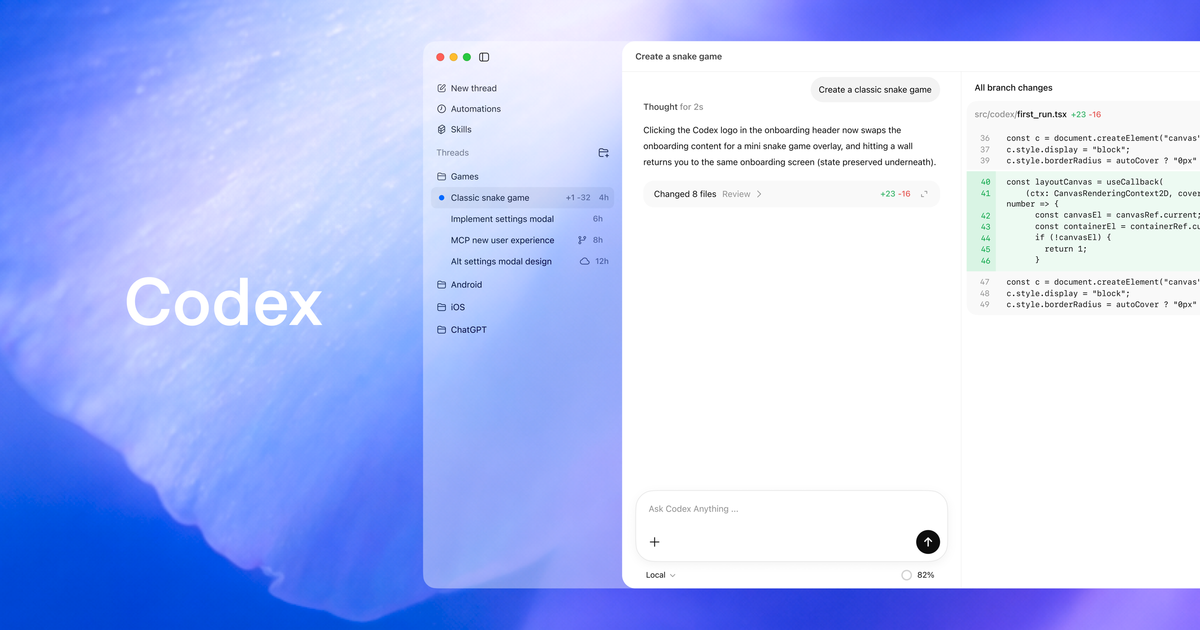

OpenAI announced on May 14, 2026 that Codex is coming to the ChatGPT mobile app as a preview. Read only as a product headline, it sounds like a simple expansion: Codex is now on your phone. The more important shift is that the daily rhythm of coding agents is changing. Codex is no longer just a tool that waits inside a desktop session. It is becoming a long-running worker that can operate on a laptop, devbox, or remote environment while the developer steps in only when judgment is needed.

OpenAI also said Codex has passed 4 million weekly users. That number matters for more than adoption optics. Once coding agents reach this scale, the next bottleneck is not only whether the model can write better code. Longer-running agents ask follow-up questions, wait for shell-command approval, surface test failures, and need direction changes. In practice, a coding agent's productivity depends on when, where, and how cheaply the user can reconnect with the agent.

That is the scene OpenAI emphasized in the announcement: starting a bug investigation while waiting for coffee, choosing a refactor direction on a commute, or asking for an issue summary before a customer meeting. These are not really examples of coding on a phone. They describe an agent already doing work while the human provides short decisions at the right moments. This makes the Codex mobile preview less like a revival of the mobile IDE and more like a control plane for long-running agent work.

The Phone Is an Approval Layer, Not the Runtime

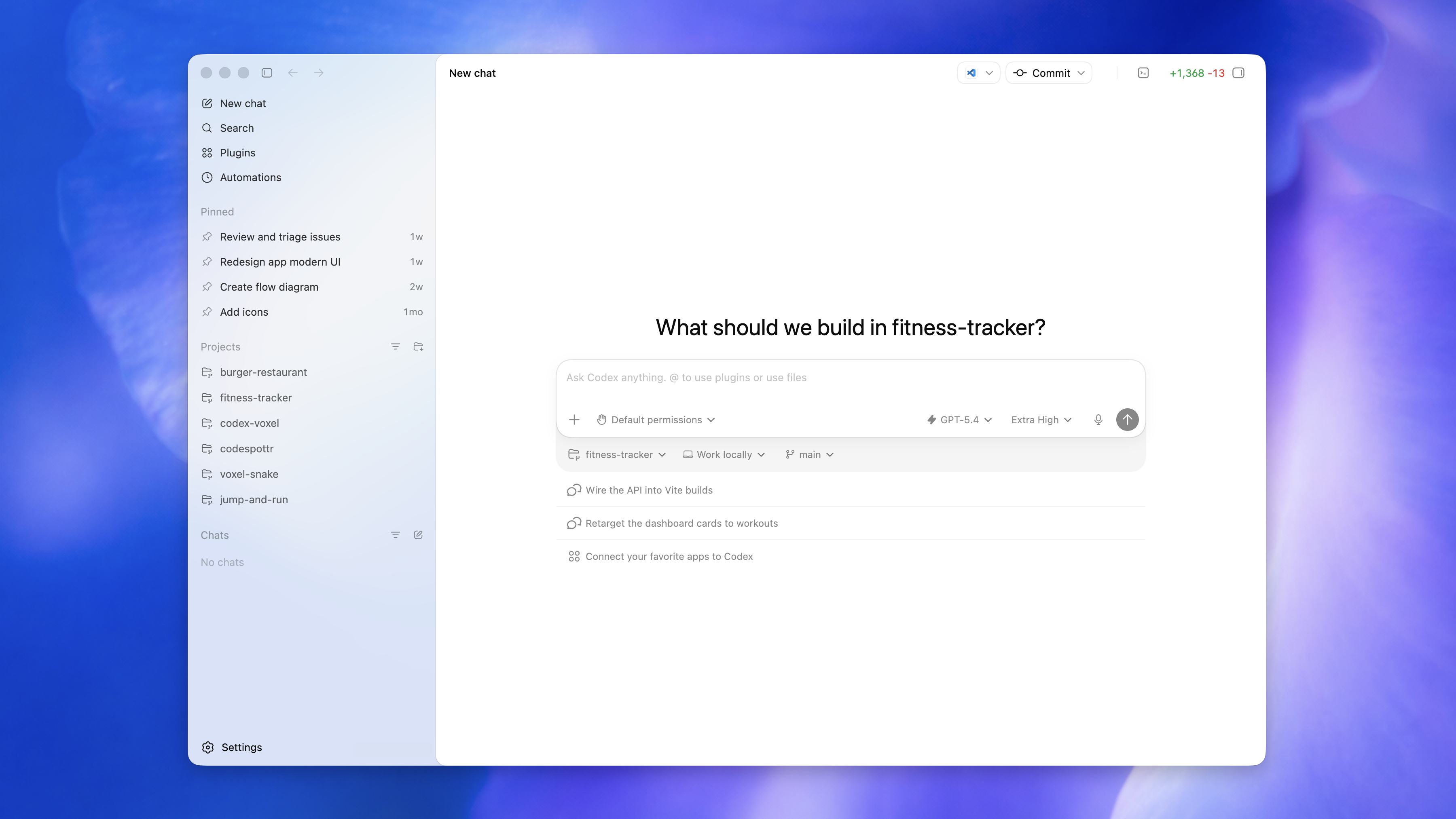

According to OpenAI, Codex in the ChatGPT mobile app connects to the Codex environment already running on the user's machine. That machine might be a laptop, a Mac mini, or a company-managed remote development environment. The mobile app brings over active threads, approval state, plugins, project context, and live state. From the phone, users can move between threads, inspect output, approve commands, switch models, and start new tasks.

The important boundary is where files and permissions live. OpenAI says files, credentials, permissions, and local setup remain on the machine where Codex is running. What flows to the phone are updates such as screenshots, terminal output, diffs, test results, and approvals. That positions the phone not as a copy of the development environment, but as a supervisory layer above the runtime.

This design addresses two tensions in coding agents. One is access: users do not have to sit at the desk to keep an agent unblocked. The other is security: company source code and credentials do not need to move directly onto a phone for the user to review progress and make approvals. That does not remove every risk. If anything, it makes approvals more frequent and pushes them onto a smaller screen. Teams will need clearer rules for what can be approved from mobile and what still deserves a full desktop diff review.

- Development environment: laptop, devbox, or Remote SSH host
- Codex runtime: files, credentials, and permissions stay on the execution machine
- Secure relay: screenshots, terminal output, diffs, test results, and approval state sync across devices
- ChatGPT mobile app: inspect, approve, redirect, or start a new thread

OpenAI says this is handled through a secure relay layer, so a trusted machine does not have to be exposed directly to the public internet just to stay reachable from multiple devices. For developers, that detail is not cosmetic. Remote control over a coding agent touches company networks, SSH hosts, secrets, local toolchains, and sometimes browser sessions. As these products mature, the more practical question becomes less "what can the model do?" and more "inside which boundary is the model allowed to act?"
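To make that boundary concrete, here is a minimal Python sketch of an allowlist that decides which runtime events may leave the execution machine. The event kinds mirror the lists OpenAI gives (screenshots, terminal output, diffs, test results, and approvals sync; files, credentials, and permissions stay put), but the `Kind`, `Event`, and `to_relay` names are hypothetical and this is not OpenAI's relay protocol.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    # Updates OpenAI says flow to the phone.
    SCREENSHOT = "screenshot"
    TERMINAL_OUTPUT = "terminal_output"
    DIFF = "diff"
    TEST_RESULT = "test_result"
    APPROVAL = "approval"
    # Material OpenAI says stays on the execution machine.
    FILE = "file"
    CREDENTIAL = "credential"
    PERMISSION = "permission"


# Hypothetical allowlist: any kind not listed here never leaves the runtime.
RELAYABLE = frozenset({
    Kind.SCREENSHOT, Kind.TERMINAL_OUTPUT, Kind.DIFF, Kind.TEST_RESULT, Kind.APPROVAL,
})


@dataclass(frozen=True)
class Event:
    kind: Kind
    payload: str


def to_relay(events: list[Event]) -> list[Event]:
    """Keep only the events that are allowed to sync to other devices."""
    return [e for e in events if e.kind in RELAYABLE]


outbox = to_relay([
    Event(Kind.DIFF, "--- a/app.py\n+++ b/app.py"),
    Event(Kind.CREDENTIAL, "DEPLOY_TOKEN=redacted"),
    Event(Kind.TEST_RESULT, "42 passed"),
])
print([e.kind.value for e in outbox])  # ['diff', 'test_result']
```

An allowlist is the safer default for this kind of boundary: a newly added event type stays local until someone explicitly decides it is safe to relay.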

Why Remote SSH and Hooks Matter Here

The announcement was not only about mobile. OpenAI also said Remote SSH is generally available. The desktop app can detect hosts from a user's SSH configuration and create projects and threads inside the remote machine much like it would locally. Mobile access then sits on top of that flow. A developer can connect to the remote environment from desktop, start the work there, and later check progress or approve the next step from a phone.
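OpenAI does not describe how the desktop app reads SSH configuration, so the following only illustrates the idea: a minimal Python sketch that lists concrete `Host` aliases from OpenSSH client config text. The `ssh_hosts` function is hypothetical; it skips wildcard and negated patterns and deliberately ignores `Include` and `Match` directives, which a real implementation would need to handle.

```python
def ssh_hosts(config_text: str) -> list[str]:
    """List concrete Host aliases from OpenSSH client config text."""
    hosts: list[str] = []
    for raw in config_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        # Keywords are case-insensitive, and "Host=name" is also valid syntax.
        parts = line.replace("=", " ", 1).split(None, 1)
        if len(parts) < 2 or parts[0].lower() != "host":
            continue
        for pattern in parts[1].split():
            # Wildcards (*, ?) and negations (!) match hosts; they are not hosts.
            if any(ch in pattern for ch in "*?!"):
                continue
            if pattern not in hosts:
                hosts.append(pattern)
    return hosts


example = """
Host devbox
    HostName devbox.internal.example
    User alice
Host *.corp !bastion
Host mac-mini build-01
Host=gpu-box
"""
print(ssh_hosts(example))  # ['devbox', 'mac-mini', 'build-01', 'gpu-box']
```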

That combination is especially relevant inside companies. Many development teams already prefer managed remote environments over local laptops because approved dependencies, internal credentials, security policies, and larger compute can live in one controlled place. If Codex runs there and mobile receives only state and approvals, organizations can expand agent workflows without giving every personal device the full execution surface.

The general availability of Hooks points in the same direction. OpenAI describes Hooks as a way to scan prompts for secrets, run validators, log conversations, generate memory, and customize behavior per repository. That moves Codex from an interactive assistant toward an automation surface a team can shape. A repository might require a particular validation command before deployment files change, block prompts containing customer data, or record repeated decisions into memory for future runs.
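The source does not describe the Hooks interface itself, so this is a hypothetical sketch of the kind of logic a prompt-scanning hook might run: a few regular expressions for common secret shapes and a function that blocks a prompt when one matches. The pattern set and the `scan_prompt` and `pre_prompt_hook` names are assumptions, and production secret scanners rely on much larger, tuned rule sets.

```python
import re

# Hypothetical rule set; real scanners use many more, carefully tuned patterns.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "generic_assignment": re.compile(
        r"(?i)\b(api[_-]?key|secret|password|token)\s*[:=]\s*\S{8,}"
    ),
}


def scan_prompt(prompt: str) -> list[str]:
    """Return the names of secret patterns found in a prompt."""
    return [name for name, rx in SECRET_PATTERNS.items() if rx.search(prompt)]


def pre_prompt_hook(prompt: str) -> tuple[bool, str]:
    """Hypothetical hook: block the prompt if it appears to contain a secret."""
    hits = scan_prompt(prompt)
    if hits:
        return False, "blocked: possible secret (" + ", ".join(hits) + ")"
    return True, "ok"


allowed, reason = pre_prompt_hook("Deploy with password = hunter2hunter2")
print(allowed, reason)  # False blocked: possible secret (generic_assignment)
```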

Programmatic access tokens arrived in the same release cluster. For Enterprise and Business plans, workspace settings can issue scoped credentials for CI pipelines, release workflows, and internal automations. Taken together, these features show the product direction clearly. Codex is expanding from a coding helper in a single chat window into a development operations layer with remote execution, automation hooks, scoped tokens, and a mobile approval app.
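Scoped credentials follow the least-privilege idea: a CI token should be able to do what the pipeline needs and nothing else. The Python sketch below models that under stated assumptions. The plan check reflects the note that these tokens are limited to Business and Enterprise, but `issue_token`, `authorize`, and scope strings such as `tasks:run` are invented for illustration and are not OpenAI's actual API or scope names.

```python
from dataclasses import dataclass

# Per the article, programmatic access tokens are limited to these plans.
ELIGIBLE_PLANS = {"business", "enterprise"}


@dataclass(frozen=True)
class AccessToken:
    name: str
    scopes: frozenset[str]


def issue_token(plan: str, name: str, scopes: set[str]) -> AccessToken:
    """Issue a scoped token for an eligible workspace plan (hypothetical API)."""
    if plan.lower() not in ELIGIBLE_PLANS:
        raise PermissionError(f"programmatic tokens are not available on the {plan} plan")
    return AccessToken(name, frozenset(scopes))


def authorize(token: AccessToken, action: str) -> bool:
    """Allow an action only if the token was issued with that exact scope."""
    return action in token.scopes


ci = issue_token("Business", "release-pipeline", {"tasks:run", "results:read"})
print(authorize(ci, "tasks:run"))       # True
print(authorize(ci, "settings:write"))  # False
```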

The Same Week, Anthropic Redrew the Cost Boundary

The interesting contrast is that Anthropic sent an important signal from nearly the opposite side of the problem. According to Anthropic's Help Center, starting June 15, 2026, Claude Agent SDK and claude -p usage will no longer count against the normal Claude plan usage limit. Instead, eligible users on Pro, Max, Team, and Enterprise plans can claim separate monthly Agent SDK credits.

The credit amounts vary by plan. Pro and Team Standard receive $20. Max 5x and Team Premium receive $100. Max 20x and some Enterprise Premium seats receive $200. These credits apply to the Agent SDK, non-interactive claude -p commands, the Claude Code GitHub Actions integration, and third-party apps built on the Agent SDK. They do not apply to interactive Claude Code, Claude web, desktop or mobile chats, or Claude Cowork.

This reads like Anthropic's answer to a hard subscription question: can one monthly plan support near-unbounded automation? Anthropic is keeping subscription limits focused on interactive use while moving automation and third-party agent usage into a separate budget. When the credit is exhausted, requests move to standard API pricing only if extra usage is enabled. Otherwise, Agent SDK requests stop until the next cycle. Credits also do not pool across a team.
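The routing rules above can be summarized as a small decision function. This Python sketch is a simplified model, not Anthropic's billing logic: the surface names are hypothetical labels, Enterprise seats are omitted because only some of them receive credits, the monthly reset is not modeled, and a request that would overrun the remaining credit is treated as a whole rather than split.

```python
from dataclasses import dataclass

# Monthly Agent SDK credit per seat in USD, as listed in the Help Center summary.
MONTHLY_CREDIT_USD = {
    "pro": 20, "team_standard": 20,
    "max_5x": 100, "team_premium": 100,
    "max_20x": 200,
}

# Hypothetical labels for the surfaces that draw on the credit.
CREDIT_SURFACES = {"agent_sdk", "claude_p", "github_actions", "third_party_sdk_app"}


@dataclass
class Seat:
    plan: str
    extra_usage_enabled: bool = False
    spent_usd: float = 0.0

    @property
    def remaining_usd(self) -> float:
        return max(MONTHLY_CREDIT_USD[self.plan] - self.spent_usd, 0.0)


def route(seat: Seat, surface: str, cost_usd: float) -> str:
    """Decide which budget pays for one request (simplified model)."""
    if surface not in CREDIT_SURFACES:
        return "plan_limit"  # interactive use stays on the normal plan limit
    if cost_usd <= seat.remaining_usd:
        seat.spent_usd += cost_usd
        return "credit"
    if seat.extra_usage_enabled:
        return "api_pricing"
    return "blocked_until_next_cycle"


alice, bob = Seat("pro"), Seat("team_standard")
print(route(alice, "claude_p", 15.0))   # credit
print(route(alice, "agent_sdk", 10.0))  # blocked_until_next_cycle
print(bob.remaining_usd)                # 20.0 (credits do not pool)
```

Each seat carries its own balance, so Bob's unused credit cannot cover Alice's overrun.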

| Area | OpenAI Codex Mobile | Anthropic Agent SDK Credits |
|---|---|---|
| Core change | Inspect progress, approve commands, and redirect work from mobile | Split non-interactive SDK usage into separate monthly credits |
| Developer message | Keep agent work moving outside the desk setup | Manage automation with experimental credits and API pricing |
| Operational question | Mobile approval policy and remote execution boundaries | Credit exhaustion, per-seat budgets, and production automation costs |

The two announcements look like separate product updates, but they point at the same underlying problem. Long-running coding agents are hard to treat as an add-on to a chat service. Someone has to design the approval experience, and someone has to define the budget boundary around automation. Agents can consume tokens and tool calls far faster than humans in a chat window, so "monthly subscription" and "unbounded automation" collide quickly.

What Development Teams Should Watch Now

First, approval policy is becoming a product feature. Until now, coding-agent approvals have been scattered across terminals, IDE diffs, and pull-request reviews. Codex mobile pulls that process into its own interaction layer. A phone approval button means users can intervene more often, but it also raises the chance of looser approvals.

In practical terms, teams need to classify command types. Read-only investigation, tests, linting, and type checks may be reasonable to approve from mobile. Database migrations, production credential access, large file deletions, dependency lockfile changes, and external API calls are different. The success of Codex mobile will depend less on the raw number of features and more on how well teams can express and enforce those policies.
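One way to express such a policy is a default-deny classifier: a short allowlist of read-only, test, lint, and type-check commands may be approved from mobile, and everything else, including anything that chains, pipes, or redirects commands, is routed to a desktop review. This is a hypothetical Python sketch, not a Codex feature; the allowlist is an assumption each team would tune, and string matching on shell commands is a coarse first layer rather than a sandbox.

```python
import shlex

# Hypothetical allowlist of argv prefixes a team might accept from a phone.
MOBILE_SAFE = [
    ["git", "status"], ["git", "diff"], ["git", "log"], ["ls"],
    ["pytest"], ["npm", "test"], ["ruff", "check"], ["mypy"], ["tsc", "--noEmit"],
]

# Shell syntax that can chain, pipe, redirect, or substitute commands.
SHELL_META = (";", "&", "|", ">", "<", "$(", "`", "\n")


def approval_channel(command: str) -> str:
    """Return 'mobile' for allowlisted commands, otherwise 'desktop'."""
    if any(meta in command for meta in SHELL_META):
        return "desktop"
    try:
        argv = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return "desktop"
    for prefix in MOBILE_SAFE:
        if argv[: len(prefix)] == prefix:
            return "mobile"
    return "desktop"  # default deny: migrations, deletions, installs, network calls


for cmd in ["pytest tests/unit -q", "alembic upgrade head", "pytest && curl https://example.com"]:
    print(f"{cmd!r}: {approval_channel(cmd)}")
```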

Second, remote development environments become more valuable. The general availability of Remote SSH, paired with mobile supervision, helps teams that want to move agent execution away from personal laptops and into managed environments. Developers will increasingly ask less "where am I coding?" and more "which isolated runtime should I assign this work to?" Environment creation, secret injection, audit logs, network policy, and browser-automation permissions begin to form a single package.

Third, agent cost is no longer a background concern. Anthropic's Agent SDK credit change makes that especially clear. Individual users may find monthly credits enough for experiments and small automations, but teams running production automation should expect API keys and usage-based billing. OpenAI is also limiting programmatic access tokens to Enterprise and Business. The convenience path for individuals and the automation path for organizations are unlikely to share the same authentication and billing model for long.

The Community Reality Check

Reaction across GeekNews mirrors, Reddit, and AI developer communities focused mostly on practical utility. If a user can check progress and approve the next step from a phone, long-running work is less likely to stall. That is especially useful when an agent fails a test, reaches a fork between two implementations, or waits at a command that needs permission.

The concerns are just as clear. A small screen can make it harder to inspect diffs carefully, and a request arriving like a mobile notification can invite reflexive approval. Axios also noted the risk of mistakes on a small screen in a multitasking context. This is not just a UX issue. Once a coding agent can touch the shell, filesystem, browser, and cloud CLIs, approval is a security control. If approval UX becomes looser, the incident surface expands alongside productivity.

The answer is not to declare mobile approval too dangerous to use. The useful distinction is which approvals are suitable for mobile and which are not. Mobile is strong for brief decisions that keep work moving. Major architecture changes, security-sensitive actions, data deletion, and deployment decisions still deserve wider context and slower review. A mature coding-agent workflow will make that difference explicit in both product design and team process.

The Coding-Agent Race Is Moving Outside the Model Card

Through 2025, the debate around coding AI was mostly organized around model capability and benchmarks: which model scored higher on SWE-bench, which one handled long context better, and which IDE integration felt smoother. The 2026 pattern is different. Models still matter, but a growing part of the competition has moved outside the model itself.

OpenAI's announcement spends more time on execution boundaries than on model names: mobile, secure relay, Remote SSH, Hooks, programmatic access tokens, and HIPAA-compliant local environments. Anthropic's same-week update is not a model improvement either. It is documentation about which budget pays for Agent SDK usage. The market is moving from "who is smartest?" toward "who lets teams operate long-running agent work safely and predictably?"

For developers, that is both useful and uncomfortable. The useful part is that agents are moving deeper into real workflows. Bug investigations, tests, refactors, documentation updates, and pre-release checks can be bundled into longer units of work. The uncomfortable part is that model choice is no longer enough. Teams need to design approval policies, budget limits, remote environments, audit trails, secret handling, and recovery paths.

That is why the real meaning of the Codex mobile preview is not that developers can fix code from a phone. It is that coding agents no longer have to wait for the user's desk time in the same way. The agent keeps working, and the human enters briefly when a decision is needed. That decision is no longer trapped inside the desktop app, IDE, or pull-request screen.

OpenAI is widening access. Anthropic is clarifying the cost boundary for automation. Seen together, the conclusion is straightforward: the next front in coding agents is not the model card. It is the operating console. Sometimes that console is a desktop app. Sometimes it is a CI token. Now it can also be a small approval button on a phone.