Coder Agents move coding agents into the control plane

Coder opened the beta for self-hosted Coder Agents, showing how coding-agent competition is moving into enterprise control planes.

- What happened: Coder opened the beta for Coder Agents, a coding-agent workflow that runs on self-hosted Coder infrastructure. The agent loop runs in the Coder control plane, then provisions workspaces only when it needs to inspect code, edit files, or execute commands.

- Why it matters: Coding-agent competition is moving beyond model quality and IDE UX into runtime location, permissions, audit logs, and model choice.

- Builder impact: Teams in finance, government, healthcare, and other boundary-sensitive environments can evaluate a design that keeps LLM API keys away from individual workspaces.

- Watch: Coder Agents is still in beta, and permissive default workspace networking can give agents a large access surface.

Coder announced the beta for Coder Agents on May 6. At a glance, it may look like another AI coding-agent launch. The more interesting part is not "a smarter coding chatbot." Coder is asking a different operational question: once coding agents multiply inside a company, where do they run, who controls their permissions, and what logs and policies govern their work?

Over the last year, the AI coding market has been organized around model quality and developer experience. Claude Code emphasized terminal-native work. Codex emphasized parallel tasks and codebase exploration. Cursor emphasized the experience of editing inside an IDE. For an individual developer, that competition is intuitive. The useful question is which tool reads the repository well, fixes tests reliably, and feels natural to work with.

Enterprise adoption changes the order of concerns. If every developer installs a different agent, drops in a personal API key, and runs long tasks on a laptop or temporary cloud sandbox, the operating model gets tangled quickly. Security teams want to know which networks the agent reached. Platform teams want to control cost and model usage. Engineering managers want work products to end up in reviewable branches, pull requests, logs, and audit trails. Coder Agents targets that layer: the operating surface for coding agents.

What Coder Announced

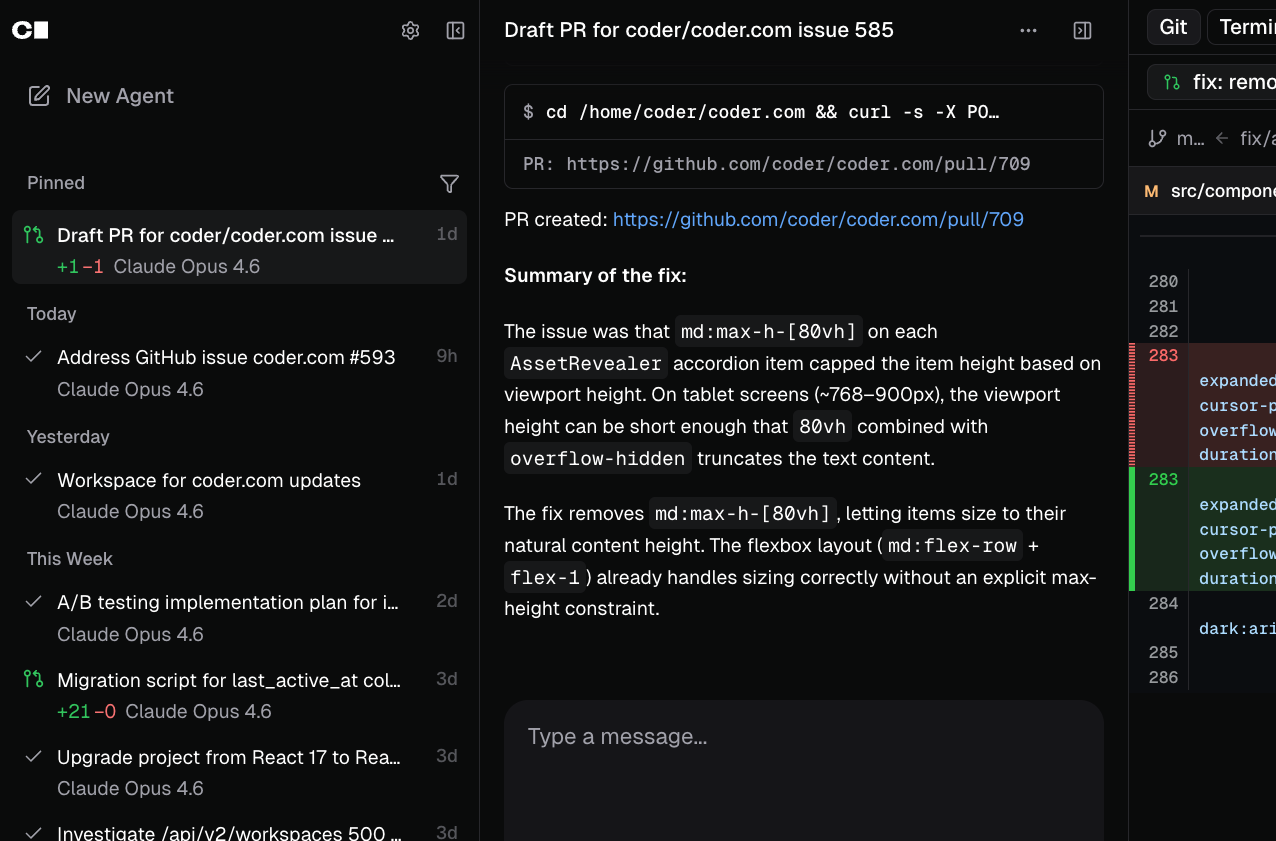

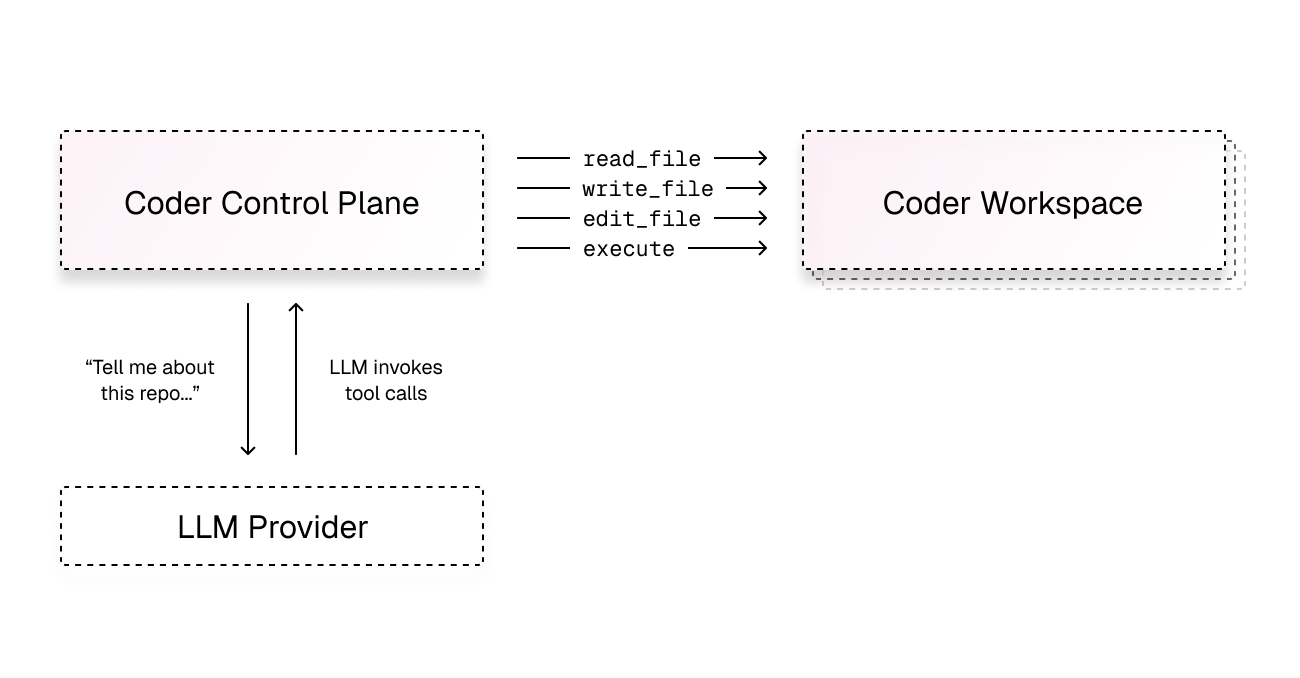

According to Coder's official announcement, Coder Agents is a beta feature for running AI development workflows on an existing self-hosted Coder deployment. Developers can hand work to an agent through the UI or API. The agent can then provision a Coder Workspace when it needs to read code, edit files, or run shell commands.

The important architectural distinction is where the agent loop runs. Coder's documentation says the Coder Agents loop runs in the Coder control plane. The workspace remains a standard development environment. It does not need to contain LLM API keys or a separate agent runtime. Model calls are handled by the control plane, while tool calls such as file reads, file writes, and command execution travel through the existing workspace connection path.
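To make the split concrete, here is a minimal Python sketch of the pattern described above, under stated assumptions. `ControlPlane`, `Workspace`, `ToolCall`, and `scripted_model` are hypothetical names invented for illustration. They are not Coder's API, and Coder's own agent is written in Go. The sketch only shows where things live: the credential and the loop sit in the control plane, and the workspace just executes tool calls.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToolCall:
    name: str   # hypothetical tool names: "read_file", "write_file"
    args: dict


@dataclass
class ModelReply:
    text: str
    tool_calls: list = field(default_factory=list)  # list of ToolCall


class Workspace:
    """Stand-in for a standard dev workspace: no LLM key, no agent runtime."""

    def __init__(self) -> None:
        self.files = {}

    def execute(self, call: ToolCall) -> str:
        # Only tool execution happens inside the workspace.
        if call.name == "read_file":
            return self.files.get(call.args["path"], "")
        if call.name == "write_file":
            self.files[call.args["path"]] = call.args["content"]
            return "ok"
        raise ValueError(f"unsupported tool: {call.name}")


# Hypothetical model-call signature: (api_key, task, transcript) -> ModelReply
ModelFn = Callable[[str, str, list], ModelReply]


class ControlPlane:
    """Owns the provider credential and drives the agent loop."""

    def __init__(self, api_key: str, model: ModelFn) -> None:
        self._api_key = api_key  # never forwarded to a workspace
        self._model = model

    def run(self, task: str, ws: Workspace, max_steps: int = 10) -> str:
        transcript = []
        for _ in range(max_steps):
            # The model call is made from the control plane, using its key.
            reply = self._model(self._api_key, task, transcript)
            if not reply.tool_calls:
                return reply.text  # model requested no tools: task finished
            for call in reply.tool_calls:
                # Each tool call is sent to the workspace over its existing connection.
                transcript.append(ws.execute(call))
        return "step limit reached"


def scripted_model(api_key: str, task: str, transcript: list) -> ModelReply:
    """Fake model for the demo: write one file, then stop."""
    if not transcript:
        return ModelReply("", [ToolCall("write_file", {"path": "README.md", "content": "hello"})])
    return ModelReply("done")
```

Running `ControlPlane("sk-placeholder", scripted_model).run("add a README", Workspace())` writes one file into the workspace and returns `"done"`. At no point is the key stored on the `Workspace` object.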

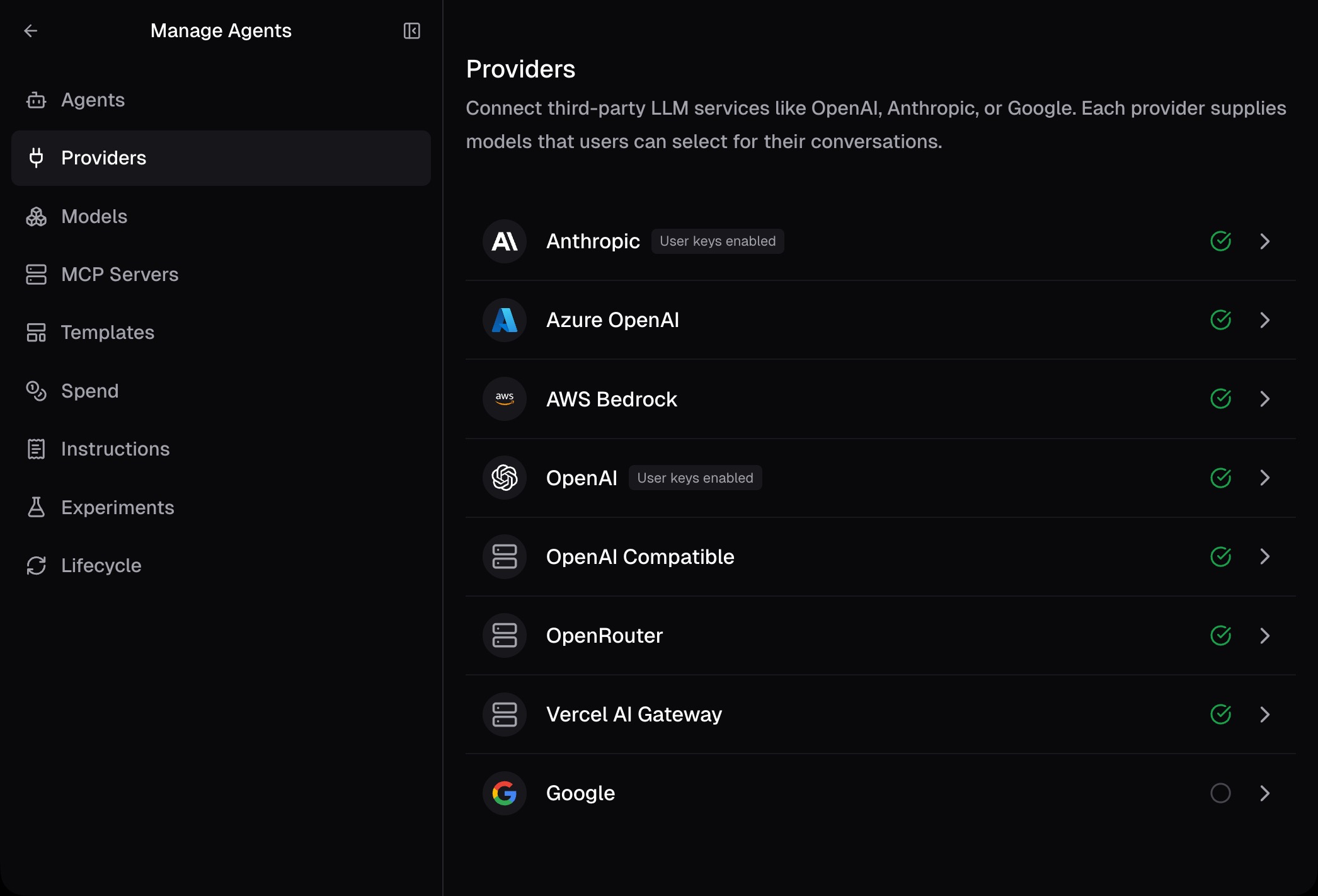

That makes the product subtly different from many coding agents that run inside a user's local machine, an IDE, or an individual cloud workspace. In those designs, the agent software, model-provider credentials, network access, and logs tend to be tied to that environment. Coder pulls the loop upward into a central control plane. Models can be swapped across Anthropic, OpenAI, Google, Azure OpenAI, AWS Bedrock, OpenAI-compatible endpoints, OpenRouter, Vercel AI Gateway, and other supported providers. Platform teams can manage models, prompts, MCP, skills, and usage policies centrally.
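The provider-swapping idea can be sketched as a registry that maps a model alias to a provider adapter, assuming a platform team controls that mapping and an allow policy. `ModelRegistry` and `EchoProvider` are hypothetical. A real adapter would wrap a provider SDK, and this is not how Coder's own configuration is expressed.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatProvider(Protocol):
    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoProvider:
    """Placeholder adapter; a real one would call Anthropic, OpenAI, Bedrock, etc."""
    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class ModelRegistry:
    """Platform team maps aliases to providers; agents only ever ask for an alias."""

    def __init__(self) -> None:
        self._providers = {}
        self._allowed = set()

    def register(self, alias: str, provider: ChatProvider, allowed: bool = True) -> None:
        # Re-registering an alias swaps the provider behind it.
        self._providers[alias] = provider
        if allowed:
            self._allowed.add(alias)
        else:
            self._allowed.discard(alias)

    def complete(self, alias: str, prompt: str) -> str:
        # Central policy check before any model call is made.
        if alias not in self._allowed:
            raise PermissionError(f"model alias {alias!r} is not enabled by policy")
        return self._providers[alias].complete(prompt)
```

Because agents request an alias such as `coding-default` rather than a vendor, re-pointing that alias to a different provider changes no agent code.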

Coder also says this is not a wrapper around Claude Code or Codex. The official documentation describes Coder Agents as a standalone agent written in Go, implementing familiar agent patterns such as sub-agent delegation, context compaction, file editing, and shell execution. At the same time, Coder continues to support running third-party agents such as Claude Code or Codex inside Coder Workspaces. The positioning is therefore less "one more agent" than a set of enterprise rails governing where agents run.

Why The Control Plane Is The Fight Now

Coding agents have moved beyond individual productivity demos. More teams are asking agents to handle issue triage, refactoring, test writing, migration tasks, and documentation updates. At first, "one Claude Code session on my laptop" may be enough. At team scale, the questions change. Who used which model? What code did the agent read? Which commands did it execute? Which network destinations did it reach?

Coder's December 2025 announcement about AI development infrastructure pointed in the same direction. At that time, the company described AI Gateway, Agent Firewall, and Coder Tasks as parts of a unified approach to model access, policy, and execution environments. In April 2026, Coder organized those concepts around AI Gateway and Agent Firewall. Coder Agents is now presented as the native orchestration layer that will eventually replace Coder Tasks.

This maps to the maturity curve of coding agents. Early attention went to whether models could edit code at all. The next phase emphasized IDE integration, terminal UX, and context management. The current phase is about the operational problems that appear when agents are assigned to many developers and many repositories at once. Coder Agents is a product for that third phase.

What It Means To Keep API Keys Out Of Workspaces

One of the most important details in Coder's docs is that the workspace does not need LLM API keys or agent software. In a traditional setup, the same environment that reads code and executes commands often also holds model-provider credentials. The development environment needs outbound access to the model provider, and the agent runtime has to be installed and maintained there.

Coder Agents separates those responsibilities. The control plane talks to the LLM provider. The workspace receives tool-execution requests. In that design, a workspace does not need direct access to Anthropic, OpenAI, or another LLM API. For sensitive environments, teams can apply stricter egress rules to agent-specific templates and open the network only toward needed destinations such as a Git provider or internal package registry.
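Here is a rough sketch of the egress decision for an agent-specific template, assuming the policy is a plain hostname allowlist. The hostnames are placeholders, and real enforcement would happen in the network layer (firewall rules or network policy), not in Python. No LLM API host appears on the list, because in this design the model calls originate from the control plane.

```python
from urllib.parse import urlparse

# Hypothetical egress policy for an agent template: only these hosts are reachable.
AGENT_EGRESS_ALLOWLIST = frozenset({
    "git.example.internal",       # placeholder Git provider
    "registry.example.internal",  # placeholder internal package registry
})


def egress_allowed(url: str, allowlist: frozenset = AGENT_EGRESS_ALLOWLIST) -> bool:
    """Exact hostname match against the allowlist; no wildcards, deny by default."""
    host = urlparse(url).hostname or ""
    return host in allowlist
```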

That is a practical distinction for security teams. The fact that an agent can see code still matters. But the design reduces several operational problems: API keys scattered across workspaces, agent software installed and updated in every development environment, and tool permissions depending on local user setup. Coder says every action is tied to the identity of the user who submitted the task, and that an agent cannot access templates or workspaces the user could not access.
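The identity rule can be expressed as a check in which the agent holds no grants of its own and inherits the submitting user's. `User`, `AgentTask`, and the two helper functions are hypothetical names for illustration, not Coder's permission model.

```python
from dataclasses import dataclass, field


@dataclass
class User:
    name: str
    workspaces: set = field(default_factory=set)  # workspace IDs the user may access
    templates: set = field(default_factory=set)   # template names the user may use


@dataclass
class AgentTask:
    task_id: str
    submitted_by: User  # every agent action is attributed to this identity


def agent_can_use_workspace(task: AgentTask, workspace_id: str) -> bool:
    # The agent has no separate grants; it is limited to the submitter's access.
    return workspace_id in task.submitted_by.workspaces


def agent_can_use_template(task: AgentTask, template: str) -> bool:
    return template in task.submitted_by.templates
```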

This does not erase all risk. If an agent workspace inherits the same broad network permissions as a default development workspace, the access surface is still large. Coder's documentation warns that agents can reach the internet if the default template does not restrict outbound networking. The point is not "put the loop in the control plane and everything is safe." The point is that the architecture gives an organization a central place to enforce policy.

Why Model Independence Matters

Another core part of the Coder Agents story is model agnosticism. The coding-agent market has often paired a model ecosystem with a product experience. Claude Code is naturally tied to Claude. Codex is optimized around OpenAI models and tool flows. Cursor and Copilot provide model choices inside their own product surfaces, but adoption is still shaped by each vendor's UX, policies, and runtime.

For companies, that coupling is useful and risky at the same time. One model may be best this month, but there is no guarantee it will remain best on cost, latency, security posture, and coding quality next month. Some teams may prefer Claude models, others may prefer OpenAI models, and regulated deployments may require Azure OpenAI or Bedrock. Some organizations also want to connect self-hosted or OpenAI-compatible endpoints.

Coder is trying to separate the agent operating model from model selection. Its announcement argues that intelligence comes from the model, but the way agents run, the way compute and workspaces are provisioned, and the way behavior is controlled should remain consistent across the organization. That shifts the unit of competition. Models can change while the operating layer stays in place.

| Comparison axis | Coder Agents | Traditional coding-agent rollout |
|---|---|---|
| Execution layer | Centered on a self-hosted control plane | Centered on local machines, IDEs, or individual SaaS sandboxes |
| Model choice | Supports multiple providers and self-hosted endpoints | Often tied to a tool's default model and billing stack |
| API-key location | Managed in the control plane | Can be spread across individual workspaces or user environments |
| Audit and policy | Central settings and user-identity-based controls | Dependent on each tool's logs and each user's configuration |
| Expansion path | API, CI/CD, GitHub Actions, and Slack integrations | Limited by each product's integration surface |

The Problems The Community Is Already Naming

Coder Agents is not happening in isolation. Around the same period, developer discussions increasingly focused on the infrastructure required to run file-system-using agents. A Hacker News Launch HN thread for Terminal Use captured the burden clearly: if you want to deploy agents that operate on files, you have to assemble sandboxing, streaming, persistent state, file upload and download, and versioning. Developers broadly understood the need, while also asking how multi-agent systems should preserve state order and semantic consistency when several agents read and write the same workspace.
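One common technique for the ordering question raised in that thread is optimistic concurrency: each write names the version it was based on, and stale writes are rejected. The sketch below is an illustration under that assumption, not how Terminal Use or Coder handles shared state. `SharedWorkspaceFiles` and `VersionConflict` are hypothetical names.

```python
import threading


class VersionConflict(Exception):
    """Raised when a write is based on a stale version of a file."""


class SharedWorkspaceFiles:
    """Per-file version counters shared by several agents."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._files = {}  # path -> (version, content)

    def read(self, path: str) -> tuple:
        with self._lock:
            return self._files.get(path, (0, ""))

    def write(self, path: str, content: str, expected_version: int) -> int:
        with self._lock:
            current, _ = self._files.get(path, (0, ""))
            if current != expected_version:
                raise VersionConflict(f"{path}: expected v{expected_version}, found v{current}")
            self._files[path] = (current + 1, content)
            return current + 1
```

An agent whose write is rejected must re-read and reconcile before writing again, which turns lost updates into visible conflicts. It does not by itself guarantee semantic consistency across files.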

Reddit discussions around self-hosted control layers point in the same direction. Coding agents are starting to behave like background workers, but the control layer remains fragmented. Once an agent reaches beyond code edits in an IDE and starts handling browsers, desktop apps, internal tools, and external services, a final diff is not enough evidence. Teams need to know which tool call caused which effect, where a human approved or rejected an action, and which files and systems the work touched.
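An audit record that answers those questions needs to link each tool call to its effects and to any human decision. Here is a minimal sketch, with a hypothetical `AuditEvent` schema invented for illustration rather than taken from any product:

```python
import json
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class AuditEvent:
    task_id: str
    user: str        # identity that submitted the task
    step: int        # order of the tool call within the task
    tool: str
    args: dict
    effects: list    # files or systems the call touched
    approval: Optional[str] = None  # e.g. "approved:bob", "rejected:bob", or None


class AuditLog:
    def __init__(self) -> None:
        self._events = []

    def record(self, event: AuditEvent) -> None:
        self._events.append(event)

    def who_touched(self, resource: str) -> list:
        # Trace an effect back to the tool call (and approval) that caused it.
        return [e for e in self._events if resource in e.effects]

    def to_jsonl(self) -> str:
        # One JSON object per line, suitable for shipping to a log pipeline.
        return "\n".join(json.dumps(asdict(e), sort_keys=True) for e in self._events)
```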

These reactions converge on the same point. When an agent is a personal tool, UX can look like the whole product. When an agent becomes an organizational worker, observability, permission boundaries, persistent state, and auditability become core product requirements.

Limits And Unanswered Questions

Will Coder Agents immediately become the standard for enterprise AI development? It is too early to say. Coder calls the release a beta and says APIs may change. Scalability, API flexibility, and overall performance still need to be proven through future releases and larger deployments.

Agent quality is another open question. A well-designed control plane does not automatically produce better code changes. Actual coding performance depends on the model, agent loop, context construction, and tool-use strategy. Whether Coder Agents as a standalone agent can compete with dedicated coding agents such as Claude Code or Codex will require more real-world evidence. Coder's continued support for running third-party agents inside Coder Workspaces reflects that reality.

Pricing also needs attention. Coder says introductory access through September includes Premium capabilities and no usage-based limits during the beta. What happens after that will matter for enterprise adoption. Coding agents consume not only tokens, but also workspace compute, long-running task time, and parallel execution capacity. Central control is valuable, but teams still need to see whether the control layer makes total cost predictable.

Finally, "self-hosted" does not mean the same thing to every organization. For regulated industries such as finance, healthcare, and government, and for disconnected-network environments, it can be a strong selling point. For a small startup or individual developer, operating Coder infrastructure may be more overhead than value. Coder Agents is closer to a platform-engineering product than a mass-market coding assistant.

The Next Scene For Coding Agents

Coder Agents is interesting not because it claims to be the most powerful coding agent of the week. It is interesting because it shows the stage widening. Coding-agent competition can no longer be explained only through model scores, IDE features, and terminal convenience. Infrastructure for running agents safely, repeatedly, with swappable models and traceable results is becoming its own market.

Developers should expect the question "which AI coding tool should we use?" to stop ending with "which model is best?" Teams will also ask where the agent runs, where API keys live, who can see the logs, how permissions map to user identity, and whether the workflow survives a provider change. Those questions are less exciting than a polished demo, but they determine whether agents can operate inside real software organizations.

Coder Agents is one answer to those questions, not the final answer. The direction is clear, though. The next phase of AI coding is moving beyond the race to make one agent smarter. It is becoming a race to decide how many agents should be placed inside an organization's development system, under which policies, with which evidence trail. In that phase, the control plane may matter more than the IDE, policy may matter more than the prompt, and auditable execution may matter more than the launch video.