Berget Code puts a 150 euro price on sovereign AI coding

Berget Code turns the AI coding-agent market into a data-residency, jurisdiction, and procurement question for European teams.

- What happened: Berget AI launched

Berget Codeon May 13, 2026, as an agentic coding service running on Swedish infrastructure.- The launch message centers on open-source harnesses such as OpenCode and Pi, open models, and code data processed inside Sweden.

- Why it matters: AI coding competition is moving beyond model quality and IDE polish into

data residency, jurisdiction, seat pricing, and procurement fit. - Builder impact: Coding agents operate near source code, prompts, logs, tests, and secrets, so infrastructure location is becoming a product requirement rather than a legal afterthought.

- Watch: The 150 euro monthly seat price and sovereign infrastructure story are clear, but teams still need evidence on model quality, catalog stability, and operational maturity.

Berget AI announced Berget Code on May 13, 2026. On the surface, it is another AI coding agent. A developer asks for work in a terminal, a model reads files, edits code, and runs tests. That loop is now familiar from Claude Code, OpenAI Codex, GitHub Copilot, Cursor, Cline, and OpenCode. The interesting part is not that Berget is promising a more convenient coding assistant. The sharper claim is that coding agents should be evaluated by the country, infrastructure, and legal regime through which a company's code passes.

The first sentence of Berget's launch post is direct: Berget Code is an agentic coding service hosted in Sweden, built on open-source software and open models. The company starts from the observation that Claude Code and Codex have changed how developers work, including in organizations with strict security requirements. Its answer is a service for developers who want to use AI while keeping code in Sweden.

That can sound like a small regional launch. It becomes more important when read against the current AI coding market. Over the past few months, coding-agent competition has shifted from features alone into operations. Codex added mobile approval loops. Copilot separated AI credits and premium plans. Anthropic exposed more of its Agent SDK billing structure. Teams are no longer asking only which model writes better code. They are asking who controls usage limits, who stores logs, who can train on code, which court can order data access, and which region goes down when a provider has an outage.

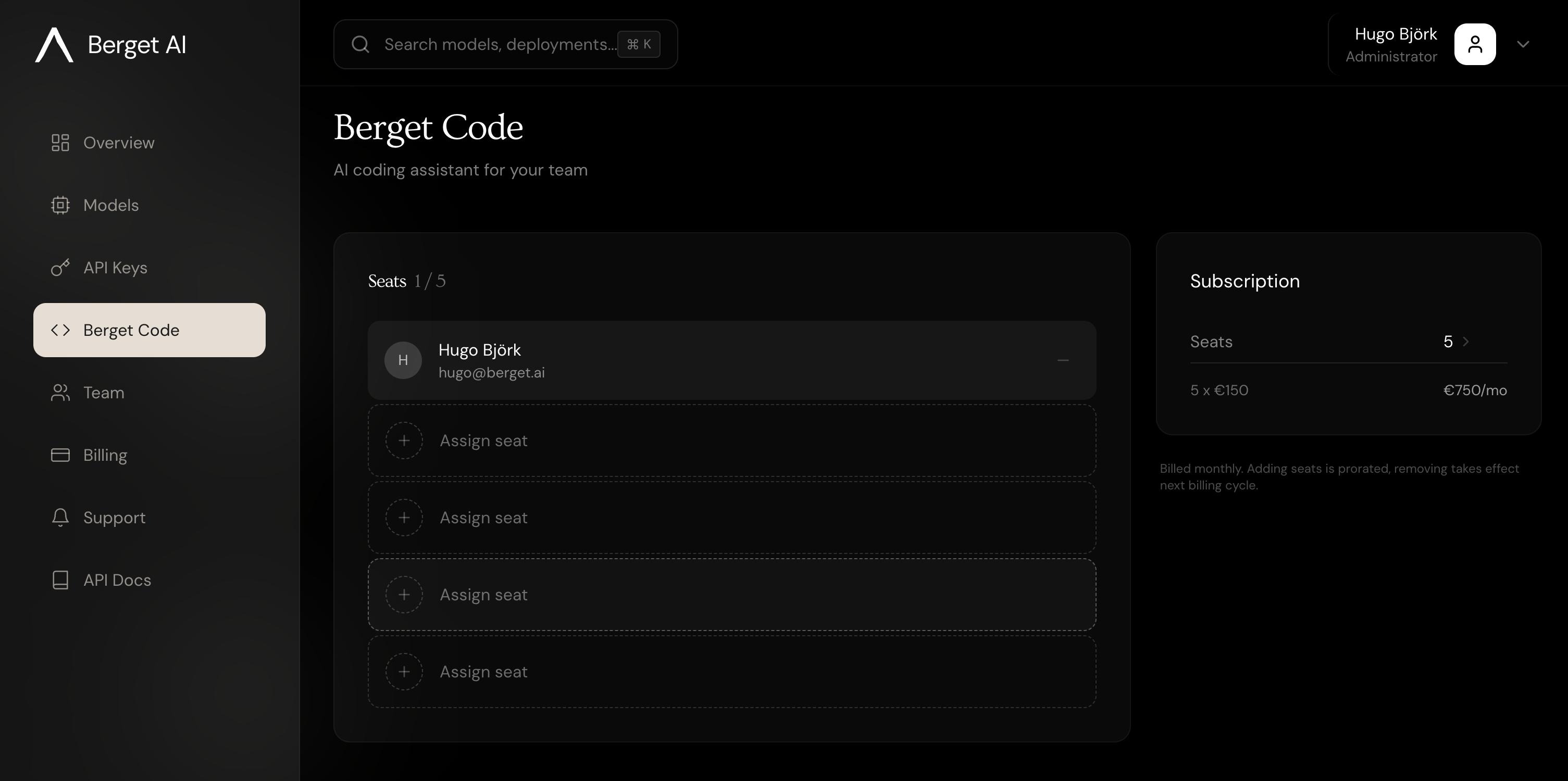

Berget Code sells a very specific answer to those questions. Its product page puts European teams in the foreground, says data stays in Europe, and says inference runs on Swedish infrastructure. The pricing is also more procurement-friendly than token-based usage. Berget lists a fixed price of 150 euros per developer per month. That is expensive for casual individual use. For public-sector, finance, healthcare, or regulated software teams, predictable spend and procurement approval may matter more than a cheap trial button.

What Berget Code actually ships

Based on the launch post, Berget Code is "agentic coding hosted in Sweden." The announcement highlights Kimi K2.6, describing it as one of the stronger open models in the market and pointing to a 256,000-token context window that can analyze a whole codebase in one context. It also names Google's Gemma 4 and Mistral Medium 3.5. The post says the product runs in OpenCode or Pi.

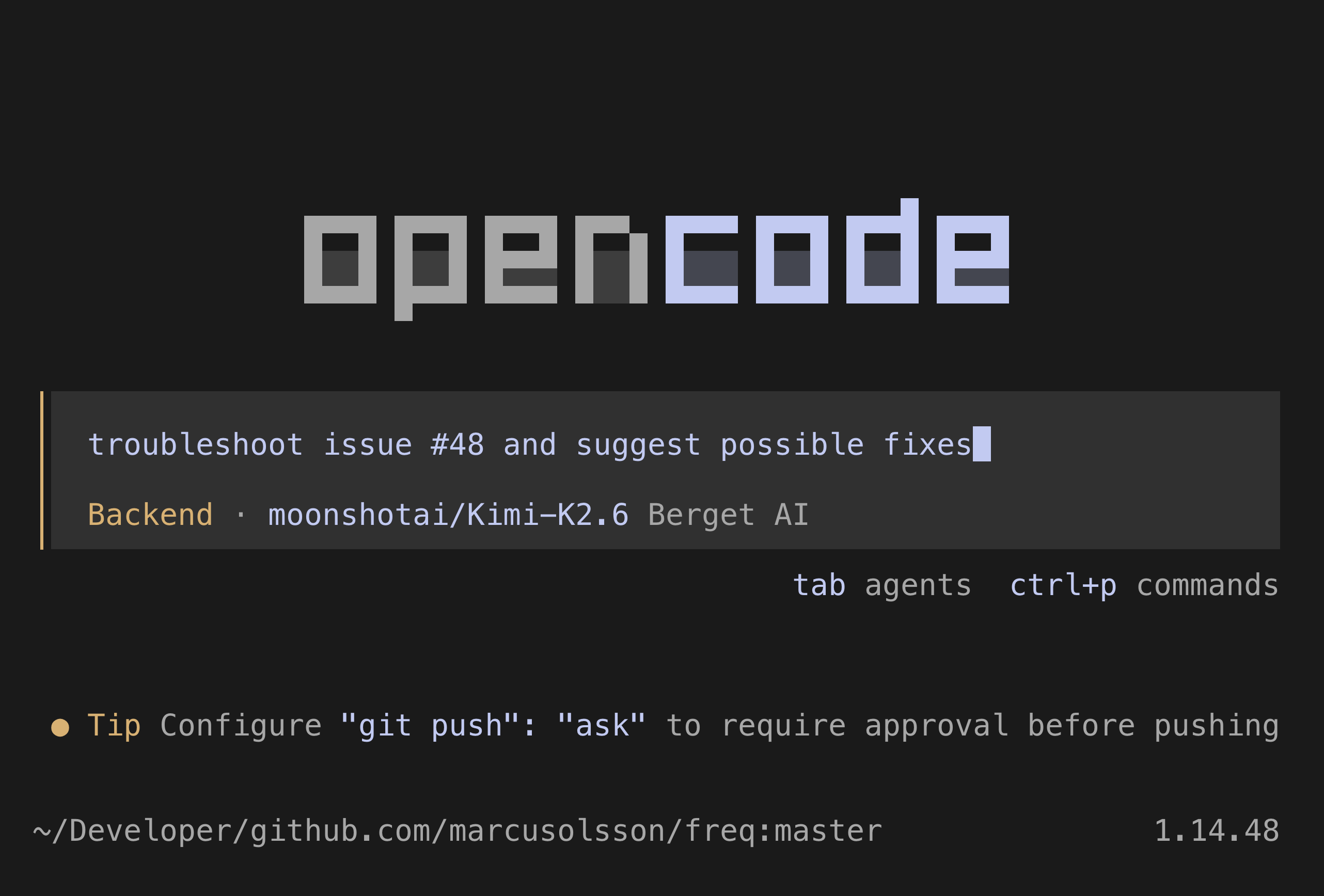

The product page is more operational. Berget says it officially supports OpenCode and Pi as open-source harnesses, and runs them on Berget's sovereign Swedish infrastructure. Developers who have tried OpenCode can expect a Claude Code-like terminal experience, while the surrounding product claims include LSP integration, multi-session parallel agents, and agent skills.

The documentation gives a more concrete setup path. Berget's OpenCode integration guide tells users to install OpenCode, run npx berget code init to configure the Berget provider, start opencode, and choose a model through /models. Interestingly, that documentation recommends GLM 4.7 for coding tasks, while the launch post emphasizes Kimi K2.6. That may mean the early model catalog is moving quickly, or that the launch, product, and docs messaging is not fully aligned yet.

That mismatch is not necessarily the core issue. For a sovereign AI infrastructure company, the thing being sold is less a single model name and more an operating location where models can be swapped. If Kimi is the strongest coding model this week, route to Kimi. If GLM or Mistral becomes better next month, move the workload. Berget's documentation also emphasizes an OpenAI-compatible API, a model catalog, and capability matrices. The commercial logic is not "we cloned one U.S. proprietary assistant." It is closer to "keep the coding-agent workflow, but route the models and infrastructure through Europe."

| Buying criterion | U.S.-centered coding agents | Berget Code's claim |

|---|---|---|

| Core value | Latest proprietary models, ecosystem depth, IDE integration | Swedish infrastructure, EU jurisdiction, open harnesses |

| Cost structure | Mixed subscriptions, credits, tokens, and usage limits | Fixed seat pricing at 150 euros per developer per month |

| Control point | Strong dependence on vendor policy and region settings | Berget-owned GPU nodes and Swedish data centers |

| Adoption risk | Data transfer, training policy, legal review, shifting limits | Model quality, product maturity, smaller ecosystem, price |

Why coding agents make data sovereignty unavoidable

AI coding tools handle more sensitive data than ordinary chatbots. Users do not send only abstract questions. Repository structure, private source code, internal API names, failing test logs, configuration near .env files, vulnerability descriptions, fixtures that may contain customer-like data, deployment scripts, and CI output can all flow into prompts and context. The more useful an agent becomes, the more authority it requests. It reads files, runs shell commands, opens browsers, creates pull requests, closes issues, and touches deployment pipelines.

That makes a coding agent both a productivity product and a data-processing pipeline. This is why adoption of Claude Code, Codex, or any similar system rarely ends with the statement that developers like it. Security teams ask which data leaves the company. Legal teams ask where processing happens and who the subprocessors are. Platform teams ask about audit logs and account deprovisioning. Finance teams ask about usage spikes and seat costs. As agents move closer to source code, those questions become sharper.

Berget aims directly at that point. The launch post says all processed data stays within Sweden's borders and that Berget AI does not train models on code sent to the service. The product page goes further. It says inference runs on GPU nodes owned by Berget AI in Swedish data centers, and frames that as a matter of physical infrastructure and EU law rather than a contractual flag or region setting.

For European companies, this claim has practical weight. GDPR compliance is not just a matter of being careful with personal data. Buyers need to know where data is processed, which national laws apply to the provider, how government access requests are handled, which subprocessors are involved, and whether customer data is used for model training. Even when a U.S. provider offers an EU region, questions can remain about jurisdiction and parent-company access. Berget presents itself as a Swedish-owned company outside U.S. CLOUD Act exposure.

That claim still needs diligence. Data sovereignty is easy to turn into a slogan. Real adoption should check the data processing agreement, security certifications, penetration testing, log-retention policy, incident response, backup locations, support access, telemetry, model supply chain, and who operates the GPU infrastructure. Open models do not automatically make a system safe. Teams still need to inspect model licenses, weight provenance, fine-tuning policy, eval methodology, and tool-call handling.

Even with those caveats, Berget Code asks the right question. Once coding agents become central to development workflows, "where does our code go" is not a side legal checkbox. It becomes a product-selection criterion.

Why the open-source harness matters

Another pillar of Berget Code is OpenCode and Pi. The important word is not model, but harness. In a coding agent, the harness is the working environment around the model. It handles file exploration, context selection, todos, command execution, diff generation, tool calls, session state, and subtask decomposition. The same model can produce very different results when the harness changes.

Claude Code is strong partly because of the model, but also because the terminal experience makes it natural to read files, plan, run tests, and propose diffs. Codex is not just a model API either. It combines a workspace, approvals, sandboxing, remote execution, and mobile confirmation loops. A sovereign coding agent therefore cannot win by hosting a good open model alone. It needs a harness developers can actually use.

Berget's choice of OpenCode and Pi is a way to avoid rebuilding the whole interface from scratch. Instead of making a proprietary IDE, it supports open-source harnesses that already fit into developer workflows. The OpenCode integration docs show a provider-configuration flow through the Berget CLI, then model selection inside OpenCode. To the developer, this feels less like learning a new assistant and more like changing the backend behind a familiar agent workflow.

That approach has strengths and limits. The strength is fast compatibility. If the open harness improves, Berget can benefit from that ecosystem. Customers may also feel less locked into one proprietary assistant. The limit is experience control. OpenCode or Pi quality, plugin behavior, error handling, UI maturity, and enterprise-management depth all shape the perceived Berget experience. Buyers will not ask only whether Berget's models are good. They will ask whether actual agent sessions feel as stable as Claude Code or Codex.

This is where Berget's dedicated GPU claim becomes important. The product page says Berget Code uses dedicated GPU hardware that is not shared with other Berget workloads, aiming for higher throughput, stronger session adherence, and better rate and token limits. Coding agents have longer sessions than ordinary chatbots. They read multiple files, incorporate test results, and revise code across turns. If a rate limit interrupts the loop or context collapses midway, developers quickly lose trust. A sovereign product that lags badly on performance will struggle to survive on legal advantages alone.

What the 150 euro price says about the market

The price became a point of debate quickly on Hacker News. One commenter said 150 euros per month felt expensive for trying the product. That reaction is natural. For an individual developer comparing Claude Code, ChatGPT, Copilot, and Cursor subscriptions, Berget Code is not a casual experiment. U.S.-based services often make the first step easier through free trials, low-cost personal plans, or bundled subscriptions.

But Berget does not appear to be aiming primarily at hobby developers. Its FAQ mentions the public sector, financial institutions, healthcare providers, and companies building applications for those verticals. For those teams, the calculation is different. A 150 euro monthly developer seat can be expensive, but it may be cheaper than failing legal review, banning AI coding tools entirely, or allowing only a restricted subset of repositories into U.S.-hosted assistants.

Fixed seat pricing fits the same procurement logic. Many AI coding products now combine credits, usage tiers, model classes, priority queues, and rate limits. Once developers start using agents heavily, costs become harder to predict. Procurement teams prefer a structure that can be stated as "40 developers at 150 euros per month." Berget Code's price is not just a technical packaging decision. It is shaped for the buying process.

The price only works if performance and reliability follow. If a team pays 150 euros per developer and the model is meaningfully weaker than major proprietary coding models, context handling is brittle, or OpenCode sessions fail too often, data sovereignty will not be enough. Coding agents spread through internal word of mouth. If developers conclude that the tool is slow, misses tests, or gets lost during large refactors, security-team approval will not create real adoption.

The real evaluation metric for Berget Code is therefore not a benchmark headline. It is how many real tasks the system can close in regulated repositories, how well it holds context in large monorepos, whether model switching preserves the workflow, how consistently it can read and fix CI failures, and whether audit logs and admin controls match enterprise requirements.

Why the community reaction is cautious

Berget Code appeared in a Hacker News discussion about moving a digital stack to Europe. That context matters. The product was received less as a pure AI coding-tool launch and more as part of a broader conversation about European digital infrastructure, dependence on U.S. big tech, and data sovereignty. Some commenters showed interest in European alternatives and mentioned developer infrastructure such as Codeberg.

The skepticism was just as visible. People questioned whether European services can truly avoid U.S. dependencies, whether U.S. infrastructure companies such as Cloudflare are still in the path, and how strong EU sovereignty claims are in day-to-day operations. Some saw European companies as still deeply dependent on U.S. technology. Others argued that sovereignty rhetoric becomes weak if it turns only into anti-U.S. sentiment rather than better products.

That caution is healthy. Sovereign AI can easily become symbolic politics. A product does not become good simply because it avoids a U.S. API. Developers need to use the tool every day. Companies need to survive outages and security incidents. Models need to fix real code. If European infrastructure is going to matter, product quality, documentation, support, performance, ecosystem depth, and transparency have to improve together.

Berget Code sits inside that tension. On one side, it sees a real market gap. Organizations exist that want AI coding agents but cannot easily send private source code to U.S. APIs. On the other side, it remains an open question whether that gap is a large enough paid market, and whether Berget can keep pace with proprietary coding-agent speed.

What development teams should check now

Whether Berget Code is immediately relevant outside Europe is a separate question. The launch is still useful because it clarifies how AI coding-tool evaluations are changing. First, teams should separate data policy by repository. Not every repository has the same sensitivity. Public code, internal tools, customer-data backends, security products, and regulated-industry projects deserve different agent policies.

Second, it is not enough to ask whether code is used for model training. Teams should also inspect prompt retention, log retention, support-engineer access, abuse monitoring, telemetry, crash reports, third-party subprocessors, and region failover. Coding agents naturally collect failure logs and source snippets. Even if that data is not used for training, it can still remain in operational logs.

Third, cost limits should be connected to engineering workflow. Credit-based tools can look cheap early, then expand quickly when agents begin handling tests, refactors, documentation, and CI fixes. Fixed seat pricing is predictable, but expensive if utilization is low. Teams should look beyond monthly cost per developer and measure cost per completed task, review-time reduction, CI-failure resolution time, and fewer security exceptions.

Fourth, harness dependency matters. Even if a model provider can be swapped, the user experience can still lock a team into one IDE or CLI. OpenCode, Cline, Continue, Cursor, Copilot, Codex, and Claude Code each manage context and tool calls differently. A mature adoption strategy will not push every workflow into one assistant. It will operate different tiers based on repository type and risk.

Fifth, agent permissions still need least privilege. Sovereign infrastructure does not prevent an agent from running a risky local command, committing the wrong file, or exposing a secret. Data location is necessary, not sufficient. Sandboxing, approval steps, secret masking, network-egress limits, branch policy, and human review are still required.

The next axis of AI coding competition

Berget Code may not become a mass-market replacement for Claude Code or Codex. Major U.S. products are likely ahead in model quality, ecosystem depth, user experience, plugins, mobile and remote loops, and GitHub integration. The importance of the launch is that it changes the axis of competition. AI coding tools are moving from developer productivity apps into software supply-chain infrastructure.

Once a tool becomes supply-chain infrastructure, the questions change. Which provider serves the model? Which data center runs inference? Who owns the GPU? Which jurisdiction applies? Does code enter training data? How long do logs remain? How are seats and API keys revoked? When an employee leaves, what happens to agent sessions and tokens? If a security incident happens, which contracts and laws apply?

Berget Code compresses that into one message: coding agents are part of data sovereignty. That sentence will not carry the same weight for every team. Fast-moving startups may still choose the strongest model and the smoothest developer experience. Individual open-source developers may prefer cheaper options than a 150 euro monthly seat. Public-sector, finance, healthcare, defense, industrial software, and sensitive SaaS teams will make a more complicated calculation.

For Berget Code to succeed in that calculation, it has to prove two things at once. It needs control that legal, security, and procurement teams can understand. It also needs enough speed and accuracy that developers want to use it daily. The first without the second becomes an expensive compliance checkbox. The second without the first forces Berget into a direct race with much larger U.S. platforms. The opportunity is in the middle.

Until recently, AI coding headlines mostly said that models can write code faster. In 2026, the sentence is becoming more complicated. Write code faster, but on whose infrastructure. Read more context, but where does that context remain. Let agents run more commands, but who controls the authority and cost. Berget Code is an early European attempt to push those questions into the product itself. Whether it wins or not, it shows that AI coding can no longer be explained by feature demos alone.