Claude Agent SDK credits move agent automation into metered budgets

Anthropic is separating Claude Agent SDK usage into monthly credits, making coding-agent automation a budgeted workflow rather than plain subscription usage.

- What happened: Anthropic is moving

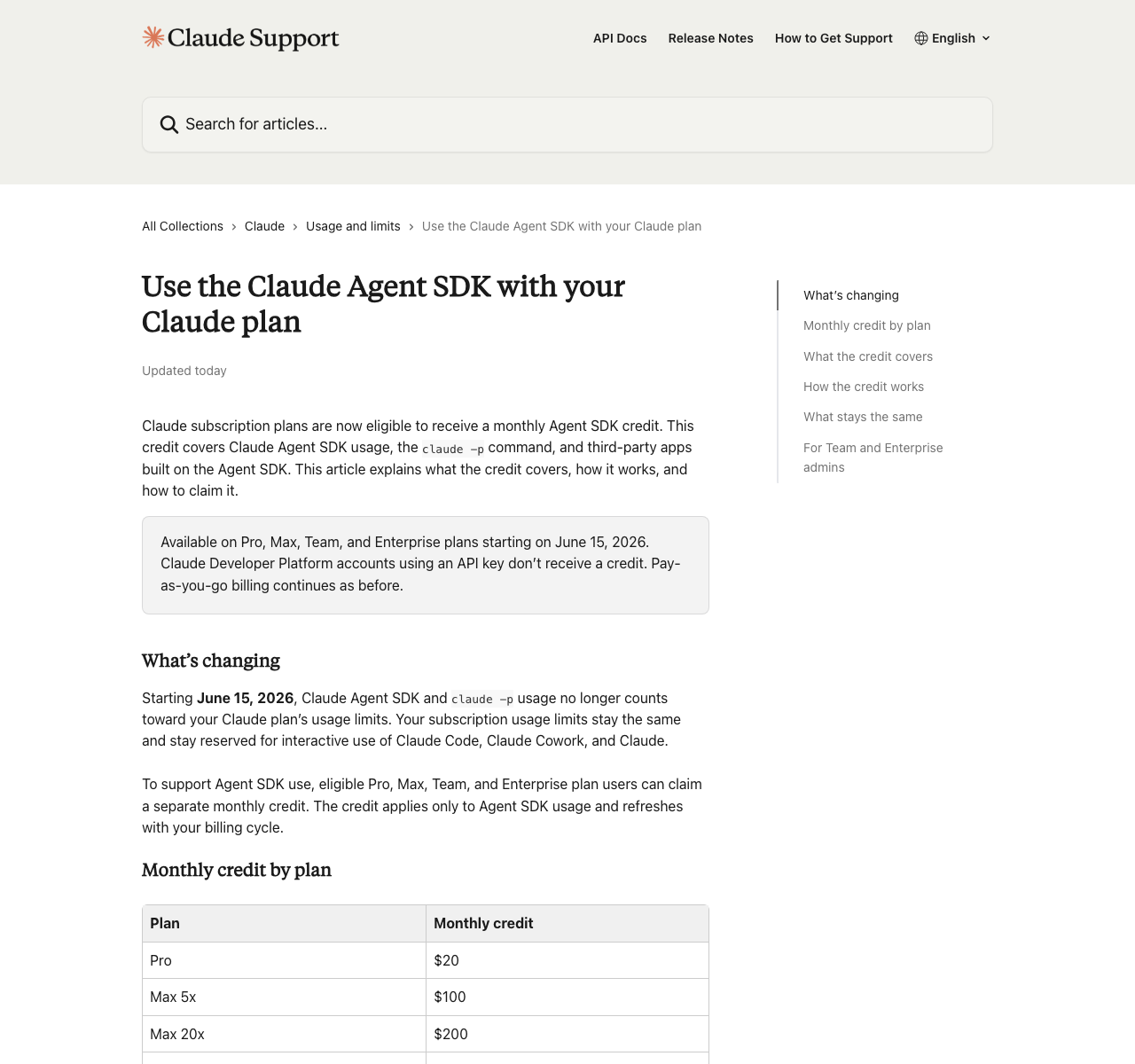

Claude Agent SDK,claude -p, GitHub Actions, and third-party Agent SDK apps into a separate monthly credit.- The change starts on June 15, 2026, with $20 for Pro, $100 for Max 5x, and $200 for Max 20x.

- Why it matters: Interactive Claude Code and programmatic agent automation are no longer treated as the same subscription workload.

- Builder impact: Long-running coding agents now need budget limits, retry policies, queues, token accounting, and stop conditions.

- Watch: The policy reopens a path for third-party apps, but it also turns subscription compute arbitrage into an explicit metered budget.

Anthropic has redrawn an important boundary inside Claude subscriptions. According to the company's Help Center, starting June 15, 2026, Claude Pro, Max, Team, and Enterprise users can receive a separate monthly credit for Claude Agent SDK usage. The credit covers Claude Agent SDK projects, claude -p, Claude Code GitHub Actions, and third-party apps built on the Agent SDK. Interactive Claude Code in a terminal or IDE, Claude conversations on web, desktop, and mobile, and Claude Cowork remain under the existing subscription usage limits.

At first glance, this can look like a simple benefit: subscribers get extra credits. For developers, the more important story is budget separation. The same Claude models are now being accounted for differently depending on whether a human is interacting with Claude Code or a script is repeatedly invoking an agent workflow. That is a meaningful signal for the coding-agent market. The competition is no longer only about model quality, IDE integration, MCP support, sandboxes, or multi-agent interfaces. It is also about which kinds of automation belong inside a subscription and which need API-like economics.

The official numbers are straightforward. Pro users get $20 per month. Max 5x users get $100, and Max 20x users get $200. Team Standard seats get $20, Team Premium seats get $100, usage-based Enterprise users get $20, and seat-based Enterprise Premium users get $200. Credits are per user and cannot be pooled across a team. Unused credits do not roll over. The monthly credit drains first, then accounts with extra usage enabled continue at standard API rates. If extra usage is disabled, Agent SDK requests stop until the next billing-cycle reset.
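The drain order described above can be sketched in a few lines of Python. This is a minimal model, not Anthropic's billing logic: the `Account` class, the `charge` function, and the rule that a request is blocked whenever the remaining credit cannot cover it are assumptions made for illustration. Only the plan amounts come from the figures quoted in this article.

```python
from dataclasses import dataclass

# Monthly Agent SDK credit per plan in USD, as quoted in the article.
PLAN_CREDIT_USD = {
    "pro": 20.0,
    "max_5x": 100.0,
    "max_20x": 200.0,
}


@dataclass
class Account:
    """Hypothetical per-user account state for one billing cycle."""
    credit_left: float
    extra_usage_enabled: bool
    extra_usage_billed: float = 0.0


def charge(account: Account, cost: float) -> bool:
    """Apply one request's cost. Return False if the request is blocked.

    Assumption: the monthly credit drains first; any remainder goes to
    extra usage if enabled, otherwise the request is refused.
    """
    if account.credit_left >= cost:
        account.credit_left -= cost
        return True
    if not account.extra_usage_enabled:
        return False
    # Credit is exhausted by this request; the overflow is billed separately.
    overflow = cost - account.credit_left
    account.credit_left = 0.0
    account.extra_usage_billed += overflow
    return True
```

In this sketch, an account with extra usage disabled simply starts returning `False` once the credit cannot cover a request, which is the "automation stops" case. An account with extra usage enabled keeps succeeding while `extra_usage_billed` grows.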

This is not just a price-table update. Over the past few months, the AI coding-tool ecosystem has been circling a hard question: how far can a Claude subscription be stretched into automation? The claude -p command makes Claude Code callable in non-interactive mode, which means users can wire it into shell scripts, CI jobs, background workers, and custom agent harnesses. Third-party frameworks such as OpenClaw became symbols of that pattern. A person pays a subscription, an agent runs for a long time, and the model provider carries the underlying compute cost.

Anthropic's new policy resolves that ambiguity with a middle path: allow the workflow, but meter it through a separate budget. The Help Center says the monthly Agent SDK credit applies to Python or TypeScript Agent SDK projects, claude -p, Claude Code GitHub Actions, and third-party apps that authenticate through the Agent SDK. It also draws a line around what the credit does not cover: interactive Claude Code, normal Claude conversations, Claude Cowork, and other features that draw from extra usage. Anthropic is not simply blocking OpenClaw-style usage. It is moving that usage out of general subscription limits and closer to an API cost model.

| Usage flow | Budget charged | Question for builders |
|---|---|---|
| Interactive Claude Code in terminal or IDE | Existing Claude plan usage limits | Can human-approved work keep the same workflow? |
| claude -p non-interactive calls | Agent SDK monthly credit | How small should scripts and long-running tasks be? |
| Claude Code GitHub Actions | Agent SDK monthly credit, then extra usage if enabled | Should CI repair and PR automation have monthly caps? |
| Third-party apps such as OpenClaw or Conductor | Agent SDK monthly credit | Does provider routing and cost predictability matter more than convenience? |

The first practical question for developers is simple: which mode are you actually using? If you talk with Claude Code in a terminal, inspect edits, and approve work as it proceeds, that remains part of the ordinary subscription experience. If the same terminal tool is invoked through claude -p, the workload moves to the Agent SDK credit. Once GitHub Actions asks Claude to repair failed tests, or a worker keeps pulling backlog issues, it becomes difficult to think about the system as normal chat usage.

That distinction also makes technical sense. In an interactive coding session, a human often decides when to stop, which files to read, which build command to run, and whether a failed attempt is worth continuing. Programmatic agents repeat more easily. They can reinject large contexts, rerun tests, discard a failed branch, start again, and keep trying through the night. When they succeed, they can save real time. When they fail, cost grows quickly. From a provider's perspective, those two modes are hard to price as one flat subscription workload.

The community reaction is split for exactly that reason. In Anthropic's official Reddit notice, some users accepted the change as a stability move because ordinary subscription limits were not changing. Others saw it as a downgrade for people who had built personal automation around claude -p. A high-scoring r/ClaudeCode thread framed the old shared limit as an opaque but heavily subsidized pool and criticized the move to explicit budgets such as $200. VentureBeat described the change as both a return path for third-party agent usage and the end of unlimited-looking subscription compute arbitrage.

This is less a question of who is morally right than of what product users thought they had bought. A user may feel that a $20 or $200 monthly Claude subscription buys access to the model wherever they use it: terminal, IDE, CI, OpenClaw, or a custom bot. Anthropic sees Claude, interactive Claude Code, the Developer Platform API, and Agent SDK automation as different product surfaces. Third-party harnesses can create token-consumption patterns that Anthropic does not directly optimize or control. From that view, separate budgeting is predictable, even if it is unpopular with heavy automation users.

For teams building coding-agent workflows, the default design posture changes. Many individual developers have been experimenting under the rough assumption that subscription limits are a large sandbox. Now each task has to be understood as a budgeted unit. A PR repair job, a flaky-test investigation, a large-repo refactor plan, and a long-running documentation-and-test cleanup all have different cost profiles. As agents become more capable, the work unit also becomes an accounting unit.

The most fragile workflow is the always-on personal coding assistant. Imagine a system that watches an issue queue, creates a branch, runs tests, patches failures, responds to PR comments, and keeps retrying until it has a reviewable change. When it works, the developer gains hours. When the stop conditions are loose, it can burn through model calls and tool executions. Under the new policy, credit exhaustion becomes part of product behavior. With extra usage enabled, the bill continues. With extra usage disabled, the automation stops.

That means developers need to design three boundaries more explicitly. The first is budget per task. An agent should not only receive "fix this issue." It should receive limits such as "try this at most N times" or "stop if the same build failure repeats." The second is model routing. Classification, log summarization, patch planning, and final review do not necessarily need the same model. The third is observability. If prompts, file reads, build retries, and tool calls are not recorded, the only thing left at the end of the month is surprise about where the credit went.
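One way to make the three boundaries concrete is a small per-task budget object. The sketch below is hypothetical throughout: `TaskBudget`, `MODEL_FOR_STEP`, the tier names "small" and "large", and the dollar figures are illustrative placeholders, not part of any Anthropic SDK. It shows an attempt cap and a spend cap (budget per task), a lookup from step to model tier (routing), and an event log (observability).

```python
from dataclasses import dataclass, field

# Hypothetical routing table: workflow step -> model tier.
# Tier names are placeholders, not real model identifiers.
MODEL_FOR_STEP = {
    "classify": "small",
    "summarize_logs": "small",
    "plan_patch": "large",
    "final_review": "large",
}


@dataclass
class TaskBudget:
    """Tracks attempts, spend, and a call log for a single agent task."""
    max_attempts: int
    max_cost_usd: float
    attempts: int = 0
    spent_usd: float = 0.0
    events: list = field(default_factory=list)

    def start_attempt(self) -> bool:
        """Return True if another attempt fits under both limits."""
        if self.attempts >= self.max_attempts:
            return False
        if self.spent_usd >= self.max_cost_usd:
            return False
        self.attempts += 1
        return True

    def record(self, step: str, cost_usd: float) -> None:
        """Log one model or tool call so spend can be traced afterwards."""
        self.spent_usd += cost_usd
        self.events.append({
            "attempt": self.attempts,
            "step": step,
            "model": MODEL_FOR_STEP.get(step, "large"),
            "cost_usd": cost_usd,
        })
```

A harness would call `start_attempt()` before each try and `record()` after each model or tool call. At the end of the month, `events` answers the question of where the credit went.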

The policy is also mixed news for OpenClaw-style frameworks. On the positive side, Anthropic explicitly describes third-party Agent SDK apps as covered by the credit. That is a better signal than a complete shutdown. OpenClaw's own provider documentation has treated Claude CLI reuse and claude -p as allowed after Anthropic clarified the earlier restrictions. The tradeoff is that one of the big attractions of these harnesses, a broad-feeling subscription usage pool, is now weaker. Their next competitive edge has to come from routing, cost caps, caching, sandboxing, failure recovery, and security controls.

Enterprise teams may find the message cleaner. If a company wants to use Claude Code GitHub Actions in CI or attach the Agent SDK to an internal developer platform, it now has to manage per-user credits and extra-usage policy. The per-user, non-pooled rule matters. A power user or automation account cannot automatically consume idle credit from the rest of the team. That helps cost predictability, but it may push serious shared automation toward Claude Developer Platform API keys and pay-as-you-go billing.

- Human-approved Claude Code session: existing Claude plan usage limits

- SDK, claude -p, GitHub Actions, third-party app: Agent SDK credit, then extra usage or stop

The competitive context is broader than Anthropic. OpenAI, GitHub, Cursor, Google, Alibaba, Zhipu, MiniMax, and others are all experimenting with how to price coding agents and long-running automation. Some products add premium request pools to subscriptions. Some stay close to API billing. Some charge credits per agent task. Anthropic's move lands on the side of saying a single flat subscription cannot indefinitely cover both interactive coding and programmatic agent loops.

That choice puts pressure on competitors too. Unlimited-looking subscriptions can win users quickly, especially developers who run agents heavily. Over time, though, GPU cost, inefficient automation, and abusive workloads become harder to absorb. The providers that survive this market will likely separate human-in-the-loop work, API-like automation, and production agent workloads more clearly.

The immediate action item for builders is not complicated. Split Claude usage into three buckets: interactive human sessions, non-interactive scripts or CI calls, and third-party harnesses acting on the user's behalf. If the second and third buckets are large, estimate how long they will last under the Agent SDK credit after June 15. A $20 credit may be enough for small experiments. A $200 credit is useful, but for a professional large-repo automation workflow it is better understood as a starting budget than a safety net.
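The estimate itself is simple arithmetic. The sketch below assumes you already have an average cost per automated run from your own logs; the function name and the example figures (10 runs per day at $0.50 each) are hypothetical and are not measurements of any real workload.

```python
def days_of_coverage(credit_usd: float, runs_per_day: float,
                     avg_cost_per_run_usd: float) -> float:
    """Return how many days a monthly credit lasts at a steady run rate."""
    daily_spend = runs_per_day * avg_cost_per_run_usd
    if daily_spend <= 0:
        raise ValueError("daily spend must be positive")
    return credit_usd / daily_spend


# Hypothetical workload: 10 automated runs per day at $0.50 per run.
for plan, credit in [("Pro", 20.0), ("Max 5x", 100.0), ("Max 20x", 200.0)]:
    days = days_of_coverage(credit, runs_per_day=10, avg_cost_per_run_usd=0.5)
    print(f"{plan}: about {days:.0f} days of coverage")
```

If the result is well under the length of a billing cycle, the workload either needs extra usage enabled, a smaller per-run cost, or a lower run rate.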

Another practical rule is to avoid letting expensive models loop on operational failures. When logs repeat, agents tend to read more context, form more hypotheses, rerun more tests, and make more edits. Some failures need a stronger model. Others are broken environment variables, bad mocks, external service limits, or flaky tests. In those cases, explicit rules such as "stop after the same error repeats twice," "rerun this test at most three times," or "ask before installing a new dependency" are cost controls, not bureaucracy.
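Rules like these are easy to encode outside the model. The following sketch is one possible interpretation, under stated assumptions: "repeats twice" is read as the same error message appearing twice in a row, `attempt` is a hypothetical callable that returns `None` on success or an error string on failure, and the function names are illustrative.

```python
from typing import Callable, Optional, Sequence


def should_stop(errors: Sequence[str], max_same_error: int = 2) -> bool:
    """Return True if the last max_same_error errors are identical."""
    if len(errors) < max_same_error:
        return False
    tail = errors[-max_same_error:]
    return all(e == tail[0] for e in tail)


def repair_loop(attempt: Callable[[], Optional[str]],
                max_attempts: int = 3,
                max_same_error: int = 2) -> str:
    """Run attempt() until success, a repeated error, or the attempt cap."""
    errors = []
    for _ in range(max_attempts):
        error = attempt()
        if error is None:
            return "success"
        errors.append(error)
        if should_stop(errors, max_same_error):
            # Same failure again: likely environmental, so stop spending.
            return "stopped: repeated error"
    return "stopped: attempt limit"
```

The design choice here is that the loop, not the agent, owns the stop decision. A repeated identical error is treated as a signal to hand the problem back to a human instead of asking a stronger model to keep trying.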

The larger point is that AI agents are becoming software with operating costs, not just demos. In the chatbot era, the pricing question was simpler: buy a subscription or pay for API tokens. In the agent era, the questions multiply. Who starts the task? Does a human intervene? Who stops it after failure? Which tools can it access? What happens when the credit runs out? Does it halt, or does it continue into metered usage? Anthropic's Agent SDK credit policy brings those questions into the product documentation.

I would not read this only as a retreat by the Claude ecosystem. It is better understood as a settlement phase that becomes unavoidable as agent usage matures. A near-unlimited subscription is excellent for early developer adoption. But once agents read files, run tests, control browsers, and execute repeatedly in CI, the idea of one person's usage becomes blurry. Work continues even when the person is not clicking, and a bad loop can spend money all night.

The next standard for AI coding tools will not be set only by model benchmarks. Strong tools will show which tasks are expensive, make stop conditions easy to configure, reduce repeated context through caching and summaries, mix cheap and strong models naturally, and leave readable logs when automation fails. Anthropic's policy makes the cost boundary more explicit. That is inconvenient, but it is also part of treating agents as production systems.

The conclusion is practical. Developers who use Claude Code interactively may not feel a major change immediately. Developers who have normalized claude -p, GitHub Actions, OpenClaw, Conductor, or custom Agent SDK workers should revisit their automation design before June 15. The next coding-agent race is becoming less about who can think the longest and more about who can finish the task with fewer failed loops and more predictable cost.