The AI agent control-plane war has started

Microsoft Agent 365 GA, ServiceNow's expanded AI Control Tower, and Cisco's planned Astrix acquisition landed in the same week. Agent competition is moving toward control and identity.

- What happened: Microsoft moved Agent 365 to general availability, while ServiceNow and Cisco strengthened agent control and security layers in the same week.

- Microsoft is extending visibility from local OpenClaw agents toward GitHub Copilot CLI, Claude Code, AWS Bedrock, and Google Cloud agent registries.

- Why it matters: Agent competition is shifting from model capability to registration, identity, permissions, logs, and blocking.

- Watch: Some control products are still in preview or Innovation Lab stages, so operational maturity and vendor lock-in need separate validation.

- Builder impact: Enterprise agent deployment now needs IAM, endpoint policy, audit traces, cost visibility, and decommissioning plans beside prompts and tool calls.

The most interesting AI news of the first week of May was not a new model launch. It was quieter and far more operational. Microsoft said on May 1, 2026 that Agent 365 is now generally available for commercial customers. ServiceNow announced an expanded AI Control Tower at Knowledge 2026 on May 5. Cisco, one day earlier, announced its intent to acquire Astrix Security, a non-human identity security company.

Read separately, each announcement looks like ordinary enterprise product news. Put together, the direction becomes clearer. The enterprise AI agent market is moving from "can we build agents?" to "how does an organization control agents?" As coding agents, workflow agents, SaaS agents, and local desktop agents multiply, the problem changes. Who created the agent? Which credential does it use? Which data can it reach? How can it be stopped when something goes wrong?

That shift is a direct signal for developers. Deploying an agent in an enterprise will no longer mean only designing good prompts and tool calls. Agents will have to be registered, assigned owners, limited by permissions, logged during execution, and blocked when they violate policy, in the same way organizations already manage people accounts, service accounts, devices, and SaaS applications. For agents to do real work inside a company, they have to be treated less like AI features and more like operational digital actors.

Agent 365 turns agents into managed assets

Microsoft's message is explicit. Agent 365 is a control plane for agents. The official blog says Agent 365 provides observability, governance, and security not only for agents built with Microsoft AI but also for ecosystem partner agents. General availability started on May 1, and several preview capabilities were announced at the same time.

The most striking piece is local-agent and shadow-AI discovery. Microsoft described preview capabilities that use Defender and Intune to find and manage local AI agents running on Windows devices. OpenClaw is the first cited example, and Microsoft says it plans to extend support to widely used agents such as GitHub Copilot CLI and Claude Code. That sentence matters. For an enterprise security team, Claude Code or Copilot CLI is no longer just a tool used by developers. It is an agent operating on an endpoint with meaningful privileges, so it becomes part of inventory and policy.

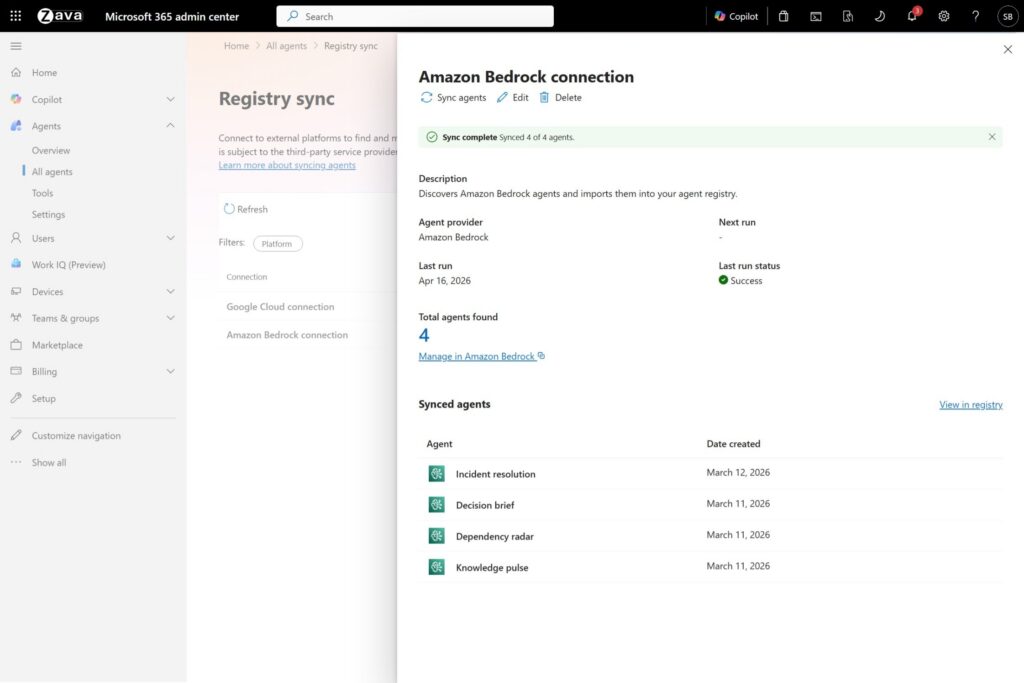

The second axis is multicloud registry sync. Microsoft announced public-preview Agent 365 registry sync through AWS Bedrock and Google Cloud connections. The official screenshot shows an Amazon Bedrock connection inside the Microsoft 365 admin center, with four Bedrock-side agents synchronized. The point is simple. Agent 365 is trying not to remain a Copilot management console inside Microsoft 365. It wants to pull in agent inventory created in competing clouds as well.

The third axis is execution environment. Windows 365 for Agents is in public preview and provides a new kind of Cloud PC where agents can work inside a policy-controlled environment. Desktop apps and legacy business systems still sit at the center of many enterprise workflows. If an AI system has to manipulate a browser or desktop because there is no clean API, security teams will ask where that activity runs. Microsoft is answering with Windows 365 and Intune, which are already part of its management stack.

Pricing also says something about the product shape. Agent 365 is included in Microsoft 365 E7 or available standalone at USD 15 per user per month. The interesting part is that Microsoft charges per person who manages, sponsors, or uses agents rather than per agent. The design treats agents as software assets, but it still connects responsibility back to humans inside the organization.

ServiceNow brings the control tower into workflow

ServiceNow's AI Control Tower expansion looks at the same problem from another angle. The company says AI Control Tower now spans five dimensions: discover, observe, govern, secure, and measure. The announcement bundles 30 new enterprise integrations, runtime observability based on the Traceloop acquisition, risk frameworks aligned with NIST and the EU AI Act, permission control based on Veza's access graph, and cost and ROI dashboards.

For developers and platform teams, the observe and secure parts are especially important. ServiceNow says Traceloop lets teams observe AI agent behavior at runtime and see how agents reason and where decisions are made. That is a bigger claim than basic log collection. It assumes that when an agent fails, the useful question is not only "why did the model answer badly?" but "which step, tool, and permission path created the problem?"

The secure pillar introduces Veza's access graph. ServiceNow says it can apply least privilege across AI systems, agents, and identities, and detect and interrupt agents that move outside their permissions. The Register covered the announcement through the lens of agent "kill switches." If agents can act faster than humans, blocking systems have to be faster than human approval queues too. The claim still needs practical validation: teams will need to see whether detection is accurate enough and whether false positives are manageable in production.

ServiceNow's strength is that it already sits inside work systems. Microsoft starts from identity, endpoints, and the productivity suite. ServiceNow starts from incident management, approvals, HR, ITSM, CMDB, and workflow transactions. Its announcement emphasizes 100 billion workflows and 7 trillion workflow transactions per year. In agent control, the important question is not only "can we see the agent?" but "which business process is this agent touching?" ServiceNow is competing from that position.

Its launch status is different from Microsoft's. AI Agent Advisor and Intelligent Approvals were announced as generally available in May 2026, but the AI Control Tower enhancements are entering the Innovation Lab in May, with general availability expected in August 2026. The direction is clear, but not every capability is shipping with the same maturity today.

Cisco sees the problem as non-human identity

Cisco's intent to acquire Astrix Security is news at a slightly different layer. Cisco describes Astrix as a company focused on securing credentials used by modern systems, including API keys, service accounts, and OAuth tokens. Cisco's thesis is that AI agents use those credentials and can misuse them.

That framing matters. Agent security does not end with input filtering or prompt-injection defense. Agents that perform real work access calendars, email, code repositories, deployment systems, CRM, payment APIs, and data warehouses. That access is ultimately represented by credentials and permissions. Since the actor is not a human account, the usual story around MFA or human approval does not fully explain the risk surface. Cisco names this as an agentic workforce and non-human identity problem.

Cisco summarizes Astrix's capabilities across discovery and governance, access and lifecycle management, threat detection and response, and secrets management. It also plans to integrate Astrix into Cisco Identity Intelligence, Secure Access, Duo Identity and Access Management, and Splunk after the acquisition closes. If Microsoft Agent 365 and ServiceNow AI Control Tower look like operational consoles, Cisco's approach is to bring the identities and credentials used by agents into the security stack.

The three announcements reveal the stack

| Question | Microsoft | ServiceNow | Cisco |
|---|---|---|---|
| What does it observe? | Microsoft, local, SaaS, and cloud agent inventory | AI assets, workflows, runtime behavior, and ROI | AI agents and non-human identities |
| Where does it start? | Microsoft 365 admin, Defender, Intune, and Entra | CMDB, workflows, AI platform, and operational data | Identity, zero trust, secure access, and Splunk |
| What does it promise? | Control over agent sprawl and shadow AI | One control tower for observability, risk, permissions, and cost | Defense against agent credential and permission abuse |

What the comparison shows is not just a set of product names. It shows layers. The agent platform is splitting into at least four layers.

The first is the model and reasoning layer, where OpenAI, Anthropic, Google, xAI, and open-source model makers compete. The second is the agent runtime and tool-execution layer, where Codex, Claude Code, OpenClaw, Bedrock AgentCore, Windows 365 for Agents, and Amazon WorkSpaces for AI agents fit. The third is identity and permission, where Entra, Duo, service accounts, OAuth tokens, access graphs, and secrets management become central. The fourth is the control plane and observability layer, where Agent 365 and AI Control Tower are trying to sit.

The early agent market focused on the first two layers. News centered on how smart agents were, how long they could work, and how many tools they could use. In real enterprise deployment, the third and fourth layers often block progress first. If a company cannot tell which agent accessed production data, it cannot audit the system. If agents call other agents or modify SaaS workflows without clear identity boundaries, accountability blurs. If cost and ROI are invisible, pilots can multiply while budgets stall.

Development teams get a new deployment checklist

For AI teams and platform engineers, this trend turns into concrete requirements. "We deployed an agent" will not be enough if it only means an endpoint exists. Teams will need to answer a harder set of questions.

Which registry contains the agent? Who owns it? Does it act through delegated access on behalf of a person, or does it have its own credential and permissions? Which data and tools can it reach? Where are execution logs and reasoning traces stored? Which system blocks it when a policy violation or suspected prompt injection appears? How are usage and cost measured? When an employee leaves, a project ends, a model changes, or an agent is decommissioned, how are permissions revoked?
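One way to make these questions concrete is to treat them as required fields on a registry record and report which ones are still unanswered. The sketch below is a minimal illustration under that assumption. `AgentRecord`, `CHECKLIST`, `open_questions`, and every field name are hypothetical and do not correspond to any vendor's schema.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AgentRecord:
    """Hypothetical registry entry; every field name here is illustrative."""
    name: str
    registry: Optional[str] = None          # which inventory lists the agent
    owner: Optional[str] = None             # accountable person or team
    credential_mode: Optional[str] = None   # "delegated" or "own"
    allowed_tools: List[str] = field(default_factory=list)  # reachable tools and data
    log_sink: Optional[str] = None          # where execution logs and traces go
    blocking_system: Optional[str] = None   # what stops the agent on a violation
    cost_meter: Optional[str] = None        # how usage and cost are measured
    revocation_plan: Optional[str] = None   # how access is removed at end of life


# Checklist items in the order the questions are asked.
CHECKLIST = [
    "registry", "owner", "credential_mode", "allowed_tools",
    "log_sink", "blocking_system", "cost_meter", "revocation_plan",
]


def open_questions(record: AgentRecord) -> List[str]:
    """Return the checklist items this record does not answer yet."""
    gaps = [name for name in CHECKLIST if not getattr(record, name)]
    # A credential mode that is set but unrecognized also counts as unanswered.
    if record.credential_mode and record.credential_mode not in ("delegated", "own"):
        gaps.append("credential_mode")
    return gaps
```

A team could run a check like `open_questions(record)` in a deployment pipeline and refuse to promote an agent while the returned list is non-empty. The exact gate is a design choice, not something the announcements prescribe.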

These questions used to sound like security-team or IT-operations language. They are now design inputs for agent developers. Tool-calling schemas have to consider scope and auditability. MCP servers have to make identity clear. When a company allows coding agents such as Claude Code or Codex internally, it has to explain not just the productivity gain, but which commands the local agent can run and which repositories, secrets, browsers, and internal documents it can access.
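As a rough illustration of a tool-calling schema that considers scope and auditability, the sketch below attaches a required scope to each tool and writes an audit entry for every attempt, whether or not it is allowed. The `Tool` type, the `call_tool` helper, and scope names such as `repo:read` are invented for this example and are not part of MCP or any specific agent framework.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set


@dataclass(frozen=True)
class Tool:
    """Hypothetical tool definition that declares the scope it needs."""
    name: str
    required_scope: str            # e.g. "repo:read"; scope names are made up
    run: Callable[[str], str]


def call_tool(tool: Tool, argument: str, agent_id: str,
              granted_scopes: Set[str], audit_log: List[Dict]) -> str:
    """Run the tool only if the agent holds its scope; log every attempt."""
    allowed = tool.required_scope in granted_scopes
    # The audit entry is written before the call, so denied attempts are visible too.
    audit_log.append({
        "agent": agent_id,
        "tool": tool.name,
        "scope": tool.required_scope,
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(
            f"{agent_id} lacks scope {tool.required_scope!r} for {tool.name}"
        )
    return tool.run(argument)
```

Declaring the scope on the tool rather than in the prompt keeps the permission decision outside the model, which is the property an auditor would likely care about.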

Shadow AI may surface first inside development organizations. Developers adopt new CLIs and editor plugins early. Before security teams finish selecting an official platform, local agents may already be reaching codebases, terminals, browsers, and internal docs. That is why Microsoft explicitly named OpenClaw, GitHub Copilot CLI, and Claude Code. These tools are personal productivity tools, but they are also part of the enterprise security surface.

Skepticism is still necessary

"Control plane" is not a magic phrase that solves every agent problem. Several hard questions remain.

The first is discovery accuracy. Agents can hide as browser extensions, CLIs, SaaS bots, workflow automations, and custom serverless functions. Teams have to test how much of their real agent surface a product's inventory actually captures.

The second is the realism of the permission model. Sometimes an agent should act through delegated access. Sometimes it needs its own credential. Microsoft discusses both types. In production, the boundary gets blurry. If a coding agent uses a developer's GitHub permissions to open a pull request, read CI secrets, and inspect deployment logs, is that the person's action or the agent's action? Whose name should appear in audit logs?
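One possible answer to the audit-log question is to record both names: the agent as the actor and the person as the party it acted for. The sketch below assumes that convention. The `audit_event` function and its field names are hypothetical and are not a format used by Microsoft, GitHub, or any product mentioned here.

```python
from datetime import datetime, timezone
from typing import Dict, Optional


def audit_event(action: str, resource: str, agent_id: str,
                on_behalf_of: Optional[str] = None) -> Dict:
    """Build an audit record that names the agent and, if any, the human behind it."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "resource": resource,
        # The agent is always the direct actor.
        "actor": {"type": "agent", "id": agent_id},
        # Present only when the agent borrows a person's permissions.
        "on_behalf_of": (
            {"type": "human", "id": on_behalf_of} if on_behalf_of else None
        ),
        "access_mode": "delegated" if on_behalf_of else "own_credential",
    }
```

With both fields present, a reviewer can filter by the human to see everything done under that person's permissions, or by the agent to see everything one agent did across many people.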

The third is vendor lock-in. Microsoft emphasizes AWS Bedrock and Google Cloud registry sync, but a control plane deeply tied to one productivity and security stack can make an organization follow that vendor's agent ontology. ServiceNow's workflow and CMDB context is a strength, but that strength also assumes a ServiceNow-centered operating model. Cisco's identity framing is tied to its existing security stack. Before standards mature, organizations may end up with overlapping control layers.

The fourth is kill-switch precision and accountability. Real-time agent blocking sounds attractive, but the system has to explain why it blocked an agent. In customer support, security response, or deployment automation, a wrong block can become an outage. A late block can become a permission-abuse or data-leak incident. Control products eventually need policy languages, risk scoring, human approval models, and rollback strategies, not just a stop button.
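To show what "more than a stop button" could look like, the sketch below returns one of three outcomes (allow, require human approval, or block) and always attaches a reason. The thresholds, the risk score input, and the names `Action`, `Decision`, and `decide` are assumptions made for illustration; real products will define their own policy languages and scoring.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    BLOCK = "block"


@dataclass(frozen=True)
class Decision:
    action: Action
    reason: str   # every decision carries an explanation for later review


def decide(risk_score: float, out_of_scope: bool,
           approval_threshold: float = 0.5,
           block_threshold: float = 0.8) -> Decision:
    """Map a risk score and a scope check to an explainable decision."""
    if out_of_scope:
        return Decision(Action.BLOCK, "requested access is outside the granted scope")
    if risk_score >= block_threshold:
        return Decision(
            Action.BLOCK,
            f"risk score {risk_score:.2f} is at or above block threshold {block_threshold:.2f}",
        )
    if risk_score >= approval_threshold:
        return Decision(
            Action.REQUIRE_APPROVAL,
            f"risk score {risk_score:.2f} is at or above approval threshold {approval_threshold:.2f}",
        )
    return Decision(Action.ALLOW, f"risk score {risk_score:.2f} is below all thresholds")
```

The middle outcome is the part a plain kill switch lacks: it gives teams a place to tune the trade-off between wrong blocks and late blocks instead of choosing only between running and stopping.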

The direction is still clear

The shared signal from these three announcements is strong. Enterprise AI agents are moving beyond the "chatbot with a stronger model" phase. An agent is becoming an execution actor with permissions inside an organization. If that is true, IAM, endpoint management, observability, CMDB, cost governance, and incident response all have to enter the agent world.

For developers, the good news is that this shift is not only about slowing agent adoption. Better control and identity systems make it possible to assign agents more dangerous and more valuable work. Today's local coding assistant can become tomorrow's pull-request reviewer, deployment investigator, security-patch generator, or customer-support automation. The missing piece is not only a longer prompt. It is an operating structure that clearly limits and tracks who can do what.

Microsoft Agent 365 general availability signals that this market is no longer only experimental. ServiceNow's AI Control Tower expansion is an attempt to observe and stop agents inside business processes. Cisco's intent to acquire Astrix shows that the core of agent security is shifting toward non-human identity. The starting points differ, but the conclusion is the same. The next bottleneck in the AI agent era is not intelligence. It is control.

So the news to watch over the next few months is not only the next agent demo. It is which vendor captures the de facto agent registry, how MCP and OAuth connect to enterprise IAM, how local coding agents enter security policy, and which metrics enterprises use to measure cost and risk. The more agents organizations create, the more valuable the technology for operating agents responsibly becomes.